NVIDIA 3090 24GB x2 for LLM Inference: Performance and Value

Are you ready to take your LLM adventures to the next level? Imagine running powerful language models like Llama 3 right on your desktop, generating text, translating languages, and writing creative content without sending anything to a cloud service. That is what the NVIDIA 3090 24GB x2 configuration makes possible, and in this guide we'll look closely at its performance and value for LLM inference.

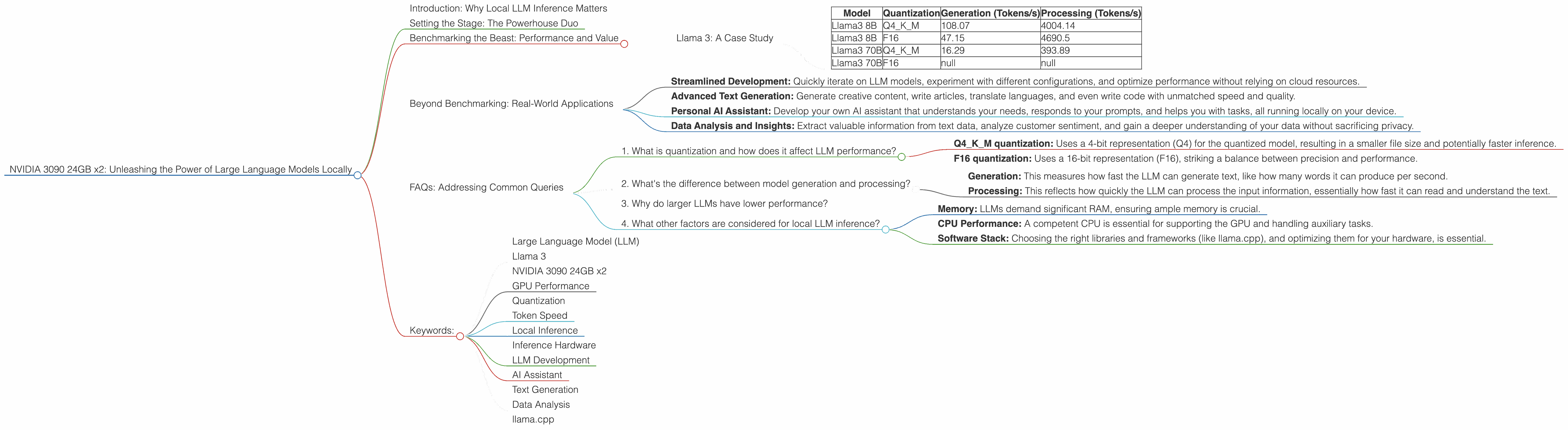

Introduction: Why Local LLM Inference Matters

The world is buzzing with the potential of Large Language Models (LLMs). These powerful AI systems can understand and generate human-like text, opening up a vast array of possibilities for everything from writing assistants to chatbot companions. But accessing these capabilities often requires relying on cloud-based services, adding latency and potentially raising privacy concerns. Local LLM inference, running these models directly on your hardware, offers a compelling alternative.

Imagine having a super-powered AI assistant at your fingertips, instantly responding to prompts, analyzing data, and generating creative outputs without any internet connection. This is the promise of local LLM inference, and with the right setup, it's not just a dream, but a reality.

Setting the Stage: The Powerhouse Duo

The NVIDIA GeForce RTX 3090 isn't just any graphics card. Its 24 GB of GDDR6X memory made it a favorite for demanding tasks like 3D rendering and gaming. Pair two of them and you get 48 GB of combined VRAM, which is the number that matters most for LLM inference, because the model weights have to fit in GPU memory to run at full speed. That's precisely what we're exploring today: the NVIDIA 3090 24GB x2 configuration for LLM inference.

Benchmarking the Beast: Performance and Value

We've crunched the numbers and analyzed the benchmarks to see what this powerhouse duo can deliver for LLM inference. Let's break down the performance based on different LLM models and configurations.

Llama 3: A Case Study

Llama 3, Meta's open-weight model family released in 8B and 70B parameter sizes, has been making waves in the LLM world. We'll use it to showcase what the NVIDIA 3090 24GB x2 configuration can do.

Benchmarking Factors:

Quantization: This works like compressing the model, storing its weights with fewer bits so it takes less memory and usually generates text faster. We'll compare Q4_K_M, a 4-bit llama.cpp format (more compact, slightly less accurate), with F16, 16-bit floating point (higher precision, but larger and slower at generating text).

Generation vs. Processing: These two metrics capture different phases of inference. "Generation" is the speed at which the model produces new output tokens, one at a time, while "Processing" is the speed at which it reads the input prompt, which can be handled in large parallel batches.
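The two rates combine into a rough end-to-end estimate: the prompt is processed once, then each output token is generated in turn. Here is a minimal sketch using the Llama 3 8B Q4_K_M figures from the benchmark table, assuming both rates stay constant regardless of context length (in practice they typically drop somewhat as the context grows). The function name `estimate_latency` is our own, not part of any library.

```python
# Rates measured for Llama 3 8B Q4_K_M on 2x RTX 3090 (from the benchmark table).
PROMPT_TOKENS_PER_S = 4004.14  # "Processing" column
GEN_TOKENS_PER_S = 108.07      # "Generation" column


def estimate_latency(prompt_tokens: int, output_tokens: int,
                     pp_rate: float = PROMPT_TOKENS_PER_S,
                     tg_rate: float = GEN_TOKENS_PER_S) -> float:
    """Rough wall-clock seconds: prompt processing time plus generation time.

    Assumes constant rates and ignores tokenization and sampling overhead.
    """
    prefill_s = prompt_tokens / pp_rate   # whole prompt, processed in batches
    decode_s = output_tokens / tg_rate    # one token after another
    return prefill_s + decode_s


if __name__ == "__main__":
    t = estimate_latency(prompt_tokens=2000, output_tokens=500)
    print(f"~{t:.2f} s for a 2,000-token prompt and a 500-token reply")
```

For a 2,000-token prompt and a 500-token reply this comes to roughly 5.1 seconds, of which only about half a second is prompt processing, so generation speed dominates interactive use.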

Llama 3 Benchmark Results

| Model | Quantization | Generation (tokens/s) | Processing (tokens/s) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 108.07 | 4004.14 |
| Llama 3 8B | F16 | 47.15 | 4690.5 |
| Llama 3 70B | Q4_K_M | 16.29 | 393.89 |
| Llama 3 70B | F16 | n/a | n/a |

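(Note: Data for Llama 3 70B at F16 was not available at the time of this analysis. This is unsurprising: at 16 bits per weight, 70 billion parameters need about 140 GB for the weights alone, far more than the 48 GB of combined VRAM, so this configuration cannot run that model entirely on the GPUs.)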
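VRAM capacity decides which rows of the table are possible at all. A rough weights-only estimate makes this concrete. It assumes an average of 4.8 bits per weight for Q4_K_M (an approximation; the exact figure varies by model) and nominal parameter counts of 8 and 70 billion, and it ignores the KV cache and runtime buffers.

```python
# Approximate storage cost per weight. The Q4_K_M value is an assumed average,
# since that format keeps some tensors at higher precision.
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}
TOTAL_VRAM_GB = 2 * 24  # two RTX 3090 cards


def weights_gb(params_billion: float, quant: str) -> float:
    """Size of the weights alone in GB (1e9 bytes); excludes KV cache and buffers."""
    # params * 1e9 * bits / 8 bytes, divided by 1e9 bytes per GB
    return params_billion * BITS_PER_WEIGHT[quant] / 8


if __name__ == "__main__":
    for params in (8, 70):
        for quant in ("Q4_K_M", "F16"):
            size = weights_gb(params, quant)
            verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
            print(f"Llama 3 {params}B {quant:>6}: ~{size:5.1f} GB -> {verdict} in {TOTAL_VRAM_GB} GB")
```

Only the 70B F16 case exceeds the budget (about 140 GB). The 70B Q4_K_M weights (about 42 GB) fit, but with only a few gigabytes left for the KV cache and other buffers.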

Decoding the Results:

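Llama 3 8B: With Q4_K_M quantization, the model generates text at 108.07 tokens per second and processes input at 4004.14 tokens per second. At F16, generation drops to 47.15 tokens/s, less than half the Q4_K_M rate, because every generated token has to stream roughly three times as many bytes of weights out of VRAM. Prompt processing, which is limited more by compute than by memory, is actually about 17% faster at F16 (4690.5 tokens/s), likely because the GPU no longer has to dequantize the weights.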

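Llama 3 70B: The larger model is slower, as expected. Q4_K_M yields a generation speed of 16.29 tokens/s and a processing speed of 393.89 tokens/s. Its 4-bit weights take up more than 40 GB, which would not fit on a single 24 GB card, so this is where the second GPU matters most.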
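Why does generation track model size so closely? Producing one token means reading essentially every weight from VRAM once, so memory bandwidth sets a ceiling. The sketch below assumes the RTX 3090's spec-sheet bandwidth of 936 GB/s, approximate GGUF weight sizes of 4.9, 16.1, and 42.5 GB (our estimates, not measured here), and llama.cpp-style layer splitting, in which the two cards take turns on each token so the effective bandwidth for a single request is roughly that of one card.

```python
PEAK_BANDWIDTH_GB_S = 936.0  # RTX 3090 spec-sheet memory bandwidth

# (model, quant) -> (approx. weight size in GB [assumed], measured generation tokens/s [table])
RESULTS = {
    ("Llama 3 8B", "Q4_K_M"): (4.9, 108.07),
    ("Llama 3 8B", "F16"): (16.1, 47.15),
    ("Llama 3 70B", "Q4_K_M"): (42.5, 16.29),
}


def decode_ceiling(weight_gb: float, bandwidth_gb_s: float = PEAK_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if all weights are read from VRAM once per token.

    Assumes a layer split: the GPUs work one after the other on each token,
    so the effective bandwidth is about that of a single card.
    """
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    for (model, quant), (size_gb, measured) in RESULTS.items():
        ceiling = decode_ceiling(size_gb)
        print(f"{model} {quant:>6}: ceiling ~{ceiling:6.1f} tok/s, "
              f"measured {measured:6.2f} tok/s ({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings are roughly 191, 58, and 22 tokens/s, and the measured rates land at about 57% to 81% of them, which is consistent with generation being bandwidth-bound rather than compute-bound.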

Interpreting the Value:

These numbers are impressive, especially considering that GPUs with less memory cannot hold these models at all without offloading layers to the much slower CPU. With 48 GB of VRAM, this configuration can run models up to the 70B class locally at 4-bit quantization, though not at full F16 precision.

Think of it this way: at roughly 4,000 tokens per second, the 8B model reads a 2,000-token document in about half a second, like having a dedicated team of super-fast readers working on your LLM tasks!

Beyond Benchmarking: Real-World Applications

The sheer performance of the NVIDIA 3090 24GB x2 opens up a wide range of real-world applications. Imagine:

Streamlined Development: Quickly iterate on LLM models, experiment with different configurations, and optimize performance without relying on cloud resources.

Advanced Text Generation: Generate creative content, write articles, translate languages, and even write code at interactive speeds, with output quality determined by the model you choose.

Personal AI Assistant: Develop your own AI assistant that understands your needs, responds to your prompts, and helps you with tasks, all running locally on your device.

Data Analysis and Insights: Extract valuable information from text data, analyze customer sentiment, and gain a deeper understanding of your data without sacrificing privacy.

FAQs: Addressing Common Queries

1. What is quantization and how does it affect LLM performance?

Quantization is a process that compresses the weight parameters of an LLM, making it smaller and potentially faster to run on hardware. Think of it like reducing the number of colors in a picture; it loses some detail but becomes more manageable.

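Q4_K_M quantization: A llama.cpp "k-quant" format that stores most weights in 4 bits while keeping some tensors at higher precision, for an average of roughly 4.5 to 5 bits per weight. It cuts the model to under a third of its F16 size and usually speeds up generation, at a small cost in accuracy.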

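F16: Stores weights as 16-bit floating-point numbers. Strictly speaking, this is not quantization but the near-original precision baseline (Llama 3 weights are distributed in 16-bit BF16), giving the best accuracy at a cost of about 2 bytes per parameter.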
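To make the idea concrete, here is a toy block quantizer in pure Python. It illustrates the general technique and is closer in spirit to llama.cpp's simpler Q4_0 format (blocks of 32 weights sharing one 16-bit scale) than to Q4_K_M, which uses a more elaborate scheme. The symmetric -7..7 range and the function names are illustrative choices of ours.

```python
import random

BLOCK_SIZE = 32  # weights per block; each block shares one scale
QMAX = 7         # symmetric 4-bit range used here: integers -7..7


def quantize_block(weights):
    """Map each weight to round(w / scale), with the largest |w| mapping to QMAX."""
    amax = max(abs(w) for w in weights)
    scale = amax / QMAX if amax > 0 else 1.0
    codes = [max(-QMAX, min(QMAX, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate weights; rounding error is at most scale / 2 per weight."""
    return [scale * c for c in codes]


# Storage per block: 32 four-bit codes plus one 16-bit scale.
bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE  # 4.5 bits vs 16 for F16

if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(w - r) for w, r in zip(weights, restored))
    print(f"{bits_per_weight} bits/weight, scale={scale:.5f}, max error={max_err:.5f}")
```

Like reducing the colors in a picture, each weight snaps to one of 15 levels per block: the file shrinks to about 4.5 bits per weight, and each restored weight is off by at most half a step.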

2. What's the difference between model generation and processing?

Generation: This measures how fast the LLM produces new output, in tokens per second. A token is a chunk of text, often a whole word or part of one.

Processing: This measures how quickly the LLM reads the input prompt. Because all prompt tokens are available up front, they can be processed in parallel, which is why this figure is far higher than the generation rate.

3. Why do larger LLMs have lower performance?

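Larger LLMs have more parameters, so every token requires more computation and, above all, more data read from GPU memory. In the results above, the 70B model at Q4_K_M generates text about 6.6 times slower than the 8B model (16.29 vs. 108.07 tokens/s). Larger models handle complex tasks better, but they need more VRAM and more powerful hardware.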

4. What other factors are considered for local LLM inference?

Beyond GPU power, local LLM inference depends on other factors:

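- Memory: The model weights and the KV cache (the stored attention state for the context) must fit in GPU VRAM for full speed. System RAM should also be large enough to load the model files and hold any layers offloaded to the CPU.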

- CPU Performance: A competent CPU is essential for supporting the GPU and handling auxiliary tasks.

- Software Stack: Choosing the right libraries and frameworks (like llama.cpp), and optimizing them for your hardware, is essential.
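VRAM has to hold more than the weights: the KV cache stores a key and a value vector for every layer and every token in the context. A rough sketch of its size, assuming an F16 cache and Llama 3's published architecture (32 layers for 8B, 80 for 70B, each with 8 key/value heads of dimension 128 via grouped-query attention) at the model's 8,192-token context length:

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   n_ctx: int, bytes_per_elem: int = 2) -> int:
    """Keys + values for every layer and cached token (F16 = 2 bytes per element)."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * n_ctx


# Llama 3 architecture figures (grouped-query attention with 8 KV heads).
LLAMA3 = {
    "8B": dict(n_layers=32, n_kv_heads=8, head_dim=128),
    "70B": dict(n_layers=80, n_kv_heads=8, head_dim=128),
}

if __name__ == "__main__":
    for name, cfg in LLAMA3.items():
        gib = kv_cache_bytes(n_ctx=8192, **cfg) / 2**30
        print(f"Llama 3 {name}: {gib:.2f} GiB of KV cache at 8,192 tokens")
```

That works out to 1 GiB for the 8B model and 2.5 GiB for the 70B model at full context, all of which must fit alongside the weights in the 48 GB budget.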
