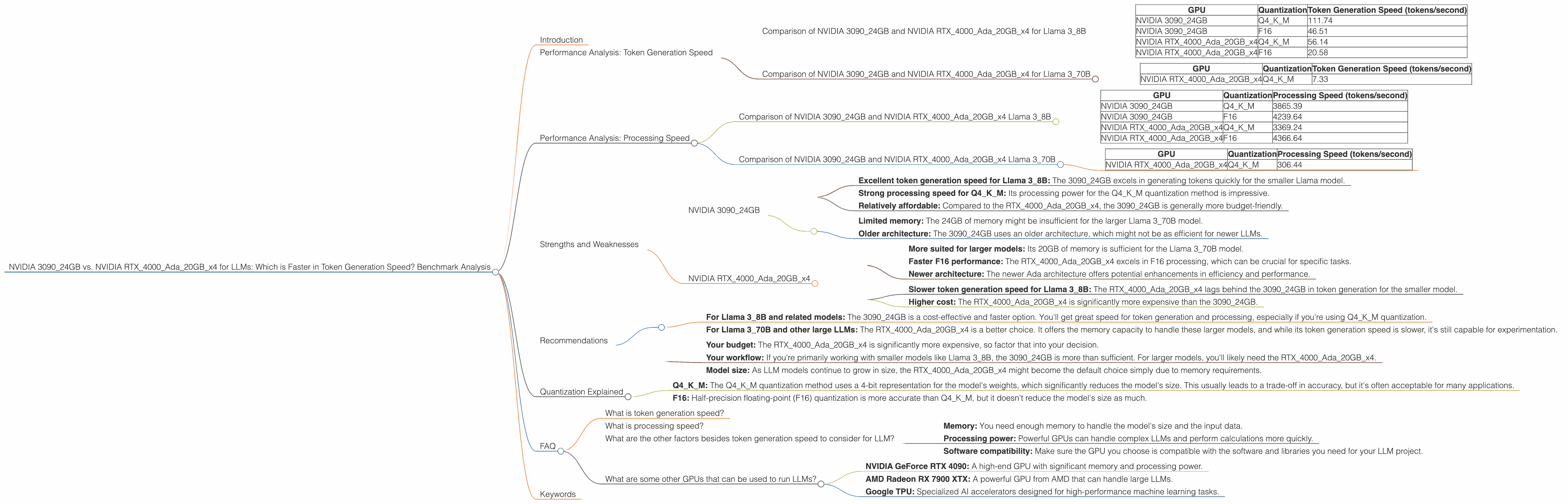

NVIDIA 3090 24GB vs. NVIDIA RTX 4000 Ada 20GB x4 for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Welcome, fellow AI enthusiasts! We're diving into the exciting world of large language models (LLMs) and the hardware that fuels them. In this deep dive, we'll compare two GPU setups, a single NVIDIA 3090 24GB and four NVIDIA RTX 4000 Ada 20GB cards (RTX 4000 Ada 20GB x4), to see which one reigns supreme in token generation speed for LLMs.

Imagine this: You're building a powerful chatbot or creating a next-generation AI assistant. Speed is crucial. You want your LLM to generate responses quickly and efficiently, avoiding those frustrating pauses and delays that can kill the user experience. This is where the right hardware comes into play.

We'll be using real-world benchmarks to get a glimpse into the token generation prowess of each setup. To make this more digestible for everyone, we'll focus on the Llama 3 family of LLMs (Llama 3 8B and Llama 3 70B), popular open models that are widely used for experimentation and development.

Performance Analysis: Token Generation Speed

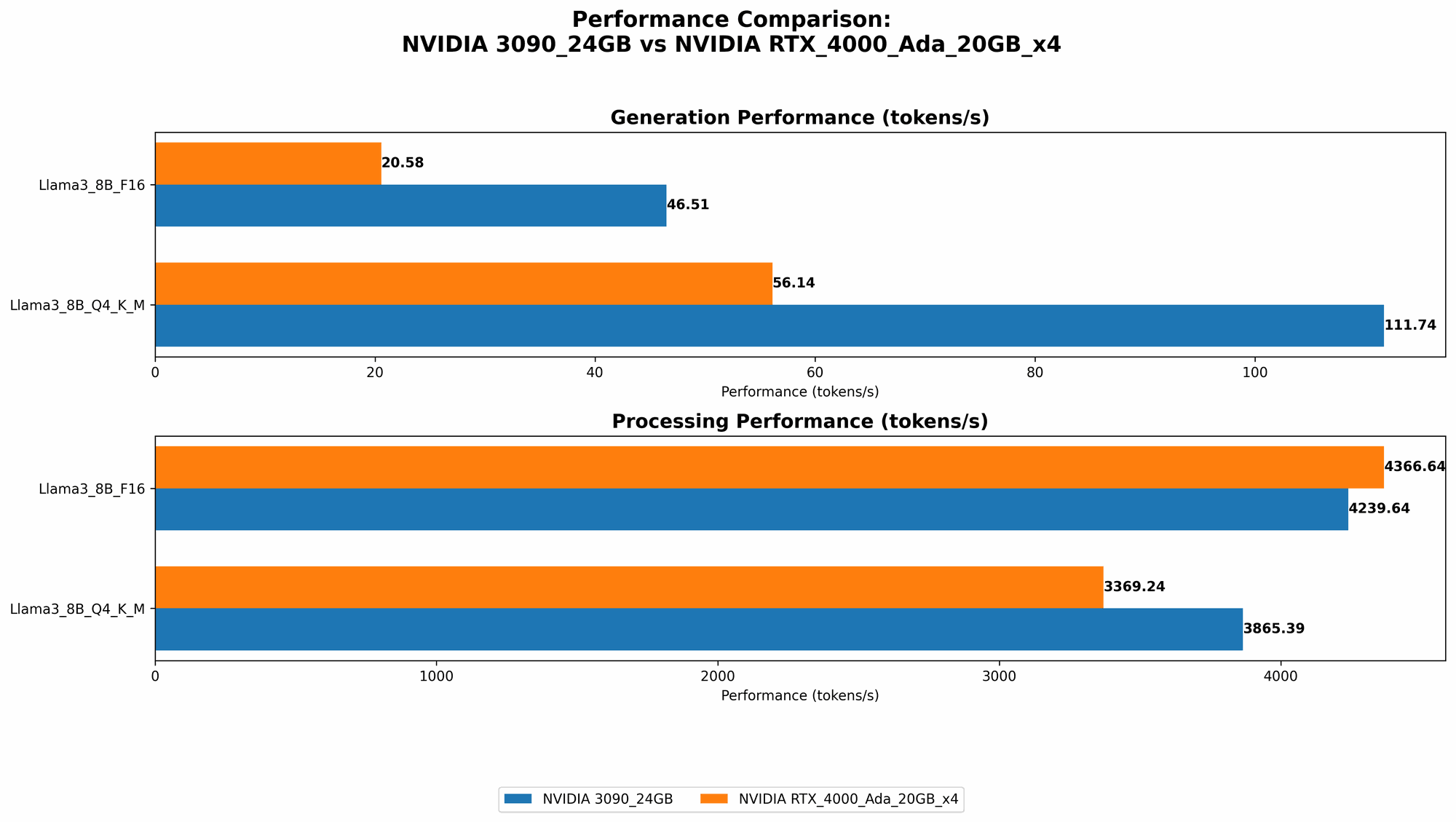

Comparison of NVIDIA 3090 24GB and NVIDIA RTX 4000 Ada 20GB x4 for Llama 3 8B

Let's start with the smaller model, Llama 3 8B. This model is a good starting point for testing and experimentation, and it's relatively lightweight, making it suitable for a wider range of hardware.

| GPU | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB | Q4_K_M | 111.74 |
| NVIDIA 3090 24GB | F16 | 46.51 |
| NVIDIA RTX 4000 Ada 20GB x4 | Q4_K_M | 56.14 |
| NVIDIA RTX 4000 Ada 20GB x4 | F16 | 20.58 |

Observations:

- Quantization: We're focusing on two common formats: Q4_K_M (the medium variant of the 4-bit "K-quant" scheme used by llama.cpp) and F16 (unquantized half-precision floating point).

- NVIDIA 3090 24GB is the clear winner: With Q4_K_M, the 3090 24GB generates 111.74 tokens/second against 56.14 for the RTX 4000 Ada 20GB x4, roughly double.

- F16 Performance: The 3090 24GB also keeps its lead with F16, and the gap is slightly wider: 46.51 versus 20.58 tokens/second, about 2.3 times faster.

Simple Analogy: Think of token generation like a race. The NVIDIA 3090 24GB is a cheetah, effortlessly leaping ahead. The RTX 4000 Ada 20GB x4 is a fast runner, but it can't quite keep up.
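To make "roughly double" concrete, here is a small sketch that divides the generation speeds from the Llama 3 8B table. The dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Token generation speeds (tokens/second) copied from the Llama 3 8B table.
generation_speed = {
    ("3090 24GB", "Q4_K_M"): 111.74,
    ("3090 24GB", "F16"): 46.51,
    ("RTX 4000 Ada 20GB x4", "Q4_K_M"): 56.14,
    ("RTX 4000 Ada 20GB x4", "F16"): 20.58,
}


def speedup(quantization: str) -> float:
    """Return how many times faster the 3090 generates tokens for one format."""
    fast = generation_speed[("3090 24GB", quantization)]
    slow = generation_speed[("RTX 4000 Ada 20GB x4", quantization)]
    return fast / slow


for quantization in ("Q4_K_M", "F16"):
    print(f"{quantization}: 3090 24GB is {speedup(quantization):.2f}x faster")
```

The ratio comes out just under 2x for Q4_K_M and a little above 2x for F16.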

Comparison of NVIDIA 3090 24GB and NVIDIA RTX 4000 Ada 20GB x4 for Llama 3 70B

Now, let's shift our attention to the larger, more complex Llama 3 70B model. Handling these massive models requires more processing power and memory.

| GPU | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| NVIDIA RTX 4000 Ada 20GB x4 | Q4_K_M | 7.33 |

Observations:

- The 3090 24GB does not have data for the Llama 3 70B model. This means that either the model was not tested on that GPU, or the performance was not recorded.

- The RTX 4000 Ada 20GB x4 delivers moderate performance: With 80GB of combined memory across its four cards, the RTX 4000 Ada 20GB x4 can load Llama 3 70B, but at 7.33 tokens/second it generates far more slowly than it does with the 8B model.

Important Note: The lack of data for the 3090 24GB with the Llama 3 70B model suggests that its 24GB of memory is insufficient for this larger model. Even at Q4_K_M, the 70B weights alone take on the order of 40GB.
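A back-of-the-envelope estimate shows why memory is the likely culprit. The sketch below assumes a nominal 70 billion parameters and an average of about 4.85 bits per weight for Q4_K_M; both numbers are approximations chosen for illustration, and the estimate ignores the KV cache and runtime overhead.

```python
def weight_size_gb(parameters: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


# Assumptions (not measured values): nominal parameter count and an
# approximate average bit width for the Q4_K_M format.
PARAMS_70B = 70e9
Q4_K_M_BITS = 4.85

# Total memory of each setup in GB; the x4 setup pools four 20GB cards.
MEMORY_GB = {"3090 24GB": 24, "RTX 4000 Ada 20GB x4": 4 * 20}

size_gb = weight_size_gb(PARAMS_70B, Q4_K_M_BITS)
fits = {name: size_gb < total for name, total in MEMORY_GB.items()}

print(f"Llama 3 70B at Q4_K_M: about {size_gb:.1f} GB of weights")
for name, ok in fits.items():
    print(f"{name}: {'fits' if ok else 'does not fit'}")
```

Under these assumptions the weights alone exceed a single 24GB card but fit comfortably in the 80GB pooled across four cards.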

Performance Analysis: Processing Speed

Token generation is crucial, but we also need to consider processing speed: how fast the GPU works through the input prompt before the first output token appears.

Comparison of NVIDIA 3090 24GB and NVIDIA RTX 4000 Ada 20GB x4 for Llama 3 8B

| GPU | Quantization | Processing Speed (tokens/second) |
|---|---|---|
| NVIDIA 3090 24GB | Q4_K_M | 3865.39 |
| NVIDIA 3090 24GB | F16 | 4239.64 |
| NVIDIA RTX 4000 Ada 20GB x4 | Q4_K_M | 3369.24 |
| NVIDIA RTX 4000 Ada 20GB x4 | F16 | 4366.64 |

Observations:

- The 3090 24GB shines in Q4_K_M processing: At 3865.39 versus 3369.24 tokens/second, the 3090 24GB is about 15% faster with Q4_K_M.

- F16 Processing: With F16, the RTX 4000 Ada 20GB x4 takes a narrow lead, 4366.64 versus 4239.64 tokens/second (about 3%).
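Processing speed and generation speed combine into the delay a user actually feels. As a rough sketch, the time for one response is the prompt length divided by processing speed plus the output length divided by generation speed. The example below plugs in the Q4_K_M numbers for Llama 3 8B from the tables; the 1000-token prompt and 200-token reply are hypothetical, and scheduling or sampling overhead is ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Throughput:
    prompt_tps: float      # processing speed, tokens/second
    generation_tps: float  # token generation speed, tokens/second

    def response_seconds(self, prompt_tokens: int, output_tokens: int) -> float:
        """Estimate total time: read the prompt, then generate the reply."""
        return (prompt_tokens / self.prompt_tps
                + output_tokens / self.generation_tps)


# Llama 3 8B, Q4_K_M figures from the benchmark tables.
SETUPS = {
    "3090 24GB": Throughput(3865.39, 111.74),
    "RTX 4000 Ada 20GB x4": Throughput(3369.24, 56.14),
}

# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 200

estimates = {
    name: t.response_seconds(PROMPT_TOKENS, OUTPUT_TOKENS)
    for name, t in SETUPS.items()
}
for name, seconds in estimates.items():
    print(f"{name}: about {seconds:.2f} s per response")
```

For a workload like this the generation term dominates, which is why the small differences in processing speed matter less than the gap in generation speed.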

Comparison of NVIDIA 3090 24GB and NVIDIA RTX 4000 Ada 20GB x4 for Llama 3 70B

| GPU | Quantization | Processing Speed (tokens/second) |
|---|---|---|
| NVIDIA RTX 4000 Ada 20GB x4 | Q4_K_M | 306.44 |

Observations:

- Similar to the token generation results: The RTX 4000 Ada 20GB x4 processes Llama 3 70B prompts at 306.44 tokens/second, roughly a tenth of its Q4_K_M rate on the 8B model, while the 3090 24GB has no data for this model.

Strengths and Weaknesses

NVIDIA 3090 24GB

Strengths:

- Excellent token generation speed for Llama 3 8B: The 3090 24GB excels in generating tokens quickly for the smaller Llama model.

- Strong processing speed for Q4_K_M: Its processing speed with Q4_K_M quantization is impressive.

- Relatively affordable: Compared to the RTX 4000 Ada 20GB x4, a single 3090 24GB is generally more budget-friendly.

Weaknesses:

- Limited memory: The 24GB of memory might be insufficient for the larger Llama 3 70B model.

- Older architecture: The 3090 24GB uses the older Ampere architecture, which might not be as efficient for newer LLMs.

NVIDIA RTX 4000 Ada 20GB x4

Strengths:

- More suited for larger models: Four 20GB cards provide 80GB of combined memory, enough to run Llama 3 70B with Q4_K_M quantization.

- Faster F16 processing: The RTX 4000 Ada 20GB x4 is slightly ahead in F16 prompt processing for Llama 3 8B, although its F16 token generation is slower.

- Newer architecture: The newer Ada architecture offers potential enhancements in efficiency and performance.

Weaknesses:

- Slower token generation speed for Llama 3 8B: The RTX 4000 Ada 20GB x4 lags behind the 3090 24GB in token generation for the smaller model.

- Higher cost: A four-card RTX 4000 Ada 20GB setup is significantly more expensive than a single 3090 24GB.

Recommendations

Here's a quick breakdown of which GPU to choose based on your needs:

- For Llama 3 8B and related models: The 3090 24GB is a cost-effective and faster option. You'll get great speed for token generation and processing, especially if you're using Q4_K_M quantization.

- For Llama 3 70B and other large LLMs: The RTX 4000 Ada 20GB x4 is a better choice. It offers the memory capacity to handle these larger models, and while its token generation speed is slower, it's still capable for experimentation.

Important Considerations:

- Your budget: The RTX 4000 Ada 20GB x4 is significantly more expensive, so factor that into your decision.

- Your workflow: If you're primarily working with smaller models like Llama 3 8B, the 3090 24GB is more than sufficient. For larger models, you'll likely need the RTX 4000 Ada 20GB x4.

- Model size: As LLMs continue to grow in size, the RTX 4000 Ada 20GB x4 might become the default choice simply due to memory requirements.

Quantization Explained

Quantization is a powerful technique that allows us to compress LLMs, making them smaller and more efficient. It's like taking a giant file and squeezing it down to a smaller size without losing too much detail. This is great for deploying LLMs on devices with limited memory.

- Q4_K_M: The Q4_K_M quantization method stores the model's weights in roughly 4 bits each, which significantly reduces the model's size. This usually costs some accuracy, but the loss is acceptable for many applications.

- F16: Half-precision floating point (F16) is not really quantization; it stores each weight in 16 bits. It preserves more accuracy than Q4_K_M, but the model takes roughly three to four times as much memory.

Choosing the right format: It depends on your needs. For speed and efficient memory usage, Q4_K_M often works great. If accuracy is paramount, F16 might be the better choice.
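To show the basic idea of squeezing weights into 4 bits, here is a deliberately simplified sketch: one block of weights shares a single scale, and each weight is rounded to an integer between -7 and 7. This is plain absmax block quantization, not the actual Q4_K_M layout, and the sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Quantize one block of floats to signed integers sharing one scale."""
    max_level = 2 ** (bits - 1) - 1           # 7 for 4 bits
    largest = max(abs(v) for v in values)
    scale = largest / max_level if largest else 1.0
    codes = [round(v / scale) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


# Made-up weights for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"largest rounding error: {max_error:.4f} (scale is {scale:.4f})")
```

Each restored weight is off by at most half the scale, which is the accuracy given up in exchange for the smaller size.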

FAQ

What is token generation speed?

Token generation speed refers to how fast a GPU can generate individual units of text, called tokens. These tokens are like the building blocks of language, and a higher token generation speed means the LLM produces outputs more rapidly.
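If you want to measure this yourself, the calculation is simply tokens produced divided by elapsed time. The sketch below times any callable that yields tokens; `fake_generator` is a hypothetical stand-in for a real model, and the `clock` parameter exists only so the timer can be swapped out.

```python
import time


def measure_tokens_per_second(generate_tokens, clock=time.perf_counter):
    """Run generate_tokens() and return (token_count, tokens_per_second)."""
    start = clock()
    count = sum(1 for _ in generate_tokens())
    elapsed = clock() - start
    return count, count / elapsed


def fake_generator():
    """Hypothetical stand-in for a model: yields 50 tokens, about 1 ms apart."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


count, rate = measure_tokens_per_second(fake_generator)
print(f"{count} tokens at about {rate:.0f} tokens/second")
```

With a real model you would pass a function that streams tokens from your inference library in place of `fake_generator`.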

What is processing speed?

Processing speed (often called prompt processing) refers to how fast a GPU can read the input prompt, and it is also measured in tokens per second. Because the whole prompt can be processed in parallel, it is usually far higher than token generation speed, as the tables above show.

What other factors besides token generation speed should you consider for LLMs?

While token generation speed is important, there are several other factors to consider when choosing hardware for LLMs:

- Memory: You need enough memory to handle the model's size and the input data.

- Processing power: Powerful GPUs can handle complex LLMs and perform calculations more quickly.

- Software compatibility: Make sure the GPU you choose is compatible with the software and libraries you need for your LLM project.
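On the memory point, the "input data" side is mostly the key-value (KV) cache, which grows with context length. The sketch below estimates its size with 16-bit values. The layer counts, KV head counts, and head dimension are assumptions taken from the published Llama 3 model configurations, so check them against the model you actually run.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """Estimate KV cache size: keys and values for every layer and token."""
    # The leading 2 accounts for storing both a key and a value tensor.
    return 2 * layers * kv_heads * head_dim * context_tokens * bytes_per_value


# Assumed architecture values (from the published Llama 3 configs).
LLAMA3_8B = dict(layers=32, kv_heads=8, head_dim=128)
LLAMA3_70B = dict(layers=80, kv_heads=8, head_dim=128)

CONTEXT_TOKENS = 8192
GIB = 2 ** 30

cache_8b = kv_cache_bytes(**LLAMA3_8B, context_tokens=CONTEXT_TOKENS)
cache_70b = kv_cache_bytes(**LLAMA3_70B, context_tokens=CONTEXT_TOKENS)

print(f"Llama 3 8B:  {cache_8b / GIB:.2f} GiB at {CONTEXT_TOKENS} tokens")
print(f"Llama 3 70B: {cache_70b / GIB:.2f} GiB at {CONTEXT_TOKENS} tokens")
```

This memory comes on top of the weights, so a model that barely fits will leave little room for long prompts.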

What are some other GPUs that can be used to run LLMs?

The GPU market is constantly evolving, and there are many other options besides the ones we discussed. Here are a few examples:

- NVIDIA GeForce RTX 4090: A high-end GPU with significant memory and processing power.

- AMD Radeon RX 7900 XTX: A powerful GPU from AMD that can handle large LLMs.

- Google TPU: Specialized AI accelerators designed for high-performance machine learning tasks.

Keywords

LLM, token generation speed, NVIDIA 3090 24GB, NVIDIA RTX 4000 Ada 20GB x4, GPU, Llama 3, quantization, Q4_K_M, F16, processing speed, performance analysis, benchmark, AI, deep learning, machine learning, hardware, software, model size, memory, budget, speed, efficiency, accuracy, cost, comparison, recommendation, FAQ.