NVIDIA 3090 24GB vs. NVIDIA A100 SXM 80GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and everyone wants a piece of the action. From drafting creative text to translating languages and answering questions, LLMs are taking the world by storm! But running these models locally can be a challenge, especially for resource-hungry LLMs like Llama 3.

Enter the world of powerful GPUs! We're going to pit two heavyweights against each other: the NVIDIA 3090 24GB and the NVIDIA A100 SXM 80GB. The 3090 is a consumer card that is popular for local LLM inference, while the A100 is a data-center accelerator. Today we're going to see which one reigns supreme in token generation speed.

Think of token generation speed as how fast an LLM "types" out its response: the faster the tokens churn, the smoother the conversation and the more enjoyable the experience!

Fasten your seatbelts, fellow developers, and get ready for a deep dive into the performance of these two GPUs. Let's see which one emerges as the champion of token generation speed.

NVIDIA 3090 24GB vs. NVIDIA A100 SXM 80GB: A Performance Showdown

Before we dive into the numbers, let's understand what we're looking at. This comparison focuses on token generation speed: how many new output tokens the model produces per second once it starts writing its response. Higher is better.
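Tokens per second is just the number of generated tokens divided by the wall-clock time spent generating them. Below is a minimal Python sketch of that measurement. It assumes your inference library can stream tokens as an iterable. `fake_model_stream` is a hypothetical stand-in for a real model and is not part of any library.

```python
import time
from typing import Iterable, Iterator


def measure_tokens_per_second(token_stream: Iterable[str]) -> float:
    """Consume a token stream and return the observed rate in tokens/second."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    if count == 0 or elapsed <= 0:
        return 0.0
    return count / elapsed


def fake_model_stream(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a real model: yields one token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"token_{i}"


if __name__ == "__main__":
    rate = measure_tokens_per_second(fake_model_stream(n_tokens=20, delay_s=0.01))
    print(f"{rate:.1f} tokens/second")  # at most ~100, since each token takes >= 10 ms
```

Timing starts before the first token arrives. With a real model, this figure therefore also includes the time spent reading the prompt. Benchmarks like the one in this article report those two phases separately.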

We'll be analyzing the performance of these GPUs using Llama 3, Meta's popular family of openly available LLMs. We'll be focusing on two key sizes:

- Llama 3 8B: A smaller, more manageable LLM, perfect for experimentation and quick tasks.

- Llama 3 70B: A larger, more powerful LLM that can tackle complex tasks and generate more sophisticated responses.

We'll be looking at the token generation speed of these models in two weight formats:

- Q4_K_M: A 4-bit quantization from llama.cpp's "k-quant" family. It makes the model much smaller and faster at a modest cost in accuracy. Think of it as a streamlined version of the model.

- F16: 16-bit floating-point weights with no quantization. It is slower and more than three times larger than Q4_K_M, but closest to the original model's quality. Many models are published at 16-bit precision.

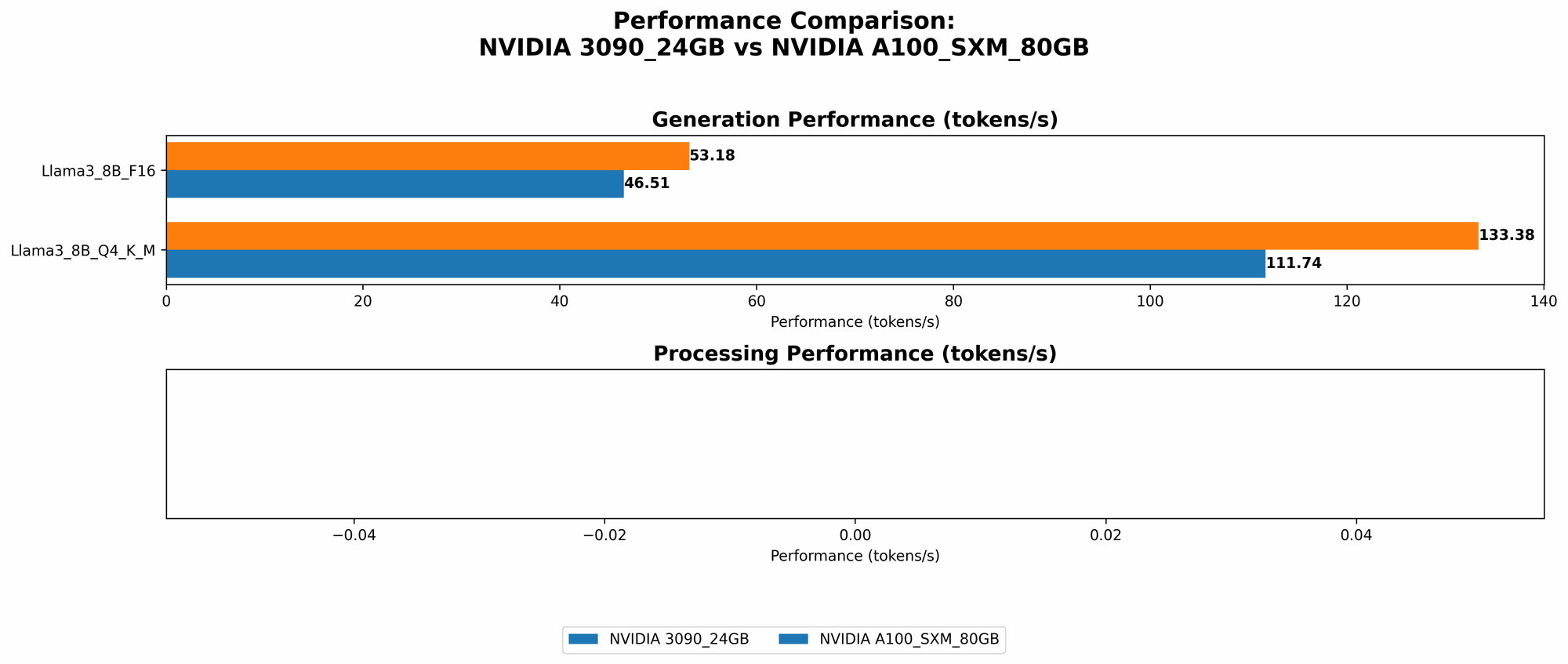

Comparison of NVIDIA 3090 24GB and NVIDIA A100 SXM 80GB Token Performance

| Model | NVIDIA 3090 24GB (tokens/second) | NVIDIA A100 SXM 80GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M Generation | 111.74 | 133.38 |
| Llama 3 8B F16 Generation | 46.51 | 53.18 |
| Llama 3 70B Q4_K_M Generation | N/A | 24.33 |
| Llama 3 70B F16 Generation | N/A | N/A |
| Llama 3 8B Q4_K_M Processing | 3865.39 | N/A |
| Llama 3 8B F16 Processing | 4239.64 | N/A |
| Llama 3 70B Q4_K_M Processing | N/A | N/A |
| Llama 3 70B F16 Processing | N/A | N/A |

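(Note: "N/A" indicates that data wasn't available for that specific model and device combination. "Generation" rows measure output token generation. "Processing" rows measure prompt processing, which is the rate at which the model reads the input prompt.)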

NVIDIA 3090 24GB vs. NVIDIA A100 SXM 80GB: Token Generation Performance Analysis

Llama 3 8B:

- Q4_K_M: The A100 SXM 80GB pulls ahead by a healthy margin, generating 133.38 tokens per second versus the 3090 24GB's 111.74. That is roughly a 19% performance edge.

- F16: The A100 SXM 80GB again leads, at 53.18 tokens per second versus 46.51 for the 3090 24GB. That works out to about 14% faster.
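The relative speedups follow directly from the generation figures in the table. Here is a short Python check. The dictionary simply restates the measured numbers, and the helper name `percent_faster` is illustrative.

```python
# Token-generation results for Llama 3 8B, copied from the table above (tokens/second).
RESULTS = {
    "Q4_K_M": {"3090 24GB": 111.74, "A100 SXM 80GB": 133.38},
    "F16": {"3090 24GB": 46.51, "A100 SXM 80GB": 53.18},
}


def percent_faster(fast: float, slow: float) -> float:
    """How much faster `fast` is than `slow`, as a percentage of `slow`."""
    return (fast - slow) / slow * 100.0


for config, speeds in RESULTS.items():
    gain = percent_faster(speeds["A100 SXM 80GB"], speeds["3090 24GB"])
    print(f"Llama 3 8B {config}: A100 SXM 80GB is {gain:.1f}% faster")
```

This prints about 19.4% for Q4_K_M and 14.3% for F16.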

Llama 3 70B:

- Q4_K_M: The A100 SXM 80GB is the only card with a result here, generating 24.33 tokens per second. There is no data for the 3090 24GB with the Llama 3 70B model.

The A100 SXM 80GB outperforms the 3090 24GB in token generation for Llama 3 8B in both formats. For Llama 3 70B, only the A100 SXM 80GB has results, and memory is the likely reason. At 4 to 5 bits per weight, a 70-billion-parameter model needs roughly 40 GB or more for its weights alone. That is well beyond the 3090's 24 GB, so the model cannot run entirely on that card. At F16 the weights take about 140 GB. That exceeds even the A100's 80 GB, which is consistent with the missing F16 result.
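A back-of-the-envelope estimate makes the memory argument concrete: weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below rests on a few assumptions. It uses nominal counts of 8 and 70 billion parameters. It also assumes an average of about 4.85 bits per weight for Q4_K_M, which is an approximation and not an exact figure for any particular file. Finally, it ignores the KV cache, activations, and runtime overhead, so a model that "fits" here still needs some headroom.

```python
# Rough weight-only memory estimate: parameters * bits_per_weight / 8 bytes.
# Assumptions: nominal parameter counts and an approximate average of 4.85 bits
# per weight for Q4_K_M. KV cache, activations, and runtime overhead are ignored.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}
VRAM_GB = {"3090 24GB": 24.0, "A100 SXM 80GB": 80.0}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


for model, n_params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(n_params, bits)
        fits = [gpu for gpu, vram in VRAM_GB.items() if size < vram]
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, fits on: {', '.join(fits) or 'neither card'}")
```

Under these assumptions, Llama 3 70B needs about 42 GB at Q4_K_M, which is more than the 3090's 24 GB but within the A100's 80 GB. At F16 it needs about 140 GB, which is more than either card. Both results line up with the N/A entries in the table.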

A Deeper Dive: Quantization Explained

Quantization might sound super technical, but it's actually a simple concept. Think of it like taking a high-resolution photo and compressing it to a smaller file size. Each of the model's weights is stored with fewer bits, which shrinks the model and speeds up inference without sacrificing too much accuracy.

- Q4_K_M: This is the "heavy compression" mode. Each weight is stored in about 4 bits, so the model becomes much smaller and faster but loses a bit of accuracy compared with a higher-precision version. Think of it as a quick sketch versus a detailed painting.

- F16: Each weight is a 16-bit floating-point number. That is half the size of 32-bit full precision and typically very close to it in quality, but several times larger than a 4-bit quantization. Think of it as a high-quality photo saved with light compression.
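To make the idea concrete, here is a deliberately simplified 4-bit quantizer in Python. A block of weights shares one scale factor, and each weight is rounded to an integer in [-7, 7]. This is an illustrative assumption and not the actual Q4_K_M format, which llama.cpp implements with a more elaborate block structure. The weight values are made up.

```python
# Simplified illustration only: NOT the actual llama.cpp Q4_K_M layout.
def quantize_block_4bit(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric absmax quantization: one float scale per block, 4-bit signed integer codes."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # map the largest weight to +/-7
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights by multiplying each code by the shared scale."""
    return [scale * c for c in codes]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]  # made-up example values
scale, codes = quantize_block_4bit(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"codes={codes}, scale={scale:.4f}, max error={max_error:.4f}")
```

With this scheme, each weight needs 4 bits plus a share of the block's scale, instead of 16 bits for F16. The rounding error per weight is at most half the scale. That lost precision is the "bit of accuracy" traded for size and speed.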

Beyond Token Generation: Processing Speeds

Let's not forget processing speed! Token generation measures how fast the model writes its response. Prompt processing measures how fast it reads your input before the first output token appears. Because all prompt tokens can be processed in parallel, this rate is typically far higher than generation speed. It's like a car's engine, working behind the scenes before the response starts to flow.

NVIDIA 3090 24GB vs. NVIDIA A100 SXM 80GB: Processing Performance Analysis

| Model | NVIDIA 3090 24GB (tokens/second) | NVIDIA A100 SXM 80GB (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M Processing | 3865.39 | N/A |
| Llama 3 8B F16 Processing | 4239.64 | N/A |
| Llama 3 70B Q4_K_M Processing | N/A | N/A |
| Llama 3 70B F16 Processing | N/A | N/A |

For the Llama 3 8B model, the 3090 24GB demonstrates impressive prompt processing speeds: 3,865.39 tokens per second with Q4_K_M and 4,239.64 with F16. Unfortunately, we don't have processing speed data for the A100 SXM 80GB, so the two cards can't be compared on this metric.
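Prompt processing and token generation combine into the latency a user actually waits through. Below is a rough Python model that uses the 3090 24GB Q4_K_M figures from the tables. It assumes throughput stays constant regardless of context length, although in practice it usually drops as the context grows. The prompt and reply lengths are arbitrary examples.

```python
def estimate_latency(prompt_tokens: int, output_tokens: int,
                     pp_tps: float, tg_tps: float) -> tuple[float, float]:
    """Return (time to read the prompt, time to generate the reply) in seconds.

    Assumes constant throughput for both phases, which is a simplification.
    """
    prefill_s = prompt_tokens / pp_tps   # prompt processing phase
    decode_s = output_tokens / tg_tps    # token generation phase
    return prefill_s, decode_s


# 3090 24GB, Llama 3 8B Q4_K_M: 3865.39 tok/s processing, 111.74 tok/s generation.
prefill, decode = estimate_latency(2000, 500, pp_tps=3865.39, tg_tps=111.74)
print(f"prompt: {prefill:.2f} s, generation: {decode:.2f} s, total: {prefill + decode:.2f} s")
```

For a 2,000-token prompt and a 500-token reply, this gives roughly 0.5 s of prompt processing and 4.5 s of generation. That is why, for chat-style workloads like this one, token generation speed tends to dominate the experience.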

Practical Recommendations and Use Cases

So, which card should you choose? It depends on your priorities and budget.

The NVIDIA 3090 24GB:

- Best for: Budget-conscious developers, experimentation with smaller LLMs like Llama 3 8B, and workloads that need fast prompt processing.

- Consider: If you need to run larger LLMs, you might need to look elsewhere.

The NVIDIA A100 SXM 80GB:

- Best for: Pushing the boundaries with larger LLMs like Llama 3 70B, prioritizing token generation speed, professional use cases.

- Consider: Significant investment, may be overkill for smaller LLMs.

FAQ: Your Burning Questions Answered

Q: What is the "best" GPU for LLMs?

A: There's no one-size-fits-all answer! The "best" GPU depends on your specific needs, model size, and budget. The A100 SXM 80GB is generally considered a top-tier choice for demanding LLMs, while the 3090 24GB provides solid performance for smaller models.

Q: What is a token and why does its speed matter?

A: A token is the basic building block of text in LLMs. Imagine them as individual words or parts of words. The faster a GPU can generate tokens, the faster an LLM can produce text, leading to a smoother and more responsive experience.

Q: What other factors should I consider besides speed?

A: Memory capacity, power consumption, cooling, and the type of application you're building are all important factors.

Q: Is the A100 SXM 80GB worth the extra cost?

A: If you're working with larger LLMs or applications with demanding performance requirements, the A100 SXM 80GB might be worth the investment. However, for simpler tasks with smaller LLMs, the 3090 24GB might be more cost-effective.

Q: What about other GPUs, like the RTX 4090?

A: The RTX 4090 is a powerful card and can be a viable option for LLMs. This article compared only the 3090 24GB and the A100 SXM 80GB to keep the analysis focused, but you can find benchmarks and comparisons for the RTX 4090 online.

Keywords

LLM, Large Language Model, Llama 3, NVIDIA, 3090 24GB, A100 SXM 80GB, GPU, Token Generation, Processing Speed, Quantization, F16, Q4_K_M, Inference, Benchmark, Performance, Comparison, Recommendation, Use Cases, FAQ, Resources, Open Source, Developer, AI, Generative AI, Machine Learning, Deep Learning, Computer Science.