NVIDIA 3090 24GB for LLM Inference: Performance and Value

Introduction

The world of large language models (LLMs) is exploding, with open models like Llama 2, Falcon, and now Llama 3 dominating the conversation. These models can generate fluent, human-like text, translate between languages, write many kinds of creative content, and answer questions informatively. To get the most out of them on your own machine, though, you need the right hardware. That's where the NVIDIA GeForce RTX 3090 24GB comes in.

This article will help you understand how a powerful GPU like the 3090 24GB performs with LLM inference, particularly focusing on Llama 3 models. We'll delve into the performance metrics, discuss the pros and cons, and explore the value proposition of this setup.

Understanding LLM Inference and Hardware Requirements

Think of an LLM as a sophisticated brain, capable of processing information and generating responses in a way that mimics human language. Just as our brains need a constant supply of energy, LLMs need powerful hardware to run well. LLM inference is the process of using an already-trained model: you give it a prompt, and it produces a response one token at a time. Each new token requires a pass through all of the model's billions of parameters. That is why inference demands so much computational horsepower and, above all, fast memory.

Here's the thing: LLMs don't read or write whole sentences at once. Text is split into "tokens," which are whole words or pieces of words, and the model consumes and produces text token by token. A bigger model has more parameters to read and compute with for every token. Larger models therefore need more memory, and on the same hardware they produce tokens more slowly.
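The token-by-token loop can be made concrete with a toy sketch of autoregressive generation. The "model" here is a hypothetical hand-written lookup table that only looks at the last token. A real LLM instead runs a full forward pass over all of its weights and the whole context to choose each next token. The loop structure is the same, however: the prompt goes in, then new tokens are produced one at a time and appended.

```python
# Toy autoregressive generation. NEXT_TOKEN is a hypothetical bigram table
# standing in for a real LLM, which would use all weights and the full context.
NEXT_TOKEN = {
    "the": "3090",
    "3090": "has",
    "has": "24GB",
    "24GB": "of",
    "of": "memory",
    "memory": "<eos>",
}

def generate(prompt_tokens, max_new_tokens):
    """Greedy decoding: predict one token, append it, repeat."""
    tokens = list(prompt_tokens)  # the prompt is consumed first
    for _ in range(max_new_tokens):
        # One "forward pass" per generated token (here just a table lookup)
        nxt = NEXT_TOKEN.get(tokens[-1], "<eos>")
        if nxt == "<eos>":  # end-of-sequence token stops generation
            break
        tokens.append(nxt)
    return tokens[len(prompt_tokens):]  # only the newly generated tokens

print(generate(["the"], 10))  # ['3090', 'has', '24GB', 'of', 'memory']
```

Each pass through the loop corresponds to one generated token. That is why generation speed is reported in tokens per second.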

NVIDIA 3090 24GB: A Beastly GPU for Language Models

The NVIDIA GeForce RTX 3090 24GB is a powerhouse GPU designed for demanding applications, including AI and machine learning. It boasts 10,496 CUDA cores and a massive 24GB of GDDR6X memory on a 384-bit bus, good for roughly 936 GB/s of memory bandwidth. For LLM inference, memory capacity determines which models fit on the card, and bandwidth largely determines how fast it can generate tokens. Together, these make the 3090 a strong choice for developers and researchers working with large language models.
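The 3090's memory bandwidth follows from two published specs: a 384-bit memory bus and GDDR6X memory running at 19.5 Gbit/s per pin. The arithmetic below is a minimal sketch of that calculation. Its result, about 936 GB/s, matters a great deal for token generation speed.

```python
# Peak memory bandwidth = bus width (bits) * per-pin data rate (Gbit/s) / 8 bits per byte
BUS_WIDTH_BITS = 384   # RTX 3090 memory interface width
DATA_RATE_GBPS = 19.5  # RTX 3090 GDDR6X per-pin data rate

def peak_bandwidth_gb_s(bus_width_bits, data_rate_gbps):
    """Theoretical peak memory bandwidth in GB/s."""
    return bus_width_bits * data_rate_gbps / 8

print(peak_bandwidth_gb_s(BUS_WIDTH_BITS, DATA_RATE_GBPS))  # 936.0
```

This is a theoretical peak. Real kernels typically achieve somewhat less.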

Benchmarking the 3090 24GB with Llama 3 Models

We'll now examine the performance of the 3090 24GB with Llama 3 8B. We focus on two key metrics, both measured in tokens per second:

- Token generation speed: how quickly the model produces new output tokens.
- Prompt processing speed: how quickly it reads in the input prompt before generation starts.

Together they show how efficiently the GPU handles both halves of a request.

Note: The data presented below is sourced from publicly available benchmarks and community discussions. Actual performance varies with the inference software, optimization techniques, and context window size.

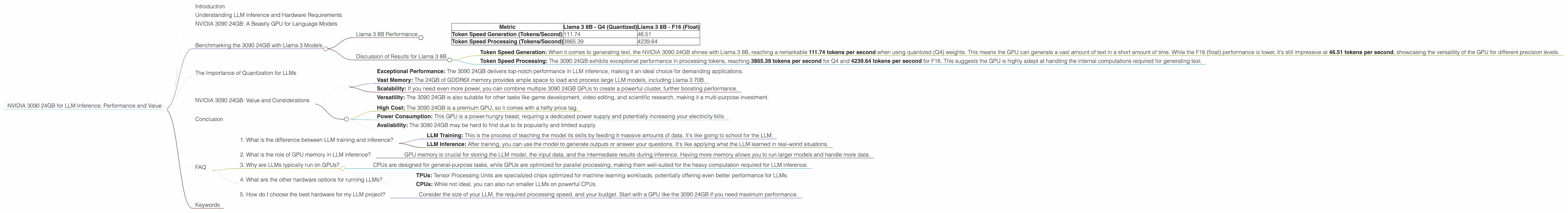

Llama 3 8B Performance

| Metric | Llama 3 8B Q4 (4-bit quantized) | Llama 3 8B F16 (16-bit float) |
|---|---|---|
| Token generation (tokens/s) | 111.74 | 46.51 |
| Prompt processing (tokens/s) | 3865.39 | 4239.64 |

Discussion of Results for Llama 3 8B

Token Generation: When it comes to generating text, the NVIDIA 3090 24GB shines with Llama 3 8B. It reaches 111.74 tokens per second with 4-bit quantized (Q4) weights. With F16 (16-bit float) weights it drops to 46.51 tokens per second, about 2.4 times slower. The gap comes from memory bandwidth: generating each token requires reading essentially all of the model's weights from VRAM, and F16 weights take three to four times as much space as Q4 weights.
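A rough rule of thumb explains the gap: each generated token requires reading every weight from VRAM once. Memory bandwidth divided by model size therefore gives a ceiling on single-stream generation speed. The sketch below applies that rule to Llama 3 8B under these assumptions:

- 936 GB/s of bandwidth
- A rounded 8.0 billion parameters
- An average of 4.5 bits per weight for Q4 (an assumption, since 4-bit formats also store scale factors)

It ignores KV cache reads and other overheads, so it gives an upper bound, not a prediction.

```python
# Bandwidth-bound ceiling on generation speed: every weight is read once per token.
BANDWIDTH_BYTES_S = 936e9  # RTX 3090 peak memory bandwidth (bytes/s)
N_PARAMS = 8.0e9           # Llama 3 8B, rounded

def max_tokens_per_second(n_params, bits_per_weight, bandwidth=BANDWIDTH_BYTES_S):
    """Upper bound on tokens/s if reading the weights is the only cost."""
    weight_bytes = n_params * bits_per_weight / 8
    return bandwidth / weight_bytes

f16 = max_tokens_per_second(N_PARAMS, 16)
q4 = max_tokens_per_second(N_PARAMS, 4.5)  # ASSUMED average bits/weight incl. scales

print(f"F16 ceiling: {f16:.1f} tok/s (measured 46.51)")   # F16 ceiling: 58.5 tok/s
print(f"Q4  ceiling: {q4:.1f} tok/s (measured 111.74)")   # Q4  ceiling: 208.0 tok/s
```

The measured F16 rate of 46.51 tokens/s is about 80% of its 58.5 tokens/s ceiling. Q4's 111.74 tokens/s is only about 54% of its 208 tokens/s ceiling, which suggests that at 4 bits other costs, such as dequantization, start to matter.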

Prompt Processing: Reading in the prompt is far faster: 3865.39 tokens per second for Q4 and 4239.64 tokens per second for F16. Here all prompt tokens can be processed in parallel, so the work is limited by compute rather than memory bandwidth. F16 comes out about 10% ahead, likely because Q4 weights must be dequantized on the fly.
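The two rates combine into a rough latency estimate for a single request: prompt tokens divided by the prompt processing rate, plus output tokens divided by the generation rate. The sketch below uses the Q4 figures from the table and a hypothetical request of 1,000 prompt tokens and 500 output tokens. It assumes the rates stay constant as the context grows, which in practice they do not exactly.

```python
# Rough single-request latency from the two measured Llama 3 8B Q4 rates.
PROMPT_TOKENS_PER_S = 3865.39  # prompt processing rate, from the table
GEN_TOKENS_PER_S = 111.74      # token generation rate, from the table

def request_seconds(prompt_tokens, output_tokens,
                    pp=PROMPT_TOKENS_PER_S, tg=GEN_TOKENS_PER_S):
    """Return (prefill seconds, decode seconds), assuming constant rates."""
    prefill = prompt_tokens / pp  # prompt tokens are processed in parallel
    decode = output_tokens / tg   # output tokens are produced one at a time
    return prefill, decode

# Hypothetical request size
prefill, decode = request_seconds(prompt_tokens=1000, output_tokens=500)
print(f"prefill {prefill:.2f} s, decode {decode:.2f} s")  # prefill 0.26 s, decode 4.47 s
```

In this example generation accounts for most of the wait. For chat-style use, the generation rate is the figure to watch.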

Key Takeaway: With Llama 3 8B, the NVIDIA 3090 24GB handles both precision modes well, but each mode wins a different metric. Q4 is the clear winner for generation speed, while F16 is slightly faster at prompt processing and keeps full precision. Either way, the card is an excellent choice for building and deploying Llama 3 8B applications.

The Importance of Quantization for LLMs

Quantization is like a "diet" for LLMs: it stores each weight with fewer bits, for example 4 bits instead of 16, usually with only a small loss in accuracy. Imagine fitting a massive amount of luggage into a small suitcase. A 4-bit model takes roughly a quarter of the memory of its F16 version. Because token generation is limited by how fast weights can be read from memory, it also generates text faster.
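The idea can be illustrated with a simplified symmetric 4-bit scheme. Weights are grouped into blocks, each block gets one scaling factor, and every weight is rounded to an integer between -8 and 7. This is a minimal sketch of the general technique, with an assumed block size of 32 and an assumed 16-bit scale. It is not the exact layout of any particular library's Q4 format.

```python
import math

BLOCK_SIZE = 32  # weights sharing one scale (assumption for this sketch)

def quantize_block(weights):
    """Map each float to an integer in [-8, 7] using one shared scale per block."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest weight maps to +/-7
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q

def dequantize_block(scale, q):
    """Recover approximate floats from the 4-bit integers."""
    return [scale * v for v in q]

# Stand-in for one block of real model weights
weights = [0.1 * math.sin(i) for i in range(BLOCK_SIZE)]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# Storage: 4 bits per weight plus one (assumed) 16-bit scale per block
bits_per_weight = (BLOCK_SIZE * 4 + 16) / BLOCK_SIZE
print(f"max abs error {max_err:.5f} <= scale/2 {scale / 2:.5f}")
print(f"{bits_per_weight} bits per weight vs 16 for F16")  # 4.5 bits per weight vs 16 for F16
```

Rounding to the nearest integer keeps each weight's error within half a scale step. The per-block scale adds half a bit per weight on top of the 4-bit values.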

The 3090 24GB benefits greatly from quantization: it lets you fit larger models into 24GB and speeds up generation. In the benchmark above, Q4 generates tokens about 2.4 times faster than F16 with Llama 3 8B, although F16 remains slightly faster at prompt processing.
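A quick way to see what "larger models" means in practice is to estimate the memory the weights need at each precision and compare it with 24GB. The sketch below makes three assumptions:

- Rounded parameter counts
- 4.5 bits per weight for Q4
- A hypothetical 2 GB of headroom for the KV cache and runtime

Real file sizes differ somewhat by format.

```python
VRAM_GB = 24
OVERHEAD_GB = 2.0  # ASSUMED headroom for KV cache, activations and CUDA context

def weight_gb(n_params, bits_per_weight):
    """Size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

def fits(n_params, bits_per_weight, vram_gb=VRAM_GB):
    """True if weights plus the assumed overhead fit in VRAM."""
    return weight_gb(n_params, bits_per_weight) + OVERHEAD_GB <= vram_gb

models = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70e9}  # parameter counts, rounded
formats = {"F16": 16, "Q4": 4.5}                     # 4.5 bits is an assumption for Q4

for name, n in models.items():
    for fmt, bits in formats.items():
        print(f"{name:<12} {fmt:<4} {weight_gb(n, bits):6.1f} GB  fits in 24 GB: {fits(n, bits)}")
```

Under these assumptions, Llama 3 8B fits at both precisions. Llama 3 70B needs about 39 GB of weights even at Q4, so it does not fit on a single card.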

NVIDIA 3090 24GB: Value and Considerations

Pros:

- Exceptional Performance: The 3090 24GB delivers top-notch performance in LLM inference, making it an ideal choice for demanding applications.

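- Vast Memory: The 24GB of GDDR6X memory holds Llama 3 8B even at full F16 precision (about 16 GB of weights), with room to spare for quantized versions. Llama 3 70B is another story: even at 4 bits it needs roughly 40 GB, so it does not fit on a single card unless part of the model is offloaded to system RAM.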

- Scalability: If you need more capacity, you can split a model across several 3090 24GB GPUs. Two cards provide 48GB, which is enough for a 4-bit Llama 3 70B.

- Versatility: The 3090 24GB is also suitable for other tasks like game development, video editing, and scientific research, making it a multi-purpose investment.
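When a model is too big for one card, inference runtimes can place different layers on different GPUs. The helper below is a hypothetical sketch of one simple policy: assign layers in proportion to each card's free memory. The 80-layer count matches Llama 3 70B, and 22 GB of free memory per card is an assumption that leaves some headroom. With a plain layer split, the cards take turns on a single request, so the main gain is memory capacity rather than raw generation speed.

```python
def split_layers(n_layers, free_gb):
    """Hypothetical helper: assign layers to GPUs in proportion to free memory."""
    total = sum(free_gb)
    # Proportional share for each GPU, rounded down
    counts = [int(n_layers * g / total) for g in free_gb]
    # Hand out layers lost to rounding, largest fractional remainder first
    order = sorted(range(len(free_gb)),
                   key=lambda i: n_layers * free_gb[i] / total - counts[i],
                   reverse=True)
    for i in order[: n_layers - sum(counts)]:
        counts[i] += 1
    return counts

# Llama 3 70B has 80 layers; 22 GB free per card is an assumption
print(split_layers(80, [22, 22]))  # [40, 40]
```

Proportional splitting keeps each card's share of the weights roughly matched to its free memory. This matters when the cards differ or one of them also drives a display.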

Cons:

- High Cost: The 3090 24GB is a premium GPU, so it comes with a hefty price tag.

- Power Consumption: This GPU is a power-hungry beast, rated at 350 W of board power. NVIDIA recommends a 750 W power supply, and sustained use can noticeably increase your electricity bill.

- Availability: The 3090 24GB is no longer in production, so today it is mostly bought on the used market, where price and condition vary.
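To put the power draw in perspective, the arithmetic below converts the 3090's 350 W board power into energy per generated token and daily energy use. It rests on several assumptions:

- The card draws its full rated power while generating; actual draw during inference is often lower.
- The generation rate is the Llama 3 8B Q4 figure from the table.
- The electricity price and the 8 hours of load per day are hypothetical.

```python
# Back-of-envelope energy figures. ASSUMES full 350 W board power during generation.
BOARD_POWER_W = 350
TOKENS_PER_S = 111.74  # Llama 3 8B Q4 generation rate from the table
HOURS_PER_DAY = 8      # hypothetical daily load
PRICE_PER_KWH = 0.15   # hypothetical electricity price (any currency)

joules_per_token = BOARD_POWER_W / TOKENS_PER_S    # W = J/s, divided by tokens/s
kwh_per_day = BOARD_POWER_W / 1000 * HOURS_PER_DAY  # kW * h = kWh
cost_per_day = kwh_per_day * PRICE_PER_KWH

print(f"{joules_per_token:.2f} J per token")                       # 3.13 J per token
print(f"{kwh_per_day:.1f} kWh/day, about {cost_per_day:.2f}/day")  # 2.8 kWh/day, about 0.42/day
```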

Value Proposition:

The NVIDIA 3090 24GB is definitely an investment, and whether it's worth it depends on your specific needs and budget. If you're working on cutting-edge LLM research or developing large-scale language applications, the performance and capabilities of this GPU can be invaluable. However, if you have limited resources or are working with smaller models, the cost might be a major deterrent.

Conclusion

The NVIDIA GeForce RTX 3090 24GB is a powerful GPU that delivers stellar performance in LLM inference, particularly with Llama 3 models. Its 24GB of memory, high memory bandwidth, and large number of CUDA cores, combined with quantized models, enable fast token generation and prompt processing. However, the high cost and power consumption are significant drawbacks.

If you're serious about running LLMs locally and want a 24GB card with strong performance, the 3090 24GB is a top contender. But if you're on a budget or working on smaller projects, other options might be more suitable.

FAQ

1. What is the difference between LLM training and inference?

- LLM Training: This is the process of teaching the model its skills by feeding it massive amounts of data. It's like going to school for the LLM.

- LLM Inference: After training, you can use the model to generate outputs or answer your questions. It's like applying what the LLM learned in real-world situations.

2. What is the role of GPU memory in LLM inference?

- GPU memory stores the model weights, the input data, and intermediate results during inference, such as the attention key/value (KV) cache, which grows with the context length. More memory lets you run larger models and handle longer contexts.
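One of the largest intermediate results is the key/value (KV) cache, which stores attention keys and values for every token in the context. The sketch below estimates its size from the model configuration, using:

- Llama 3 8B's published settings: 32 layers, 8 key/value heads, head dimension 128
- 16-bit cache entries
- The model's 8,192-token context window

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len, bytes_per_elem=2):
    """Keys + values (factor 2) for every layer, KV head and token in the context."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_elem

# Llama 3 8B: 32 layers, 8 KV heads (grouped-query attention), head dim 128, FP16 cache
per_token = kv_cache_bytes(32, 8, 128, 1)
full_ctx = kv_cache_bytes(32, 8, 128, 8192)

print(per_token // 1024, "KiB per token")      # 128 KiB per token
print(full_ctx / 2**30, "GiB at 8192 tokens")  # 1.0 GiB at 8192 tokens
```

At about 1 GiB for a full context, the cache is small next to 16 GB of F16 weights. It scales linearly with context length and with the number of concurrent requests, however.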

3. Why are LLMs typically run on GPUs?

- CPUs are designed for general-purpose tasks, while GPUs are optimized for parallel processing, making them well-suited for the heavy computation required for LLM inference.

4. What are the other hardware options for running LLMs?

- TPUs: Tensor Processing Units are specialized chips optimized for machine learning workloads, potentially offering even better performance for LLMs.

- CPUs: While not ideal, you can also run smaller LLMs on powerful CPUs.

5. How do I choose the best hardware for my LLM project?

- Consider the size of your LLM, the required processing speed, and your budget. If your models fit in 24GB, with quantization where needed, a GPU like the 3090 24GB is a strong starting point for fast local inference.
