NVIDIA 3080 Ti 12GB vs. NVIDIA RTX A6000 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large Language Models (LLMs) are changing the way we interact with technology, and for interactive use the speed at which they generate text matters. For developers and researchers, choosing hardware that matches the model is essential.

Today, we'll compare two popular GPUs head to head, the NVIDIA GeForce RTX 3080 Ti 12GB and the NVIDIA RTX A6000 48GB, to see which is faster at token generation across several LLMs. We'll analyze real-world benchmark results and break down the strengths and weaknesses of each card.

Benchmark Analysis: NVIDIA 3080 Ti 12GB vs. NVIDIA RTX A6000 48GB

Our comparison focuses on two key metrics:

- Token Generation Speed: This metric measures the number of tokens the GPU can generate per second. A higher value means faster text generation.

- Processing Speed: This metric measures how many input (prompt) tokens the GPU can process per second before generation starts. A higher value means less waiting before the first output token appears.
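The two metrics can be combined into a rough end-to-end latency estimate: the prompt is consumed at the processing speed, then each output token is produced at the generation speed. The sketch below assumes that "processing speed" refers to prompt processing throughput. The function name and the 512/256 token counts are illustrative and not part of the benchmark; the throughput figures are the Llama 3 8B 4-bit results from the table in this article.

```python
def estimate_latency_s(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough wall-clock estimate: prompt phase plus generation phase.

    Ignores model load time, sampling overhead, and batching.
    """
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# Hypothetical request: 512 prompt tokens in, 256 tokens out.
rtx_3080_ti = estimate_latency_s(512, 256, processing_tps=3556.67, generation_tps=106.71)
rtx_a6000 = estimate_latency_s(512, 256, processing_tps=3621.81, generation_tps=102.22)

print(f"3080 Ti: {rtx_3080_ti:.2f} s, A6000: {rtx_a6000:.2f} s")
```

For a request of this shape the generation phase dominates the total, which is why token generation speed is the headline metric in this comparison.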

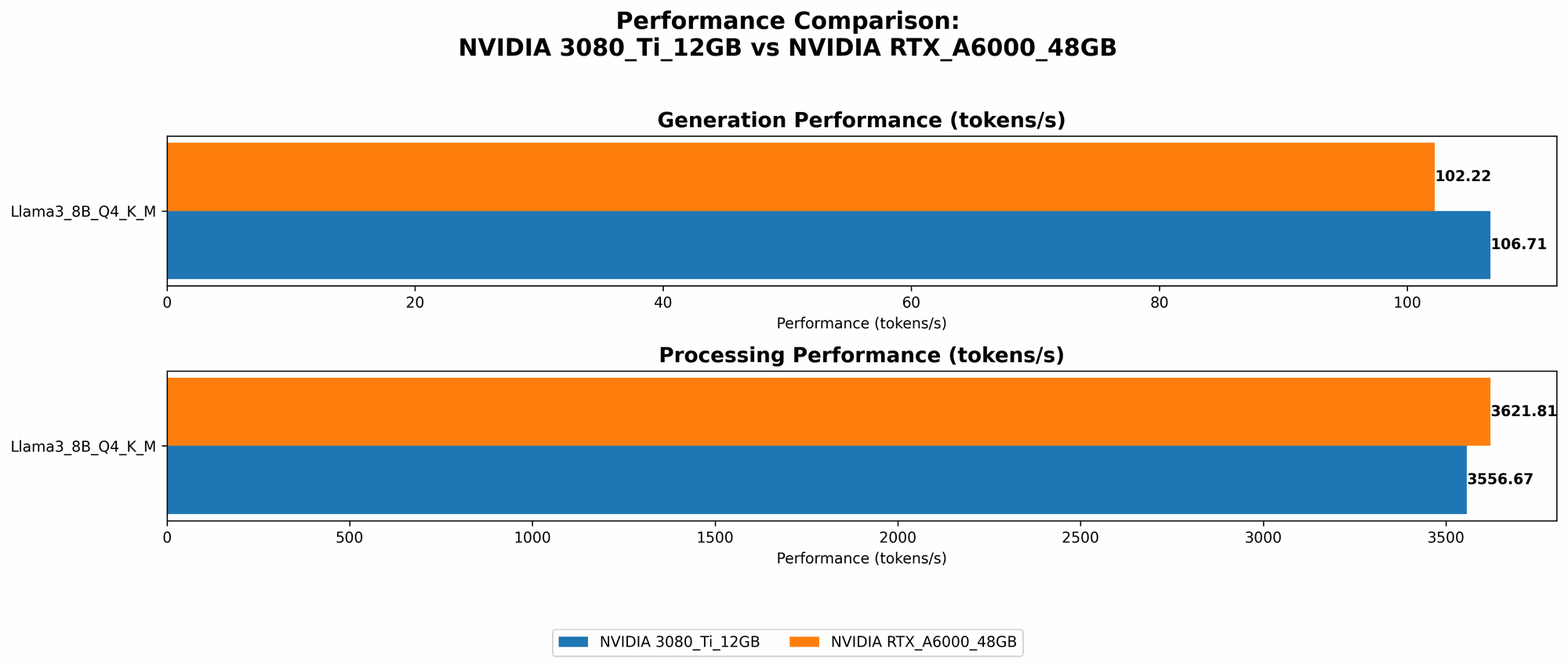

We'll evaluate these GPUs on Llama 3 8B and Llama 3 70B at two precision levels, Q4_K_M and F16. Other devices and smaller LLM sizes are outside the scope of this analysis, and the data contains no 3080 Ti results for F16 or for the 70B model. The table below summarizes the benchmark results:

| GPU | LLM Model | Quantization | Token Generation Speed (tokens/s) | Processing Speed (tokens/s) |
|---|---|---|---|---|
| NVIDIA 3080 Ti 12GB | Llama 3 8B | Q4_K_M | 106.71 | 3556.67 |
| NVIDIA RTX A6000 48GB | Llama 3 8B | Q4_K_M | 102.22 | 3621.81 |
| NVIDIA RTX A6000 48GB | Llama 3 8B | F16 | 40.25 | 4315.18 |
| NVIDIA RTX A6000 48GB | Llama 3 70B | Q4_K_M | 14.58 | 466.82 |
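To put the gaps in the table in relative terms, the short script below computes percentage differences and ratios directly from the figures above. The dictionary layout and the helper name are illustrative choices, not part of the benchmark.

```python
# (generation tokens/s, processing tokens/s), copied from the table above.
results = {
    ("3080 Ti", "8B", "Q4_K_M"): (106.71, 3556.67),
    ("A6000", "8B", "Q4_K_M"): (102.22, 3621.81),
    ("A6000", "8B", "F16"): (40.25, 4315.18),
    ("A6000", "70B", "Q4_K_M"): (14.58, 466.82),
}


def percent_diff(a, b):
    """Return how much larger a is than b, in percent."""
    return (a - b) / b * 100.0


ti_8b = results[("3080 Ti", "8B", "Q4_K_M")]
a6000_8b = results[("A6000", "8B", "Q4_K_M")]
a6000_f16 = results[("A6000", "8B", "F16")]

# 3080 Ti lead in generation speed, A6000 lead in processing speed.
gen_gap = percent_diff(ti_8b[0], a6000_8b[0])
proc_gap = percent_diff(a6000_8b[1], ti_8b[1])
# How many times faster Q4_K_M generates than F16 on the same card.
q4_vs_f16 = a6000_8b[0] / a6000_f16[0]

print(f"3080 Ti generation lead: {gen_gap:.1f}%")
print(f"A6000 processing lead:   {proc_gap:.1f}%")
print(f"Q4_K_M vs F16 on A6000:  {q4_vs_f16:.2f}x")
```

Both cross-GPU gaps on the 8B model come out in the low single-digit percent range, while the choice of precision on the same card changes generation speed by a factor of about 2.5.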

NVIDIA 3080 Ti 12GB Performance Analysis

The NVIDIA 3080 Ti 12GB shines in token generation speed for the Llama 3 8B model with Q4_K_M quantization. It achieves 106.71 tokens per second, slightly edging out the A6000 (102.22) in this specific configuration. However, with 12GB of VRAM the 3080 Ti cannot hold larger models like Llama 3 70B, and the benchmark includes no 3080 Ti results for F16 or 70B.

Strengths:

- Excellent token generation speed for smaller models: The 3080 Ti 12GB proves to be a strong contender for smaller LLMs under Q4_K_M quantization.

- Cost-effective option: Compared to the RTX A6000 48GB, the 3080 Ti 12GB is significantly more affordable.

Weaknesses:

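- Limited memory: With only 12GB of VRAM, the 3080 Ti 12GB cannot fit larger LLMs such as Llama 3 70B, even at 4-bit precision.

- No F16 results: The benchmark data has no 3080 Ti figures for F16. This is most likely a memory limit rather than a missing feature: the card supports FP16 arithmetic, but Llama 3 8B at 16 bits per weight needs roughly 16 GB for the weights alone, which exceeds 12GB.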

NVIDIA RTX A6000 48GB Performance Analysis

The NVIDIA RTX A6000 48GB proves to be a versatile powerhouse capable of handling both smaller and larger LLMs with varying levels of quantization. Its performance is particularly impressive for larger models, but don't underestimate its capabilities for smaller ones.

Strengths:

- High memory capacity: With 48GB of VRAM, the A6000 can hold larger models such as Llama 3 70B at Q4_K_M.

- Room for F16 weights: The A6000 can run Llama 3 8B at F16 precision. This avoids quantization loss but is not a speed gain for generation: the card produces 40.25 tokens/s at F16 versus 102.22 with Q4_K_M, while processing speed rises to 4315.18 tokens/s.

- Strong processing speed on 8B models: The A6000 posts 3621.81 tokens/s (Q4_K_M) and 4315.18 tokens/s (F16) on Llama 3 8B. On Llama 3 70B it drops to 466.82 tokens/s, as expected for a model roughly nine times larger.

Weaknesses:

- Higher cost: The A6000 comes with a hefty price tag, making it a less budget-friendly option for users with limited resources.

- Token generation speed for smaller models: The 3080 Ti 12GB slightly outperforms the A6000 in token generation speed for Llama 3 8B with Q4_K_M quantization (106.71 vs. 102.22 tokens/s).
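One plausible explanation for the 3080 Ti's small lead, which the benchmark data itself does not establish, is memory bandwidth: single-stream token generation typically has to read most of the model's weights for every token, so bandwidth divided by model size gives a rough upper bound on tokens per second. The sketch below uses assumptions from outside the benchmark: spec-sheet bandwidths of about 912 GB/s (3080 Ti) and 768 GB/s (A6000), and an approximate 4.9 GB size for Llama 3 8B at Q4_K_M. The function name is hypothetical.

```python
def generation_upper_bound_tps(bandwidth_gb_s, model_size_gb):
    """Rough ceiling on tokens/s if every token requires reading all weights once."""
    return bandwidth_gb_s / model_size_gb


MODEL_SIZE_GB = 4.9  # assumed size of Llama 3 8B at Q4_K_M

# name: (assumed spec-sheet bandwidth in GB/s, measured tokens/s from the table)
gpus = {
    "3080 Ti": (912.0, 106.71),
    "A6000": (768.0, 102.22),
}

bounds = {}
for name, (bandwidth, measured) in gpus.items():
    bounds[name] = generation_upper_bound_tps(bandwidth, MODEL_SIZE_GB)
    share = measured / bounds[name] * 100.0
    print(f"{name}: bound {bounds[name]:.0f} tok/s, measured {measured} ({share:.0f}% of bound)")
```

Under these assumptions both measured results sit below their bounds, and the card with the higher bandwidth has the higher ceiling, which is consistent with the ordering in the table.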

Comparison of NVIDIA 3080 Ti 12GB and NVIDIA RTX A6000 48GB

The choice between the NVIDIA 3080 Ti 12GB and the NVIDIA RTX A6000 48GB depends heavily on your specific needs and budget. Here's a breakdown to help you decide:

NVIDIA 3080 Ti 12GB:

- Ideal for: Developers or researchers working with smaller LLMs, primarily with Q4_K_M quantization, who prioritize token generation speed and budget-friendly options.

- Not ideal for: Larger LLMs, or 8B-class models at F16 precision, which exceed 12GB of VRAM.

NVIDIA RTX A6000 48GB:

- Ideal for: Developers or researchers working with a mix of smaller and larger LLMs, including 8B-class models at F16 precision, who prioritize memory capacity and processing speed and are not restricted by budget.

- Not ideal for: Users with a tight budget who only need to work with smaller LLMs.

Practical Recommendations and Use Cases

Here are some practical recommendations to guide your choice:

Smaller LLMs (e.g., Llama 3 8B) with Q4_K_M quantization: For this scenario, the NVIDIA 3080 Ti 12GB offers a strong balance of performance and affordability. You can achieve fast token generation speeds while staying within a reasonable price range.

Larger LLMs (e.g., Llama 3 70B) with Q4_K_M quantization: The NVIDIA RTX A6000 48GB is the clear winner here, since its 48GB of VRAM holds the 70B model at Q4_K_M (14.58 tokens/s in the benchmark). Note that Llama 3 70B at F16 needs roughly 140 GB for the weights alone, so it does not fit on a single A6000 either; F16 on this card is practical for 8B-class models.

Budget-conscious users working with smaller LLMs: If your budget is limited, the NVIDIA 3080 Ti 12GB offers sufficient performance for smaller LLMs with Q4_K_M quantization.

Researchers or developers working with various LLM sizes and quantization levels: The NVIDIA RTX A6000 48GB provides the flexibility and memory to handle a wider range of models and precision levels.

Going Deeper: LLMs, Quantization, and Hardware

For developers who are new to LLMs, understanding the concepts of quantization and its impact on hardware selection is crucial.

What is Quantization?

Think of it like compressing a high-resolution image using a lower-resolution format. In LLMs, quantization involves reducing the precision of numbers used to represent the model's parameters. This can significantly reduce the memory footprint of the LLM and improve inference speed, especially on GPUs.
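As a toy illustration of the idea, the sketch below quantizes a small block of weights to 4-bit signed integers with a single shared scale, then restores them. This is a deliberately simplified symmetric scheme with hypothetical function names; it is not the actual algorithm behind any specific format used in the benchmark, which uses a more elaborate block structure.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block of floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Map integer codes back to approximate float values."""
    return [c * scale for c in codes]


# Made-up weights for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.64]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:    ", codes)
print("max error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a rounding error of at most half a quantization step.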

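Q4_K_M vs. F16

Q4_K_M: A 4-bit "k-quant" format from llama.cpp, where the "M" denotes the medium variant. It stores most weights in about 4 bits each, plus a small amount of extra data for per-block scales, which reduces storage size and memory traffic on the GPU. It offers a good balance between model size and inference speed.

F16 (Half-Precision Floating Point): This format uses 16 bits to represent each parameter. Strictly speaking it is not quantization but a high-precision baseline: it needs roughly three times the memory of Q4_K_M and generates tokens more slowly (40.25 vs. 102.22 tokens/s on the A6000 in the table above), in exchange for slightly better output quality.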

Hardware Considerations

Quantization affects the hardware choices for LLMs. Here's how:

- Memory Requirements: Lower-bit formats (e.g., Q4_K_M) reduce the memory footprint, making it possible to run larger LLMs on GPUs with limited VRAM.

- Computational Requirements: Precision also affects throughput. Higher-precision weights mean more bytes to move for every generated token, which is consistent with F16 generation being slower than Q4_K_M in the table above.
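The memory point can be checked with back-of-the-envelope arithmetic: weight memory is roughly the parameter count times bits per weight, divided by 8. The sketch below makes several assumptions that are not from the benchmark: an average of about 4.85 bits per weight for the 4-bit format, a flat 1.5 GB allowance for the KV cache and runtime overhead, and rounded parameter counts of 8 and 70 billion. Function names are hypothetical.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, bits_per_weight, vram_gb, overhead_gb=1.5):
    """True if weights plus an assumed fixed overhead fit in VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) + overhead_gb <= vram_gb


Q4_BITS = 4.85  # assumed average bits per weight for the 4-bit format
F16_BITS = 16

for model, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for label, bits in [("4-bit", Q4_BITS), ("F16", F16_BITS)]:
        size = weight_memory_gb(params, bits)
        print(
            f"{model} {label}: ~{size:.1f} GB, "
            f"12GB card: {fits(params, bits, 12)}, 48GB card: {fits(params, bits, 48)}"
        )
```

Under these assumptions, only the 4-bit 8B model fits on a 12GB card, while a 48GB card also holds the 8B model at F16 and the 70B model at 4-bit, which matches the set of configurations present in the benchmark table.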

FAQs:

Q: What other factors influence LLM performance besides GPU choice?

A: Several other factors play a role, including:

- CPU Performance: The CPU is responsible for tasks like pre-processing text and post-processing LLM outputs. A powerful CPU can significantly improve the overall inference pipeline.

- Model Architecture: Different LLM architectures have varying computational demands. Some models might be more demanding on the GPU than others.

- Software Stack: The chosen software stack, including the LLM framework and libraries, can impact performance. Optimizations within the software can significantly affect speed and resource usage.

Q: What's the future of LLM hardware requirements?

A: As LLMs continue to grow in size and complexity, the demand for powerful hardware will only increase. Expect to see advancements in GPUs with even larger memory capacity, faster processing speeds, and improved support for various quantization levels. Furthermore, new technologies like specialized AI accelerators might emerge to handle the increasing computational demands of LLMs.

Q: Are there any alternatives to GPUs for running LLMs?

A: Yes! While GPUs are commonly used for LLMs, other alternatives exist:

- TPUs (Tensor Processing Units): These are custom-designed ASICs (Application-Specific Integrated Circuits) by Google specifically for machine learning tasks, including LLM inference. TPUs offer high performance for specific LLM scenarios.

- CPUs (Central Processing Units): While not as efficient as GPUs or TPUs, CPUs can still be used for running smaller LLMs, especially for simpler tasks or when resource constraints exist.

Q: Can I use a consumer-grade GPU for running LLMs?

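A: Yes, as long as the model fits in VRAM. In the benchmark above, the consumer-grade 3080 Ti slightly outpaces the A6000 on Llama 3 8B with Q4_K_M. The main constraint is memory capacity: consumer-grade GPUs typically ship with less VRAM, so larger models such as Llama 3 70B call for professional-grade GPUs like the RTX A6000 or other accelerators such as TPUs.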

Keywords:

NVIDIA RTX 3080 Ti 12GB, NVIDIA RTX A6000 48GB, LLM, Large Language Model, Token Generation Speed, Processing Speed, Llama 3 8B, Llama 3 70B, Q4_K_M Quantization, F16, GPU, Benchmark, Performance, Comparison, Budget, Use Cases, TPUs, CPUs, Hardware, Software, AI, Deep Learning, Machine Learning, Development, Research, Artificial Intelligence, Natural Language Processing, Text Generation, Chatbots, AI Assistant, AI Applications.