NVIDIA 3080 Ti 12GB vs. NVIDIA A100 PCIe 80GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large Language Models (LLMs) have taken the world by storm, revolutionizing natural language processing (NLP) and sparking the imagination of developers worldwide. But running these models locally is no small task: it takes hardware with enough memory to hold the model and enough memory bandwidth to generate text quickly.

In this article, we'll delve into the performance of two popular GPUs, the NVIDIA GeForce RTX 3080 Ti 12GB and the NVIDIA A100 PCIe 80GB, for generating tokens with Llama 3 models. We'll dissect their token generation speed, pinpoint their strengths, and analyze their weaknesses to help you make an informed decision for your LLM projects.

Comparison of NVIDIA 3080 Ti 12GB and NVIDIA A100 PCIe 80GB for Token Generation

NVIDIA 3080 Ti 12GB Performance Analysis

The NVIDIA 3080 Ti 12GB, a high-end gaming GPU, is a popular choice for those looking for a powerful card at a somewhat affordable price. But how does it stack up against the powerhouse that is the A100 PCIe 80GB?

Let's start with the numbers:

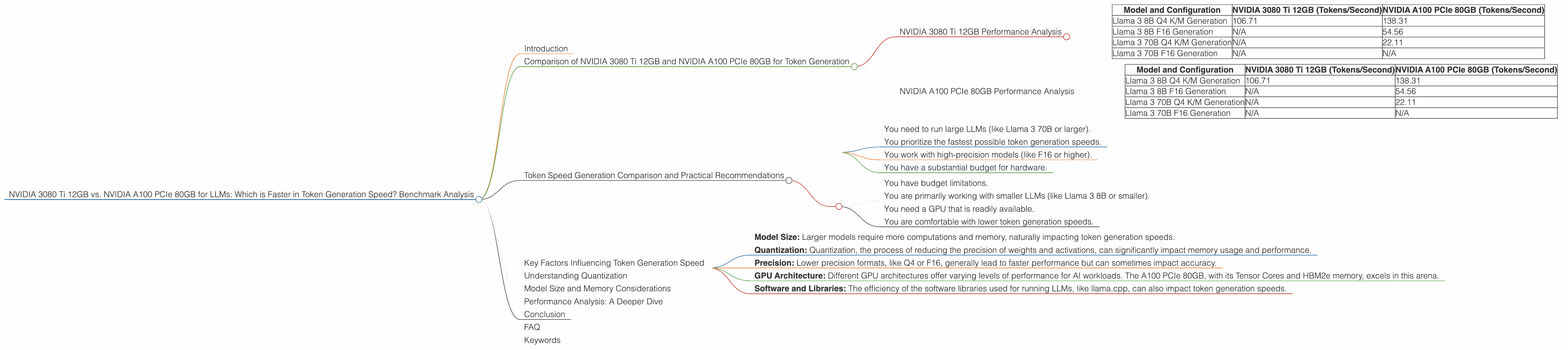

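| Model and Configuration | NVIDIA 3080 Ti 12GB (Tokens/Second) | NVIDIA A100 PCIe 80GB (Tokens/Second) |
|---|---|---|
| Llama 3 8B Q4_K_M Generation | 106.71 | 138.31 |
| Llama 3 8B F16 Generation | N/A | 54.56 |
| Llama 3 70B Q4_K_M Generation | N/A | 22.11 |
| Llama 3 70B F16 Generation | N/A | N/A |

N/A: no result reported. In each of these cases the model's weights alone (about 16 GB for 8B F16, about 42.5 GB for 70B Q4_K_M, about 141 GB for 70B F16) exceed the card's VRAM.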

Key Observations

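- Llama 3 8B: In Q4_K_M, the only configuration both cards ran, the A100 PCIe 80GB is about 30% faster (138.31 vs. 106.71 tokens/second). In F16 only the A100 has a result (54.56 tokens/second), because the roughly 16 GB of F16 weights do not fit in the 3080 Ti's 12GB.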

- Llama 3 70B: Only the A100 PCIe 80GB has a result here (22.11 tokens/second in Q4_K_M); the 3080 Ti 12GB cannot hold the model, and neither card can hold 70B in F16. The A100's 70B speed is about 6x lower than its 8B Q4_K_M speed. This is expected rather than a flaw of Q4_K_M or of the card's memory: generation has to read every weight for each new token, and 70B Q4_K_M is roughly 8.6 times larger than 8B Q4_K_M (about 42.5 GB vs. about 4.9 GB).
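The head-to-head and scaling comparisons are easier to see as ratios. Here is a short sketch that computes them from the tokens/second figures in the table; the dictionary keys are just labels chosen for this example, and `None` stands in for an N/A entry.

```python
# Reported generation speeds (tokens/second) from the benchmark table.
# None marks an N/A entry (no result reported).
RESULTS = {
    ("3080 Ti 12GB", "8B Q4_K_M"): 106.71,
    ("3080 Ti 12GB", "8B F16"): None,
    ("3080 Ti 12GB", "70B Q4_K_M"): None,
    ("A100 PCIe 80GB", "8B Q4_K_M"): 138.31,
    ("A100 PCIe 80GB", "8B F16"): 54.56,
    ("A100 PCIe 80GB", "70B Q4_K_M"): 22.11,
}


def ratio(a, b):
    """Return RESULTS[a] / RESULTS[b], or None if either entry is N/A."""
    x, y = RESULTS[a], RESULTS[b]
    if x is None or y is None:
        return None
    return x / y


if __name__ == "__main__":
    a100, ti = "A100 PCIe 80GB", "3080 Ti 12GB"
    # Head-to-head: the only configuration both cards ran.
    print(f"A100 vs 3080 Ti, 8B Q4_K_M: {ratio((a100, '8B Q4_K_M'), (ti, '8B Q4_K_M')):.2f}x")
    # Same card, 8B vs 70B at the same quantization.
    print(f"A100 8B vs 70B, Q4_K_M: {ratio((a100, '8B Q4_K_M'), (a100, '70B Q4_K_M')):.2f}x")
    # Same card and model, Q4_K_M vs F16.
    print(f"A100 8B Q4_K_M vs F16: {ratio((a100, '8B Q4_K_M'), (a100, '8B F16')):.2f}x")
    # Comparisons involving an N/A entry are undefined.
    print(ratio((a100, "8B F16"), (ti, "8B F16")))
```

With these numbers, the A100 comes out about 1.3x faster than the 3080 Ti on 8B Q4_K_M. On its own hardware, 8B runs about 6.3x faster than 70B at the same quantization, and 8B Q4_K_M runs about 2.5x faster than 8B F16.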

Strengths of the NVIDIA 3080 Ti 12GB:

- Affordability: The NVIDIA 3080 Ti 12GB offers a good balance of performance and price, making it a more accessible option for developers with budget constraints.

- Availability: The 3080 Ti 12GB is generally easier to find and purchase compared to the A100 PCIe 80GB, which is often in high demand.

Weaknesses of the NVIDIA 3080 Ti 12GB:

- Memory limitations: 12GB of VRAM rules out Llama 3 8B in F16 (about 16 GB of weights) and Llama 3 70B at any of the tested settings, which is why the 3080 Ti has no result for those configurations.

- Lower token generation speed: On Llama 3 8B Q4_K_M, the only configuration it ran, the 3080 Ti 12GB is about 23% slower than the A100 PCIe 80GB (106.71 vs. 138.31 tokens/second).
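A rough way to see which configurations fit is to multiply the parameter count by the bits stored per weight. The sketch below makes several assumptions: approximate parameter counts (8.03B and about 70.6B), approximate effective bits per weight (16 for F16, about 4.85 for Q4_K_M), and nominal VRAM sizes. It also ignores the KV cache and runtime buffers, which need memory on top of the weights. The function names are illustrative.

```python
# Rough check: do a model's weights alone fit in a GPU's VRAM?
# Assumptions (approximate, for illustration only):
#   - parameter counts for Llama 3 8B and 70B
#   - effective bits per weight for each format
#   - nominal VRAM sizes, compared in 1e9-byte GB
#   - KV cache and runtime buffers are ignored, so real usage is higher
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
VRAM_GB = {"RTX 3080 Ti": 12, "A100 PCIe": 80}


def weights_gb(model: str, fmt: str) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(model: str, fmt: str, gpu: str) -> bool:
    """True if the weights alone are smaller than the card's VRAM."""
    return weights_gb(model, fmt) < VRAM_GB[gpu]


if __name__ == "__main__":
    for model in PARAMS:
        for fmt in BITS_PER_WEIGHT:
            size = weights_gb(model, fmt)
            verdict = {gpu: fits(model, fmt, gpu) for gpu in VRAM_GB}
            print(f"{model} {fmt}: ~{size:.1f} GB -> {verdict}")
```

Under these assumptions, the check reproduces the N/A pattern in the table. Only 8B Q4_K_M fits in 12GB, while 80GB holds everything except 70B F16.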

NVIDIA A100 PCIe 80GB Performance Analysis

The NVIDIA A100 PCIe 80GB is a truly formidable GPU designed specifically for AI workloads and high-performance computing. Let's see how it shines in the LLM arena.

Key Observations:

- Faster where comparable: In the one configuration both cards ran (Llama 3 8B Q4_K_M), the A100 PCIe 80GB is faster. It is also the only one of the two that can run Llama 3 8B in F16 and Llama 3 70B in Q4_K_M.

- Generous Memory: The A100 PCIe 80GB has 80GB of HBM2e memory, enough to hold Llama 3 70B in Q4_K_M (about 42.5 GB), though not in F16 (about 141 GB).

Strengths of the NVIDIA A100 PCIe 80GB:

- High token generation speed: It reaches 138.31 tokens/second on Llama 3 8B Q4_K_M, the higher of the two cards, and a usable 22.11 tokens/second on Llama 3 70B Q4_K_M.

- Large memory capacity: Its 80GB of HBM2e lets it run 8B models unquantized in F16 and 70B models in 4-bit quantization. 70B in F16 still does not fit.

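- AI Optimization: The A100 PCIe 80GB is a data-center GPU designed for AI and HPC workloads. For single-stream inference, its main advantages over the 3080 Ti are memory capacity and bandwidth rather than any special inference feature.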

Weaknesses of the NVIDIA A100 PCIe 80GB:

- High cost: The A100 PCIe 80GB is a data-center card priced far above consumer GPUs like the 3080 Ti, putting it out of reach for most individual developers.

- Availability: The A100 PCIe 80GB can be difficult to find and purchase due to its high demand.

Token Generation Speed Comparison and Practical Recommendations

The A100 PCIe 80GB emerges as the clear winner in overall performance, particularly for larger LLMs, thanks to its memory capacity and bandwidth. However, the 3080 Ti 12GB remains a viable option for developers on a budget, especially for 8B-class models in Q4_K_M, where it delivered 106.71 tokens/second.

Here's a breakdown of practical recommendations based on your needs:

Use the NVIDIA A100 PCIe 80GB if:

- You need to run large LLMs such as Llama 3 70B (in 4-bit quantization; 70B in F16 exceeds even 80GB).

- You prioritize the fastest possible token generation speeds.

- You want to run models in F16 rather than quantized (for example, Llama 3 8B in F16).

- You have a substantial budget for hardware.

Use the NVIDIA 3080 Ti 12GB if:

- You have budget limitations.

- You primarily work with smaller LLMs (such as Llama 3 8B) in quantized formats like Q4_K_M.

- You need a GPU that is readily available.

- You are comfortable with lower token generation speeds.

Key Factors Influencing Token Generation Speed

Several key factors can significantly impact token generation speed. Let's explore these:

- Model Size: Larger models have more weights to read and multiply for every generated token, so generation slows as model size grows.

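- Quantization: Quantization reduces the number of bits used to store each weight (and, in some schemes, activations). It cuts memory use and usually speeds up generation: on the A100, Llama 3 8B runs about 2.5x faster in Q4_K_M than in F16 (138.31 vs. 54.56 tokens/second).

- Precision: Lower-precision formats (F16 instead of F32, or 4-bit instead of F16) generally run faster and use less memory, but can reduce output quality somewhat.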

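- GPU Architecture: Both cards are built on NVIDIA's Ampere architecture and include third-generation Tensor Cores. For LLM inference, the A100 PCIe 80GB's main advantage is its memory subsystem: 80GB of HBM2e with about 1.9 TB/s of bandwidth, versus 12GB of GDDR6X at 912 GB/s on the 3080 Ti 12GB.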

- Software and Libraries: The efficiency of the software libraries used for running LLMs, like llama.cpp, can also impact token generation speeds.

Understanding Quantization

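Quantization is like measuring ingredients with a coarser set of measuring cups: you lose some precision, but everything takes up less space. Instead of storing each weight as a 32-bit float (F32), a model can use 16-bit floats (F16) or roughly 4-bit integers (as in Q4_K_M). This shrinks the model. Generation is mostly limited by how many bytes must be read per token, so quantization usually speeds up token generation too.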
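To make the idea concrete, here is a deliberately simplified 4-bit scheme. Weights are split into blocks of 32, each block stores one floating-point scale, and each weight is rounded to an integer in [-8, 7]. This illustrates block-wise quantization in general, not llama.cpp's actual Q4_K_M format, which adds super-blocks, per-block minimums, and mixed bit widths. The block size and function names are assumptions for this sketch.

```python
import random

BLOCK_SIZE = 32  # weights per block (an assumption for this sketch)


def quantize_block(block):
    """Symmetric 4-bit quantization: one float scale + ints in [-8, 7]."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round each weight to the nearest multiple of scale, clamped to 4-bit range.
    q = [max(-8, min(7, round(w / scale))) for w in block]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate weights from the scale and 4-bit integers."""
    return [scale * v for v in q]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("4-bit codes:", q[:8], "...")
    print(f"max abs error: {max_err:.5f} (scale {scale:.5f})")
```

Each weight's error is at most half the block's scale. That is why per-block scales help: a block of small weights gets a small scale and therefore a small error.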

Model Size and Memory Considerations

The size of the model is a major factor when choosing a GPU. Larger models like Llama 3 70B need more memory. If a model does not fit in VRAM, it either fails to load or must have part of it offloaded to system RAM, which slows generation considerably.

The A100 PCIe 80GB, with 80GB of HBM2e memory, is the better choice for large LLMs: it holds Llama 3 70B Q4_K_M entirely in VRAM. Generation is still slower than with 8B (22.11 vs. 138.31 tokens/second), because each token requires reading roughly 8.6 times more weight data.

The 3080 Ti 12GB, on the other hand, cannot hold models whose weights exceed its 12GB. For those, it has to rely on CPU offloading, which makes generation much slower.
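Single-stream token generation is usually limited by memory bandwidth, because producing each token means reading essentially every weight once. Dividing bandwidth by the bytes read per token therefore gives an idealized upper bound. The sketch below assumes NVIDIA's published peak bandwidths (912 GB/s for the RTX 3080 Ti, about 1,935 GB/s for the A100 PCIe 80GB) and approximate Q4_K_M file sizes (about 4.9 GB for Llama 3 8B, about 42.5 GB for 70B). It ignores KV-cache reads and kernel overheads, so real speeds land below the bound.

```python
# Idealized upper bound on single-stream generation speed:
#   tokens/s <= memory bandwidth / bytes of weights read per token
# Assumed inputs (approximate):
PEAK_BANDWIDTH_GBPS = {"RTX 3080 Ti": 912.0, "A100 PCIe 80GB": 1935.0}
MODEL_SIZE_GB = {"8B Q4_K_M": 4.9, "70B Q4_K_M": 42.5}
# Measured generation speeds from the benchmark table (tokens/s).
MEASURED = {
    ("RTX 3080 Ti", "8B Q4_K_M"): 106.71,
    ("A100 PCIe 80GB", "8B Q4_K_M"): 138.31,
    ("A100 PCIe 80GB", "70B Q4_K_M"): 22.11,
}


def ceiling_tokens_per_s(gpu: str, model: str) -> float:
    """Bandwidth-bound ceiling: every weight byte is read once per token."""
    return PEAK_BANDWIDTH_GBPS[gpu] / MODEL_SIZE_GB[model]


if __name__ == "__main__":
    for (gpu, model), measured in MEASURED.items():
        bound = ceiling_tokens_per_s(gpu, model)
        print(f"{gpu}, {model}: ceiling ~{bound:.0f} tok/s, "
              f"measured {measured} tok/s ({measured / bound:.0%} of ceiling)")
```

Under these assumptions, the 3080 Ti reaches roughly 57% of its ceiling on 8B, while the A100 reaches roughly 35% on 8B and roughly 49% on 70B. One plausible reading is that fixed per-token overheads weigh more when each token is cheap. That would help explain why the A100's roughly 2.1x bandwidth advantage shows up as only about 1.3x on the 8B model. This is an interpretation, not a measured breakdown.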

Performance Analysis: A Deeper Dive

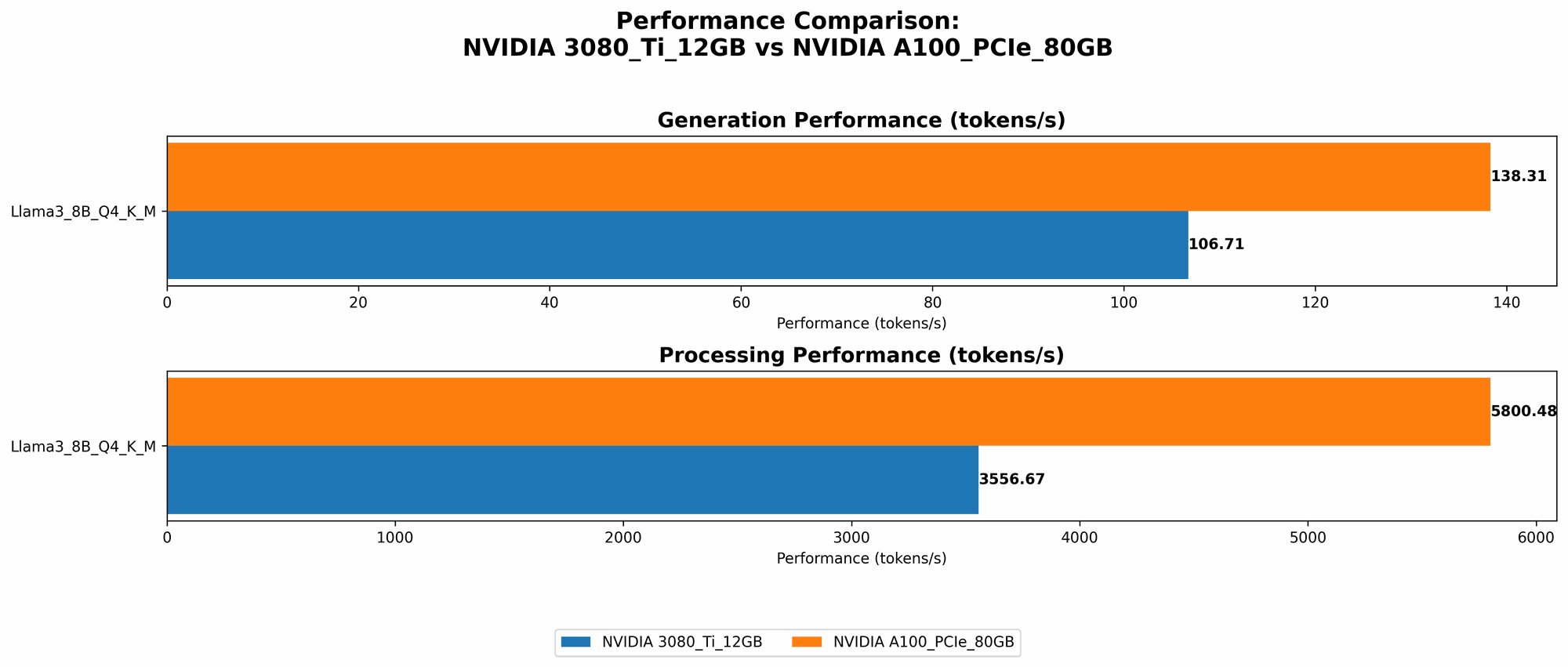

Processing and Generation:

LLM benchmarks usually report two speeds. Prompt processing is where the model ingests the input prompt in parallel batches, and token generation is where it produces output one token at a time. Prompt processing is mostly limited by compute throughput, while generation is mostly limited by memory bandwidth. The tables in this article list generation speeds only. In prompt processing, the A100 PCIe 80GB is especially strong in the F16 configuration, where its Tensor Core throughput comes into play. The 3080 Ti 12GB, by contrast, shows a larger gap between its processing and generation speeds.
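One way to see why the two phases behave differently is arithmetic intensity: the floating-point operations performed per byte of weights read. A matrix-vector product costs about 2 FLOPs per weight (one multiply, one add). During generation each weight read serves one token, whereas during prompt processing a batch of prompt tokens shares each read. The sketch below assumes F16 weights (2 bytes each) and a 512-token prompt batch, and it ignores activations and the KV cache.

```python
def arithmetic_intensity(tokens_per_weight_read: int, bytes_per_weight: float) -> float:
    """FLOPs per byte of weight traffic: 2 FLOPs (multiply + add) per weight per token."""
    return 2 * tokens_per_weight_read / bytes_per_weight


if __name__ == "__main__":
    F16_BYTES = 2.0  # assumed storage per weight
    generation = arithmetic_intensity(1, F16_BYTES)  # one new token at a time
    prompt = arithmetic_intensity(512, F16_BYTES)    # 512 prompt tokens per batch (assumed)
    print(f"generation:        {generation:.0f} FLOP/byte")
    print(f"prompt processing: {prompt:.0f} FLOP/byte")
```

At around 1 FLOP per byte, generation leaves the GPU's compute units mostly waiting on memory, so bandwidth sets the pace. At hundreds of FLOPs per byte, prompt processing is limited by compute throughput instead, which is where Tensor Cores matter.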

Quantization Impact:

Quantization has a large effect on the A100 PCIe 80GB. For Llama 3 8B, Q4_K_M generates about 2.5x faster than F16 (138.31 vs. 54.56 tokens/second), since it reads roughly a third as many bytes per token. The drop from 8B to 70B in Q4_K_M (138.31 to 22.11 tokens/second) reflects model size, not a weakness of the quantization: the 70B model has about 8.6 times more weight data to read per token. Moving to a higher-precision format would make 70B slower, not faster, and 70B in F16 does not fit in 80GB at all.

Conclusion

The 3080 Ti 12GB and the A100 PCIe 80GB offer different strengths for running LLMs. The 3080 Ti 12GB shines in its affordability and availability. The A100 PCIe 80GB is a powerhouse for large LLMs, offering exceptional performance and vast memory capacity.

Ultimately, the best choice depends on your specific needs, budget constraints, and the size of the LLM you intend to run.

FAQ

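Q: What is a Q4_K_M quantization configuration?

A: Q4_K_M is one of llama.cpp's "k-quant" formats. Weights are stored in blocks at roughly 4 bits each, with per-block scales. The "M" (medium) variant keeps some of the more sensitive tensors, such as parts of the attention value and feed-forward down-projection weights, in 6-bit Q6_K. The result averages a little under 5 bits per weight. Only the stored weights are quantized this way; activations are computed at higher precision.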
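The storage cost of the k-quant blocks can be worked out from their layout. As I read the ggml source, a Q4_K super-block holds 256 weights as 128 bytes of 4-bit values, 12 bytes of packed 6-bit scales and minimums, and two 16-bit super-block scales, while a Q6_K super-block holds 256 weights in 210 bytes. Treat these byte counts as assumptions to check against the current ggml headers.

```python
SUPER_BLOCK = 256  # weights per k-quant super-block

# Assumed byte layouts (see the ggml block_q4_K / block_q6_K structs).
Q4_K_BYTES = 2 + 2 + 12 + 128    # d, dmin (fp16), packed scales/mins, 4-bit quants
Q6_K_BYTES = 128 + 64 + 16 + 2   # low 4 bits, high 2 bits, int8 scales, d (fp16)


def bits_per_weight(block_bytes: int, weights: int = SUPER_BLOCK) -> float:
    """Average storage per weight, including the block's scale overhead."""
    return block_bytes * 8 / weights


if __name__ == "__main__":
    print("Q4_K:", bits_per_weight(Q4_K_BYTES))  # 4.5
    print("Q6_K:", bits_per_weight(Q6_K_BYTES))  # 6.5625
```

Because Q4_K_M mixes Q4_K with some Q6_K tensors (and a few other tensor types), its overall average lands somewhat above 4.5 bits per weight.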

Q: What are the benefits of using F16 precision for LLMs?

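A: F16 (half-precision floating point) stores each weight in 16 bits. Compared with F32, it halves memory use and speeds up inference, usually with negligible quality loss, which is why it is a common baseline for unquantized models. Compared with 4-bit formats like Q4_K_M, F16 preserves more accuracy but needs about three times the memory and generates more slowly. On the A100, Llama 3 8B ran at 54.56 tokens/second in F16 versus 138.31 in Q4_K_M.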
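Python's standard `struct` module can pack a value as IEEE 754 half precision (format code `e`), which makes the precision trade-off easy to see. A small sketch; the sample values are arbitrary:

```python
import struct


def to_f16(x: float) -> float:
    """Round a Python float (64-bit) to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


if __name__ == "__main__":
    for w in (0.1, 0.0123, 1.0 / 3.0):
        h = to_f16(w)
        print(f"{w!r:>22} -> F16 {h!r:<22} error {abs(w - h):.2e}")
    # Storage per weight in bytes: F32 vs. F16.
    print(struct.calcsize("<f"), struct.calcsize("<e"))
```

For example, 0.1 becomes 0.0999755859375 in F16: close enough for most weights, at half the storage of F32.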

Q: What are Tensor Cores?

A: Tensor Cores are specialized units on NVIDIA GPUs, introduced with the Volta generation, that accelerate the matrix multiply-accumulate operations that dominate LLM training and inference. Both the RTX 3080 Ti and the A100 have third-generation Tensor Cores.

Q: Can I run Llama 3 70B on a 3080 Ti 12GB?

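A: Not entirely on the GPU. Even in Q4_K_M, Llama 3 70B needs about 42.5 GB, far more than 12GB. With llama.cpp you can offload only some layers to the GPU and run the rest from system RAM, but generation will be much slower. The A100 PCIe 80GB can hold the whole 70B Q4_K_M model and ran it at 22.11 tokens/second.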
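In llama.cpp, the `--n-gpu-layers` (`-ngl`) option controls how many transformer layers are placed in VRAM. The sketch below is one rough way to pick a starting value. It assumes Llama 3 70B's 80 layers are equal in size, a Q4_K_M file of about 42.5 GB, and about 2 GB of VRAM reserved for the KV cache, CUDA context, and buffers. All of these are approximations, and `layers_that_fit` is a hypothetical helper, not part of llama.cpp.

```python
import math


def layers_that_fit(model_gb: float, n_layers: int, vram_gb: float, reserve_gb: float) -> int:
    """Estimate how many equally sized layers fit in VRAM after a fixed reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(0.0, vram_gb - reserve_gb)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


if __name__ == "__main__":
    n = layers_that_fit(model_gb=42.5, n_layers=80, vram_gb=12.0, reserve_gb=2.0)
    print(f"~{n} of 80 layers on the GPU; the rest run from system RAM")
```

With only about 18 of 80 layers on the GPU, most of the per-token weight reads come from system RAM. Expect generation to fall far below the A100's 22.11 tokens/second.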

Q: Can I use a CPU to run LLMs?

A: Yes. llama.cpp, for example, runs quantized models on CPUs. Expect much slower generation than on a GPU, mainly because system RAM has far less bandwidth than GPU VRAM, and generation speed depends heavily on memory bandwidth.

Keywords

LLMs, Large Language Models, NVIDIA 3080 Ti 12GB, NVIDIA A100 PCIe 80GB, Token Generation Speed, Benchmark Analysis, llama.cpp, Performance Comparison, Quantization, F16 Precision, Q4_K_M, Memory Capacity, Tensor Cores, GPU Architecture, Practical Recommendations, Inference, AI Workloads, NLP, Natural Language Processing