NVIDIA 3080 Ti 12GB vs. NVIDIA 4090 24GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is constantly evolving, opening up exciting possibilities for developers and researchers. These powerful models, like the popular Llama 3, demand hefty computing resources, primarily GPUs, for their training and inference. Choosing the right GPU for LLM workloads can make a significant difference in performance, especially when it comes to token generation speed, which directly impacts how fast your applications respond.

This article dives deep into a head-to-head comparison between two popular NVIDIA GPUs, the 3080 Ti 12GB and the 4090 24GB, specifically focusing on their performance running Llama 3 models. We'll analyze token generation speeds, explore their strengths and weaknesses, and offer recommendations based on your needs.

Get ready for a deep dive into the world of LLMs and GPU performance!

Understanding the Players

NVIDIA 3080 Ti 12GB

The NVIDIA 3080 Ti is a powerhouse in the world of gaming and high-performance computing. Its 12GB of GDDR6X memory (912 GB/s of bandwidth) and 10,240 CUDA cores make it a formidable option for various demanding applications.

NVIDIA 4090 24GB

The NVIDIA 4090 is the flagship of NVIDIA's RTX 40 series, with 24GB of GDDR6X memory (1,008 GB/s of bandwidth) and 16,384 CUDA cores. It is built for the most demanding consumer workloads, including LLM inference and fine-tuning of smaller models.

Benchmark Analysis

The data source for our comparison includes publicly available benchmarks from the llama.cpp repository and XiongjieDai's GPU Benchmarks on LLM Inference repository.

Let's look at the token generation speed results for both GPUs with different Llama 3 model configurations:

Token Generation Speed (Tokens/second)

| GPU | Llama 3 Model | Generation Speed (tokens/second) |
|---|---|---|
| NVIDIA 3080 Ti 12GB | Llama 3 8B (Q4_K_M) | 106.71 |
| NVIDIA 4090 24GB | Llama 3 8B (Q4_K_M) | 127.74 |
| NVIDIA 3080 Ti 12GB | Llama 3 8B (F16) | N/A |
| NVIDIA 4090 24GB | Llama 3 8B (F16) | 54.34 |
| Both GPUs | Llama 3 70B (Q4_K_M) | N/A |
| Both GPUs | Llama 3 70B (F16) | N/A |

Note:
- Generation speed depends on the model size and the weight format (Q4_K_M quantization or unquantized F16).
- Our benchmark dataset has no F16 result for the 3080 Ti 12GB and no Llama 3 70B results for either GPU.

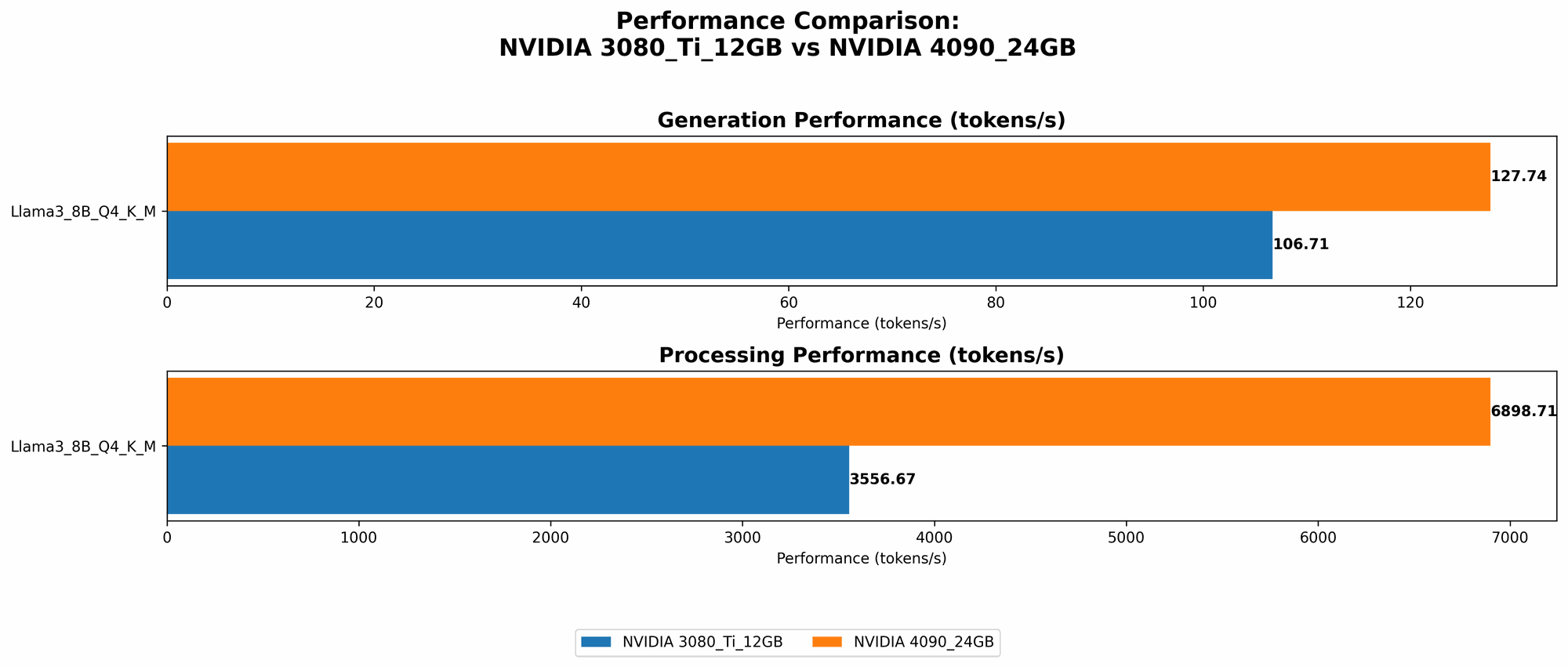

Performance Comparison of NVIDIA 3080 Ti 12GB and NVIDIA 4090 24GB

NVIDIA 4090 24GB: The Speed Demon

The NVIDIA 4090 is the faster card in this comparison. On Llama 3 8B with Q4_K_M quantization, the only configuration with results for both GPUs, it generates 127.74 tokens/s versus 106.71 tokens/s for the 3080 Ti 12GB, about 20% more.
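The "about 20%" figure is simple arithmetic on the two Q4_K_M results in the table. A minimal sketch of the calculation (the dictionary and `relative_speedup` helper are illustrative names, not part of either benchmark repository):

```python
# Llama 3 8B Q4_K_M token-generation results from the benchmark table (tokens/second).
results_q4_k_m = {
    "RTX 3080 Ti 12GB": 106.71,
    "RTX 4090 24GB": 127.74,
}

def relative_speedup(faster: float, slower: float) -> float:
    """Return how much faster `faster` is than `slower`, as a fraction (0.2 means 20%)."""
    return faster / slower - 1.0

speedup = relative_speedup(results_q4_k_m["RTX 4090 24GB"], results_q4_k_m["RTX 3080 Ti 12GB"])
print(f"4090 vs 3080 Ti on Llama 3 8B Q4_K_M: {speedup:.1%} faster")
```

This prints a speedup of 19.7%, which rounds to the 20% quoted above.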

Let's break down the results:

- Llama 3 8B (Q4_K_M): The 4090 24GB delivers a significant increase in token generation speed, producing around 20% more tokens per second compared to the 3080 Ti 12GB.

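- Llama 3 8B (F16): The 4090 generates 54.34 tokens/s with the unquantized F16 model, less than half its Q4_K_M speed. This is expected rather than a weakness of the card: token generation is largely limited by memory bandwidth, and the F16 weights (about 16 GB) are more than three times larger than the Q4_K_M weights, so every token requires reading far more data. There is no 3080 Ti F16 result to compare against, and an 8B F16 model would not fit entirely in the 3080 Ti's 12 GB of VRAM anyway.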
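The bandwidth argument can be turned into a rough back-of-the-envelope check. This sketch assumes single-stream generation reads every weight once per token, so tokens/s cannot exceed memory bandwidth divided by model size. The bandwidths are NVIDIA's published specs; the model sizes (about 4.92 GB for Q4_K_M, 16.06 GB for F16) are approximate GGUF file sizes and are assumptions, not values from the benchmark repositories. The KV cache and kernel overheads are ignored, so real results land below the ceiling.

```python
# Rough memory-bandwidth ceiling for single-stream token generation.
# Assumption: each generated token reads all weights once, so
#     ceiling_tokens_per_s ~= memory_bandwidth / model_size.
BANDWIDTH_GB_S = {"RTX 3080 Ti 12GB": 912.0, "RTX 4090 24GB": 1008.0}  # published specs
MODEL_SIZE_GB = {"Llama 3 8B Q4_K_M": 4.92, "Llama 3 8B F16": 16.06}   # approximate, assumed

# Measured results from the benchmark table (tokens/second).
MEASURED = {
    ("RTX 3080 Ti 12GB", "Llama 3 8B Q4_K_M"): 106.71,
    ("RTX 4090 24GB", "Llama 3 8B Q4_K_M"): 127.74,
    ("RTX 4090 24GB", "Llama 3 8B F16"): 54.34,
}

def bandwidth_ceiling(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Upper bound on tokens/s if generation were limited purely by weight reads."""
    return bandwidth_gb_s / model_size_gb

for (gpu, model), measured in MEASURED.items():
    ceiling = bandwidth_ceiling(BANDWIDTH_GB_S[gpu], MODEL_SIZE_GB[model])
    print(f"{gpu:18} {model:19} ceiling ~{ceiling:6.1f} t/s, "
          f"measured {measured:6.2f} t/s ({measured / ceiling:.0%} of ceiling)")
```

Because the F16 file is roughly 3.3 times larger, this simple model predicts the 4090's ceiling dropping by the same factor, which is consistent with the large F16 slowdown in the table.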

NVIDIA 3080 Ti 12GB: A Solid Performer

While the 3080 Ti 12GB might not be the absolute speed king, it still delivers excellent performance for many LLM workloads.

Here's a breakdown of its performance:

- Llama 3 8B (Q4_K_M): The 3080 Ti delivers solid token generation speed, though it falls behind the 4090.

- Llama 3 8B (F16): There is no F16 result for the 3080 Ti in our dataset. The F16 weights of Llama 3 8B take about 16 GB, more than the card's 12 GB of VRAM, so the model cannot run entirely on the GPU; part of it would have to be offloaded to the CPU, which typically slows generation considerably.

Understanding Quantization

Quantization is a technique used to reduce the size of LLMs, which helps to optimize memory consumption and speed up inference. Imagine it like compressing a large image file to make it smaller without sacrificing too much detail.
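To make the compression idea concrete, here is a toy sketch of block-wise 4-bit quantization: a block of weights shares one scale factor, and each weight is stored as a small integer. This is a simplified illustration under our own assumptions, not llama.cpp's actual Q4_K_M format, which adds super-blocks, offsets, and quantized scales. The function names are hypothetical.

```python
def quantize_block_4bit(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit integers in [-8, 7] that share one scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round each weight to the nearest multiple of `scale`, clamped to the 4-bit range.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes

def dequantize_block_4bit(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights by multiplying each code by the shared scale."""
    return [c * scale for c in codes]

weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.05, 0.26]
scale, codes = quantize_block_4bit(weights)
restored = dequantize_block_4bit(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("codes:", codes)
print(f"scale = {scale:.4f}, max reconstruction error = {max_error:.4f}")
```

Each restored weight is within half a scale step of the original, which is the "small loss of detail" in the image-compression analogy. With blocks of 32 weights and a 16-bit scale per block, storage works out to 4 + 16/32 = 4.5 bits per weight instead of 16.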

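- Q4 quantization (here Q4_K_M): Each weight is stored in about 4 bits, plus per-block scale factors, and Q4_K_M keeps some tensors at higher precision, so it averages just under 5 bits per weight. The result is a much smaller model and faster token generation, at a small cost in accuracy.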

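- F16: Weights are stored as 16-bit floating-point numbers. Strictly speaking this is not quantization but the unquantized half-precision baseline: it preserves the most accuracy but produces the largest model (about 16 GB for Llama 3 8B) and the slower generation speed of the two formats tested here.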
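The memory impact of each format can be estimated as parameters × bits per weight ÷ 8. This sketch assumes parameter counts of about 8.03B and 70.6B for Llama 3 8B and 70B, and about 4.9 bits per weight for Q4_K_M; both are approximations rather than figures from the benchmark data. It also ignores the KV cache and runtime buffers, so a model that "fits" here still needs some spare VRAM in practice.

```python
# Approximate weight memory per model/format, and which GPU's VRAM can hold it.
PARAMS = {"Llama 3 8B": 8.03e9, "Llama 3 70B": 70.6e9}  # assumed parameter counts
BITS_PER_WEIGHT = {"Q4_K_M": 4.9, "F16": 16.0}          # Q4_K_M average is approximate
VRAM_GB = {"RTX 3080 Ti": 12, "RTX 4090": 24}

def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Weight storage in gigabytes (10^9 bytes), ignoring KV cache and buffers."""
    return params * bits_per_weight / 8 / 1e9

def fits_on(size_gb: float) -> list[str]:
    """GPUs whose VRAM is larger than the weights alone."""
    return [gpu for gpu, vram in VRAM_GB.items() if size_gb < vram]

for model, params in PARAMS.items():
    for fmt, bpw in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bpw)
        gpus = ", ".join(fits_on(size)) or "neither GPU"
        print(f"{model:11} {fmt:7} ~{size:6.1f} GB -> weights fit on: {gpus}")
```

Under these assumptions, Llama 3 8B at Q4_K_M (about 5 GB) fits on both cards, 8B at F16 (about 16 GB) fits only on the 4090, and neither 70B variant fits on a single card of either kind.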

Practical Use-cases and Recommendations

Here's a breakdown of how to choose between these two GPUs based on your LLM needs:

NVIDIA 4090 24GB:

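- High-performance LLMs: For applications requiring the fastest token generation speed, the NVIDIA 4090 is the stronger choice. Note that even 24 GB does not hold Llama 3 70B at Q4_K_M (over 40 GB of weights), so running 70B models on a single 4090 requires offloading part of the model to system RAM or splitting it across several GPUs.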

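- Memory-intensive tasks: Its 24GB of GDDR6X memory holds models that exceed 12 GB, such as Llama 3 8B at F16, and leaves headroom for longer contexts. It is also the more practical of the two for fine-tuning smaller models, although 24 GB is modest for full LLM training.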

NVIDIA 3080 Ti 12GB:

- Cost-effective option: If you're looking for a more budget-friendly GPU that can still handle LLM workloads, the NVIDIA 3080 Ti 12GB is a great contender.

- Smaller models: The 3080 Ti works well with quantized models whose weights fit in 12 GB, such as Llama 3 8B at Q4_K_M (about 5 GB), where it reached 106.71 tokens/s in the benchmark above.

Choosing the Right Tool for the Job

The ideal GPU depends on your specific LLM workload and budget. If speed or VRAM capacity is your priority, the NVIDIA 4090 24GB is the clear winner. If you mainly run quantized models that fit in 12 GB and want a good balance between performance and cost, the NVIDIA 3080 Ti 12GB is a solid option.

FAQs

What are LLMs?

Large Language Models (LLMs) are a type of artificial intelligence that are trained on massive amounts of text data. They can generate human-like text, translate languages, answer questions, write different kinds of creative content, and perform many other language-related tasks.

What is Token Generation Speed?

Token generation speed refers to how many tokens (words or sub-words) an LLM generates per second. Benchmarks usually report it separately from prompt-processing speed, the rate at which the input prompt is read. It is a crucial metric for LLM inference, as it directly affects how responsive your application feels.
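A minimal sketch of how a tokens-per-second figure is computed: count the tokens a streaming generator yields and divide by wall-clock time. `tokens_per_second` and `fake_generate` are hypothetical names; `fake_generate` simulates a model with fixed delays instead of calling a real inference library.

```python
import time
from typing import Callable, Iterable, Iterator

def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time a streaming generator and return tokens yielded per second of wall-clock time."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed

def fake_generate() -> Iterator[str]:
    """Stand-in for a model: yields 50 tokens, sleeping ~10 ms before each (at most ~100 tokens/s)."""
    for i in range(50):
        time.sleep(0.01)
        yield f"tok{i}"

print(f"{tokens_per_second(fake_generate):.1f} tokens/s")
```

When timing a real model this way, the measurement also includes processing the prompt before the first token, so it will read somewhat lower than a pure generation figure.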

What is Quantization?

Quantization is a technique used to reduce the size of LLMs by representing their values with fewer bits. This can significantly speed up inference and reduce memory consumption.

When should I choose the NVIDIA 4090 24GB over the NVIDIA 3080 Ti 12GB?

If you are running large LLMs, particularly those with higher memory requirements, or if you prioritize the fastest token generation speed, the NVIDIA 4090 24GB is the way to go.

When should I choose the NVIDIA 3080 Ti 12GB over the NVIDIA 4090 24GB?

The NVIDIA 3080 Ti 12GB is a solid choice for quantized models that fit in its 12 GB of VRAM, such as Llama 3 8B at Q4_K_M, and when you are on a tighter budget. It is not a good fit for F16 models of that size, whose weights alone exceed 12 GB.

Keywords

LLMs, Llama 3, NVIDIA 3080 Ti 12GB, NVIDIA 4090 24GB, GPU, Token Generation Speed, Quantization, F16, Q4, Benchmarks, Performance Comparison, LLM Inference, GPU Performance, Deep Learning, AI, Machine Learning, Natural Language Processing.