NVIDIA 3080 Ti 12GB for LLM Inference: Performance and Value

Introduction

Large language models (LLMs) are advancing quickly, with new models released almost weekly. These systems can generate text, translate languages, write creative content, and answer questions. But running them locally is resource-intensive and calls for capable hardware. This is where the NVIDIA 3080 Ti 12GB comes in: a graphics card built for demanding games whose parallel compute and fast memory also suit LLM inference. But how does it actually fare when put to the test? Let's find out!

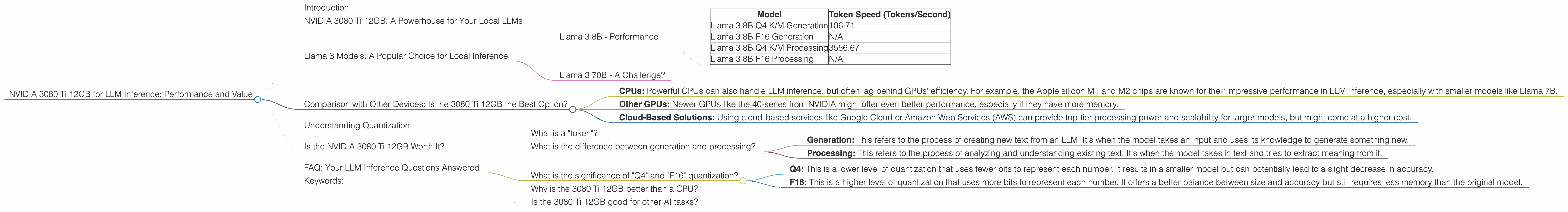

NVIDIA 3080 Ti 12GB: A Powerhouse for Your Local LLMs

The NVIDIA 3080 Ti 12GB is a high-end graphics card from NVIDIA's RTX 30 series, with 12GB of GDDR6X memory and roughly 912 GB/s of memory bandwidth. It was designed for demanding games, but the same compute and bandwidth are a real boon for running LLMs locally. Let's dive into how it performs with different models and see if it's worth the investment.

Llama 3 Models: A Popular Choice for Local Inference

The Llama 3 family of LLMs is a popular choice for local inference because they strike a balance between performance and size. Here's how the NVIDIA 3080 Ti 12GB handles the 8B and 70B versions of Llama 3:

Llama 3 8B - Performance

The 3080 Ti 12GB is a great fit for running the Llama 3 8B model. We'll look at two common weight formats: Q4 (4-bit quantized) and F16 (16-bit floating point):

| Model | Token Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4_K_M Generation | 106.71 |
| Llama 3 8B F16 Generation | N/A |
| Llama 3 8B Q4_K_M Processing | 3556.67 |
| Llama 3 8B F16 Processing | N/A |

Key Takeaways:

- Impressive Speed: With Q4 quantization, the 3080 Ti 12GB generates over 100 tokens per second, far faster than most people can read the output.

- Processing Power: Prompt processing is much faster still, at over 3,500 tokens per second, so even a prompt of several thousand tokens is read in a second or two.

- Missing Data: We don't have F16 results for Llama 3 8B on the 3080 Ti 12GB. The most likely reason is memory: at 16 bits per weight, 8 billion parameters take about 16GB for the weights alone, which exceeds the card's 12GB.
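To put the two throughput figures from the table in practical terms, here is a minimal sketch that turns them into a rough response time. The function name and the example request sizes are illustrative assumptions, and the estimate ignores model load time and any slowdown at long context lengths.

```python
# Throughput figures from the table above (Llama 3 8B, Q4), in tokens/second.
PROCESSING_TOKENS_PER_SEC = 3556.67
GENERATION_TOKENS_PER_SEC = 106.71


def estimate_latency_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: prompt processing plus token generation."""
    processing_time = prompt_tokens / PROCESSING_TOKENS_PER_SEC
    generation_time = output_tokens / GENERATION_TOKENS_PER_SEC
    return processing_time + generation_time


if __name__ == "__main__":
    # Hypothetical request: a 2,000-token prompt and a 500-token answer.
    total = estimate_latency_seconds(prompt_tokens=2000, output_tokens=500)
    print(f"Estimated latency: {total:.1f} s")
```

For this hypothetical request the estimate comes to about 5.2 seconds, of which roughly 4.7 seconds is generation. In other words, the length of the answer usually matters far more than the length of the prompt.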

Llama 3 70B - A Challenge?

The 70B version of Llama 3 is nearly nine times larger than the 8B model and needs correspondingly more memory. We don't have any performance data for the 3080 Ti 12GB with this model, and the likely reason is capacity: even at 4 bits per weight, 70 billion parameters take roughly 35GB or more for the weights alone, about three times the card's 12GB. Running it would mean offloading most of the model to system RAM, at a large cost in speed.
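A quick back-of-the-envelope check makes the memory question concrete. The sketch below estimates the size of the weights alone as parameters times bits per weight. The 4.5 bits-per-weight figure for Q4 is an assumed approximation (real 4-bit formats carry some overhead for scales), the parameter counts are rounded, and the estimate leaves out the KV cache and other runtime buffers, so real usage is somewhat higher.

```python
GPU_MEMORY_GB = 12.0  # memory on the 3080 Ti 12GB


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_vram(params_billions: float, bits_per_weight: float,
                 gpu_memory_gb: float = GPU_MEMORY_GB) -> bool:
    """True if the weights alone are smaller than the GPU's memory."""
    return weight_memory_gb(params_billions, bits_per_weight) < gpu_memory_gb


if __name__ == "__main__":
    # (label, parameters in billions, assumed bits per weight)
    configs = [
        ("Llama 3 8B Q4", 8, 4.5),
        ("Llama 3 8B F16", 8, 16),
        ("Llama 3 70B Q4", 70, 4.5),
        ("Llama 3 70B F16", 70, 16),
    ]
    for label, params, bits in configs:
        size = weight_memory_gb(params, bits)
        print(f"{label:<16} {size:6.1f} GB  fits: {fits_in_vram(params, bits)}")
```

Under these assumptions only the 8B model at Q4 fits (about 4.5GB). The 8B model at F16 needs about 16GB and the 70B model at Q4 about 39GB, which lines up with the missing rows in the benchmark data.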

Comparison with Other Devices: Is the 3080 Ti 12GB the Best Option?

While we don't have data on other devices in this article, let's consider some general points to help you compare the 3080 Ti 12GB to other options:

- CPUs and Apple Silicon: Powerful CPUs can run LLM inference, but they generally trail GPUs in throughput. Apple's M1 and M2 chips are a notable middle ground: their integrated GPU and unified memory give them good performance with smaller models in the 7B to 8B range.

- Other GPUs: Newer GPUs like the 40-series from NVIDIA might offer even better performance, especially if they have more memory.

- Cloud-Based Solutions: Using cloud-based services like Google Cloud or Amazon Web Services (AWS) can provide top-tier processing power and scalability for larger models, but might come at a higher cost.

Ultimately, the best device for you depends on your specific needs, budget, and the models you want to use.

Understanding Quantization

Quantization is a technique used to reduce the size of LLMs and speed up inference. Think of it like shrinking a large photo without losing too much detail. By using fewer bits to represent each weight (for example 4 bits instead of 16), you can cut the model's memory footprint to a fraction of the original. Because generation speed is largely limited by how fast the weights can be read from memory, a smaller model is usually a faster one too, which makes quantized models well suited to local inference on devices like the NVIDIA 3080 Ti 12GB.
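The idea can be illustrated with a toy example. The sketch below maps a handful of floating-point weights to 4-bit integer codes with a single shared scale, then restores them. This is a deliberately simplified symmetric scheme for illustration only, not the format used in the benchmarks above; real 4-bit formats use more elaborate block-wise layouts. The function names and sample weights are made up for the example.

```python
def quantize_4bit(values: list[float]) -> tuple[list[int], float]:
    """Map floats to integer codes in [-7, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the symmetric 4-bit range.
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up sample weights
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    for original, code, approx in zip(weights, codes, restored):
        print(f"{original:+.2f} -> code {code:+d} -> {approx:+.3f}")
```

Each weight is now stored as a small integer plus a shared scale, and the restored values differ from the originals by at most half a scale step. That rounding error is the "lost detail" in the photo analogy.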

Is the NVIDIA 3080 Ti 12GB Worth It?

The NVIDIA 3080 Ti 12GB is a solid choice for running smaller LLMs like Llama 3 8B locally. It offers decent performance and is a good starting point for developers interested in experimenting with local inference. However, if you plan to work with larger models like the 70B version of Llama 3, you might need a more powerful GPU or consider cloud solutions.

FAQ: Your LLM Inference Questions Answered

What is a "token"?

In the context of LLMs, a "token" is a unit of text. This could be a single word, a punctuation mark, or even a part of a word. For example, the phrase "hello world" is typically broken down into two tokens, "hello" and " world", with the leading space attached to the second word. The exact split depends on the model's tokenizer.

What is the difference between generation and processing?

- Generation: This is the stage where the model produces new text. It emits one token at a time, and each new token depends on everything that came before, so this stage is sequential and comparatively slow.

- Processing: This is the stage where the model reads your prompt before it starts answering. All prompt tokens can be handled in parallel, which is why processing speeds are so much higher than generation speeds.

What is the significance of "Q4" and "F16" quantization?

These terms describe how many bits are used to store each model weight:

- Q4: A 4-bit quantized format. It stores each weight in roughly 4 bits, which gives a much smaller and faster model at the cost of a slight decrease in accuracy.

- F16: A 16-bit floating-point format. It is usually treated as the full-precision baseline: accuracy is essentially that of the original model, but it needs about four times as much memory as Q4.

Why is the 3080 Ti 12GB better than a CPU?

While CPUs can also run LLMs, GPUs are generally more efficient at handling the massive parallel computations required for LLM inference. GPUs are specifically designed for performing many calculations simultaneously, making them ideal for running these complex AI models.

Is the 3080 Ti 12GB good for other AI tasks?

Absolutely! The 3080 Ti 12GB is a powerful piece of hardware that can handle a wide range of AI tasks, including training or fine-tuning smaller models and other computationally intensive machine learning workloads, as long as they fit within its 12GB of memory.
