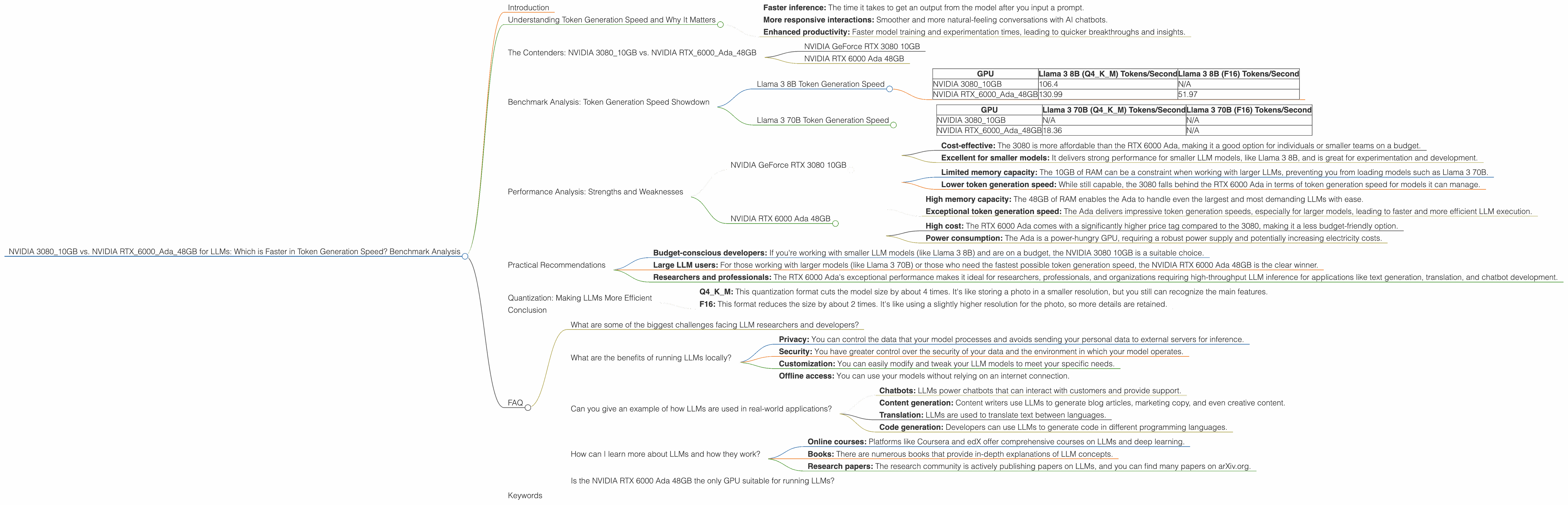

NVIDIA 3080 10GB vs. NVIDIA RTX 6000 Ada 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is booming, and for good reason. These powerful AI models can generate human-quality text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally can be a challenge. You need a powerful GPU with enough memory to handle the massive computational demands.

In this article, we're diving into a head-to-head comparison of two popular GPUs for running LLMs: the NVIDIA GeForce RTX 3080 with 10GB of VRAM and the NVIDIA RTX 6000 Ada with 48GB of VRAM. Our goal is to determine which GPU generates tokens faster, a key metric for local LLM inference.

Understanding Token Generation Speed and Why It Matters

Token generation speed, measured in tokens per second, is the rate at which an LLM produces output text. It directly determines how fast you get results from your model. It's like typing on a keyboard: the faster you type, the quicker you get your ideas down.

A higher token generation speed translates to:

- Faster inference: Less time between entering a prompt and receiving the complete output.

- More responsive interactions: Smoother and more natural-feeling conversations with AI chatbots.

- Enhanced productivity: Faster experimentation and evaluation cycles, since every prompt returns sooner. (Token generation speed measures inference, not training.)
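To make the metric concrete, here is a minimal sketch of how tokens per second could be computed from a timed generation run. The `fake_generate` function is a hypothetical stand-in for a real model's streaming output, not part of any benchmark tool used in this article.

```python
import time
from typing import Callable, Iterable, Tuple


def tokens_per_second(num_tokens: int, elapsed_seconds: float) -> float:
    """Throughput: generated tokens divided by wall-clock generation time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return num_tokens / elapsed_seconds


def measure_generation(generate: Callable[[], Iterable[str]]) -> Tuple[int, float]:
    """Count the tokens yielded by `generate` and time the whole run."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count, elapsed


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a model that streams tokens one at a time."""
    for word in "the quick brown fox jumps over the lazy dog".split():
        yield word


if __name__ == "__main__":
    count, elapsed = measure_generation(fake_generate)
    if elapsed > 0:
        print(f"{count} tokens, {tokens_per_second(count, elapsed):.0f} tokens/s")
```

With a real model, the callable would wrap the model's streaming API, and the measurement would normally exclude prompt processing so that only generation is timed.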

The Contenders: NVIDIA 3080 10GB vs. NVIDIA RTX 6000 Ada 48GB

NVIDIA GeForce RTX 3080 10GB

The NVIDIA GeForce RTX 3080 is a powerhouse GPU known for its excellent gaming performance. It has 10GB of GDDR6X memory, enough for many everyday tasks. However, that capacity can be a limiting factor when dealing with larger LLMs.

NVIDIA RTX 6000 Ada 48GB

The NVIDIA RTX 6000 Ada is a high-performance graphics card designed with professional workloads in mind. With a massive 48GB of GDDR6 memory, it's built to tackle large, memory-hungry applications, including LLMs.

Benchmark Analysis: Token Generation Speed Showdown

We compare the token generation speeds of both GPUs on popular LLMs: Llama 3 8B (in both Q4_K_M quantization and F16 half precision) and Llama 3 70B (Q4_K_M quantization).

Data Source: The data used for this analysis was collected from public repositories shared by the llama.cpp community and other open-source benchmarks.

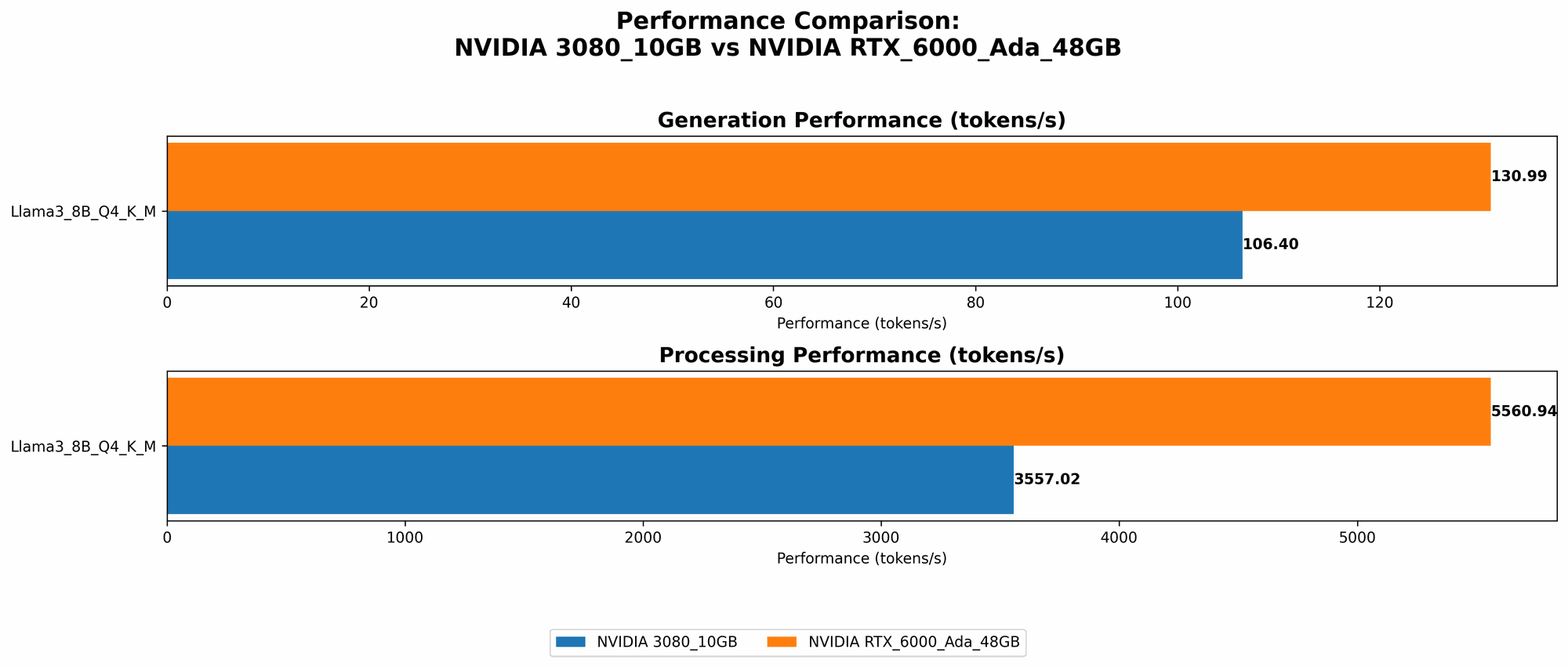

Llama 3 8B Token Generation Speed

Table 1: Llama 3 8B Token Generation Speed on NVIDIA 3080 10GB and NVIDIA RTX 6000 Ada 48GB

| GPU | Llama 3 8B (Q4_K_M) Tokens/Second | Llama 3 8B (F16) Tokens/Second |
|---|---|---|
| NVIDIA 3080 10GB | 106.4 | N/A |
| NVIDIA RTX 6000 Ada 48GB | 130.99 | 51.97 |

Analysis:

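- For Llama 3 8B at Q4_K_M, the RTX 6000 Ada generates 130.99 tokens/second against the 3080's 106.4, about 23% faster. No F16 result is available for the 3080, most likely because the F16 weights of an 8B model take roughly 16GB and exceed its 10GB of VRAM, so that column cannot be compared directly.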

- While the 3080 is capable of handling this model, the Ada's greater memory bandwidth and more powerful architecture provide a noticeable boost in performance.
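The gap in Table 1 can be turned into a relative speedup and a rough response time. The sketch below uses only the two Q4_K_M figures from the table; the 500-token reply length is an arbitrary illustration, not a benchmark setting.

```python
# Llama 3 8B Q4_K_M results from Table 1, in tokens/second.
TOKENS_PER_SECOND = {
    "RTX 3080 10GB": 106.4,
    "RTX 6000 Ada 48GB": 130.99,
}


def relative_speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first speed is than the second."""
    return fast_tps / slow_tps


def response_seconds(num_tokens: int, tps: float) -> float:
    """Seconds to generate num_tokens at a steady tokens/second rate."""
    return num_tokens / tps


if __name__ == "__main__":
    ratio = relative_speedup(
        TOKENS_PER_SECOND["RTX 6000 Ada 48GB"],
        TOKENS_PER_SECOND["RTX 3080 10GB"],
    )
    print(f"RTX 6000 Ada is {(ratio - 1) * 100:.1f}% faster")
    # 500 tokens is an assumed example reply length.
    for name, tps in TOKENS_PER_SECOND.items():
        print(f"{name}: {response_seconds(500, tps):.2f} s for 500 tokens")
```

For a reply of this length the difference is under a second, which helps put the 8B result in perspective: both cards are comfortably interactive at this model size.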

Llama 3 70B Token Generation Speed

Table 2: Llama 3 70B Token Generation Speed on NVIDIA 3080 10GB and NVIDIA RTX 6000 Ada 48GB

| GPU | Llama 3 70B (Q4_K_M) Tokens/Second | Llama 3 70B (F16) Tokens/Second |
|---|---|---|
| NVIDIA 3080 10GB | N/A | N/A |
| NVIDIA RTX 6000 Ada 48GB | 18.36 | N/A |

Analysis:

- The NVIDIA 3080 10GB has no result for Llama 3 70B, which is expected: even at Q4_K_M the weights occupy roughly 40GB, far beyond its 10GB of VRAM.

- The RTX 6000 Ada runs the 70B model at Q4_K_M at 18.36 tokens/second, fitting it within its 48GB of memory. The F16 version, at roughly 140GB, fits on neither card.
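The N/A entries in both tables line up with a simple back-of-the-envelope memory estimate. The sketch below is an approximation under stated assumptions: nominal parameter counts of 8 and 70 billion, about 4.8 bits per weight for Q4_K_M, and a hypothetical 1.5 GiB allowance for the KV cache and runtime buffers. Real usage depends on context length and the runtime.

```python
GIB = 1024 ** 3

# Assumed values for illustration only.
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}  # Q4_K_M figure is approximate
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}  # nominal parameter counts
GPUS = {"RTX 3080 10GB": 10.0, "RTX 6000 Ada 48GB": 48.0}  # VRAM treated as GiB


def weights_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    return num_params * bits_per_weight / 8 / GIB


def fits(num_params: float, bits_per_weight: float, vram_gib: float,
         overhead_gib: float = 1.5) -> bool:
    """True if weights plus an assumed fixed overhead fit in VRAM."""
    return weights_gib(num_params, bits_per_weight) + overhead_gib <= vram_gib


if __name__ == "__main__":
    for model, params in MODELS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            size = weights_gib(params, bits)
            for gpu, vram in GPUS.items():
                verdict = "fits" if fits(params, bits, vram) else "does not fit"
                print(f"{model} {fmt} (~{size:.1f} GiB) {verdict} on {gpu}")
```

Under these assumptions, the combinations that fit are exactly the ones with a measured speed in Tables 1 and 2.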

General Observations:

- In the one configuration both cards can run (Llama 3 8B at Q4_K_M), the RTX 6000 Ada is faster than the 3080. The other configurations have no 3080 result, so no direct comparison is possible there.

- The RTX 6000 Ada's higher memory capacity is a significant advantage, allowing it to run models and precisions that exceed the 3080's 10GB of VRAM.

Performance Analysis: Strengths and Weaknesses

NVIDIA GeForce RTX 3080 10GB

Strengths:

- Cost-effective: The 3080 is more affordable than the RTX 6000 Ada, making it a good option for individuals or smaller teams on a budget.

- Excellent for smaller models: It delivers strong performance for smaller LLM models, like Llama 3 8B, and is great for experimentation and development.

Weaknesses:

- Limited memory capacity: The 10GB of VRAM is a constraint when working with larger LLMs, preventing you from loading models such as Llama 3 70B.

- Lower token generation speed: While still capable, the 3080 trails the RTX 6000 Ada on the model both can run (106.4 vs. 130.99 tokens/second on Llama 3 8B Q4_K_M).

NVIDIA RTX 6000 Ada 48GB

Strengths:

- High memory capacity: The 48GB of VRAM lets the Ada run models as large as Llama 3 70B at Q4_K_M, although 70B at F16 is still out of reach.

- Exceptional token generation speed: The Ada delivers the highest speeds in this comparison (130.99 tokens/second on Llama 3 8B Q4_K_M) and remains usable at 18.36 tokens/second on the 70B model.

Weaknesses:

- High cost: The RTX 6000 Ada comes with a significantly higher price tag compared to the 3080, making it a less budget-friendly option.

- Power consumption: The Ada's board power is rated at 300W, in the same range as the 3080's 320W, so it still requires a robust power supply and adds to electricity costs under sustained load.

Practical Recommendations

- Budget-conscious developers: If you're working with smaller LLM models (like Llama 3 8B) and are on a budget, the NVIDIA 3080 10GB is a suitable choice.

- Large LLM users: For those working with larger models (like Llama 3 70B) or those who need the fastest possible token generation speed, the NVIDIA RTX 6000 Ada 48GB is the clear winner.

- Researchers and professionals: The RTX 6000 Ada's exceptional performance makes it ideal for researchers, professionals, and organizations requiring high-throughput LLM inference for applications like text generation, translation, and chatbot development.

Quantization: Making LLMs More Efficient

Let's talk about quantization, which is a technique used to compress LLM models. Think of it as shrinking a gigantic file so it takes up less storage space and loads faster. This also makes it possible for LLMs to run on devices with limited memory.

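- Q4_K_M: This llama.cpp quantization format stores weights at a little under 5 bits each on average, making the model roughly 3 times smaller than its F16 version. It's like storing a photo at a lower resolution: you can still recognize the main features.

- F16: This 16-bit half-precision format is half the size of 32-bit floats and serves as the unquantized baseline here. It's like using a higher resolution for the photo, so more detail is retained at the cost of more memory.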

Quantization is all about striking a balance between model size, memory usage, and performance. The specific quantization format that works best will depend on your model and your desired level of accuracy.
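As a rough illustration of the idea, the sketch below quantizes a small block of weights to 4-bit codes using one scale and one minimum per block. This is a deliberately simplified scheme for explanation; it is not the actual Q4_K_M layout used by llama.cpp, and the sample weights are made up.

```python
from typing import List, Tuple


def quantize_block(values: List[float]) -> Tuple[float, float, List[int]]:
    """Map floats to 4-bit codes (0..15) with a per-block scale and minimum."""
    lo, hi = min(values), max(values)
    # Fall back to 1.0 for constant blocks to avoid dividing by zero.
    scale = (hi - lo) / 15 or 1.0
    codes = [min(15, max(0, round((v - lo) / scale))) for v in values]
    return scale, lo, codes


def dequantize_block(scale: float, lo: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate floats from the 4-bit codes."""
    return [lo + c * scale for c in codes]


if __name__ == "__main__":
    weights = [-1.0, -0.5, 0.0, 0.25, 0.5, 1.0]  # made-up example weights
    scale, lo, codes = quantize_block(weights)
    restored = dequantize_block(scale, lo, codes)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("codes:", codes)
    print(f"max reconstruction error: {worst:.4f} (bound: {scale / 2:.4f})")
```

Each weight now needs 4 bits plus a share of the per-block scale and minimum, and the reconstruction error is bounded by half the scale. That trade of precision for size is the balance described above.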

Conclusion

While both GPUs boast impressive capabilities, the NVIDIA RTX 6000 Ada 48GB emerges as the clear winner in the token generation speed contest. Its ample memory capacity and powerful architecture give it the edge for handling large LLMs and delivering rapid inference times. The 3080 is still a great option for smaller models and budget-conscious users.

FAQ

What are some of the biggest challenges facing LLM researchers and developers?

One of the biggest challenges is developing LLMs with high accuracy and efficiency. This involves optimizing the model architecture, finding the right training data, and achieving a balance between performance and memory usage. Another challenge is ensuring the responsible development and deployment of LLMs, addressing issues like bias and misinformation.

What are the benefits of running LLMs locally?

Running LLMs locally offers several benefits:

- Privacy: You control the data that your model processes and avoid sending personal data to external servers for inference.

- Security: You have greater control over the security of your data and the environment in which your model operates.

- Customization: You can easily modify and tweak your LLM models to meet your specific needs.

- Offline access: You can use your models without relying on an internet connection.

Can you give an example of how LLMs are used in real-world applications?

LLMs have numerous real-world applications:

- Chatbots: LLMs power chatbots that can interact with customers and provide support.

- Content generation: Content writers use LLMs to generate blog articles, marketing copy, and even creative content.

- Translation: LLMs are used to translate text between languages.

- Code generation: Developers can use LLMs to generate code in different programming languages.

How can I learn more about LLMs and how they work?

There are many resources available to learn about LLMs:

- Online courses: Platforms like Coursera and edX offer comprehensive courses on LLMs and deep learning.

- Books: There are numerous books that provide in-depth explanations of LLM concepts.

- Research papers: The research community is actively publishing papers on LLMs, and you can find many papers on arXiv.org.

Is the NVIDIA RTX 6000 Ada 48GB the only GPU suitable for running LLMs?

No, there are other GPUs that can handle LLMs, such as the NVIDIA RTX 4090 and the AMD Radeon RX 7900 XT. However, the RTX 6000 Ada 48GB stands out due to its high memory capacity and impressive performance.

Keywords

Large Language Models, LLM, GPU, NVIDIA GeForce RTX 3080, NVIDIA RTX 6000 Ada 48GB, Token Generation Speed, Benchmark, Quantization, Llama 3 8B, Llama 3 70B, Deep Learning, AI, Artificial Intelligence, Machine Learning, Inference, Performance, Efficiency, Memory Capacity, Processing, Hardware, Computing, Applications, Chatbots, Translation, Content Generation, Code Generation.