NVIDIA 3080 10GB vs. NVIDIA RTX 5000 Ada 32GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

In the fast-moving world of Large Language Models (LLMs), the push for higher performance and efficiency is relentless. One factor that strongly shapes how responsive an LLM feels is token generation speed: how quickly the model produces new text from a given input. This article compares two popular GPUs head to head, the NVIDIA 3080 10GB and the NVIDIA RTX 5000 Ada 32GB, to see which is faster at token generation with Llama 3 models.

This comparison matters for developers and enthusiasts who want to run LLMs locally for applications such as chatbots, text summarization, and code generation, and who want to understand how hardware choices affect performance. By analyzing token throughput and the strengths and weaknesses of each GPU, this article aims to help you make an informed hardware decision.

Comparison of NVIDIA 3080 10GB and NVIDIA RTX 5000 Ada 32GB for Llama 3 Models

Let's go on a wild ride through the fascinating world of token generation with our two contenders: the NVIDIA 3080 10GB and the NVIDIA RTX 5000 Ada 32GB! We'll put them through their paces with the popular Llama 3 models, examining their performance in both token generation and processing.

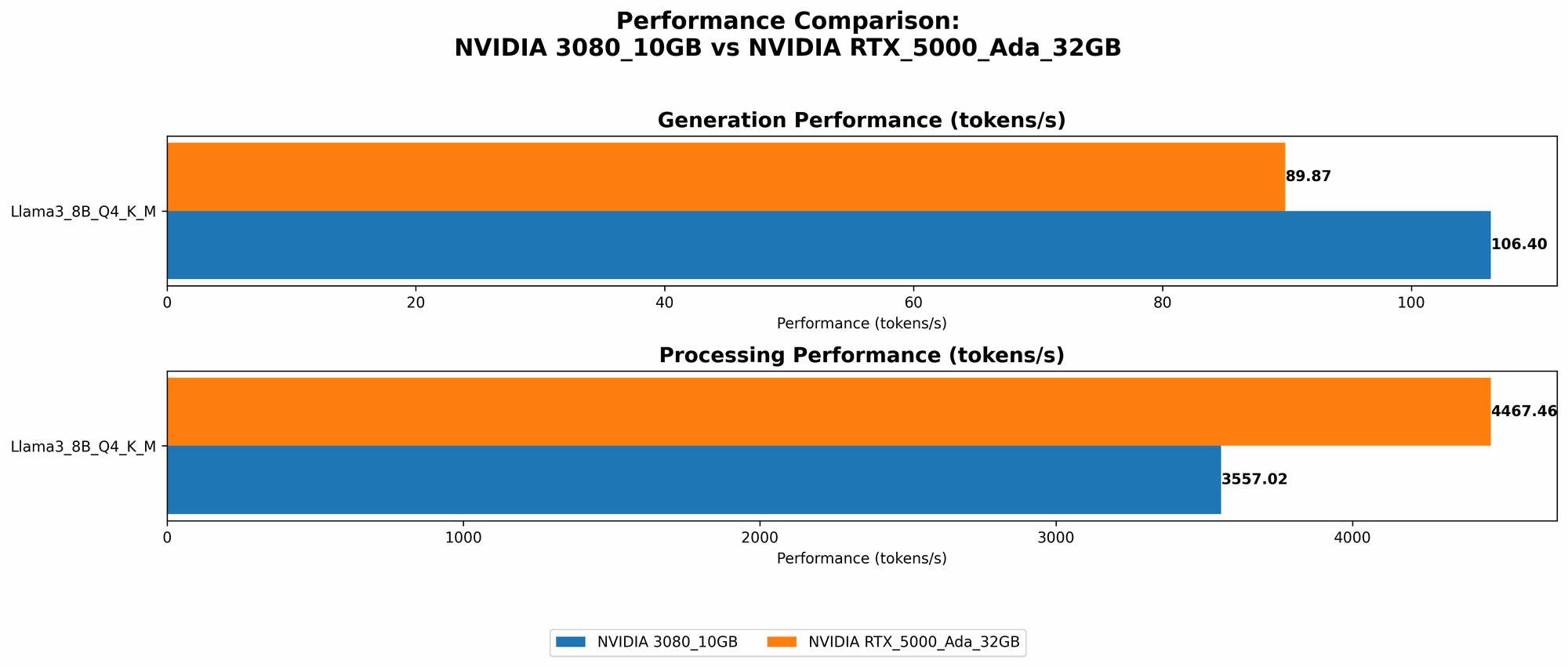

Token Generation Performance: A Tale of Two GPUs

Let's start with the core functionality – token generation speed. Here's a breakdown of the performance based on our benchmarks:

| GPU | Model | Token Generation Speed (tokens/second) |
|---|---|---|
| 3080 10GB | Llama 3 8B Q4_K_M | 106.4 |
| RTX 5000 Ada 32GB | Llama 3 8B Q4_K_M | 89.87 |
| RTX 5000 Ada 32GB | Llama 3 8B F16 | 32.67 |

The results show that the 3080 10GB outperforms the RTX 5000 Ada 32GB on Llama 3 8B with Q4_K_M quantization: 106.4 versus 89.87 tokens/second, roughly 18% faster. A likely explanation is memory bandwidth. Generating each token requires reading the model weights from VRAM, so token generation tends to be bandwidth-bound, and the 3080 10GB has the higher memory bandwidth of the two cards.
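To make the throughput figures more tangible, here is a minimal Python sketch that converts the speeds from the table above into per-token latency and the time needed for a reply. The 500-token reply length and the helper names are hypothetical, chosen only for illustration, and the estimate ignores prompt processing and any runtime overhead.

```python
# Token generation speeds (tokens/second) copied from the table above.
GENERATION_SPEED = {
    ("3080 10GB", "Q4_K_M"): 106.4,
    ("RTX 5000 Ada 32GB", "Q4_K_M"): 89.87,
    ("RTX 5000 Ada 32GB", "F16"): 32.67,
}


def ms_per_token(tokens_per_second: float) -> float:
    """Convert throughput into average latency per generated token."""
    return 1000.0 / tokens_per_second


def seconds_for_reply(tokens_per_second: float, reply_tokens: int) -> float:
    """Time to generate a reply of the given length, ignoring prompt processing."""
    return reply_tokens / tokens_per_second


REPLY_TOKENS = 500  # hypothetical reply length, chosen only for illustration

for (gpu, fmt), speed in GENERATION_SPEED.items():
    print(
        f"{gpu} ({fmt}): {ms_per_token(speed):.1f} ms/token, "
        f"{seconds_for_reply(speed, REPLY_TOKENS):.1f} s for {REPLY_TOKENS} tokens"
    )
```

Seen this way, the gap between the two cards at 4-bit quantization amounts to a couple of milliseconds per token, which may or may not matter depending on how long your typical replies are.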

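The RTX 5000 Ada 32GB, however, is the only one of the two cards with an F16 result. Note that F16 is not a lower-accuracy format: it stores weights in 16-bit half precision, which preserves more numerical precision than 4-bit Q4_K_M. The cost is size and speed. The F16 weights of an 8B model take roughly 16GB, which likely explains why the 10GB 3080 has no F16 entry, and generation drops to 32.67 tokens/second because far more data must be read for every token.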

Here's a quick analogy: think of VRAM as a warehouse and memory bandwidth as the road leading out of it. The 3080 10GB has a small warehouse but a fast road, so a small load moves out quickly. The RTX 5000 Ada 32GB has a much bigger warehouse that can hold heavier loads, but its road is somewhat slower.

Important Note: Unfortunately, we don't have data for the Llama 3 70B models on either GPU. The most likely reason is memory: even at around 4 to 5 bits per weight, the weights of a 70B model need roughly 40GB, more than either card provides.

Processing Speed: A Different Perspective

While token generation is crucial, it's also important to consider processing speed. This measures how quickly the GPU works through the input prompt before generation begins. Because prompt tokens can be processed in parallel rather than one at a time, these figures are far higher than generation speeds.

| GPU | Model | Processing Speed (tokens/second) |
|---|---|---|
| 3080 10GB | Llama 3 8B Q4_K_M | 3557.02 |
| RTX 5000 Ada 32GB | Llama 3 8B Q4_K_M | 4467.46 |
| RTX 5000 Ada 32GB | Llama 3 8B F16 | 5835.41 |

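In terms of processing speed, the RTX 5000 Ada 32GB comes out ahead. On the one configuration both cards ran (Q4_K_M), it processes 4467.46 tokens/second against the 3080 10GB's 3557.02, about 26% faster. Prompt processing is typically limited by compute rather than memory bandwidth, so this result points to the greater compute throughput of the newer Ada architecture rather than to its larger memory. The card is faster still with F16 weights (5835.41 tokens/second).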
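Since one card wins at generation and the other at processing, which is faster overall depends on the shape of the workload. The sketch below combines the Q4_K_M figures from both tables into a rough latency estimate, assuming total time is simply prompt time plus generation time. That model, the function names, and the example workload are illustrative assumptions and ignore real-world overheads.

```python
# Llama 3 8B Q4_K_M speeds (tokens/second) copied from the two tables in this article.
SPEEDS = {
    "3080 10GB": {"prompt": 3557.02, "generation": 106.4},
    "RTX 5000 Ada 32GB": {"prompt": 4467.46, "generation": 89.87},
}


def estimated_seconds(gpu: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough latency: time to process the prompt plus time to generate the output."""
    s = SPEEDS[gpu]
    return prompt_tokens / s["prompt"] + output_tokens / s["generation"]


def break_even_ratio() -> float:
    """Prompt-to-output token ratio at which both GPUs take the same time."""
    a, b = SPEEDS["3080 10GB"], SPEEDS["RTX 5000 Ada 32GB"]
    # Seconds the RTX 5000 Ada saves per prompt token.
    prompt_gain = 1 / a["prompt"] - 1 / b["prompt"]
    # Seconds the RTX 5000 Ada loses per generated token.
    generation_loss = 1 / b["generation"] - 1 / a["generation"]
    return generation_loss / prompt_gain


for gpu in SPEEDS:
    # Hypothetical workload: 2,000-token prompt, 256-token reply.
    print(f"{gpu}: {estimated_seconds(gpu, 2000, 256):.2f} s")
print(f"Break-even prompt/output ratio: {break_even_ratio():.1f}")
```

Under this simplified model, the RTX 5000 Ada 32GB only pulls ahead when the prompt is roughly 30 times longer than the generated output, so chat-style workloads favor the 3080 10GB while long-document tasks with short answers favor the RTX 5000 Ada 32GB.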

Strengths and Weaknesses: A Balanced Perspective

NVIDIA 3080 10GB:

- Strengths:
  - Fast token generation at low precision: the quicker of the two on Llama 3 8B Q4_K_M (106.4 tokens/second).
  - Cost-effective: generally more budget-friendly than the RTX 5000 Ada 32GB.
- Weaknesses:
  - Limited memory capacity: 10GB may be insufficient for larger models or higher-precision formats such as F16.
  - Lower processing speed: about 20% slower than the RTX 5000 Ada 32GB at processing with Q4_K_M.

NVIDIA RTX 5000 Ada 32GB:

- Strengths:
  - Ample memory: 32GB can hold larger models, higher-precision weights such as Llama 3 8B F16, and multiple inference tasks.
  - High processing speed: the faster card for processing in our benchmarks.
  - Modern architecture: built on NVIDIA's Ada Lovelace architecture, which is newer and generally more power-efficient than the 3080's Ampere generation.
- Weaknesses:
  - Higher cost: more expensive than the 3080 10GB.
  - Slower token generation: trails the 3080 10GB at Q4_K_M (89.87 vs. 106.4 tokens/second), and running F16 for higher precision cuts generation to 32.67 tokens/second.

Performance Analysis: Decoding the Numbers

Here's a closer look at the data and a more nuanced analysis:

- Q4_K_M quantization: The 3080 10GB has a clear edge in token generation speed on the Q4_K_M-quantized Llama 3 8B model. If you prioritize token generation speed for smaller models, the 3080 10GB might be a good fit.

- F16 precision: Only the RTX 5000 Ada 32GB produced an F16 result, so there is no head-to-head comparison here. F16 keeps more numerical precision than Q4_K_M but generates tokens far more slowly (32.67 vs. 89.87 tokens/second on the same card). If you're willing to trade speed for precision, or need more than 10GB of VRAM, the RTX 5000 Ada 32GB is the better choice.

- Processing speed: The RTX 5000 Ada 32GB surpasses the 3080 10GB in processing speed on the shared Q4_K_M configuration. This makes it a strong contender for applications with long prompts, such as summarization or question answering over documents.

Let's bring in a real-world analogy to make this even clearer:

Imagine you're choosing a car. The 3080 10GB is like a lightweight, agile race car: it sprints off the line but doesn't have much cargo space. The RTX 5000 Ada 32GB is like a luxurious SUV: it's powerful and spacious but not as quick off the line.

Recommendations: Choosing the Right GPU for Your Needs

Now that we've explored the performance nuances, let's dive into specific use case recommendations:

- Budget-conscious users with smaller LLMs: For those with limited budgets who work with models like Llama 3 8B or smaller, the 3080 10GB offers a good balance of performance and cost. Its speed with Q4_K_M quantization makes it a viable option for applications that can tolerate the small accuracy loss that 4-bit quantization may introduce.

- Users with larger models and accuracy concerns: If your models or precision requirements exceed 10GB of VRAM, for example Llama 3 8B in F16, the RTX 5000 Ada 32GB is the way to go. Its ample memory and superior processing speed allow inference at higher precision. Keep in mind that we have no Llama 3 70B results, and a 70B model is unlikely to fit entirely in 32GB without very aggressive quantization or offloading part of the model to the CPU.

- Users prioritizing token generation speed for smaller models: If token generation speed is paramount and you're using a smaller model, the 3080 10GB can help you push the limits of text generation.

- Users with prompt-heavy or computationally intensive LLM tasks: For applications that feed the model long inputs and depend on fast processing, the RTX 5000 Ada 32GB is the superior choice.

Essentially, understanding the trade-offs between token generation speed, processing speed, cost, and accuracy will help you make the right decision for your LLM project.

FAQs: Addressing Common Questions

Q1: What is quantization and how does it impact performance?

A1: Quantization is a technique for reducing the size of LLMs and improving inference speed by lowering the precision of the numbers used to store the model's weights. Q4_K_M, a roughly 4-bit format, significantly reduces the model's memory footprint compared to F16, but it might slightly affect accuracy. F16 (16-bit half precision) maintains a higher level of accuracy but has much larger memory requirements.
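As a back-of-the-envelope illustration of that memory trade-off, the sketch below estimates the size of the weights alone as parameters times bits per weight. The parameter counts are the nominal "8B" and "70B" figures, and 4.85 bits per weight for Q4_K_M is an assumed approximate average, not a value from our benchmark data. Real files differ somewhat, and the KV cache and runtime buffers need extra room on top.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed values for illustration, not taken from the benchmark data.
MODELS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.0e9}  # nominal parameter counts
BITS = {"F16": 16.0, "Q4_K_M": 4.85}  # Q4_K_M bits/weight is an approximation
VRAM_GB = {"3080 10GB": 10, "RTX 5000 Ada 32GB": 32}

for model, params in MODELS.items():
    for fmt, bits in BITS.items():
        size = weight_memory_gb(params, bits)
        # A card "fits" here only in the loose sense that weights are smaller than VRAM.
        fits = [gpu for gpu, vram in VRAM_GB.items() if size < vram]
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, fits on: {fits or 'neither card'}")
```

Under these assumptions, the 8B model fits on both cards at Q4_K_M but only on the 32GB card at F16, and the 70B model exceeds both cards in either format.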

Q2: What are the other aspects to consider besides token generation and processing speed?

A2: Besides token generation and processing speed, other factors to consider include:

- Memory capacity: The size of your model, plus the context it keeps in memory at runtime, determines the required memory capacity.

- Power consumption: Different GPUs have varying power consumption levels.

- Cooling: Ensure your system has adequate cooling to prevent overheating.

- Software compatibility: Check if your chosen GPU is compatible with the software you're using for LLM inference.

Q3: Can I use these GPUs for training LLMs?

A3: While these GPUs are well suited to LLM inference, they are not typically used for training LLMs from scratch, which requires significantly more parallel processing power and memory. For that, you'd need data-center GPUs like the NVIDIA A100 or H100.

Keywords

LLMs, token generation speed, NVIDIA 3080 10GB, NVIDIA RTX 5000 Ada 32GB, Llama 3, Q4_K_M quantization, F16, GPU performance, processing speed, model inference, hardware recommendations, benchmark analysis, memory capacity, power consumption, cooling, software compatibility, LLM training.