NVIDIA 3080 10GB vs. NVIDIA 4080 16GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Welcome to the exciting world of Large Language Models (LLMs) and the quest for the perfect hardware setup! It's like choosing the right ingredients for a delicious AI recipe - if you want your models to cook up some amazing text, you need the right tools. Today, we're diving into the heart of this techie kitchen with two powerful GPUs from NVIDIA: the 3080_10GB and the 4080_16GB. We'll be putting these graphics titans through their paces to see who reigns supreme in the realm of token generation speed.

Think of token generation like the speed of a writer - the faster they can churn out words, the quicker you get your story. In this case, we're talking about LLMs spitting out text, code, or any creative output you can dream up. So, fasten your seatbelts and get ready to witness a GPU showdown!

Comparing NVIDIA 3080_10GB and NVIDIA 4080_16GB for Token Generation Speed

NVIDIA 3080_10GB vs. NVIDIA 4080_16GB: A Quick Overview

Before we dive into the numbers, let's get a quick grasp of the players in this game:

- NVIDIA 3080_10GB: This GPU is a powerhouse, offering a robust 10GB of memory and impressive performance for its generation. It's been a popular choice for gaming and various creative tasks.

- NVIDIA 4080_16GB: This newcomer boasts 16GB of memory, 6GB more than the 3080_10GB. It's built on NVIDIA's newer Ada Lovelace architecture (the 3080 uses the earlier Ampere architecture), making it a serious contender for the crown of speed and efficiency.

Benchmarking Results: Llama 3 Models

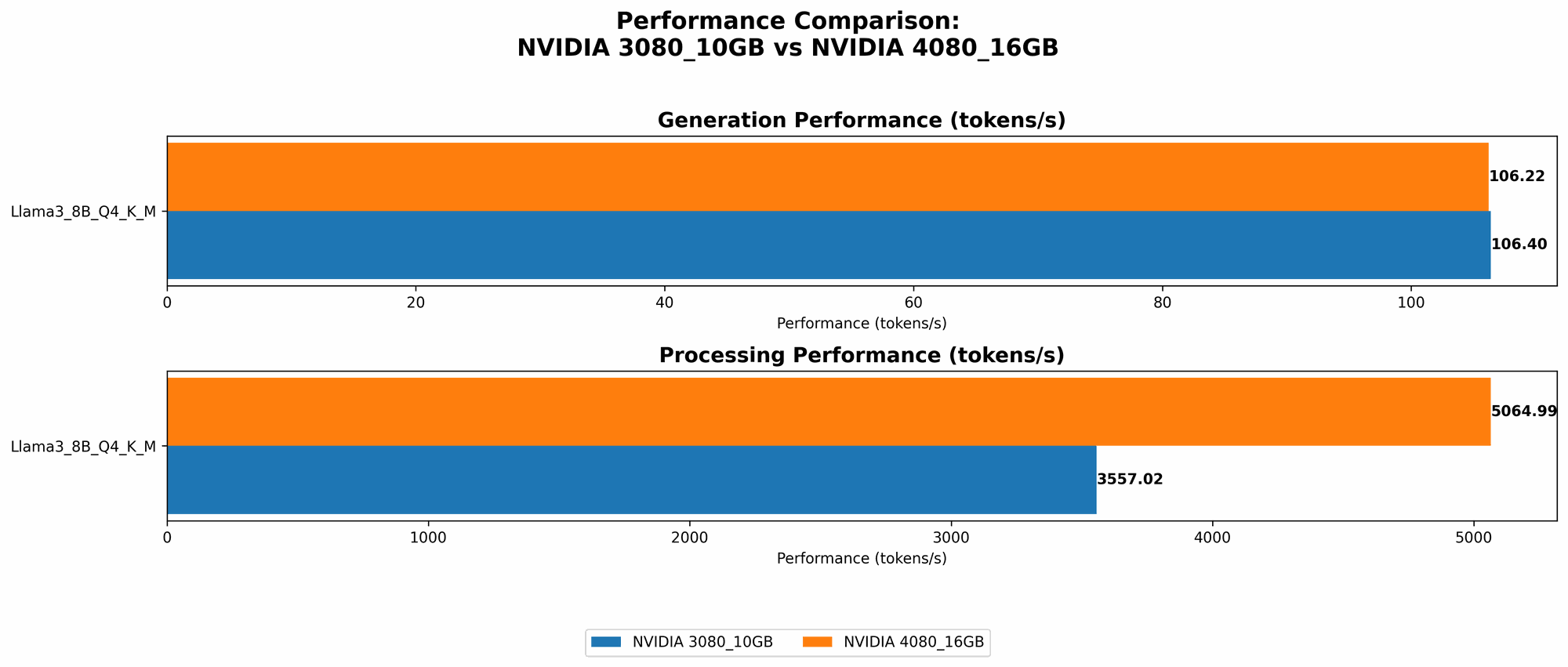

To get a clear picture of performance, we're looking at tokens per second (tokens/sec) - think of this as the language model's writing speed. For our benchmarks, we'll be using the popular Llama 3 model, focusing on the 8B variant in both quantized (Q4_K_M) and half-precision floating-point (F16) formats.

Here's a breakdown of the results:

| Model | NVIDIA 3080_10GB (tokens/sec) | NVIDIA 4080_16GB (tokens/sec) |
|---|---|---|
| Llama3 8B Q4_K_M Generation | 106.4 | 106.22 |
| Llama3 8B F16 Generation | N/A | 40.29 |
| Llama3 8B Q4_K_M Processing | 3557.02 | 5064.99 |
| Llama3 8B F16 Processing | N/A | 6758.9 |
| Llama3 70B Q4_K_M Generation | N/A | N/A |
| Llama3 70B F16 Generation | N/A | N/A |
| Llama3 70B Q4_K_M Processing | N/A | N/A |
| Llama3 70B F16 Processing | N/A | N/A |
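To make the gaps in the table easier to read, here is a minimal Python sketch that turns the two columns into a 4080-to-3080 ratio for each row. The figures are copied from the table; the `results` dictionary and the `speedup` helper are names made up for this illustration, and rows with an N/A value are reported as not comparable rather than guessed.

```python
# Tokens/sec figures copied from the benchmark table; None stands for "N/A".
# Tuple order: (3080_10GB, 4080_16GB).
results = {
    "Llama3 8B Q4_K_M Generation": (106.4, 106.22),
    "Llama3 8B F16 Generation": (None, 40.29),
    "Llama3 8B Q4_K_M Processing": (3557.02, 5064.99),
    "Llama3 8B F16 Processing": (None, 6758.9),
}


def speedup(rtx3080, rtx4080):
    """Return the 4080/3080 throughput ratio, or None if either value is missing."""
    if rtx3080 is None or rtx4080 is None:
        return None
    return rtx4080 / rtx3080


ratios = {name: speedup(a, b) for name, (a, b) in results.items()}

for name, ratio in ratios.items():
    label = "no comparison possible" if ratio is None else f"{ratio:.3f}x"
    print(f"{name}: {label}")
```

A ratio close to 1.0 means the two cards are effectively tied on that row; a ratio above 1.0 favors the 4080_16GB.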

Key Highlights:

- Surprising Similarity in Q4 Generation: The 3080_10GB and the 4080_16GB deliver nearly identical token generation speeds for the Llama3 8B model in quantized format (106.4 vs. 106.22 tokens/sec). The difference is well under 1% and plausibly within the margin of error, indicating the 3080 holds its own against the newer 4080.

- F16 Runs Only on the 4080: The picture changes at F16 precision (unquantized, 16 bits per weight). The 4080_16GB generates 40.29 tokens/sec, while the 3080_10GB has no F16 result at all, most likely because the F16 weights of an 8B model do not fit in 10GB of memory. This is less a speed win than a question of whether the model runs at all.

- Faster Prompt Processing: The 4080_16GB processes Q4 prompts at 5064.99 tokens/sec against 3557.02 for the 3080_10GB, roughly 42% faster. No F16 comparison is possible because the 3080_10GB has no F16 result. Prompt processing tends to be limited by compute rather than memory capacity, so this gap likely reflects the 4080's newer architecture more than its extra 6GB.

- No Data for Llama 70B: The benchmark data doesn't offer information on the performance of either GPU with Llama 3 70B. This means we're unable to compare the performance of these two GPUs for larger LLMs. It's possible that further research and testing would reveal the strengths of each GPU for these models.

Performance Analysis: Unpacking the Numbers

Let's delve a bit deeper into the performance analysis, understanding the implications of these numbers.

Quantization: The Art of Making Models Lighter

Imagine you're trying to squeeze a large painting into a small suitcase. You need to find ways to make it smaller without losing too much detail. That's essentially what quantization does for LLMs. It stores the model's weights with fewer bits each, without sacrificing too much accuracy, so the model needs less memory and often runs faster. "Q4_K_M" names a specific 4-bit quantization format.
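To give a feel for what "fewer bits" means, here is a deliberately simplified Python sketch of 4-bit quantization: one block of weights shares a single scale, and each weight is rounded to a small signed integer. This is an illustration only and is not the Q4_K_M scheme itself, which is more elaborate; the function names and the sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Round floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]

quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized integers:", quantized)
print(f"shared scale: {scale:.4f}")
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, at the cost of a rounding error of at most half the scale. That trade is the "small suitcase" in the analogy above.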

Generation vs. Processing: The Two Phases of LLM Work

Think of it like this: the LLM is like a chef. It has to prepare the ingredients (processing) and then cook the meal (generation).

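- Generation: This is where the LLM actually produces the output, one token at a time. It's the final step where you see the results, and it's the number that decides how fast text appears on screen.

- Processing: This is the "behind-the-scenes" work where the LLM reads your whole prompt before it writes anything. Because the prompt tokens can be handled in parallel, processing speeds are far higher than generation speeds.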
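The two phases combine into a rough response time: prompt length divided by processing speed, plus output length divided by generation speed. The sketch below applies that arithmetic to the Llama3 8B Q4 figures from the benchmark table. The workload of 1000 prompt tokens and 500 output tokens is a hypothetical example, and the estimate ignores model loading and any other overhead.

```python
def estimate_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Return (prompt_seconds, generation_seconds) for one request."""
    prompt_seconds = prompt_tokens / processing_tps
    generation_seconds = output_tokens / generation_tps
    return prompt_seconds, generation_seconds


# Llama3 8B Q4 throughput in tokens/sec, copied from the benchmark table.
gpus = {
    "3080_10GB": {"processing": 3557.02, "generation": 106.4},
    "4080_16GB": {"processing": 5064.99, "generation": 106.22},
}

# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 1000
OUTPUT_TOKENS = 500

totals = {}
for name, tps in gpus.items():
    prompt_s, gen_s = estimate_latency(
        PROMPT_TOKENS, OUTPUT_TOKENS, tps["processing"], tps["generation"]
    )
    totals[name] = prompt_s + gen_s
    print(f"{name}: prompt {prompt_s:.2f}s + generation {gen_s:.2f}s = {totals[name]:.2f}s")
```

For a workload like this one, generation dominates the total, so the faster prompt processing of the 4080_16GB changes the end-to-end time only slightly. Its advantage grows as prompts get longer relative to the output.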

The Battle for Speed

In the Q4 generation battle, the 3080_10GB and 4080_16GB are practically neck-and-neck, showing that the 3080_10GB is still a formidable contender.

The F16 rows tell a different story: only the 4080_16GB produced results there, so no head-to-head comparison is possible. Its 16GB of memory lets it load models that the 3080_10GB cannot, and its Q4 prompt processing is roughly 42% faster (5064.99 vs. 3557.02 tokens/sec). That makes it the better choice for models that need more memory or for workloads with long prompts.

Practical Recommendations: Choosing the Right GPU for LLMs

So, which GPU is right for you? It depends on your needs:

- 3080_10GB: If you're focused on smaller, quantized models in Q4 precision, the 3080_10GB is a solid choice. It's a more budget-friendly option that can still deliver excellent performance.

- 4080_16GB: If you're working with larger models, need F16 precision, or want the most processing power, the 4080_16GB is the clear champion. Its larger memory and architecture deliver significant advantages.
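A quick way to sanity-check these recommendations is to estimate how much memory the model weights alone would need. The Python sketch below does that under loudly stated assumptions: nominal parameter counts of 8 and 70 billion, about 4.85 bits per weight for Q4_K_M (an approximation, not a figure from the benchmark), and card memory treated as 10 and 16 GiB. It ignores the KV cache and runtime overhead, so real requirements are higher.

```python
GIB = 1024 ** 3

# Assumptions, not benchmark data: nominal parameter counts and
# approximate average bits per weight for each format.
PARAMS = {"8B": 8e9, "70B": 70e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}
VRAM_GIB = {"3080_10GB": 10, "4080_16GB": 16}


def weights_gib(model, fmt):
    """Estimate the size of the weights alone, in GiB."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / GIB


def fits(model, fmt, gpu):
    """True if the weights alone are smaller than the card's memory."""
    return weights_gib(model, fmt) < VRAM_GIB[gpu]


for model in PARAMS:
    for fmt in BITS_PER_WEIGHT:
        size = weights_gib(model, fmt)
        verdict = ", ".join(
            f"{gpu}: {'fits' if fits(model, fmt, gpu) else 'too large'}"
            for gpu in VRAM_GIB
        )
        print(f"Llama3 {model} {fmt}: ~{size:.1f} GiB of weights ({verdict})")
```

Under these assumptions, the combinations flagged as too large line up with the N/A entries in the benchmark table, although the benchmark itself does not state why those entries are missing.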

Beyond the Benchmark:

- Cost: The 4080_16GB will likely have a higher price tag, so consider your budget. The 3080_10GB might be a more cost-effective choice for casual experimentation or working with smaller models.

- Power Consumption: The 4080_16GB might consume more power than the 3080_10GB, so factor in power consumption if you're concerned about energy efficiency.

FAQ: Answering Your Burning Questions

What is an LLM?

A Large Language Model (LLM) is a type of Artificial Intelligence (AI) that can understand and generate human-like text. It's trained on massive datasets of text and code, enabling it to perform tasks like writing stories, translating languages, and even generating computer code.

What is tokenization?

Tokenization is the process of breaking down text into smaller units called "tokens." These tokens are like words, but they can also be punctuation or even parts of words. LLMs use tokenization to process and generate text, creating and understanding the flow of language.
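As a rough illustration of the idea, the Python sketch below splits a sentence into word and punctuation tokens with a regular expression. It is a toy, not the tokenizer Llama 3 uses: real LLM tokenizers rely on learned subword vocabularies and would also break some words into pieces. The `toy_tokenize` name and the sample sentence are made up for the example.

```python
import re


def toy_tokenize(text):
    """Split text into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = toy_tokenize("GPUs generate tokens, not words!")
print(tokens)
print(f"{len(tokens)} tokens")
```

Counting tokens this way also shows why tokens/sec is not the same as words/sec: the comma and the exclamation mark each count as a token.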

How does quantization affect LLM performance?

Quantization is a way to make LLMs smaller and faster. It involves representing the model's data with fewer bits, which reduces its storage space and processing requirements. While it does sometimes sacrifice a bit of accuracy, it can significantly improve performance, especially for smaller systems with limited resources.

Should I always go for the latest GPU?

Not necessarily! While the 4080_16GB boasts advanced features, it's not always the best choice. If you're working with smaller models, the 3080_10GB could be a good alternative, offering great performance at a lower cost. It's all about finding the right balance based on your specific needs.

Keywords

LLM, Large Language Model, NVIDIA, 3080, 10GB, 4080, 16GB, GPU, Token Generation Speed, Benchmark, Performance, Quantization, Q4_K_M, F16, Llama 3, Llama 8B, Llama 70B, Generation, Processing, Speed, Cost, Power Consumption, AI, Tokenization, Accuracy, Memory, Data, Budget