NVIDIA 3080 10GB for LLM Inference: Performance and Value

Introduction

The world of Large Language Models (LLMs) is exploding, bringing the power of artificial intelligence to our fingertips. LLMs are becoming increasingly popular for tasks like text generation, translation, and even code writing. However, running these models locally can be resource-intensive, requiring powerful hardware to achieve optimal performance.

This article explores how the NVIDIA GeForce RTX 3080 10GB handles LLM inference on a local machine. We'll look at its measured performance with Llama 3 models and at the trade-offs between speed and resource consumption, so you can decide whether this card fits your local AI setup.

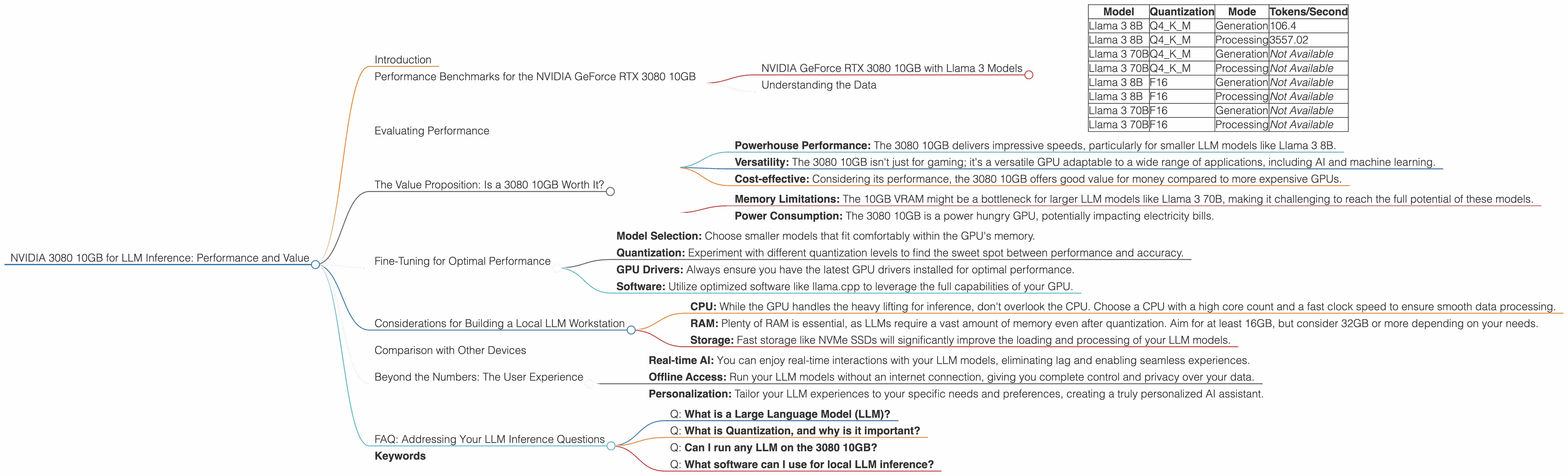

Performance Benchmarks for the NVIDIA GeForce RTX 3080 10GB

The NVIDIA GeForce RTX 3080 10GB is best known as a gaming GPU, but it also handles LLM inference well for models that fit in its 10GB of VRAM. We'll focus on generation and prompt-processing speeds, both measured in tokens per second.

NVIDIA GeForce RTX 3080 10GB with Llama 3 Models

The Llama 3 series is a popular choice for local inference due to its balance of size and capability. We'll examine the performance of the 3080 10GB with Llama 3 8B and Llama 3 70B models:

| Model | Quantization | Mode | Tokens/Second |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | Generation | 106.4 |
| Llama 3 8B | Q4_K_M | Processing | 3557.02 |
| Llama 3 70B | Q4_K_M | Generation | Not Available |
| Llama 3 70B | Q4_K_M | Processing | Not Available |
| Llama 3 8B | F16 | Generation | Not Available |
| Llama 3 8B | F16 | Processing | Not Available |
| Llama 3 70B | F16 | Generation | Not Available |
| Llama 3 70B | F16 | Processing | Not Available |

_Note: Figures are available only for Llama 3 8B in its 4-bit quantized form. No data is available for Llama 3 8B at F16, or for Llama 3 70B in either format._

Understanding the Data

With 10GB of VRAM, the 3080 is currently best suited to smaller models like Llama 3 8B.

Quantization: "Q4_K_M" is one of llama.cpp's 4-bit quantization formats. Quantization stores the model's weights at lower precision, which shrinks the model at a small cost in accuracy. This is crucial for fitting a model onto a GPU with limited memory.
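To make the memory argument concrete, here is a rough sketch of how much space the weights alone take at different precisions. It is an estimate under stated assumptions, not a measurement: the parameter counts are nominal (8 billion and 70 billion), and 4.85 bits per weight for Q4_K_M is an approximate average rather than an exact figure. The function name `weight_size_gb` is ours, chosen for illustration.

```python
GB = 1e9  # decimal gigabytes

VRAM_GB = 10.0  # RTX 3080 10GB


def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB."""
    return n_params * bits_per_weight / 8 / GB


# (label, nominal parameter count, bits per weight)
# Assumption: ~4.85 bits/weight for Q4_K_M is an approximation.
CASES = [
    ("Llama 3 8B  Q4_K_M", 8e9, 4.85),
    ("Llama 3 8B  F16", 8e9, 16.0),
    ("Llama 3 70B Q4_K_M", 70e9, 4.85),
    ("Llama 3 70B F16", 70e9, 16.0),
]

for label, n_params, bpw in CASES:
    size = weight_size_gb(n_params, bpw)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only the 4-bit 8B model fits in VRAM. That is one plausible reason the other rows in the table have no data, although the benchmark source does not say so explicitly.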

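Generation vs. Processing: "Generation" is the speed at which the model produces new text, one token at a time. "Processing" is the speed at which the model reads the input prompt before it starts generating. Prompt tokens can be processed in parallel, which is why the processing figure is so much higher than the generation figure.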

Evaluating Performance

The data shows that the NVIDIA GeForce RTX 3080 10GB runs the Llama 3 8B model at impressive speed: 106.4 tokens/second for generation and 3557.02 tokens/second for processing. In practice, prompts are read almost instantly and replies arrive faster than most people can read them.
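The two throughput figures can be combined into a rough end-to-end response time. The sketch below assumes the rates from the table stay constant regardless of prompt length and ignores model load time and sampling overhead, so treat the result as a ballpark only. The function name and the example token counts are illustrative.

```python
PROMPT_TOKENS_PER_S = 3557.02     # "Processing" row, Llama 3 8B 4-bit
GENERATION_TOKENS_PER_S = 106.4   # "Generation" row, Llama 3 8B 4-bit


def response_time_s(prompt_tokens: int, output_tokens: int) -> float:
    """Estimated seconds to read the prompt and then generate the reply."""
    prefill = prompt_tokens / PROMPT_TOKENS_PER_S
    decode = output_tokens / GENERATION_TOKENS_PER_S
    return prefill + decode


# Hypothetical request: a 1000-token prompt and a 200-token reply.
print(f"{response_time_s(1000, 200):.2f} s")
```

For this hypothetical request the estimate comes to roughly 2.2 seconds, almost all of it spent generating the reply rather than reading the prompt.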

The Value Proposition: Is a 3080 10GB Worth It?

The NVIDIA GeForce RTX 3080 10GB offers a compelling value proposition for those venturing into local LLM inference:

Pros:

- Powerhouse Performance: The 3080 10GB delivers impressive speeds, particularly for smaller LLM models like Llama 3 8B.

- Versatility: The 3080 10GB isn't just for gaming; it's a versatile GPU adaptable to a wide range of applications, including AI and machine learning.

- Cost-effective: Considering its performance, the 3080 10GB offers good value for money compared to more expensive GPUs.

Cons:

- Memory Limitations: 10GB of VRAM is the main constraint. Larger models like Llama 3 70B do not fit entirely in GPU memory even at 4-bit quantization, so this card cannot run them at full GPU speed.

- Power Consumption: The 3080 10GB is a power-hungry GPU, which can add to your electricity bill.
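To put the power consumption point in perspective, the sketch below estimates the energy used per generated token. It assumes the GPU draws its full rated board power of 320 W while generating, which is an upper bound since real draw during inference may be lower. It also ignores the rest of the system, and it uses a hypothetical electricity price of $0.15 per kWh.

```python
BOARD_POWER_W = 320.0            # rated board power; assumed as a worst case
GENERATION_TOKENS_PER_S = 106.4  # Llama 3 8B 4-bit, from the table
PRICE_PER_KWH = 0.15             # hypothetical price in dollars

JOULES_PER_KWH = 3.6e6


def joules_per_token(power_w: float, tokens_per_s: float) -> float:
    """Energy spent per generated token, in joules."""
    return power_w / tokens_per_s


def cost_per_million_tokens(power_w: float, tokens_per_s: float,
                            price_per_kwh: float) -> float:
    """Electricity cost of generating one million tokens."""
    seconds = 1_000_000 / tokens_per_s
    kwh = power_w * seconds / JOULES_PER_KWH
    return kwh * price_per_kwh


print(f"{joules_per_token(BOARD_POWER_W, GENERATION_TOKENS_PER_S):.2f} J/token")
cost = cost_per_million_tokens(BOARD_POWER_W, GENERATION_TOKENS_PER_S, PRICE_PER_KWH)
print(f"${cost:.2f} per million generated tokens")
```

Under these assumptions the GPU's electricity cost is small per token. The bill matters mainly if the card runs under load for many hours a day.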

Tuning for Optimal Performance

To unlock the full potential of your 3080 10GB and make your LLM inference experience smoother, consider these optimizations:

- Model Selection: Choose smaller models that fit comfortably within the GPU's memory.

- Quantization: Experiment with different quantization levels to find the sweet spot between performance and accuracy.

- GPU Drivers: Always ensure you have the latest GPU drivers installed for optimal performance.

- Software: Utilize optimized software like llama.cpp to leverage the full capabilities of your GPU.
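"Fits comfortably" means more than the weights fitting: the key/value cache grows with context length and shares the same VRAM. The sketch below estimates that cache for Llama 3 8B and checks a total against the 10GB budget. The architecture figures (32 layers, 8 key/value heads, head dimension 128) come from the published Llama 3 8B configuration, not from this article. The 16-bit cache, the 1 GB overhead allowance, and the weight sizes passed in are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KVShape:
    n_layers: int
    n_kv_heads: int
    head_dim: int


# Llama 3 8B attention layout (from the published model config).
LLAMA3_8B = KVShape(n_layers=32, n_kv_heads=8, head_dim=128)


def kv_cache_bytes(shape: KVShape, n_ctx: int, bytes_per_value: int = 2) -> int:
    """Bytes for keys and values across all layers at a given context length."""
    per_token = 2 * shape.n_layers * shape.n_kv_heads * shape.head_dim * bytes_per_value
    return per_token * n_ctx


def fits(weights_gb: float, shape: KVShape, n_ctx: int,
         vram_gb: float = 10.0, overhead_gb: float = 1.0) -> bool:
    """True if weights + KV cache + a fixed overhead allowance fit in VRAM."""
    total_gb = weights_gb + kv_cache_bytes(shape, n_ctx) / 1e9 + overhead_gb
    return total_gb <= vram_gb


# Assumed weight sizes: ~4.85 GB for the 4-bit model, ~16 GB for F16.
print(kv_cache_bytes(LLAMA3_8B, 8192) / 1e9, "GB of KV cache at 8192 tokens")
print("4-bit fits:", fits(4.85, LLAMA3_8B, 8192))
print("F16 fits:  ", fits(16.0, LLAMA3_8B, 8192))
```

A check like this is only a first filter. Actual memory use depends on the software, batch size, and cache precision, so it is worth confirming with a real run.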

Considerations for Building a Local LLM Workstation

If you're looking to create a powerful LLM workstation using a 3080 10GB, here are some key considerations:

- CPU: While the GPU handles the heavy lifting for inference, don't overlook the CPU. Choose a CPU with a high core count and a fast clock speed to ensure smooth data processing.

- RAM: Plenty of RAM is essential, as LLMs require a vast amount of memory even after quantization. Aim for at least 16GB, but consider 32GB or more depending on your needs.

- Storage: Fast storage like an NVMe SSD shortens model load times, which matters because model files run to several gigabytes each.

Comparison with Other Devices

While we are focusing on the NVIDIA GeForce RTX 3080 10GB, let's briefly look at its performance relative to other popular devices:

Apple M1 Max: The Apple M1 Max performs well in LLM inference, and its unified memory (up to 64GB) lets it load models that do not fit in 10GB of VRAM. For models that do fit, the 3080 10GB might still offer an advantage in speed.

AMD Radeon RX 6900 XT: With 16GB of VRAM, the RX 6900 XT can hold larger models or longer contexts than the 3080 10GB. However, the 3080 10GB might offer better performance for smaller models.

Beyond the Numbers: The User Experience

The impact of the 3080 10GB for local LLM inference extends beyond just the numbers. Imagine:

- Real-time AI: At over 100 tokens/second with Llama 3 8B, responses stream with little perceptible lag, which makes interactive use feel seamless.

- Offline Access: Run your LLM models without an internet connection, giving you complete control and privacy over your data.

- Personalization: Tailor your LLM experiences to your specific needs and preferences, creating a truly personalized AI assistant.

FAQ: Addressing Your LLM Inference Questions

Q: What is a Large Language Model (LLM)?

A: LLMs are complex AI models trained on massive datasets of text, allowing them to understand and generate human-like text. They are the brains behind many AI applications, from chatbots to creative writing tools.

Q: What is Quantization, and why is it important?

A: Quantization is a technique that reduces the size of an LLM by storing its weights at lower precision, without sacrificing much accuracy. It's like saving a photo as a compressed JPEG: a little detail is lost, but the file becomes much smaller. Quantization enables us to run larger models on devices with limited memory, like the 3080 10GB.

Q: Can I run any LLM on the 3080 10GB?

A: No. While the 3080 10GB is powerful, it will struggle with very large LLMs. A model runs at full speed only if its weights and working memory fit within the card's 10GB of VRAM, which depends on the model's size, its quantization level, and the context length you use.

Q: What software can I use for local LLM inference?

A: Several options are available, each with its pros and cons. A popular choice is llama.cpp, which runs quantized models on both CPUs and GPUs and can offload some or all of a model's layers to the GPU.
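As a starting point, the sketch below assembles a llama.cpp command line that offloads the model's layers to the GPU. The flags `-m` (model file), `-ngl` (number of layers to offload), `-n` (tokens to generate), and `-p` (prompt) are llama.cpp options. The binary name `llama-cli` is an assumption that depends on your build (older builds name it `main`), and the model path is hypothetical.

```python
import shlex


def llama_cli_command(model_path: str, prompt: str, n_gpu_layers: int = 99,
                      n_predict: int = 128, binary: str = "llama-cli") -> list[str]:
    """Build an argument list for a llama.cpp command-line run."""
    return [
        binary,
        "-m", model_path,            # path to the GGUF model file
        "-ngl", str(n_gpu_layers),   # layers to offload; a large value offloads all
        "-n", str(n_predict),        # number of tokens to generate
        "-p", prompt,                # prompt text
    ]


# Hypothetical model path; substitute the file you downloaded.
cmd = llama_cli_command("models/llama-3-8b.Q4_K_M.gguf",
                        "Explain quantization in one sentence.")
print(shlex.join(cmd))
```

The printed line can be pasted into a shell, or the list can be passed to `subprocess.run(cmd)` to launch it from Python.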

Keywords

NVIDIA GeForce RTX 3080 10GB, LLM inference, local AI, Llama 3, performance, benchmarking, GPU, VRAM, quantization, value, processing, generation, tokens, LLM workstation, AMD Radeon RX 6900 XT, Apple M1 Max, user experience, real-time AI, offline access, personalization, FAQ, software options, llama.cpp