NVIDIA 3070 8GB vs. NVIDIA RTX 6000 Ada 48GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

Large Language Models (LLMs) are booming, and they are transforming the way we interact with information and technology. To harness their full potential, we need hardware that can handle their demanding computational requirements. This article focuses on two popular GPUs, the NVIDIA GeForce RTX 3070 with 8GB of memory and the NVIDIA RTX 6000 Ada with a whopping 48GB of memory, and compares their token generation speed when running LLMs.

Imagine a world where you can have a conversation with a machine that understands you and responds in a way that feels natural. That's the magic of LLMs, and to turn this magic into reality, we need the right tools, like powerful GPUs.

Comparing the Titans: NVIDIA 3070 8GB and NVIDIA RTX 6000 Ada 48GB

NVIDIA 3070 8GB - The Budget-Friendly Powerhouse

The NVIDIA GeForce RTX 3070 8GB, a popular choice for gamers and creators, also has a place in the LLM world. Though a budget-friendly option compared to its high-end counterparts, it can still handle smaller LLMs with reasonable efficiency. Its 8GB of GDDR6 memory, while enough for smaller quantized models, is not sufficient for larger ones.

NVIDIA RTX 6000 Ada 48GB - The Big Game Changer

On the other end of the spectrum, we have the NVIDIA RTX 6000 Ada, a beast in the GPU world, boasting an impressive 48GB of GDDR6 memory. This massive memory capacity makes it a top choice for handling large LLMs that eat up memory like Pac-Man gobbles dots.

Benchmark Analysis: Unveiling the Token Generation Speed Champions

Let's dive into the numbers! We'll analyze the token generation speeds of the 3070 8GB and RTX 6000 Ada 48GB GPUs for different LLM configurations, specifically the Llama 3 model in its 8B and 70B parameter sizes. We'll also look at two weight formats, 4-bit Q4_K_M quantization and 16-bit F16, to see how they influence performance.

Understanding Quantization and its Impact

A lot of people are familiar with how GPUs work with graphics, but what is quantization in the context of LLMs? Imagine you have a very detailed image and need to compress it without losing too much information. Quantization does something similar for LLMs, reducing the precision of weights and activations, thereby shrinking the model's size and memory footprint, which makes it more efficient.

Think of Q4_K_M, a 4-bit format, as using very few colors to represent the image, while F16, a 16-bit floating-point format, keeps many more colors and a more detailed picture. This trade-off between detail (precision) and size (memory) is what quantization is all about.
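To make the idea concrete, here is a minimal Python sketch of 4-bit quantization. It assumes a simple symmetric "absmax" scheme applied to a single block of weights, which is chosen for illustration and is not the actual Q4_K_M format; the function names and sample weights are hypothetical.

```python
def quantize_block(weights, bits=4):
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1                 # largest code, 7 for 4 bits
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, standing in for one small block of a model layer.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.47]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

# Storage cost: 16 bits per weight for F16 versus 4 bits per weight
# plus one 16-bit scale for the whole block.
bits_f16 = len(weights) * 16
bits_q4 = len(weights) * 4 + 16

print("codes:", codes)
print("max round-trip error:", round(max_error, 4))
print("bits used:", bits_f16, "(F16) vs", bits_q4, "(4-bit block)")
```

The round-trip error never exceeds half of the scale factor, which is the "lost detail" in the image analogy, while the block shrinks to well under half its F16 size.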

Token Generation Speed Comparison: Breaking it Down

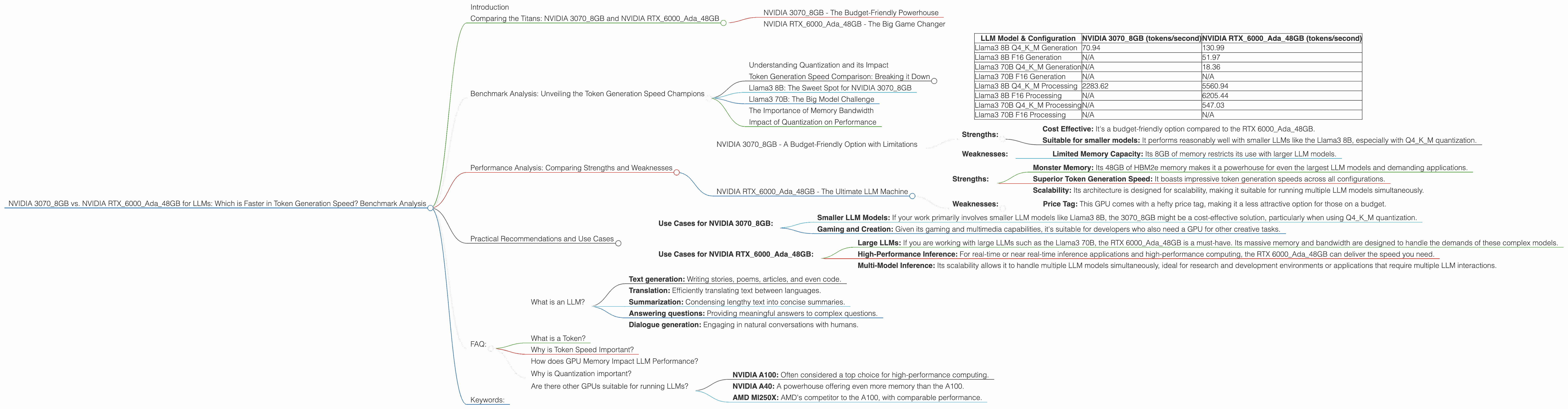

The following table summarizes the speeds (tokens/second) for different LLMs and configurations running on the two GPUs. "Generation" rows report how quickly new tokens are produced, while "Processing" rows report how quickly the input prompt is processed. Note that some combinations are marked N/A because either the data is unavailable or the model cannot run on the specific device.

| LLM Model & Configuration | NVIDIA 3070_8GB (tokens/second) | NVIDIA RTX6000Ada_48GB (tokens/second) |

|---|---|---|

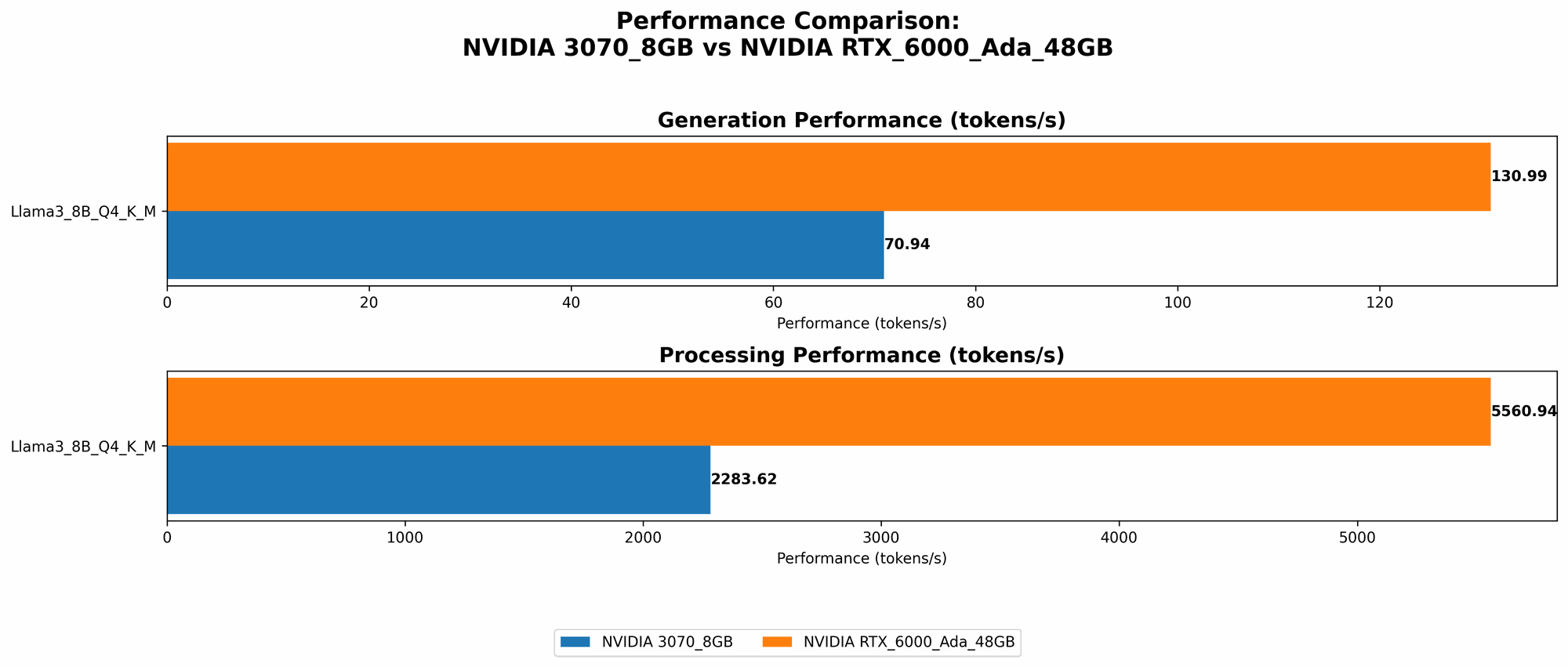

| Llama3 8B Q4KM Generation | 70.94 | 130.99 |

| Llama3 8B F16 Generation | N/A | 51.97 |

| Llama3 70B Q4KM Generation | N/A | 18.36 |

| Llama3 70B F16 Generation | N/A | N/A |

| Llama3 8B Q4KM Processing | 2283.62 | 5560.94 |

| Llama3 8B F16 Processing | N/A | 6205.44 |

| Llama3 70B Q4KM Processing | N/A | 547.03 |

| Llama3 70B F16 Processing | N/A | N/A |

Llama3 8B: The Sweet Spot for NVIDIA 3070 8GB

For the Llama3 8B model, the NVIDIA 3070 8GB performs surprisingly well with Q4_K_M quantization, the only configuration in this comparison for which it has results. It achieves a token generation speed of 70.94 tokens/second, proving its capability to handle smaller LLMs effectively.

The NVIDIA RTX 6000 Ada 48GB, however, outperforms the 3070 8GB in the one configuration both cards can run. It reaches 130.99 tokens/second with Q4_K_M, about 1.85 times the speed of the 3070 8GB, and it also generates 51.97 tokens/second with F16, a configuration with no result on the 3070 8GB. This difference highlights the advantage of the RTX 6000 Ada 48GB in memory capacity, memory bandwidth, and processing power for LLM inference.
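The size of the gap on the shared configuration can be computed directly from the table figures. The short Python sketch below does only that arithmetic; the dictionary layout and variable names are illustrative choices, not part of the benchmark.

```python
# Tokens/second from the table: (3070 8GB, RTX 6000 Ada 48GB).
# Only Llama3 8B Q4_K_M has results for both cards.
results = {
    "Llama3 8B Q4_K_M Generation": (70.94, 130.99),
    "Llama3 8B Q4_K_M Processing": (2283.62, 5560.94),
}

# Speedup of the RTX 6000 Ada 48GB relative to the 3070 8GB.
speedups = {
    name: ada / rtx3070 for name, (rtx3070, ada) in results.items()
}

for name, factor in speedups.items():
    print(f"{name}: {factor:.2f}x")
```

The advantage is larger for prompt processing than for generation, which suggests the two phases stress the hardware differently.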

Llama3 70B: The Big Model Challenge

The picture changes considerably when we move to the massive Llama3 70B model. The 3070 8GB cannot handle the 70B model because of its limited memory capacity, which makes it unsuitable for LLMs of this size.

The RTX 6000 Ada 48GB, with its generous 48GB of memory, shines in this scenario. Although the token generation speed drops to 18.36 tokens/second with Q4_K_M quantization, it can run the model at all, which the 3070 8GB cannot. The F16 version of the 70B model, however, has no result on either card.
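A rough weights-only estimate helps explain the N/A entries in the table. The Python sketch below assumes nominal parameter counts (8 billion and 70 billion) and an average of about 4.8 bits per weight for Q4_K_M; both are approximations introduced here, and the estimate ignores the KV cache and runtime overhead, so real requirements are somewhat higher.

```python
# Assumed average bits per weight (the Q4_K_M value is an approximation).
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}

# Nominal parameter counts (assumed round numbers).
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Memory capacity of each card in GB.
VRAM_GB = {"3070 8GB": 8, "RTX 6000 Ada 48GB": 48}


def weight_gb(model, fmt):
    """Approximate size of the model weights in GB."""
    return PARAMS[model] * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(model, fmt, gpu):
    """True if the weights alone are smaller than the card's memory."""
    return weight_gb(model, fmt) < VRAM_GB[gpu]


for model in PARAMS:
    for fmt in BITS_PER_WEIGHT:
        size = weight_gb(model, fmt)
        verdict = {gpu: fits(model, fmt, gpu) for gpu in VRAM_GB}
        print(f"{model} {fmt}: ~{size:.1f} GB, fits: {verdict}")
```

Under these assumptions the estimate matches the table: only Llama3 8B Q4_K_M fits on the 3070 8GB, and Llama3 70B F16 fits on neither card.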

The Importance of Memory Bandwidth

We see a clear pattern here: memory bandwidth is crucial for the speed of token generation. The RTX 6000 Ada 48GB, with its faster GDDR6 memory and higher bandwidth, generates tokens almost twice as fast as the 3070 8GB on Llama3 8B Q4_K_M. For larger models like Llama3 70B, capacity comes first: the model has to fit in memory before bandwidth can help.
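A common rule of thumb makes this pattern quantitative: when generating one token at a time, the GPU has to read roughly all of the model weights for each token, so tokens/second cannot exceed memory bandwidth divided by weight size. The Python sketch below applies that bound. The bandwidth figures (448 GB/s and 960 GB/s) are taken from published specifications rather than from this benchmark, and the weight sizes assume nominal parameter counts with about 4.8 bits per weight for Q4_K_M, so treat the output as a rough ceiling and not a prediction.

```python
# Peak memory bandwidth in GB/s (assumed from published spec sheets).
BANDWIDTH_GB_S = {"3070 8GB": 448, "RTX 6000 Ada 48GB": 960}

# Approximate weight sizes in GB: parameters x bits per weight / 8.
WEIGHT_GB = {
    "Llama3 8B Q4_K_M": 8e9 * 4.8 / 8 / 1e9,
    "Llama3 8B F16": 8e9 * 16 / 8 / 1e9,
    "Llama3 70B Q4_K_M": 70e9 * 4.8 / 8 / 1e9,
}

# Measured generation speeds from the table (tokens/second).
MEASURED = [
    ("3070 8GB", "Llama3 8B Q4_K_M", 70.94),
    ("RTX 6000 Ada 48GB", "Llama3 8B Q4_K_M", 130.99),
    ("RTX 6000 Ada 48GB", "Llama3 8B F16", 51.97),
    ("RTX 6000 Ada 48GB", "Llama3 70B Q4_K_M", 18.36),
]


def bandwidth_ceiling(gpu, model):
    """Upper bound on tokens/second if every token reads all weights once."""
    return BANDWIDTH_GB_S[gpu] / WEIGHT_GB[model]


for gpu, model, measured in MEASURED:
    ceiling = bandwidth_ceiling(gpu, model)
    share = measured / ceiling
    print(f"{gpu:18s} {model:18s} measured {measured:7.2f}  "
          f"ceiling {ceiling:6.1f}  ({share:.0%} of ceiling)")
```

Every measured speed falls below its estimated ceiling, and the ordering of the ceilings matches the ordering of the measurements, which is consistent with generation being limited mainly by memory bandwidth.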

Impact of Quantization on Performance

The choice of quantization technique plays a crucial role in LLM performance as well. For the Llama 3 8B, we see a significant performance difference between Q4_K_M and F16.

On the RTX 6000 Ada 48GB, Q4_K_M generates tokens about 2.5 times as fast as F16 (130.99 vs. 51.97 tokens/second). F16 can offer better accuracy, though it demands more memory.

Performance Analysis: Comparing Strengths and Weaknesses

NVIDIA 3070 8GB - A Budget-Friendly Option with Limitations

- Strengths:
  - Cost Effective: It's a budget-friendly option compared to the RTX 6000 Ada 48GB.
  - Suitable for smaller models: It performs reasonably well with smaller LLMs like the Llama3 8B when using Q4_K_M quantization.
- Weaknesses:
  - Limited Memory Capacity: Its 8GB of memory restricts its use with larger LLMs.

NVIDIA RTX 6000 Ada 48GB - The Ultimate LLM Machine

- Strengths:
  - Monster Memory: Its 48GB of GDDR6 memory makes it a powerhouse for large LLMs such as Llama3 70B at Q4_K_M, as well as other demanding applications.
  - Superior Token Generation Speed: It posts the fastest token generation speed in every configuration tested here.
  - Scalability: Its large memory leaves room to keep several smaller LLMs loaded at once.
- Weaknesses:
  - Price Tag: This GPU comes with a hefty price tag, making it a less attractive option for those on a budget.

Practical Recommendations and Use Cases

- Use Cases for NVIDIA 3070 8GB:
  - Smaller LLMs: If your work primarily involves smaller LLMs like Llama3 8B, the 3070 8GB might be a cost-effective solution, particularly when using Q4_K_M quantization.
  - Gaming and Creation: Given its gaming and multimedia capabilities, it's suitable for developers who also need a GPU for other creative tasks.

- Use Cases for NVIDIA RTX 6000 Ada 48GB:
  - Large LLMs: If you are working with large LLMs such as the Llama3 70B, the RTX 6000 Ada 48GB is the only one of these two cards that can run them. Its large memory and high bandwidth suit the demands of these models.
  - High-Performance Inference: For real-time or near real-time inference applications, the RTX 6000 Ada 48GB can deliver the speed you need.
  - Multi-Model Inference: Its memory capacity allows it to hold multiple LLMs simultaneously, ideal for research and development environments or applications that require multiple LLM interactions.

FAQ:

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence (AI) model trained on massive datasets of text. This training allows it to understand and generate human-like language, enabling it to perform a wide range of tasks such as:

- Text generation: Writing stories, poems, articles, and even code.

- Translation: Efficiently translating text between languages.

- Summarization: Condensing lengthy text into concise summaries.

- Answering questions: Providing meaningful answers to complex questions.

- Dialogue generation: Engaging in natural conversations with humans.

What is a Token?

In natural language processing (NLP), a token is a basic unit of text that a model reads or writes. It could be a word, part of a word, a punctuation mark, or even a special character.

For example, the sentence "I love cats" can be broken down into three tokens: "I", "love", "cats".
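The split in this example can be reproduced with a few lines of Python. The sketch below uses a deliberately naive word-and-punctuation tokenizer, which is an assumption made for illustration; the function name is hypothetical, and real LLM tokenizers typically use learned sub-word vocabularies that can split text differently.

```python
import re


def naive_tokenize(text):
    """Split text into runs of word characters and single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


tokens = naive_tokenize("I love cats")
print(tokens, len(tokens))

# Punctuation becomes its own token under this scheme.
print(naive_tokenize("Hello, world!"))
```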

Why is Token Speed Important?

Token speed, the rate at which a GPU can process tokens, is crucial for the performance of LLMs. The faster the token speed, the quicker an LLM can generate text, answer questions, and perform other tasks. Think of it like typing speed - the faster you type, the more words you can write per minute.
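To see what these speeds mean in practice, the Python sketch below converts the measured generation speeds into waiting time for a response. The 500-token response length is an arbitrary assumption, and the estimate ignores the time spent processing the prompt.

```python
# Measured generation speeds from the benchmark table (tokens/second).
GENERATION_SPEED = {
    "3070 8GB, Llama3 8B Q4_K_M": 70.94,
    "RTX 6000 Ada 48GB, Llama3 8B Q4_K_M": 130.99,
    "RTX 6000 Ada 48GB, Llama3 70B Q4_K_M": 18.36,
}

RESPONSE_TOKENS = 500  # assumed response length


def seconds_to_generate(tokens, tokens_per_second):
    """Time needed to generate a given number of tokens."""
    return tokens / tokens_per_second


for setup, speed in GENERATION_SPEED.items():
    wait = seconds_to_generate(RESPONSE_TOKENS, speed)
    print(f"{setup}: {wait:.1f} s for {RESPONSE_TOKENS} tokens")
```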

How does GPU Memory Impact LLM Performance?

GPU memory is like the GPU's desk space. The more memory you have, the more of the model and its working data the GPU can keep within reach and process at a time.

LLMs demand a lot of memory, especially large models. If you're trying to run a massive model on a GPU with limited memory, it will be like trying to fit a whole library into a small suitcase - it just won't work.

Why is Quantization important?

Quantization is a technique that reduces the size of an LLM while preserving most of its accuracy. It does this by reducing the precision of the numbers used to represent the model's parameters and activations. Think of it as compressing a large file without losing too much detail.

Are there other GPUs suitable for running LLMs?

Yes, there are many other GPUs suited for running LLMs, each with its strengths and weaknesses. Some popular choices include:

- NVIDIA A100: Often considered a top choice for high-performance computing.

- NVIDIA A40: A data-center card with 48GB of memory, more than the 40GB A100 but less than the 80GB A100.

- AMD MI250X: AMD's competitor to the A100, with comparable performance.

Keywords:

NVIDIA 3070 8GB, NVIDIA RTX 6000 Ada 48GB, LLM, Large Language Model, token generation speed, Llama 3, Llama 8B, Llama 70B, quantization, Q4_K_M, F16, memory bandwidth, GPU, performance comparison, benchmarking, AI, machine learning, natural language processing, NLP, inference, processing, token, token speed, GPU memory, GPU memory capacity, GPU price, cost-effective, practical recommendations, use cases, FAQ, LLM model, GPU model, AMD, NVIDIA