NVIDIA RTX 3070 8GB vs. NVIDIA RTX 5000 Ada 32GB for LLMs: Which Generates Tokens Faster? Benchmark Analysis

Introduction

In the fascinating world of Large Language Models (LLMs), speed is king. The faster your hardware can process and generate text, the more enjoyable and efficient your interaction with these AI marvels becomes. Today, we're pitting two GPUs against each other for running LLMs: the consumer NVIDIA GeForce RTX 3070 8GB and the professional workstation NVIDIA RTX 5000 Ada Generation 32GB.

Imagine you're running an assistant like ChatGPT on your own machine. Every reply is produced one token at a time, and the GPU has to hold the entire model in its memory while doing it. This is where the right GPU comes in.

We'll compare these two GPUs on token generation speed, a key metric for LLM inference. An LLM works with tokens: chunks of text that each represent a word or part of a word. Token generation is the model producing its output one new token at a time, each token chosen based on everything that came before it. Think of it like assembling a sentence from LEGO bricks, one brick per step.
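The one-token-at-a-time loop can be sketched in Python. This is a toy, not how any real LLM is implemented: `toy_next_token` is a hypothetical stand-in for the model's forward pass, and tokens are whole words for readability, whereas real tokenizers often split words into sub-word pieces. What matters is the shape of the loop: one model call per new token, each seeing everything produced so far, which is why generation speed is measured per token.

```python
from typing import Callable, List


def generate(next_token: Callable[[List[str]], str], prompt: List[str],
             max_new_tokens: int, eos: str = "<eos>") -> List[str]:
    """Greedy autoregressive loop: every step sees all tokens produced so far."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tok = next_token(tokens)  # in a real LLM: one full forward pass per new token
        if tok == eos:            # the model signals that the reply is finished
            break
        tokens.append(tok)
    return tokens[len(prompt):]   # return only the newly generated tokens


# Hypothetical stand-in for a model: a lookup table keyed on the last token.
TOY_NEXT = {"The": "cat", "cat": "sat", "sat": "on", "on": "the",
            "the": "mat", "mat": "<eos>"}


def toy_next_token(tokens: List[str]) -> str:
    return TOY_NEXT.get(tokens[-1], "<eos>")


print(generate(toy_next_token, ["The"], max_new_tokens=10))
```

Running it prints `['cat', 'sat', 'on', 'the', 'mat']`: five sequential steps, one per token.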

Get ready for some geeky fun as we explore the results, dive into the technical details, and ultimately, help you choose the ideal GPU for your LLM adventures.

Performance Analysis: NVIDIA GeForce RTX 3070 8GB vs. NVIDIA RTX 5000 Ada 32GB

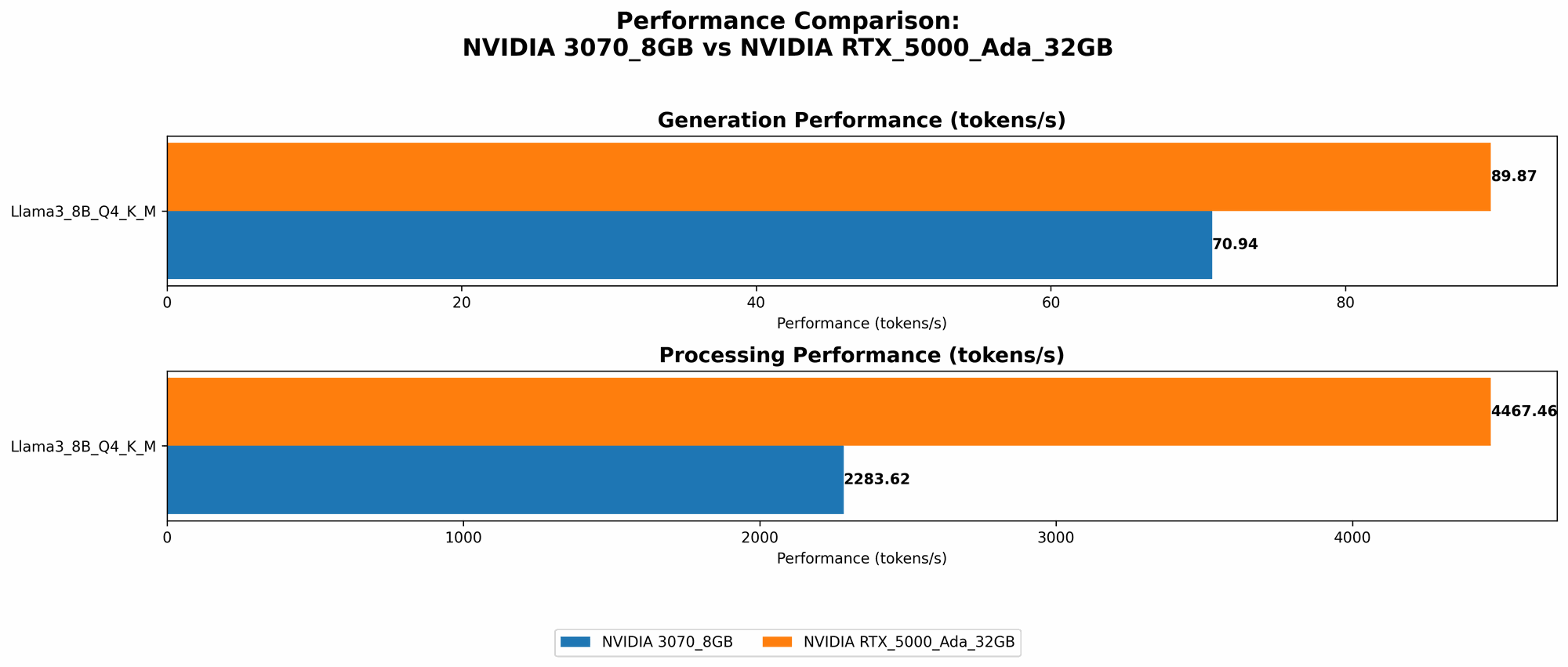

Comparing Token Generation Speed

Let's start with the key metric, token generation speed, measured in tokens per second (tokens/sec). It reflects how fast a GPU can produce new output text with a given model.
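The metric itself is simple: tokens produced divided by wall-clock seconds. Below is a minimal timing sketch; `generate_fn` and `fake_generate` are hypothetical placeholders, not any real library's API, and real benchmark tools also do warm-up runs and repeated measurements, which this omits.

```python
import time
from typing import Callable


def tokens_per_second(generate_fn: Callable[[int], int], n_tokens: int) -> float:
    """Time one generation call and return generated tokens per second.

    generate_fn is a hypothetical callable: it is asked for n_tokens new
    tokens and returns how many it actually produced.
    """
    start = time.perf_counter()
    produced = generate_fn(n_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(n: int) -> int:
    """Stand-in 'model' that spends at least 10 ms on each token."""
    for _ in range(n):
        time.sleep(0.01)
    return n


rate = tokens_per_second(fake_generate, 20)
print(f"{rate:.1f} tokens/sec")  # at most ~100, since each token takes >= 10 ms
```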

| Model | NVIDIA GeForce RTX 3070 8GB (tokens/sec) | NVIDIA RTX 5000 Ada 32GB (tokens/sec) |
|---|---|---|
| Llama 3 8B - Q4_K_M | 70.94 | 89.87 |
| Llama 3 8B - F16 | N/A | 32.67 |
| Llama 3 70B - Q4_K_M | N/A | N/A |
| Llama 3 70B - F16 | N/A | N/A |

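As the table shows, the NVIDIA RTX 5000 Ada 32GB generates tokens about 27% faster than the NVIDIA GeForce RTX 3070 8GB with Llama 3 8B Q4_K_M (89.87 vs. 70.94 tokens/sec). The RTX 3070 has no F16 result, and neither card has a Llama 3 70B result; N/A means no result was reported, most likely because the model does not fit in that card's VRAM.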
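To put the gap in numbers, this short sketch computes the speedup from the token generation figures in the table above. The dictionary simply copies those values, with `None` standing in for N/A.

```python
from typing import Optional

# Token generation results from the table (tokens/sec): (RTX 3070, RTX 5000 Ada).
GENERATION = {
    "Llama 3 8B Q4_K_M": (70.94, 89.87),
    "Llama 3 8B F16": (None, 32.67),
}


def speedup(baseline: Optional[float], candidate: Optional[float]) -> Optional[float]:
    """Return candidate / baseline, or None if either result is missing."""
    if baseline is None or candidate is None:
        return None
    return candidate / baseline


for model, (rtx3070, rtx5000) in GENERATION.items():
    s = speedup(rtx3070, rtx5000)
    print(model, "n/a (no RTX 3070 result)" if s is None else f"{s:.2f}x")
```

For Q4_K_M this gives about 1.27x; for F16 there is no ratio, because the RTX 3070 has nothing to compare against.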

What is Quantization?

Just as you can hold a liquid in containers of different sizes (a pint glass for milk, a bathtub for a bath), you can store the weights of a neural network such as an LLM at different sizes, or precisions. Quantization means using smaller containers: storing each weight in fewer bits, which makes the model smaller and often faster, at some cost in accuracy. Q4_K_M is a roughly 4-bit quantization format from the llama.cpp (GGUF) ecosystem, applied here to Llama 3 8B. F16 is 16-bit half-precision floating point; it is less a quantization scheme than a high-precision baseline, using half the storage of the standard 32-bit F32 format.
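To make the idea concrete, here is a simplified 4-bit block quantizer: a block of weights is stored as one scale factor plus small integers in [-8, 7]. This is an illustrative sketch, not the actual Q4_K_M algorithm, which uses a more elaborate layout with per-sub-block scales and minimums; the sample weights are made up.

```python
from typing import List, Tuple


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Symmetric 4-bit quantization: one float scale plus ints in [-8, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest weight maps to +/-7
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate weights by multiplying back by the scale."""
    return [scale * v for v in q]


weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.49, -0.02, 0.28]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"max error: {max_err:.4f} (step size {scale:.4f})")
```

Each weight's rounding error is at most half a step (`scale / 2`). Storage drops from 8 × 16 = 128 bits in F16 to 8 × 4 = 32 bits plus one scale; real formats use larger blocks so the scale's cost is spread over more weights.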

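The RTX 5000 Ada 32GB's most decisive advantage is in F16: it is the only one of the two cards that can run Llama 3 8B at that precision at all, because roughly 16GB of F16 weights fit in its 32GB of VRAM but not in the RTX 3070's 8GB. On the same card, F16 generation (32.67 tokens/sec) is much slower than Q4_K_M (89.87 tokens/sec), since each new token requires reading several times more weight data. Its lead with Q4_K_M likely comes from the newer Ada Lovelace architecture and higher memory bandwidth (576 GB/s vs. 448 GB/s).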
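A back-of-the-envelope check shows why the N/A entries appear. Weight memory is roughly parameters × bits per weight ÷ 8 bytes. The parameter counts (about 8.03B and 70.6B) and the ~4.85 average bits per weight for Q4_K_M are approximate figures assumed here, not values from the benchmark, and the estimate ignores the KV cache and runtime overhead, so real requirements are somewhat higher.

```python
# Approximate figures assumed for illustration (not from the benchmark).
PARAMS_BILLION = {"Llama 3 8B": 8.03, "Llama 3 70B": 70.6}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}  # Q4_K_M average is approximate
VRAM_GB = {"RTX 3070": 8, "RTX 5000 Ada": 32}


def weights_gb(params_billion: float, bits: float) -> float:
    """Weights-only size in GB (1 GB = 1e9 bytes): params * bits / 8."""
    return params_billion * bits / 8


def fitting_gpus(size_gb: float) -> list:
    """GPUs whose VRAM exceeds the weights alone (optimistic: no KV cache)."""
    return [gpu for gpu, vram in VRAM_GB.items() if size_gb < vram]


for model, params in PARAMS_BILLION.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bits)
        fits = fitting_gpus(size)
        print(f"{model} {fmt}: ~{size:.1f} GB -> fits on: {', '.join(fits) or 'neither'}")
```

The estimate (about 4.9 GB, 16.1 GB, 42.8 GB and 141.2 GB) reproduces the pattern of N/A entries in the tables: only Llama 3 8B Q4_K_M fits on both cards, and neither card can hold Llama 3 70B.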

Processing Speed: A Deeper Dive

Token generation is just part of the story.

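Let's look at processing speed, which in LLM benchmarks usually means prompt processing: how quickly the GPU reads in the input prompt before it starts generating a reply. Because the prompt's tokens can be processed in parallel rather than one at a time, these figures are far higher than the generation speeds.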

| Model | NVIDIA GeForce RTX 3070 8GB (tokens/sec) | NVIDIA RTX 5000 Ada 32GB (tokens/sec) |
|---|---|---|
| Llama 3 8B - Q4_K_M | 2283.62 | 4467.46 |
| Llama 3 8B - F16 | N/A | 5835.41 |
| Llama 3 70B - Q4_K_M | N/A | N/A |
| Llama 3 70B - F16 | N/A | N/A |

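Again, the NVIDIA RTX 5000 Ada 32GB leads, processing Llama 3 8B Q4_K_M prompts nearly twice as fast as the RTX 3070 8GB (4467.46 vs. 2283.62 tokens/sec, about 1.96x). Its F16 figure (5835.41 tokens/sec) is even higher than its Q4_K_M one, likely because this phase is limited by compute rather than memory, so the smaller quantized weights bring no benefit and must first be unpacked.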
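The two tables combine into a rough estimate of how long a reply takes: prompt tokens divided by processing speed, plus reply tokens divided by generation speed. This sketch assumes the processing figures measure prompt ingestion, that both speeds stay constant, and a hypothetical 512-token prompt with a 128-token reply; in practice generation slows somewhat as the context grows.

```python
def response_time_s(prompt_tokens: int, new_tokens: int,
                    pp_tps: float, tg_tps: float) -> float:
    """Rough estimate: prompt ingestion time + sequential generation time."""
    return prompt_tokens / pp_tps + new_tokens / tg_tps


# Llama 3 8B Q4_K_M results from the two tables (tokens/sec).
GPUS = {
    "RTX 3070 8GB": {"pp": 2283.62, "tg": 70.94},
    "RTX 5000 Ada 32GB": {"pp": 4467.46, "tg": 89.87},
}

for name, r in GPUS.items():
    t = response_time_s(512, 128, r["pp"], r["tg"])
    print(f"{name}: ~{t:.2f} s for a 512-token prompt + 128 new tokens")
```

Under these assumptions the RTX 5000 Ada is about 1.3x faster end to end (roughly 1.54 s vs. 2.03 s): its 2x prompt-processing lead matters less than its 1.27x generation lead, because generating the reply takes most of the time.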

Strengths and Weaknesses: A Fair Comparison

NVIDIA GeForce RTX 3070 8GB:

Strengths:

- More Affordable: The NVIDIA GeForce RTX 3070 8GB is a budget-friendly option for those looking to dip their toes into the world of LLM inference.

Weaknesses:

- Limited Memory: 8GB of VRAM rules out larger models and higher-precision formats; in these tests it could not run Llama 3 8B in F16.

- Slower Than RTX 5000 Ada 32GB: It trails the RTX 5000 Ada 32GB in both token generation and prompt processing.

NVIDIA RTX 5000 Ada 32GB:

Strengths:

- Powerful Performance: Faster token generation and prompt processing than the RTX 3070 8GB, and it can run Llama 3 8B in F16.

- More Memory: 32GB of VRAM leaves room for larger models and higher-precision formats, though not enough for Llama 3 70B in these tests.

Weaknesses:

- Expensive: As a professional workstation card, the RTX 5000 Ada 32GB costs several times as much as the RTX 3070 8GB.

Choosing the Right GPU for Your Needs

For Budget-Minded Users:

If you're new to LLMs or working with smaller models, the NVIDIA GeForce RTX 3070 8GB offers solid performance for the price, especially with 4-bit quantized models around 8B parameters. However, its 8GB of VRAM limits which models and formats you can run.

For Performance Enthusiasts:

The NVIDIA RTX 5000 Ada 32GB is the better choice for users who prioritize speed and want more memory headroom. On Llama 3 8B Q4_K_M, expect roughly 27% faster token generation and about twice the prompt processing speed, plus the ability to run the model in F16.

Think About Your Use Cases:

- Research and Development: The NVIDIA RTX 5000 Ada 32GB is a good choice for experimenting with models and precisions that don't fit in 8GB of VRAM.

- Personal Use: The NVIDIA GeForce RTX 3070 8GB could be sufficient for simple tasks like generating text or translating languages.

- Production Environment: If you need to run LLMs in a production environment, the RTX 5000 Ada 32GB offers more throughput and memory headroom.

FAQ: Your LLM and GPU Questions Answered

Q: What are the biggest factors to consider when choosing a GPU for LLMs?

A: The primary factors for choosing the right GPU for LLMs are:

- Memory: More memory (VRAM) is essential for larger models. Think of it like a bigger desk for your LLM to work on.

- Compute Performance and Memory Bandwidth: Higher compute speeds up prompt processing, while token generation is largely limited by how fast the GPU can read the model's weights from memory.

- Cost: Balance your needs with your budget.

Q: How does quantization affect LLM performance?

A: Quantization is like using smaller containers for the numbers that make up your LLM: each weight is stored in fewer bits. It makes the model more compact and can speed up inference, at some cost in output quality.

Q: Do I need a high-end GPU for running LLMs?

A: The GPU you need depends on the complexity and size of the LLM. Smaller models might run fine on a mid-range GPU, while larger models will require more powerful hardware.

Q: What's the difference between token generation and processing speed?

A: Token generation speed measures how fast the GPU produces new output tokens, one at a time. Processing speed (prompt processing) measures how fast it reads in the input prompt, which can be done for many tokens in parallel and is therefore much faster.

Q: What are some other good GPUs for running LLMs?

A: Besides the ones we discussed, other popular choices include the NVIDIA GeForce RTX 40 Series, the AMD Radeon RX 7000 Series, and the NVIDIA A100.
