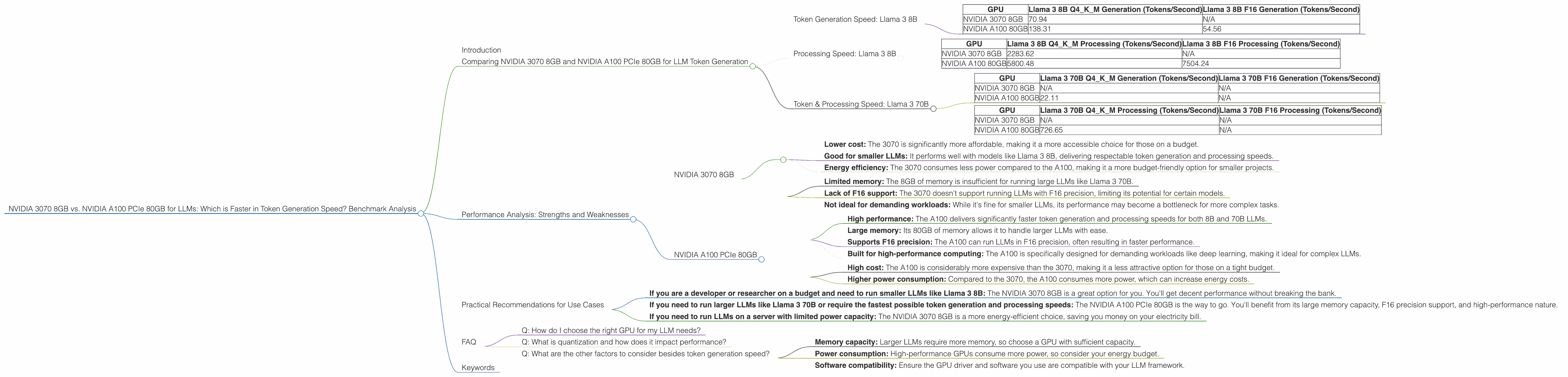

NVIDIA 3070 8GB vs. NVIDIA A100 PCIe 80GB for LLMs: Which is Faster in Token Generation Speed? Benchmark Analysis

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models constantly being released and pushing the boundaries of what's possible.

These powerful AI systems are capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. But running these models requires significant computing power, and choosing the right hardware can make a big difference in performance and cost efficiency.

This article dives into the performance of two popular GPUs – the NVIDIA GeForce RTX 3070 8GB and the NVIDIA A100 PCIe 80GB – when it comes to running LLMs. We'll analyze their token generation speed, explore their strengths and weaknesses, and help you choose the best GPU for your needs.

Comparing NVIDIA 3070 8GB and NVIDIA A100 PCIe 80GB for LLM Token Generation

The NVIDIA GeForce RTX 3070 8GB is a popular gaming graphics card that's also a solid option for running smaller LLMs. The NVIDIA A100 PCIe 80GB, on the other hand, is a high-end data center GPU designed for demanding workloads like deep learning.

Let's see how these GPUs compare on token generation speed for different LLMs.

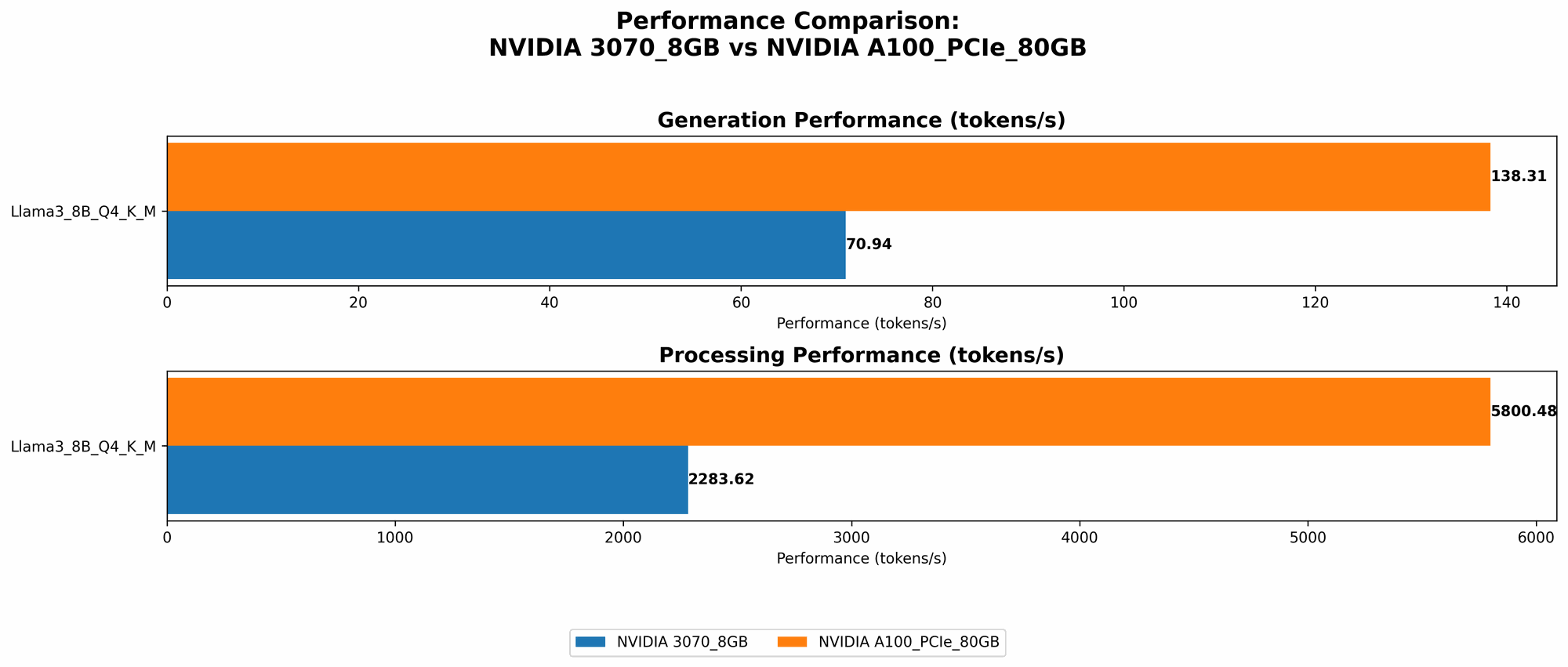

Token Generation Speed: Llama 3 8B

Llama 3 8B is a popular LLM known for its impressive performance and accessibility. Let's analyze its token generation speed on both GPUs.

| GPU | Llama 3 8B Q4KM Generation (Tokens/Second) | Llama 3 8B F16 Generation (Tokens/Second) |
|---|---|---|
| NVIDIA 3070 8GB | 70.94 | N/A |
| NVIDIA A100 PCIe 80GB | 138.31 | 54.56 |

The NVIDIA A100 PCIe 80GB clearly outperforms the NVIDIA 3070 8GB in token generation speed for Llama 3 8B, offering almost double the speed when using the Q4KM quantization.

The F16 column is empty for the 3070 because the unquantized model does not fit in its 8GB of memory: at 16 bits per weight, an 8B-parameter model needs roughly 16GB for the weights alone. The A100 has room for it, but at 54.56 tokens/second its F16 generation is much slower than its own Q4KM result (138.31) and also slower than the 3070 running Q4KM (70.94).
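The ratios behind this comparison can be checked with a few lines of Python. This is a minimal sketch that only restates the table values; the `speedup` helper and the dictionary layout are illustrative names, not part of any benchmark tool.

```python
# Tokens/second for Llama 3 8B generation, copied from the table above.
generation_tps = {
    ("NVIDIA 3070 8GB", "Q4KM"): 70.94,
    ("NVIDIA A100 PCIe 80GB", "Q4KM"): 138.31,
    ("NVIDIA A100 PCIe 80GB", "F16"): 54.56,
}


def speedup(candidate_tps: float, baseline_tps: float) -> float:
    """Return how many times faster the candidate is than the baseline."""
    return candidate_tps / baseline_tps


# Use the 3070 running Q4KM as the reference point.
baseline = generation_tps[("NVIDIA 3070 8GB", "Q4KM")]

for (gpu, quant), tps in generation_tps.items():
    ratio = speedup(tps, baseline)
    print(f"{gpu:22s} {quant:5s} {tps:7.2f} tok/s  {ratio:.2f}x vs 3070 Q4KM")
```

A ratio above 1 means the configuration generates tokens faster than the 3070 with Q4KM; a ratio below 1 means it is slower.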

Think about it like this: The A100 is like a race car built for speed, while the 3070 is a quick sports car. Both are fast, but the race car can handle higher performance and more complex tasks.

Processing Speed: Llama 3 8B

Beyond token generation, let's also look at processing speed: how quickly each GPU works through the input prompt before it starts generating output. Together with generation speed, this determines how long a full response takes.

| GPU | Llama 3 8B Q4KM Processing (Tokens/Second) | Llama 3 8B F16 Processing (Tokens/Second) |
|---|---|---|
| NVIDIA 3070 8GB | 2283.62 | N/A |
| NVIDIA A100 PCIe 80GB | 5800.48 | 7504.24 |

The NVIDIA A100 again takes the lead, processing about 2.5 times as many tokens per second as the 3070 with Q4KM quantization (5800.48 vs. 2283.62). On the A100, F16 processing is faster still (7504.24), even though F16 token generation is much slower than Q4KM generation (54.56 vs. 138.31 tokens/second).

Faster processing shortens the wait before the first token appears, which matters most when prompts are long.
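To see how the two speeds combine into a response time, here is a rough back-of-the-envelope sketch. It assumes the processing figures measure how fast the input prompt is read in, that both rates stay constant regardless of prompt length, and that there is no other overhead. The function name and the 1000-token prompt with a 200-token answer are hypothetical values chosen for illustration.

```python
def estimated_response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimate total time as prompt-reading time plus token-generation time."""
    prompt_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return prompt_time + generation_time


# (processing tokens/s, generation tokens/s) for Llama 3 8B, from the tables.
configs = {
    "3070 Q4KM": (2283.62, 70.94),
    "A100 Q4KM": (5800.48, 138.31),
    "A100 F16": (7504.24, 54.56),
}

# Hypothetical workload: 1000-token prompt, 200-token answer.
for name, (processing_tps, generation_tps) in configs.items():
    seconds = estimated_response_seconds(1000, 200, processing_tps, generation_tps)
    print(f"{name:10s} ~{seconds:.2f} s")
```

For short prompts and long answers the generation term dominates, so the configuration with the highest generation speed finishes first even if another one reads the prompt faster.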

Token & Processing Speed: Llama 3 70B

Let's now shift our attention to a larger LLM - Llama 3 70B. This behemoth model boasts a massive number of parameters, presenting a greater challenge for hardware.

| GPU | Llama 3 70B Q4KM Generation (Tokens/Second) | Llama 3 70B F16 Generation (Tokens/Second) |
|---|---|---|
| NVIDIA 3070 8GB | N/A | N/A |
| NVIDIA A100 PCIe 80GB | 22.11 | N/A |

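The NVIDIA 3070 8GB lacks the memory capacity to run Llama 3 70B, even with Q4KM quantization. The A100 can run the Q4KM version, but at 22.11 tokens/second its generation is roughly six times slower than with the 8B model (138.31). No F16 result is available for the A100 either: at 16 bits per weight, a 70B-parameter model needs about 140GB for its weights, well beyond the card's 80GB.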
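The pattern of N/A entries can be approximated with a simple memory estimate. The sketch below rests on several assumptions: parameter counts are rounded to 8 and 70 billion, Q4KM is taken to average about 4.8 bits per weight (an assumed figure, not one from the benchmark), and 1GB of headroom stands in for the KV cache and activations. The helper names are illustrative.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits(gpu_memory_gb: float, needed_gb: float, headroom_gb: float = 1.0) -> bool:
    """True if the weights plus an assumed headroom fit in GPU memory."""
    return needed_gb + headroom_gb <= gpu_memory_gb


gpus = {"NVIDIA 3070 8GB": 8, "NVIDIA A100 PCIe 80GB": 80}
# Nominal parameter counts in billions.
models = {"Llama 3 8B": 8, "Llama 3 70B": 70}
# Bits per weight; the Q4KM value is an assumed average.
formats = {"Q4KM": 4.8, "F16": 16}

for gpu, memory_gb in gpus.items():
    for model, params in models.items():
        for fmt, bits in formats.items():
            needed = weight_memory_gb(params, bits)
            verdict = "fits" if fits(memory_gb, needed) else "does not fit"
            print(f"{gpu:22s} {model:12s} {fmt:5s} ~{needed:6.1f} GB  {verdict}")
```

Under these assumptions, the combinations that do not fit are the same ones marked N/A in the tables.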

Let's also look at the processing speed for this larger model:

| GPU | Llama 3 70B Q4KM Processing (Tokens/Second) | Llama 3 70B F16 Processing (Tokens/Second) |
|---|---|---|
| NVIDIA 3070 8GB | N/A | N/A |
| NVIDIA A100 PCIe 80GB | 726.65 | N/A |

At 726.65 tokens/second, the A100 processes Llama 3 70B about eight times slower than the 8B model (5800.48), but it can still run a model the 3070 cannot load at all.

Performance Analysis: Strengths and Weaknesses

Let's take a closer look at the strengths and weaknesses of both devices regarding their performance with LLMs:

NVIDIA 3070 8GB

Strengths:

- Lower cost: The 3070 is significantly more affordable, making it a more accessible choice for those on a budget.

- Good for smaller LLMs: It performs well with models like Llama 3 8B, delivering respectable token generation and processing speeds.

- Lower power draw: The 3070 consumes less power than the A100, which keeps running costs down for smaller projects.

Weaknesses:

- Limited memory: The 8GB of memory is insufficient for running large LLMs like Llama 3 70B.

- No room for F16 models: An unquantized (F16) Llama 3 8B does not fit in 8GB, so the 3070 is limited to quantized variants such as Q4KM.

- Not ideal for demanding workloads: While it's fine for smaller LLMs, its performance may become a bottleneck for more complex tasks.

NVIDIA A100 PCIe 80GB

Strengths:

- High performance: On Llama 3 8B Q4KM, the A100 delivers roughly twice the token generation speed and about 2.5 times the processing speed of the 3070, and it is the only one of the two that can run Llama 3 70B.

- Large memory: Its 80GB of memory allows it to handle larger LLMs with ease.

- Room for F16 models: The A100 has enough memory to run Llama 3 8B in F16 precision. In these benchmarks F16 gave the fastest processing speed but slower token generation than Q4KM.

- Built for high-performance computing: The A100 is specifically designed for demanding workloads like deep learning, making it ideal for complex LLMs.

Weaknesses:

- High cost: The A100 is considerably more expensive than the 3070, making it a less attractive option for those on a tight budget.

- Higher power consumption: Compared to the 3070, the A100 consumes more power, which can increase energy costs.

Practical Recommendations for Use Cases

The best GPU for you depends on your specific needs and budget. Here's a breakdown of use cases to guide your decision:

- If you are a developer or researcher on a budget and need to run smaller LLMs like Llama 3 8B: The NVIDIA 3070 8GB is a great option for you. You'll get decent performance without breaking the bank.

- If you need to run larger LLMs like Llama 3 70B or require the fastest possible token generation and processing speeds: The NVIDIA A100 PCIe 80GB is the way to go. You'll benefit from its large memory capacity, its ability to hold unquantized F16 models, and its higher throughput.

- If you need to run LLMs on a server with limited power capacity: The NVIDIA 3070 8GB draws less power, saving you money on your electricity bill.

FAQ

Q: How do I choose the right GPU for my LLM needs?

A: Consider the size of the LLM you plan to run, your budget, and the performance requirements of your application. For smaller LLMs and budget-conscious projects, the NVIDIA 3070 8GB is a good choice. For larger LLMs and demanding tasks, the NVIDIA A100 PCIe 80GB offers superior performance but comes at a higher cost.

Q: What is quantization and how does it impact performance?

A: Quantization is a technique used to reduce the size of LLMs by lowering the precision of their weights, storing each weight in fewer bits. This reduces memory requirements and can speed up token generation, but it might also slightly reduce accuracy.

For instance, Q4KM quantization stores weights in roughly 4 to 5 bits each on average instead of the 16 bits used by F16, which shrinks the model to about a third of its F16 size. In the benchmarks above, that is what lets Llama 3 8B fit on the 3070 and Llama 3 70B fit on the A100.
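To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization for one small block of weights. It is not the actual Q4KM scheme, which is more elaborate; it only illustrates the general trade of precision for size. The function names and sample weights are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization: one float scale plus small signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for signed 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest step and clamp to the allowed range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Recover approximate weights from the scale and the integers."""
    return [q * scale for q in quantized]


# Hypothetical block of eight weights.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]

scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("scale:", scale)
print("4-bit values:", q)
print("max round-trip error:", max_error)
```

Each weight is now an integer between -7 and 7 plus a shared scale, and the round-trip error is bounded by half a quantization step. That small error is the accuracy cost mentioned above.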

Q: What are the other factors to consider besides token generation speed?

A: While token generation speed is crucial, other factors are important:

- Memory capacity: Larger LLMs require more memory, so choose a GPU with sufficient capacity.

- Power consumption: High-performance GPUs consume more power, so consider your energy budget.

- Software compatibility: Ensure the GPU driver and software you use are compatible with your LLM framework.

Keywords

LLM, large language model, NVIDIA 3070 8GB, NVIDIA A100 PCIe 80GB, token generation speed, Llama 3 8B, Llama 3 70B, F16 precision, Q4KM quantization, performance, benchmark, GPU, deep learning, cost, budget, power consumption, memory capacity, software compatibility.