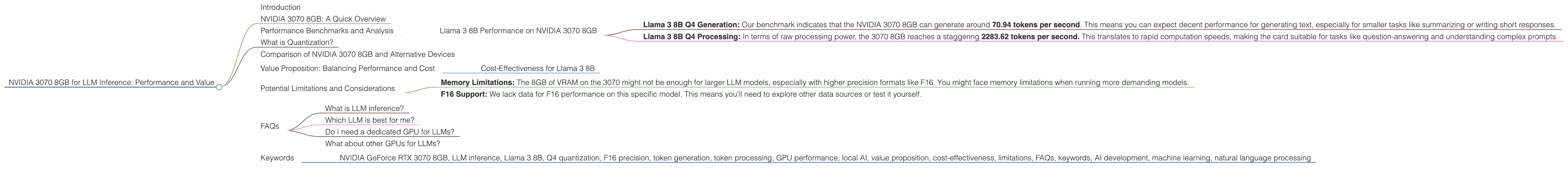

NVIDIA 3070 8GB for LLM Inference: Performance and Value

Introduction

The world of Large Language Models (LLMs) is buzzing! These powerful AI models are transforming how we interact with technology, from generating realistic text to summarizing complex content. But running these AI behemoths requires serious computational horsepower, especially when you want to use them locally on your own machine.

Enter the NVIDIA GeForce RTX 3070 8GB, a popular graphics card often considered a sweet spot for gamers and creative professionals. But can this mid-range GPU handle the demands of LLM inference?

In this article, we'll look at the performance and value of the NVIDIA 3070 8GB for running LLMs locally. We'll go through the benchmark numbers, explain what they mean in practice, and cover the technical details in a way that's easy to follow, even if you're not a tech wizard.

NVIDIA 3070 8GB: A Quick Overview

The NVIDIA GeForce RTX 3070 8GB is a mid-range graphics card that balances performance and affordability. It's built on NVIDIA's Ampere architecture, carries 8GB of GDDR6 memory, and is designed for gaming, video editing, and other demanding tasks. But how does it hold up for LLM inference?

Performance Benchmarks and Analysis

To understand the 3070's capabilities, let's look at the benchmark data. We'll focus on Llama 3, a popular choice for running LLMs locally. Bear in mind that we only have data for the Llama 3 8B model, so larger Llama 3 variants are not covered here.

Llama 3 8B Performance on NVIDIA 3070 8GB

The 3070 8GB shows respectable performance for the Llama 3 8B model with Q4 quantization, both for token generation and for prompt processing.

- Llama 3 8B Q4 Generation: Our benchmark indicates that the NVIDIA 3070 8GB generates about 70.94 tokens per second. This is the speed at which the model writes its output, and it is comfortably fast for interactive tasks like summarizing or writing short responses.

- Llama 3 8B Q4 Processing: For processing, the 3070 8GB reaches 2283.62 tokens per second. This is the speed at which the model reads its input before it starts generating, so long prompts, such as a document to summarize or a detailed question, are ingested quickly.

However, the data contains no F16 results for either generation or processing, so we can't assess the 3070's performance at F16 precision. We return to this point under Potential Limitations and Considerations.
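To put the two Q4 throughput figures in perspective, they can be turned into a rough response-time estimate. The sketch below is only back-of-the-envelope arithmetic: the function name `estimate_latency` and the example workload are hypothetical, and it assumes throughput stays constant regardless of prompt length, which real runs will not match exactly.

```python
# Throughput figures quoted in this article (Llama 3 8B, Q4, RTX 3070 8GB).
PROCESSING_TOKENS_PER_SEC = 2283.62   # reading the prompt
GENERATION_TOKENS_PER_SEC = 70.94     # writing the response


def estimate_latency(prompt_tokens: int, output_tokens: int) -> dict:
    """Estimate seconds spent on the prompt and on the generated reply.

    Assumption: both rates are constant, independent of context length.
    """
    prompt_s = prompt_tokens / PROCESSING_TOKENS_PER_SEC
    generation_s = output_tokens / GENERATION_TOKENS_PER_SEC
    return {
        "prompt_s": prompt_s,
        "generation_s": generation_s,
        "total_s": prompt_s + generation_s,
    }


if __name__ == "__main__":
    # Hypothetical workload: a 1,000-token prompt and a 250-token answer.
    result = estimate_latency(prompt_tokens=1000, output_tokens=250)
    for name, seconds in result.items():
        print(f"{name}: {seconds:.2f}")
```

For this example workload the prompt takes well under a second and the answer takes about three and a half seconds, so generation speed, not processing speed, dominates the wait.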

What is Quantization?

Quantization is a technique used to reduce the size of LLMs, making them more efficient and easier to run on smaller devices. Think of it like compressing a large image file. You lose some small details but the overall picture remains recognizable, and the file size is much smaller!

The Q4 format is a type of quantization that uses roughly 4 bits to represent each weight in the model, compared with 16 bits in F16. This significantly reduces the model's size but can slightly reduce its accuracy.
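The idea of squeezing each value into 4 bits can be illustrated with a toy example. This is a deliberately simplified sketch that uses one shared scale for a handful of made-up numbers; it is not the exact scheme used by any particular Q4 file format, and the function names are hypothetical.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    # Scale so the largest magnitude lands on code 7 (or -7).
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize_4bit(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    # Made-up weights, purely for illustration.
    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.89, 0.44, 0.02]
    codes, scale = quantize_4bit(weights)
    restored = dequantize_4bit(codes, scale)
    worst_error = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print("restored:", [round(r, 3) for r in restored])
    print("largest rounding error:", round(worst_error, 4))
```

Each code needs only 4 bits of storage, and the restored values differ from the originals by at most half a scale step. That rounding error is the "small detail" lost in the image-compression analogy.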

Comparison of NVIDIA 3070 8GB and Alternative Devices

While the 3070 8GB demonstrates solid performance for the Llama 3 8B model, it is not the only option for local inference, and it's worth weighing alternatives before you buy.

Keep in mind that this article does not include benchmark data for other GPUs, so we make no head-to-head comparison here. The focus is solely on how the 3070 8GB itself performs for LLM inference.

Value Proposition: Balancing Performance and Cost

The NVIDIA 3070 8GB offers a good balance between affordability and LLM performance. It's not the most powerful GPU on the market, but it's a great choice for users who want to run LLMs locally without breaking the bank.

Cost-Effectiveness for Llama 3 8B

The NVIDIA 3070 8GB offers a strong price-to-performance ratio for Llama 3 8B inference at Q4 quantization. It's a solid contender for users who want to dip their toes into local LLM experimentation without high upfront costs.

Potential Limitations and Considerations

While the NVIDIA 3070 8GB performs well with Q4 quantization for the Llama 3 8B model, there are some potential limitations to consider:

- Memory Limitations: The 8GB of VRAM on the 3070 might not be enough for larger models or for higher-precision formats like F16. At F16 each weight takes 2 bytes, so an 8B-parameter model needs roughly 16GB for its weights alone, which is more than this card has.

- Missing F16 Data: We have no F16 results for Llama 3 8B on this card. If you need F16 numbers, you'll have to look at other data sources or test it yourself.
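The memory point above comes down to simple arithmetic, sketched below. The parameter count of 8 billion is a round-number assumption for an "8B" model, and the estimate covers weights only: real quantized files carry some extra overhead, and inference also needs memory for the context, so treat the results as lower bounds.

```python
PARAMS = 8e9       # assumed parameter count for an "8B" model
VRAM_GB = 8.0      # memory on the RTX 3070 8GB


def weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_in_vram(num_params: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_size_gb(num_params, bits_per_weight) < vram_gb


if __name__ == "__main__":
    for label, bits in [("F16", 16), ("Q4 (4 bits, ignoring overhead)", 4)]:
        size = weight_size_gb(PARAMS, bits)
        verdict = "fits" if fits_in_vram(PARAMS, bits, VRAM_GB) else "does not fit"
        print(f"{label}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB:.0f} GB VRAM")
```

Under these assumptions the F16 weights come to about 16 GB and the 4-bit weights to about 4 GB, which is why Q4 is the practical choice on an 8GB card.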

FAQs

What is LLM inference?

LLM inference is the process of using a trained LLM to generate responses or perform tasks. Think of it like asking your LLM a question and getting a detailed answer back.

Which LLM is best for me?

The best LLM for you depends on your needs and budget. Factors like model size, performance, and available resources all come into play.

Do I need a dedicated GPU for LLMs?

While a dedicated GPU can provide significant speed improvements, you can still run LLMs on your CPU to a certain extent. However, performance will be notably slower. A dedicated GPU is recommended for smoother experiences and faster results.

What about other GPUs for LLMs?

There are many other GPUs suitable for LLM inference. Consider researching cards from NVIDIA, AMD, and other manufacturers to find the best fit for your needs.
