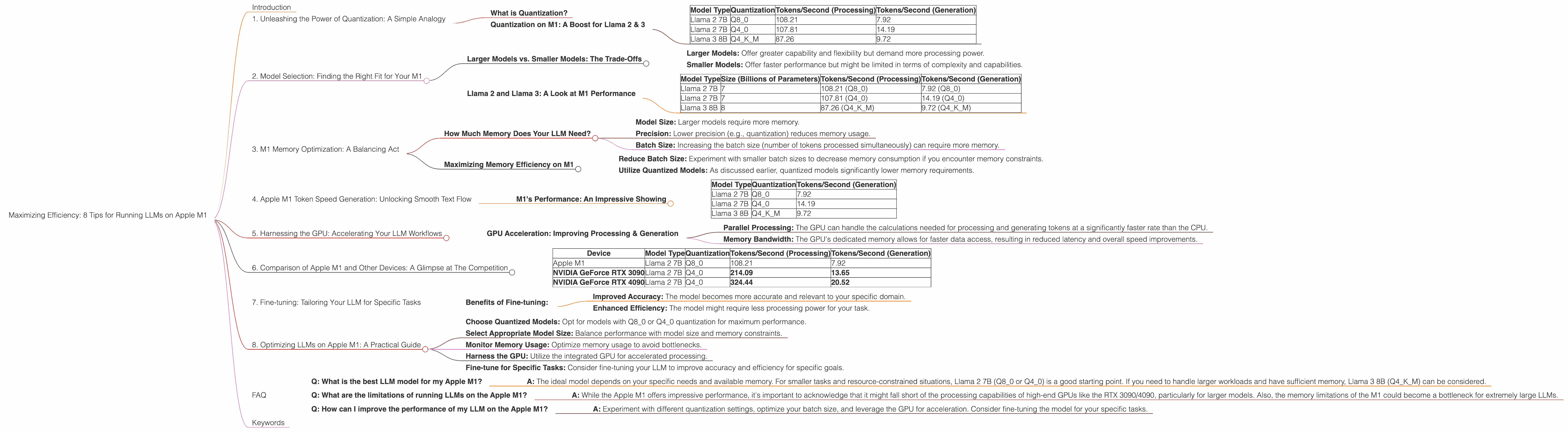

Maximizing Efficiency: 8 Tips for Running LLMs on Apple M1

Introduction

Large language models (LLMs) have changed how we interact with technology. They can generate realistic text, translate languages, write creative content, and answer questions. Running them locally is resource-intensive, though, especially on modest hardware. The Apple M1 chip is a capable option for anyone who wants to experiment with LLMs on their own machine.

This article covers eight tips for running LLMs efficiently on an Apple M1 device: quantization, model size, and the settings that affect speed and memory. The running example is a 7-billion-parameter Llama 2 model generating text at roughly 14 tokens per second on an M1.

1. Unleashing the Power of Quantization: A Simple Analogy

Imagine your LLM's brain is a library. In the "full precision" mode, every book is a thick, detailed tome, requiring a lot of space and effort to access. Quantization is like condensing these tomes into compact summaries, making them smaller and faster to navigate.

What is Quantization?

Quantization reduces the size of an LLM by representing its weights (parameters) with fewer bits, for example 8 or 4 bits instead of 16. The model becomes smaller and, because less data has to be read from memory for each generated token, usually faster. The cost is a small loss of precision, which grows as the bit width shrinks. In the library analogy, the shelves hold the same titles in thinner volumes, so more fits in the same space and each lookup is quicker.
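
To make the idea concrete, here is a minimal sketch of block-wise 8-bit quantization in plain Python. It is an illustration only: the block length of 32 and the "one scale per block" layout are assumptions modelled loosely on Q8_0-style formats, and the function names are hypothetical rather than taken from any library.

```python
BLOCK_SIZE = 32  # assumed block length, chosen for illustration


def quantize_block(weights):
    """Map a block of floats to small integers plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # One scale per block; fall back to 1.0 for an all-zero block.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]  # each fits in a signed byte
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]


# A deterministic stand-in for 32 model weights in the range [-0.8, 0.8).
weights = [((i * 37) % 64 - 32) / 40 for i in range(BLOCK_SIZE)]

scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"scale per block: {scale:.6f}")
print(f"largest rounding error: {max_error:.6f}")
```

Each weight is stored as a one-byte integer instead of a two-byte float, and the rounding error per weight is bounded by half the block's scale. That bound is the precision you trade for the smaller size.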

Quantization on M1: A Boost for Llama 2 & 3

Quantized models run well on the M1. The table below shows throughput for quantized versions of Llama 2 and Llama 3. Processing refers to reading the prompt, and Generation to producing new tokens:

| Model Type | Quantization | Tokens/Second (Processing) | Tokens/Second (Generation) |
|---|---|---|---|
| Llama 2 7B | Q8_0 | 108.21 | 7.92 |
| Llama 2 7B | Q4_0 | 107.81 | 14.19 |
| Llama 3 8B | Q4_K_M | 87.26 | 9.72 |

- Llama 2 7B: Processing speed is almost identical for Q8_0 and Q4_0 (108.21 vs. 107.81 tokens per second), but Q4_0 generates tokens nearly twice as fast (14.19 vs. 7.92 tokens per second).

- Llama 3 8B: No F16 figures are available for Llama 3 8B, but Q4_K_M quantization reaches 9.72 tokens per second for generation on the M1.

Tip: Prefer quantized models on the M1. They need less memory, and in the figures above the 4-bit Llama 2 7B generates tokens nearly twice as fast as the 8-bit version.
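
The comparison above can be checked with a few lines of arithmetic. This sketch simply divides the Llama 2 7B figures from the table; the dictionary layout and variable names are illustrative choices, not part of any benchmark tool.

```python
# Tokens/second for Llama 2 7B on the M1, copied from the table above.
llama2_7b = {
    "Q8_0": {"processing": 108.21, "generation": 7.92},
    "Q4_0": {"processing": 107.81, "generation": 14.19},
}

# Ratio of Q4_0 to Q8_0 throughput for each phase.
processing_ratio = llama2_7b["Q4_0"]["processing"] / llama2_7b["Q8_0"]["processing"]
generation_ratio = llama2_7b["Q4_0"]["generation"] / llama2_7b["Q8_0"]["generation"]

print(f"Processing, Q4_0 relative to Q8_0: {processing_ratio:.2f}x")
print(f"Generation, Q4_0 relative to Q8_0: {generation_ratio:.2f}x")
```

The processing ratio comes out close to 1, while the generation ratio is close to 1.8, so on these numbers the benefit of the smaller format shows up almost entirely in generation.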

2. Model Selection: Finding the Right Fit for Your M1

Choosing the appropriate LLM model depends on your specific needs and the resources of your M1 device.

Larger Models vs. Smaller Models: The Trade-Offs

- Larger Models: Offer greater capability and flexibility but demand more processing power.

- Smaller Models: Offer faster performance but might be limited in terms of complexity and capabilities.

Llama 2 and Llama 3: A Look at M1 Performance

| Model Type | Size (Billions of Parameters) | Tokens/Second (Processing) | Tokens/Second (Generation) |
|---|---|---|---|
| Llama 2 7B | 7 | 108.21 (Q8_0) | 7.92 (Q8_0) |
| Llama 2 7B | 7 | 107.81 (Q4_0) | 14.19 (Q4_0) |
| Llama 3 8B | 8 | 87.26 (Q4_K_M) | 9.72 (Q4_K_M) |

Note: Data is not available for Llama 2 7B or Llama 3 8B with F16 precision, or for Llama 3 70B with either Q4_K_M or F16 precision.

Tip: If you're working with limited memory and need speed, consider models with lower parameter counts. For more complex tasks, larger models may be a better choice if you have the resources to support them.

3. M1 Memory Optimization: A Balancing Act

The Apple M1 features a unified memory architecture, treating RAM and VRAM as a single pool. This allows for seamless data transfer between CPU and GPU, optimizing performance. However, it's essential to keep memory usage in check to prevent bottlenecks.

How Much Memory Does Your LLM Need?

The memory footprint of an LLM depends on several factors, including:

- Model Size: Larger models require more memory.

- Precision: Lower precision (e.g., quantization) reduces memory usage.

- Batch Size: Increasing the batch size (number of tokens processed simultaneously) can require more memory.
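
As a rough worked example of the first two factors, the sketch below estimates the memory needed for the weights alone. The bits-per-weight values are assumptions based on common block layouts (4-bit and 8-bit values plus a small per-block scale), the parameter count is the nominal 7 billion, and the function name is hypothetical. The estimate ignores the context cache and runtime overhead, so real usage will be higher.

```python
# Approximate bits stored per weight, including per-block scale overhead.
# These are assumed values for illustration, not measurements.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_memory_gb(n_params, quant):
    """Estimate the memory for model weights in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / 1e9


estimates = {quant: weight_memory_gb(7e9, quant) for quant in BITS_PER_WEIGHT}

for quant, gigabytes in estimates.items():
    print(f"7B model, {quant}: about {gigabytes:.1f} GB for weights")
```

Under these assumptions a 7B model needs about 14 GB at F16, a little over 7 GB at Q8_0, and about 4 GB at Q4_0, which is why the quantized versions are the practical choice on an M1 with 8 GB or 16 GB of unified memory.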

Maximizing Memory Efficiency on M1

- Reduce Batch Size: Experiment with smaller batch sizes to decrease memory consumption if you encounter memory constraints.

- Utilize Quantized Models: As discussed earlier, quantized models significantly lower memory requirements.

Tip: Monitor your M1's memory usage while running an LLM to identify potential bottlenecks and optimize resource consumption.
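
One lightweight way to follow this tip from a script is to read the process's peak resident memory with Python's standard `resource` module. This is a sketch under two assumptions: the model runs inside the same Python process (for example through Python bindings), and the `bytearray` below is only a stand-in for loading weights. For a system-wide view, Activity Monitor remains the simpler tool.

```python
import resource
import sys


def peak_rss_mb():
    """Return the peak resident memory of this process in megabytes."""
    peak = resource.getrusage(resource.RUSAGE_SELF).ru_maxrss
    # macOS reports ru_maxrss in bytes; Linux reports it in kilobytes.
    bytes_used = peak if sys.platform == "darwin" else peak * 1024
    return bytes_used / (1024 * 1024)


before = peak_rss_mb()

# Stand-in for loading a model: allocate and fill about 50 MB.
buffer = bytearray(b"\x01") * (50 * 1024 * 1024)

after = peak_rss_mb()

print(f"peak memory before: {before:.1f} MB")
print(f"peak memory after:  {after:.1f} MB")
```

Calling `peak_rss_mb()` before and after loading a model gives a quick check of whether a given quantization fits comfortably in the available memory.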

4. Apple M1 Token Generation Speed: Unlocking Smooth Text Flow

Token generation speed is the rate at which an LLM produces new tokens (words, parts of words, punctuation, etc.) in response to a prompt. It determines how quickly the text of a response appears.

M1's Performance: An Impressive Showing

The M1 delivers usable generation speeds with quantized models. In the table below, Llama 2 7B reaches 14.19 tokens per second with Q4_0, compared with 7.92 with Q8_0.

| Model Type | Quantization | Tokens/Second (Generation) |
|---|---|---|
| Llama 2 7B | Q8_0 | 7.92 |
| Llama 2 7B | Q4_0 | 14.19 |
| Llama 3 8B | Q4_K_M | 9.72 |
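
To see what these rates mean for a whole response, the sketch below adds the time to read the prompt to the time to generate the answer, using the Llama 2 7B processing and generation figures reported in this article. The prompt and output lengths (512 and 256 tokens) are arbitrary example values, and the function name is hypothetical.

```python
def response_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate total response time: prompt reading plus token generation."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Example workload: a 512-token prompt and a 256-token answer.
q4_seconds = response_seconds(512, 256, processing_tps=107.81, generation_tps=14.19)
q8_seconds = response_seconds(512, 256, processing_tps=108.21, generation_tps=7.92)

print(f"Llama 2 7B Q4_0: about {q4_seconds:.1f} s")
print(f"Llama 2 7B Q8_0: about {q8_seconds:.1f} s")
```

For this workload the estimate is roughly 23 seconds with Q4_0 and 37 seconds with Q8_0, and in both cases generation accounts for most of the wait.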

Tip: If generation speed is the priority, start with the Q4_0 build, which generates the most tokens per second in the figures above. Fine-tuning (tip 7) changes what the model writes rather than how fast it writes, so treat it as a way to improve relevance, not speed.

5. Harnessing the GPU: Accelerating Your LLM Workflows

The Apple M1's integrated GPU offers significant advantages for compute-intensive tasks like running LLMs.

GPU Acceleration: Improving Processing & Generation

The GPU runs many calculations at the same time, which suits LLM inference:

- Parallel Processing: The matrix multiplications behind prompt processing and token generation run significantly faster on the GPU than on the CPU.

- Memory Bandwidth: The M1's GPU has no dedicated memory. It shares the unified memory pool with the CPU, so model weights do not have to be copied into separate VRAM before the GPU can use them.
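
How you enable the GPU depends on the runtime. As one possible setup, the sketch below assumes the `llama-cpp-python` bindings built with Metal support, which this article does not name. `n_gpu_layers`, `n_ctx`, and `n_batch` are constructor parameters of that library's `Llama` class, while the model path and the two helper functions are hypothetical.

```python
def build_llama_kwargs(model_path, n_gpu_layers=-1, n_ctx=2048, n_batch=512):
    """Collect constructor arguments for llama_cpp.Llama."""
    return {
        "model_path": model_path,
        "n_gpu_layers": n_gpu_layers,  # -1 asks the library to offload all layers
        "n_ctx": n_ctx,                # context window in tokens
        "n_batch": n_batch,            # prompt tokens processed per batch
    }


def load_model(kwargs):
    """Load the model; requires `pip install llama-cpp-python`."""
    from llama_cpp import Llama  # imported here so the sketch runs without it

    return Llama(**kwargs)


# Hypothetical file name; point this at your own quantized model file.
kwargs = build_llama_kwargs("models/llama-2-7b.Q4_0.gguf")
print(kwargs)

# llm = load_model(kwargs)  # uncomment once the library and model are in place
```

Lowering `n_batch` is the knob that corresponds to the "reduce batch size" advice in tip 3, and lowering `n_gpu_layers` keeps some layers on the CPU if the GPU path causes problems.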

6. Comparison of Apple M1 and Other Devices: A Glimpse at The Competition

While the Apple M1 excels in running LLMs, it's fascinating to compare its performance to other devices.

Note: We'll focus on the M1's performance in relation to other popular devices for running LLMs.

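| Device | Model Type | Quantization | Tokens/Second (Processing) | Tokens/Second (Generation) |
|---|---|---|---|---|
| Apple M1 | Llama 2 7B | Q8_0 | 108.21 | 7.92 |
| Apple M1 | Llama 2 7B | Q4_0 | 107.81 | 14.19 |
| NVIDIA GeForce RTX 3090 | Llama 2 7B | Q4_0 | 214.09 | 13.65 |
| NVIDIA GeForce RTX 4090 | Llama 2 7B | Q4_0 | 324.44 | 20.52 |

- NVIDIA GeForce RTX 3090 & 4090: On prompt processing, these high-end GPUs are well ahead of the M1, at roughly 2x and 3x its Q4_0 speed. On generation, the like-for-like Q4_0 comparison is closer: the M1's 14.19 tokens per second sits slightly above the RTX 3090's 13.65 and below the RTX 4090's 20.52. The M1's Q8_0 row uses a different quantization and is not directly comparable with the GPU rows.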

Limitations of high-end GPUs:

- Power Consumption: High-end GPUs like the RTX 3090 and RTX 4090 draw considerably more power than the M1.

- Cost: These devices are generally more expensive than the Apple M1.

Tip: Choose your hardware based on the speed you need, your budget, and your power consumption constraints.

7. Fine-tuning: Tailoring Your LLM for Specific Tasks

Fine-tuning means further training a pre-trained LLM on a dataset relevant to your task. This can significantly improve the quality of the model's output for that use case.

Benefits of Fine-tuning:

- Improved Accuracy: The model becomes more accurate and relevant to your specific domain.

- Enhanced Efficiency: A smaller fine-tuned model can sometimes match a larger general-purpose model on a narrow task, which lets you run a model that needs less memory and compute.

Note: Fine-tuning can be computationally demanding, requiring substantial resources and time.

Tip: Consider fine-tuning your LLM if you need exceptional performance for a specific task or domain.

8. Optimizing LLMs on Apple M1: A Practical Guide

Combining the tips discussed earlier can significantly improve your LLM experience on the M1.

- Choose Quantized Models: Opt for Q8_0 or Q4_0 quantization. In the figures above, Q4_0 gives the fastest generation.

- Select Appropriate Model Size: Balance performance with model size and memory constraints.

- Monitor Memory Usage: Optimize memory usage to avoid bottlenecks.

- Harness the GPU: Utilize the integrated GPU for accelerated processing.

- Fine-tune for Specific Tasks: Consider fine-tuning your LLM to improve accuracy and efficiency for specific goals.

FAQ

Q: What is the best LLM model for my Apple M1?

- A: The ideal model depends on your specific needs and available memory. For smaller tasks and resource-constrained situations, Llama 2 7B (Q8_0 or Q4_0) is a good starting point. If you need to handle larger workloads and have sufficient memory, consider Llama 3 8B (Q4_K_M).

Q: What are the limitations of running LLMs on the Apple M1?

- A: While the Apple M1 offers impressive performance, it's important to acknowledge that it might fall short of the processing capabilities of high-end GPUs like the RTX 3090/4090, particularly for larger models. Also, the memory limitations of the M1 could become a bottleneck for extremely large LLMs.

Q: How can I improve the performance of my LLM on the Apple M1?

- A: Experiment with different quantization settings, optimize your batch size, and leverage the GPU for acceleration. Consider fine-tuning the model for your specific tasks.

Keywords

Apple M1, LLM, Large Language Model, Quantization, Llama 2, Llama 3, Token Speed Generation, GPU, Fine-tuning, Memory Optimization, Performance.