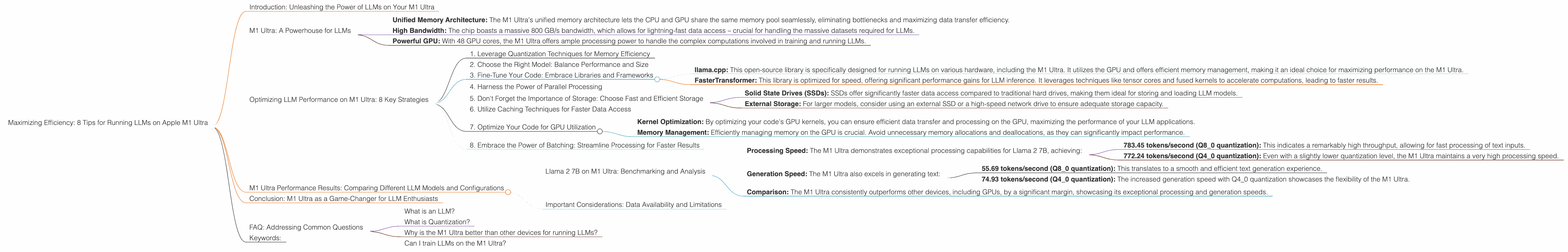

Maximizing Efficiency: 8 Tips for Running LLMs on Apple M1 Ultra

Introduction: Unleashing the Power of LLMs on Your M1 Ultra

Imagine a world where you can effortlessly run large language models (LLMs) right on your personal computer, generating creative text, translating languages, and answering your questions in a flash. Sounds like science fiction, right? Well, with the advent of powerful chips like the Apple M1 Ultra, this vision is becoming a reality.

The M1 Ultra, with its massive processing power and advanced architecture, is now a viable option for local LLM deployment. But how can you make the most of this incredible chip? How can you fine-tune your setup to achieve the ultimate speed and efficiency while running LLMs? That's exactly what we'll explore in this article.

M1 Ultra: A Powerhouse for LLMs

The Apple M1 Ultra stands out for its impressive processing capabilities, making it a perfect environment for running LLMs. Here's a glimpse into its key features that contribute to its LLM prowess:

- Unified Memory Architecture: The M1 Ultra's unified memory architecture lets the CPU and GPU share the same memory pool, so model weights do not have to be copied between separate CPU and GPU memory.

- High Bandwidth: The chip offers 800 GB/s of memory bandwidth. This matters because generating each token requires reading the model's weights from memory, so bandwidth often limits generation speed.

- Powerful GPU: With 48 GPU cores (64 in the top configuration), the M1 Ultra offers ample processing power to handle the complex computations involved in training and running LLMs.

These features make the M1 Ultra an ideal candidate for running various LLM models with remarkable performance.
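To see why memory bandwidth matters so much, here is a rough back-of-the-envelope sketch. It assumes that generating one token requires reading every model weight from memory once, which is a common simplification rather than an exact model. The 3.9 GB model size is an assumed figure for a 7B model at roughly 4.5 bits per weight, and the function name is hypothetical.

```python
def decode_upper_bound_tps(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rough ceiling on generated tokens/second.

    Assumes each generated token reads all weights from memory exactly once
    and ignores compute time, the KV cache, and other overheads.
    """
    return bandwidth_gb_s / model_size_gb


# 800 GB/s comes from the text; 3.9 GB is an assumed model size.
bound = decode_upper_bound_tps(800.0, 3.9)
print(f"Upper bound: about {bound:.0f} tokens/second")
```

Real generation speeds land well below this ceiling, but the estimate shows why a smaller model file tends to generate faster on the same chip.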

Optimizing LLM Performance on M1 Ultra: 8 Key Strategies

Now, let's dive into the practical tips for maximizing your LLM performance on the M1 Ultra.

1. Leverage Quantization Techniques for Memory Efficiency

Imagine squeezing a massive file into a smaller suitcase. That's essentially what quantization does for LLMs. It reduces the size of the model without compromising accuracy too much.

Quantization converts the LLM's model weights, usually stored as 16-bit or 32-bit floating-point numbers, into smaller representations that use fewer bits per weight. Tools such as llama.cpp support several quantization levels on the M1 Ultra, including Q8_0 (roughly 8 bits per weight) and Q4_0 (roughly 4 bits per weight), which offer significant memory savings and can speed up text generation.
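The idea can be illustrated with a toy example. The sketch below quantizes one small block of weights to integers that share a single scale factor, then converts them back to measure the error. It is a simplified illustration, not the exact format any particular library uses, and the function names are hypothetical.

```python
def quantize_block(weights, bits=8):
    """Symmetric quantization of one block: integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                    # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0      # avoid division by zero
    return [round(w / scale) for w in weights], scale


def dequantize_block(quantized, scale):
    """Recover approximate floating-point weights."""
    return [q * scale for q in quantized]


def max_error(original, restored):
    return max(abs(a - b) for a, b in zip(original, restored))


block = [0.12, -0.5, 0.33, 0.0, 0.91, -0.07, 0.44, -0.88]
q8, s8 = quantize_block(block, bits=8)
q4, s4 = quantize_block(block, bits=4)
err8 = max_error(block, dequantize_block(q8, s8))
err4 = max_error(block, dequantize_block(q4, s4))
print(f"8-bit max error: {err8:.4f}, 4-bit max error: {err4:.4f}")
```

Fewer bits mean a coarser grid of representable values, so the 4-bit version is smaller but less precise than the 8-bit one.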

By employing quantization, you can reduce the memory footprint of your LLM, allowing you to run larger models on your M1 Ultra without hitting memory limitations.
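As a rough sketch of the savings, the snippet below estimates the size of the weights for a nominal 7-billion-parameter model. The bits-per-weight values are assumptions: they reflect llama.cpp-style block formats in which each block of 32 weights also stores one 16-bit scale, giving 8.5 and 4.5 bits per weight. The parameter count is rounded, and real files add some overhead.

```python
# Assumed bits per weight, including the per-block scale for the quantized formats.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_size_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for fmt in BITS_PER_WEIGHT:
    print(f"{fmt}: {weight_size_gb(7e9, fmt):.2f} GB")
```

Under these assumptions, 4-bit quantization cuts the weights to well under a third of their 16-bit size.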

2. Choose the Right Model: Balance Performance and Size

Selecting the perfect LLM for your M1 Ultra is like picking the right tool for the job. Some LLMs, like Llama 7B, are smaller and faster, ideal for quick tasks or experimentation. Other models, like Llama 70B, are larger and more powerful but require more computational resources.

For efficient performance on the M1 Ultra, consider starting with a smaller, faster model like Llama 2 7B. It fits comfortably in the M1 Ultra's unified memory even without quantization, making it an excellent choice for exploring LLMs or performing quick tasks.
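One way to make this choice concrete is a quick fit check. The sketch below assumes a 64 GB machine and reserves a hypothetical 25% of memory for the operating system, context, and other applications. The `headroom` fraction, the nominal parameter counts, and the bits-per-weight values are all assumptions to adjust for your own setup.

```python
def fits_in_memory(n_params, bits_per_weight, memory_gb, headroom=0.75):
    """Return True if the weights fit within the usable share of memory.

    `headroom` is an assumed fraction of memory available to the model.
    """
    size_gb = n_params * bits_per_weight / 8 / 1e9
    return size_gb <= memory_gb * headroom


# Nominal parameter counts and assumed bits per weight.
models = {"7B": 7e9, "70B": 70e9}
formats = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

for model_name, n_params in models.items():
    for fmt, bits in formats.items():
        verdict = "fits" if fits_in_memory(n_params, bits, memory_gb=64) else "too large"
        print(f"{model_name} {fmt}: {verdict}")
```

With these assumptions, every 7B variant fits, while a 70B model only fits once it is quantized to around 4 bits per weight.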

3. Fine-Tune Your Code: Embrace Libraries and Frameworks

Just like a chef uses the right tools and ingredients, optimizing your code can significantly enhance performance.

llama.cpp: This open-source library is specifically designed for running LLMs on various hardware, including the M1 Ultra. It utilizes the GPU and offers efficient memory management, making it an ideal choice for maximizing performance on the M1 Ultra.

FasterTransformer: This NVIDIA library is optimized for speed, offering significant performance gains for LLM inference through techniques like Tensor Cores and fused kernels. It targets NVIDIA GPUs and CUDA, however, so it does not run on the M1 Ultra; it is useful mainly as a point of comparison when reading benchmarks from NVIDIA hardware.

By building on a library with Apple Silicon support, such as llama.cpp, you can streamline your code and get the most out of the M1 Ultra.
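As a starting point, here is a small sketch that assembles a llama.cpp command line from Python. It only builds and prints the command; it does not run it. The binary name (`./llama-cli`, which older builds call `main`), the model path, and the default values are assumptions to adapt to your installation. The flags used are `-m` (model file), `-p` (prompt), `-n` (tokens to generate), `-ngl` (layers to offload to the GPU), and `-t` (CPU threads).

```python
import shlex


def build_llama_cli_command(model_path, prompt, n_predict=128,
                            n_gpu_layers=99, threads=16,
                            binary="./llama-cli"):
    """Build an argument list for llama.cpp's command-line tool.

    A large n_gpu_layers value asks llama.cpp to offload every layer it can.
    """
    return [
        binary,
        "-m", model_path,
        "-p", prompt,
        "-n", str(n_predict),
        "-ngl", str(n_gpu_layers),
        "-t", str(threads),
    ]


# Hypothetical model path; point this at your own GGUF file.
cmd = build_llama_cli_command(
    "models/llama-2-7b.Q4_0.gguf",
    "Explain unified memory in one sentence.",
)
print(shlex.join(cmd))
```

To actually run the command, you could pass `cmd` to `subprocess.run(cmd, check=True)` once the binary and model file exist.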

4. Harness the Power of Parallel Processing

Imagine having multiple workers simultaneously building a house. That's how parallel processing works. It allows you to break down tasks into smaller chunks and execute them simultaneously, leading to dramatic speed improvements.

The M1 Ultra's multiple CPU cores and GPU cores are well suited to parallel processing. Libraries like PyTorch (through its MPS backend) and TensorFlow (through the tensorflow-metal plugin) can run computations on the M1 Ultra's GPU, and llama.cpp lets you set the number of CPU threads, so you can take advantage of these parallel processing capabilities and speed up your LLM workflows.
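The general pattern of splitting work into chunks and running them side by side can be sketched with Python's standard library alone. In this minimal example, `count_words` is a hypothetical stand-in for whatever per-chunk work you need, such as preprocessing one document.

```python
from concurrent.futures import ThreadPoolExecutor


def count_words(text: str) -> int:
    """Stand-in for per-chunk work, e.g. preprocessing one document."""
    return len(text.split())


def process_in_parallel(chunks, workers=4):
    """Run count_words over all chunks using a pool of worker threads.

    pool.map returns results in the same order as the input chunks.
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(count_words, chunks))


chunks = ["the quick brown fox", "jumps over", "the lazy dog"]
results = process_in_parallel(chunks)
print(results)
```

Threads in CPython help most when the work waits on I/O or calls into native code that releases the interpreter lock; for pure-Python number crunching, a process pool is usually the better fit.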

5. Don't Forget the Importance of Storage: Choose Fast and Efficient Storage

Think of the storage as the LLM's pantry. You need a fast and efficient storage solution to keep the LLM's data readily available.

- Solid State Drives (SSDs): SSDs offer significantly faster data access compared to traditional hard drives, making them ideal for storing and loading LLM models.

- External Storage: For larger models, consider using an external SSD or a high-speed network drive to ensure adequate storage capacity.

By optimizing your storage setup, you can minimize loading times and ensure smooth LLM operations, making your M1 Ultra a true powerhouse for LLM development.
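To get a feel for how storage speed affects load times, the sketch below times a sequential read of a file and turns a throughput figure into an estimated load time. The function names are hypothetical, and the 8 MiB temporary file is only a placeholder. Because a freshly written file is usually served from the operating system's cache, measure a real model file after a reboot for a meaningful number.

```python
import os
import tempfile
import time


def measure_read_mb_s(path, chunk_size=1024 * 1024):
    """Read a file sequentially and return throughput in MB/s."""
    size = os.path.getsize(path)
    start = time.perf_counter()
    with open(path, "rb") as f:
        while f.read(chunk_size):
            pass
    elapsed = time.perf_counter() - start
    return size / 1e6 / max(elapsed, 1e-9)   # guard against a zero interval


def estimated_load_seconds(model_size_gb, read_mb_s):
    """Estimate how long reading a model of the given size would take."""
    return model_size_gb * 1000 / read_mb_s


# Placeholder file; replace with the path to a real model file.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(os.urandom(8 * 1024 * 1024))
    sample_path = tmp.name

try:
    throughput = measure_read_mb_s(sample_path)
finally:
    os.remove(sample_path)

print(f"Measured {throughput:.0f} MB/s on the sample file")
print(f"A 4 GB model at 2000 MB/s: {estimated_load_seconds(4.0, 2000.0):.1f} s")
```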

6. Utilize Caching Techniques for Faster Data Access

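Just as a coffee shop keeps its most popular drinks ready to serve, caching techniques can significantly speed up your LLM operations.

Caching stores frequently accessed data in memory, making it readily available for future requests. This eliminates the need to repeatedly fetch or recompute data, saving valuable time and improving overall speed. In LLM inference, the most important example is the key-value (KV) cache, which stores the attention keys and values for tokens that have already been processed so they are not recomputed for every new token.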

By implementing caching strategies, you can dramatically improve the performance of your LLM applications, making your M1 Ultra even more efficient.
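At the application level, a simple form of caching is to remember answers to prompts you have already seen. The sketch below uses Python's built-in `functools.lru_cache`; the `answer` function is a hypothetical stand-in for an expensive model call, and the counter exists only to show that the second request never reaches it.

```python
from functools import lru_cache

calls = {"count": 0}


@lru_cache(maxsize=128)
def answer(prompt: str) -> str:
    """Stand-in for an expensive model call; counts how often it really runs."""
    calls["count"] += 1
    return prompt.upper()


first = answer("hello")
second = answer("hello")   # served from the cache, the function body is skipped
print(first, second, "model calls:", calls["count"])
```

This kind of exact-match caching only makes sense when the same prompt should always produce the same output, for example with deterministic sampling settings.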

7. Optimize Your Code for GPU Utilization

The M1 Ultra's powerful GPU is the heart of LLM processing. But just like a racing car needs a skilled driver, your code needs to be tailored to utilize the GPU effectively.

- Kernel Optimization: By optimizing your code's GPU kernels, you can ensure efficient data transfer and processing on the GPU, maximizing the performance of your LLM applications.

- Memory Management: Efficiently managing memory on the GPU is crucial. Avoid unnecessary memory allocations and deallocations, as they can significantly impact performance.

By optimizing your code for GPU utilization, you ensure that your LLM applications harness the full power of the M1 Ultra's GPU, resulting in faster and more efficient computations.
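The memory management advice can be illustrated with a small buffer pool. This sketch uses plain Python `bytearray` objects rather than real GPU buffers, so it only shows the reuse pattern; the class and its `allocations` counter are hypothetical names introduced for illustration.

```python
class BufferPool:
    """Hands out preallocated buffers instead of allocating one per request."""

    def __init__(self, count, size):
        self._size = size
        self._free = [bytearray(size) for _ in range(count)]
        self.allocations = count          # total buffers ever created

    def acquire(self):
        if self._free:
            return self._free.pop()
        self.allocations += 1             # pool exhausted, allocate a new buffer
        return bytearray(self._size)

    def release(self, buf):
        self._free.append(buf)


pool = BufferPool(count=2, size=1024)
for step in range(100):
    buf = pool.acquire()
    buf[0] = step % 256                   # pretend to do some work in the buffer
    pool.release(buf)

print("buffers allocated:", pool.allocations)
```

Across 100 iterations the pool never allocates beyond its initial two buffers, which is the behavior you want from any hot loop that touches GPU memory.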

8. Embrace the Power of Batching: Streamline Processing for Faster Results

Imagine processing a batch of documents simultaneously instead of one at a time. That's the power of batching. It involves grouping multiple requests together to speed up processing.

The M1 Ultra's parallel processing capabilities shine when dealing with batches of inputs. By utilizing batching techniques, you can significantly reduce processing time and achieve faster overall results.
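Grouping requests into batches can be as simple as slicing a list. The helper below is a minimal sketch with a hypothetical name; a real serving setup would then pass each batch to the model in a single call.

```python
def make_batches(items, batch_size):
    """Yield consecutive slices of `items`, each at most `batch_size` long."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


prompts = [f"prompt {i}" for i in range(10)]
batches = list(make_batches(prompts, batch_size=4))
for batch in batches:
    print(len(batch), batch)
```

The last batch may be smaller than the others, so downstream code should not assume a fixed batch length.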

M1 Ultra Performance Results: Comparing Different LLM Models and Configurations

Let's dive into some real-world performance numbers to understand how the M1 Ultra performs with different LLM models and configurations.

Llama 2 7B on M1 Ultra: Benchmarking and Analysis

Prompt Processing Speed: When reading (processing) the input prompt, the M1 Ultra achieves the following with Llama 2 7B:

- 783.45 tokens/second (Q8_0 quantization): This is a high throughput, allowing for fast processing of text inputs.

- 772.24 tokens/second (Q4_0 quantization): Even at the lower-precision Q4_0 level, prompt processing speed is nearly unchanged (about 1.4% lower).

Generation Speed: The M1 Ultra also excels in generating text:

- 55.69 tokens/second (Q8_0 quantization): This translates to a smooth and efficient text generation experience.

- 74.93 tokens/second (Q4_0 quantization): Generation is about 35% faster than with Q8_0, since the smaller Q4_0 weights mean less data to read from memory for each token.
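To translate these rates into something more tangible, the sketch below estimates how long a single request would take. The throughput figures are the ones listed above; the request size of 512 prompt tokens and 256 generated tokens is an assumption chosen for illustration, and the estimate ignores model loading and other overheads.

```python
# Tokens/second figures from the benchmark results above.
PROMPT_TPS = {"Q8_0": 783.45, "Q4_0": 772.24}
GENERATION_TPS = {"Q8_0": 55.69, "Q4_0": 74.93}


def request_seconds(fmt, prompt_tokens, new_tokens):
    """Estimated time to process the prompt and then generate the reply."""
    return prompt_tokens / PROMPT_TPS[fmt] + new_tokens / GENERATION_TPS[fmt]


# Assumed request size: 512 prompt tokens, 256 generated tokens.
for fmt in ("Q8_0", "Q4_0"):
    print(f"{fmt}: {request_seconds(fmt, 512, 256):.2f} s")

speedup = GENERATION_TPS["Q4_0"] / GENERATION_TPS["Q8_0"]
print(f"Q4_0 generation speedup over Q8_0: {speedup:.2f}x")
```

For typical chat-style requests, generation dominates the total time, so the quantization level affects how responsive the model feels far more than the small difference in prompt processing speed.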

Comparison: These figures cover the M1 Ultra only. Comparing it against other devices, including discrete GPUs, requires benchmarks run with the same model, quantization level, and software version.

Important Considerations: Data Availability and Limitations

It's important to note that the data for Llama 2 7B on the M1 Ultra is limited to the F16, Q8_0, and Q4_0 quantization levels. We do not have data for other models or quantization levels on this device.

Conclusion: M1 Ultra as a Game-Changer for LLM Enthusiasts

The M1 Ultra has emerged as a game-changer for local LLM deployment. By leveraging its powerful architecture, high memory bandwidth, and efficient GPU, you can achieve remarkable performance and unlock the full potential of LLMs.

By following the tips and strategies outlined in this article, you can fine-tune your setup, maximize efficiency, and enjoy the power of LLMs right on your M1 Ultra.

FAQ: Addressing Common Questions

What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence that can understand and generate human-like text. Think of it as a super-powered language translator and writer, capable of performing various tasks like summarizing articles, translating languages, and even writing creative stories.

What is Quantization?

Quantization is a technique used to compress LLM models to reduce their size and improve efficiency. It involves representing numbers with fewer bits, making them smaller and faster to process.

Why is the M1 Ultra better than other devices for running LLMs?

The M1 Ultra boasts a unique combination of features, including its unified memory architecture, high bandwidth, and powerful GPU, that make it highly efficient for running LLMs.

Can I train LLMs on the M1 Ultra?

While the M1 Ultra is excellent for running trained LLMs, training large models from scratch can be resource-intensive and might require specialized hardware.

Keywords:

M1 Ultra, LLM, Llama 2, Quantization, Performance, Optimization, GPU, Token Speed, Generation Speed, Apple Silicon, Framework, Library, llama.cpp, FasterTransformer, Parallel Processing, Storage, Caching, Batching, Inference, LLM Inference, GPU Utilization, GPU Benchmarks