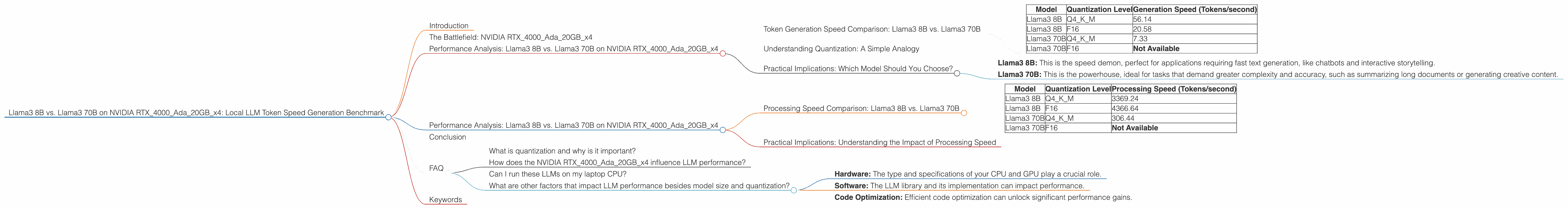

Llama3 8B vs. Llama3 70B on NVIDIA RTX 4000 Ada 20GB x4: Local LLM Token Generation Speed Benchmark

Introduction

Welcome, fellow language model enthusiasts! Today, we're diving deep into local Large Language Model (LLM) performance, comparing the Llama3 8B and Llama3 70B models on the RTX4000Ada20GBx4 configuration: four NVIDIA RTX 4000 Ada GPUs with 20GB of memory each.

This benchmark focuses on token generation speed for both models, showcasing their capabilities in generating text locally. We'll explore the differences in performance based on quantization levels (Q4KM vs F16) and uncover which model might be a better fit for your specific needs. Stay tuned for some surprising insights!

The Battlefield: NVIDIA RTX4000Ada20GBx4

This setup combines four NVIDIA RTX 4000 Ada cards, each built on the Ada Lovelace architecture with 20GB of GDDR6 memory, for 80GB of GPU memory in total. It's designed for demanding tasks like AI and machine learning, making it a capable choice for running these LLMs locally.

Performance Analysis: Llama3 8B vs. Llama3 70B on NVIDIA RTX4000Ada20GBx4

Token Generation Speed Comparison: Llama3 8B vs. Llama3 70B

Let's get straight to the numbers, shall we? The table below shows the token generation speed in tokens per second (tokens/second) for both models on the RTX4000Ada20GBx4.

| Model | Quantization Level | Generation Speed (Tokens/second) |
|---|---|---|
| Llama3 8B | Q4KM | 56.14 |
| Llama3 8B | F16 | 20.58 |
| Llama3 70B | Q4KM | 7.33 |
| Llama3 70B | F16 | Not Available |

Key Observations:

- Llama3 8B reigns supreme in token generation speed. With Q4KM quantization it generates 56.14 tokens/second, about 7.7 times faster than Llama3 70B at the same quantization (7.33 tokens/second). This is expected, as the 70B model has far more parameters to process for every token.

- Model size matters. The 70B model, with its 70 billion parameters, requires more memory and compute per token, leading to a noticeable drop in token generation speed compared to the 8B model. No F16 result is available for the 70B model on this setup.

- Quantization impacts performance. On Llama3 8B, Q4KM quantization (a more aggressive format than F16) raises generation speed from 20.58 to 56.14 tokens/second, roughly 2.7 times faster. The effect on Llama3 70B cannot be measured here because its F16 result is not available.
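The ratios behind these observations can be reproduced directly from the table. The snippet below is a small sketch for that arithmetic; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Generation speeds (tokens/second) copied from the table above.
generation_tps = {
    ("Llama3 8B", "Q4KM"): 56.14,
    ("Llama3 8B", "F16"): 20.58,
    ("Llama3 70B", "Q4KM"): 7.33,
}


def speedup(faster, slower):
    """Return how many times faster one configuration is than another."""
    return generation_tps[faster] / generation_tps[slower]


# Effect of quantization on the 8B model.
q4_vs_f16_8b = speedup(("Llama3 8B", "Q4KM"), ("Llama3 8B", "F16"))
# Effect of model size at the same quantization level.
size_8b_vs_70b = speedup(("Llama3 8B", "Q4KM"), ("Llama3 70B", "Q4KM"))

print(f"8B Q4KM vs 8B F16:   {q4_vs_f16_8b:.2f}x")
print(f"8B Q4KM vs 70B Q4KM: {size_8b_vs_70b:.2f}x")
```

The 70B F16 entry is left out on purpose, since the benchmark reports no value for it.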

Understanding Quantization: A Simple Analogy

Imagine you are a chef preparing a complex dish with dozens of ingredients. Quantization simplifies the task by using fewer ingredients (like a pre-made sauce). This results in a faster cooking time, but the flavor might not be as rich.

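In the case of LLMs, quantization stores the model's parameters at lower precision (for example, around 4 bits per weight instead of 16). This shrinks the model and the amount of data that has to be read for each generated token, which speeds up generation, but might result in slightly lower accuracy.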
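To make the precision trade-off concrete, here is a minimal sketch of 4-bit quantization on a handful of weights. It uses a simple symmetric round-to-nearest scheme chosen for illustration only; it is not the actual Q4KM format used in the benchmark, and the function names and sample weights are hypothetical.

```python
def quantize_4bit(weights):
    """Map floats to signed 4-bit integer codes (-8..7) with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    # Round each weight to the nearest code and clamp to the 4-bit range.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.70, -0.33, 0.12, 0.04]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 2) for r in restored])
print("largest rounding error:", round(max_error, 3))
```

Each weight now needs only 4 bits plus a shared scale, at the cost of a rounding error of up to half a quantization step. That error is the "less rich flavor" in the chef analogy.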

Practical Implications: Which Model Should You Choose?

The choice between Llama3 8B and Llama3 70B ultimately depends on your specific needs and resources. Think of it like choosing between a nimble sports car and a luxurious limousine.

- Llama3 8B: This is the speed demon, perfect for applications requiring fast text generation, like chatbots and interactive storytelling.

- Llama3 70B: This is the powerhouse, ideal for tasks that demand greater complexity and accuracy, such as summarizing long documents or generating creative content.

If you prioritize speed and have limited computational resources, the Llama3 8B is your go-to choice. If you need the power of a larger model for complex language tasks and have ample processing power, the Llama3 70B is the way to go.

Performance Analysis: Llama3 8B vs. Llama3 70B on NVIDIA RTX4000Ada20GBx4

Processing Speed Comparison: Llama3 8B vs. Llama3 70B

Let's shift our focus to another crucial aspect of LLM performance: processing speed, that is, how quickly the model works through the tokens it receives as input. The numbers below represent the processing speed in tokens per second (tokens/second) for both models.

| Model | Quantization Level | Processing Speed (Tokens/second) |
|---|---|---|
| Llama3 8B | Q4KM | 3369.24 |
| Llama3 8B | F16 | 4366.64 |
| Llama3 70B | Q4KM | 306.44 |
| Llama3 70B | F16 | Not Available |

Key Observations:

- Llama3 8B shines again in processing speed. With Q4KM quantization it processes 3369.24 tokens/second, about 11 times faster than Llama3 70B at the same quantization (306.44 tokens/second).

- Quantization impacts vary. Unlike generation, processing on Llama3 8B is faster with F16 than with Q4KM (4366.64 vs. 3369.24 tokens/second), so Q4KM does not help here. No F16 result is available for Llama3 70B, so its effect on the larger model cannot be compared.

- Model size remains a major factor. The larger parameter count of the 70B model demands more compute for every token, leading to a considerable difference in processing speed.

Practical Implications: Understanding the Impact of Processing Speed

Processing speed is directly related to how quickly the LLM can handle incoming inputs and generate outputs. A faster processing speed translates to a more responsive and interactive user experience.

For example, if you are building a chatbot, a model with a high processing speed will be able to provide quick and engaging responses to user queries.

In general, a higher processing speed is desirable for most LLM applications, as it leads to faster response times and improved user experience. However, the choice of model ultimately depends on your specific needs and priorities.
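As a rough illustration of how the two measured speeds combine into a response time, the sketch below estimates latency as prompt tokens divided by processing speed plus output tokens divided by generation speed. This assumes the processing figure applies to the input prompt and ignores model loading and other overheads; the function name and the 1000-token prompt and 200-token reply are hypothetical choices.

```python
# (processing tokens/s, generation tokens/s) taken from the two benchmark tables.
speeds = {
    "Llama3 8B Q4KM": (3369.24, 56.14),
    "Llama3 8B F16": (4366.64, 20.58),
    "Llama3 70B Q4KM": (306.44, 7.33),
}


def estimate_latency(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate seconds to read a prompt and then generate a reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


latencies = {
    name: estimate_latency(1000, 200, proc, gen)
    for name, (proc, gen) in speeds.items()
}

for name, seconds in latencies.items():
    print(f"{name}: ~{seconds:.1f} s for a 1000-token prompt and 200-token reply")
```

Under these assumptions, generation time dominates the total for every configuration, so the generation speed table matters more for chat-style workloads with short prompts.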

Conclusion

Our journey through the token generation speed benchmark of Llama3 8B and Llama3 70B on NVIDIA RTX4000Ada20GBx4 has brought us some fascinating insights.

The Llama3 8B model emerges as the speed demon, outperforming the Llama3 70B in both token generation and processing speeds. This advantage stems from its smaller parameter space, making it more efficient for local hardware. However, the Llama3 70B holds its ground with its enhanced capabilities for complex language tasks.

Remember, choosing the right LLM for your project is a balancing act between performance, accuracy, and resources. By understanding the nuances of each model, you can make an informed decision that aligns with your specific needs.

FAQ

What is quantization and why is it important?

Quantization is a technique used to reduce the size of LLMs by lowering the precision of their parameters. This improves speed and memory efficiency, enabling models to run smoothly on more modest hardware.

Imagine you're trying to fit a large suitcase into a small car. Quantization is like packing the suitcase more efficiently by using smaller and lighter items.
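A back-of-the-envelope calculation shows why the size reduction matters on this hardware. The sketch below estimates the memory needed for the weights alone and compares it with the 80GB available across four 20GB cards. The benchmark does not say why the 70B F16 result is missing, so treat this as one plausible explanation; the 4.8 bits per weight used for Q4KM is an assumed rough figure, and the estimate ignores activations and cache memory.

```python
TOTAL_GPU_MEMORY_GB = 4 * 20  # four 20GB cards, as in the benchmark setup


def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


# (parameters in billions, bits per weight). 16 bits for F16 is exact;
# 4.8 bits for Q4KM is an assumption, not a measured value.
configs = {
    "Llama3 8B F16": (8, 16),
    "Llama3 8B Q4KM": (8, 4.8),
    "Llama3 70B F16": (70, 16),
    "Llama3 70B Q4KM": (70, 4.8),
}

for name, (params, bits) in configs.items():
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < TOTAL_GPU_MEMORY_GB else "does not fit"
    print(f"{name}: ~{size:.1f} GB of weights, {verdict} in {TOTAL_GPU_MEMORY_GB} GB")
```

With these numbers, the 70B model at F16 needs far more memory than the setup provides, while the quantized version leaves room to spare.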

How does the NVIDIA RTX4000Ada20GBx4 influence LLM performance?

The RTX4000Ada20GBx4 is a configuration of four NVIDIA RTX 4000 Ada GPUs suited to AI workloads. Its dedicated processing units and 80GB of combined memory make it well suited to the demanding calculations involved in running LLMs, leading to faster token generation and processing speeds.

Can I run these LLMs on my laptop CPU?

It's possible, but it's not recommended. While CPUs can handle basic LLM tasks, their performance pales in comparison to dedicated GPUs like the RTX4000Ada20GBx4. For optimal performance, consider using a GPU-powered device.

What are other factors that impact LLM performance besides model size and quantization?

Several other factors influence LLM performance, including:

- Hardware: The type and specifications of your CPU and GPU play a crucial role.

- Software: The LLM library and its implementation can impact performance.

- Code Optimization: Efficient code optimization can unlock significant performance gains.

Keywords

Large Language Model, LLM, Llama3, Llama 3, 8B, 70B, NVIDIA, RTX4000Ada20GBx4, GPU, Token Generation, Processing Speed, Quantization, Q4KM, F16, Benchmark, Performance, Local, Speed, Inference, AI, Machine Learning, Model Size, Resources, Accuracy, Chatbot, Interactive Storytelling, Creative Content, Hardware, Software, Optimization