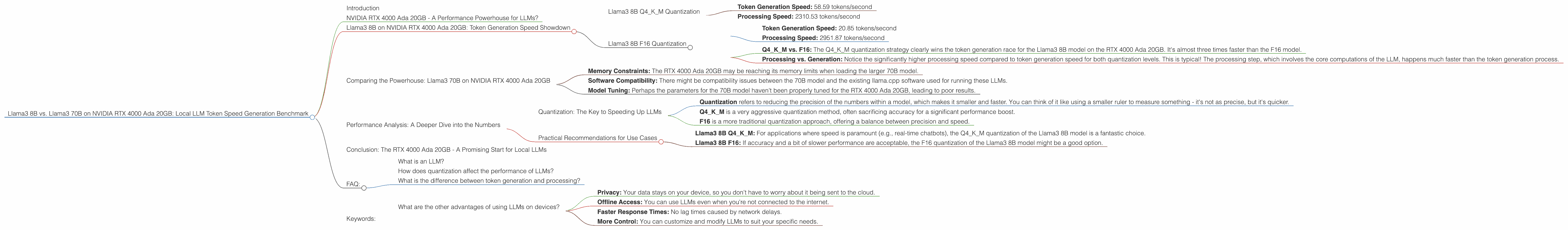

Llama3 8B vs. Llama3 70B on NVIDIA RTX 4000 Ada 20GB: Local LLM Token Generation Speed Benchmark

Introduction

Large language models (LLMs) are evolving rapidly, and running them locally on your own hardware opens up exciting possibilities. But how do these models perform on a specific GPU? In this article, we look at Llama3 8B and Llama3 70B on the NVIDIA RTX 4000 Ada 20GB, comparing token generation speeds using real benchmark data. We'll explore how two weight formats, 4-bit Q4KM quantization and 16-bit F16, influence performance and highlight the strengths and weaknesses of each. Get ready to learn about the speed demons and the slower engines in the world of local LLM inference!

NVIDIA RTX 4000 Ada 20GB - A Performance Powerhouse for LLMs?

The NVIDIA RTX 4000 Ada 20GB is a professional workstation graphics card built for tasks like rendering, video editing, and yes, even running LLMs. Its 20GB of GDDR6 memory and Ada Lovelace architecture give it room to hold mid-sized models and the compute to run them. But how does it fare when generating tokens from Llama3 models, specifically the 8B and 70B variants? We'll break down the data to reveal some unexpected findings.

Llama3 8B on NVIDIA RTX 4000 Ada 20GB: Token Generation Speed Showdown

The Llama3 8B model is far smaller than its 70B counterpart, and that works in its favor on the RTX 4000 Ada 20GB: it ran in both formats tested and reaches usable token generation speeds. Let's examine the numbers:

Llama3 8B Q4KM Quantization

- Token Generation Speed: 58.59 tokens/second

- Processing Speed: 2310.53 tokens/second

Llama3 8B F16 (16-bit Floating Point)

- Token Generation Speed: 20.85 tokens/second

- Processing Speed: 2951.87 tokens/second

Analysis:

- Q4KM vs. F16: Q4KM clearly wins the token generation race for the Llama3 8B model on the RTX 4000 Ada 20GB. At 58.59 vs. 20.85 tokens/second, it is about 2.8 times faster than F16.

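- Processing vs. Generation: Notice the much higher processing speed compared to token generation speed for both formats. This is typical! Processing here means reading the prompt, and the GPU can work on all prompt tokens in parallel. Generation produces the output one token at a time, with each new token depending on the ones before it, so it is far slower. Also note that F16 is actually faster than Q4KM at prompt processing (2951.87 vs. 2310.53 tokens/second), so Q4KM's advantage applies to generation only.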
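To see how the two speeds combine in practice, here is a minimal sketch that estimates the total time for one request from the figures above. The prompt and output lengths are hypothetical values chosen only for illustration, and the estimate ignores model load time and other overhead.

```python
# Benchmark figures from this article (Llama3 8B on the RTX 4000 Ada 20GB).
BENCHMARKS = {
    "Q4KM": {"processing_tps": 2310.53, "generation_tps": 58.59},
    "F16": {"processing_tps": 2951.87, "generation_tps": 20.85},
}


def estimate_seconds(variant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate total response time: prompt processing plus token generation."""
    speeds = BENCHMARKS[variant]
    prompt_time = prompt_tokens / speeds["processing_tps"]
    generation_time = output_tokens / speeds["generation_tps"]
    return prompt_time + generation_time


# Hypothetical workload, chosen only for illustration.
PROMPT_TOKENS = 512
OUTPUT_TOKENS = 256

for name in BENCHMARKS:
    total = estimate_seconds(name, PROMPT_TOKENS, OUTPUT_TOKENS)
    print(f"{name}: about {total:.1f} s for {PROMPT_TOKENS} prompt + {OUTPUT_TOKENS} output tokens")

# Ratio of the two generation speeds reported above.
speedup = BENCHMARKS["Q4KM"]["generation_tps"] / BENCHMARKS["F16"]["generation_tps"]
print(f"Q4KM generates {speedup:.2f}x faster than F16")
```

For this workload the estimate comes out at roughly 4.6 seconds for Q4KM versus 12.5 seconds for F16. Nearly all of that time is generation, so F16's faster prompt processing barely changes the total.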

Comparing the Powerhouse: Llama3 70B on NVIDIA RTX 4000 Ada 20GB

Sadly, there’s no data available for Llama3 70B on the RTX 4000 Ada 20GB - we're left to speculate as to why! It's possible that:

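- Memory Constraints: This is the most likely explanation. With 70 billion parameters, the F16 weights alone need about 140GB (2 bytes per parameter), and even at 4 bits per parameter they need about 35GB, well beyond the card's 20GB.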

- Software Compatibility: There might be compatibility issues between the 70B model and the existing llama.cpp software used for running these LLMs.

- Model Tuning: Perhaps the run settings for the 70B model, such as how much of the model is offloaded to the GPU, haven't been tuned for the RTX 4000 Ada 20GB, leading to results too poor to report.
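The memory explanation is easy to check with back-of-the-envelope arithmetic. The sketch below estimates the size of the weights alone and compares it with the card's 20GB. The 4.8 bits per weight used for Q4KM is an assumption (4-bit formats also store scaling metadata, so they average somewhat above 4 bits), and the estimate leaves out the extra memory needed for context and activations.

```python
GPU_MEMORY_GB = 20  # RTX 4000 Ada 20GB, per the article

# Approximate bits stored per weight. The Q4KM value is an assumption:
# 4-bit formats carry scale metadata, so they average a little above 4 bits.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.8}


def weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


for params in (8, 70):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weights_gb(params, bits)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"Llama3 {params}B {fmt}: ~{size:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions both 8B variants fit on the card, while neither 70B variant does, which matches the pattern of available data.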

Performance Analysis: A Deeper Dive into the Numbers

We can glean some valuable insights even without the Llama3 70B data.

Quantization: The Key to Speeding Up LLMs

- Quantization refers to reducing the numerical precision of a model's weights, which makes the model smaller and usually faster to run. You can think of it like measuring with a ruler that has coarser markings - each reading is less precise, but it takes less space to write down.

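- Q4KM (written Q4_K_M in llama.cpp) is a 4-bit quantization method. It stores each weight in roughly a quarter of the space F16 uses, trading some accuracy for a significant performance boost in token generation.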

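- F16 is not really quantization at all: it stores each weight as a 16-bit floating-point number. It serves as the higher-precision baseline here, at the cost of a larger model and slower token generation.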
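To make the idea concrete, here is a toy sketch of 4-bit quantization: a block of weights is stored as small integers plus one shared scale factor. This is a simplified illustration, not the actual Q4KM scheme used by llama.cpp, which is more elaborate. The function names and the sample weights are hypothetical.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to small signed integers that share one scale factor."""
    max_level = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    largest = max(abs(v) for v in values)
    scale = largest / max_level if largest else 1.0
    levels = [round(v / scale) for v in values]
    return scale, levels


def dequantize_block(scale: float, levels: list[int]) -> list[float]:
    """Recover approximate floats from the stored integers."""
    return [scale * q for q in levels]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
scale, levels = quantize_block(weights)
restored = dequantize_block(scale, levels)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))

print("stored integers:", levels)
print(f"largest rounding error: {worst_error:.3f}")
```

Each stored integer needs only 4 bits instead of 16, and the rounding error per weight is at most half the scale factor. That small loss of precision is the accuracy cost that quantization trades for size and speed.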

Practical Recommendations for Use Cases

- Llama3 8B Q4KM: For applications where speed is paramount (e.g., real-time chatbots), the Q4KM quantization of the Llama3 8B model is a fantastic choice.

- Llama3 8B F16: If accuracy matters more than speed and you can accept 20.85 tokens/second, the F16 version of the Llama3 8B model might be a good option.

Conclusion: The RTX 4000 Ada 20GB - A Promising Start for Local LLMs

While the lack of Llama3 70B data forces us to draw conclusions from only the 8B model, the results are promising. The RTX 4000 Ada 20GB delivers solid performance for local LLM inference with the smaller Llama3 8B model, reaching 58.59 tokens/second with Q4KM.

As the world of LLMs grows, the demand for efficient local inference will only increase. The RTX 4000 Ada 20GB is a strong contender for models in the 8B class; whether it can handle much larger models such as Llama3 70B remains an open question without benchmark data.

FAQ:

What is an LLM?

A large language model (LLM) is a type of artificial intelligence that can understand and generate human-like text. Think of it as a very smart computer program that can write stories, translate languages, and answer your questions. LLMs like Llama3 are trained on massive amounts of data to learn how to communicate effectively.

How does quantization affect the performance of LLMs?

Quantization is a technique used to reduce the size and improve the speed of LLMs. Imagine you have a very detailed map, and to make it easier to carry around, you simplify it by removing some of the small details. That's what quantization does - it simplifies the information in the model, making it faster but sometimes sacrificing some accuracy.

What is the difference between token generation and processing?

Token generation is the process of producing the output, one token (a word or piece of a word) at a time. Processing is the step before that, where the LLM reads and analyzes the tokens of your input prompt. Think of processing as reading a question and token generation as writing out the answer word by word. Processing is faster because all prompt tokens can be handled in parallel, while each generated token has to wait for the previous one.

What are the advantages of running LLMs locally?

Local LLMs offer several advantages:

- Privacy: Your data stays on your device, so you don't have to worry about it being sent to the cloud.

- Offline Access: You can use LLMs even when you're not connected to the internet.

- Faster Response Times: No lag caused by network delays, although generation speed still depends on your hardware.

- More Control: You can customize and modify LLMs to suit your specific needs.

Keywords:

Local LLMs, Llama3, RTX 4000 Ada, Token Generation, Quantization, Q4KM, F16, Performance Benchmark, GPU Inference, LLM Performance, LLM Speed, LLM Comparison, Model Optimization