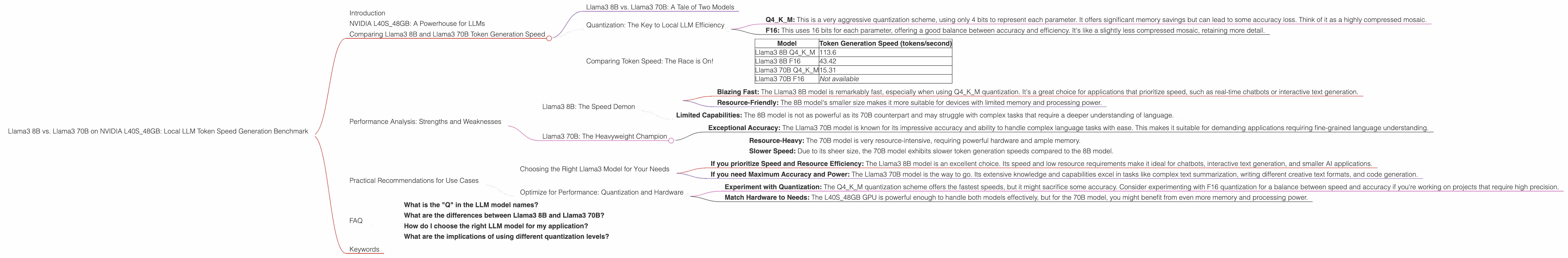

Llama3 8B vs. Llama3 70B on NVIDIA L40S 48GB: Local LLM Token Speed Generation Benchmark

Introduction

The world of Large Language Models (LLMs) is abuzz with excitement, and for good reason! These powerful algorithms can do everything from writing creative text to translating languages and generating code. But with so many different models and hardware options available, choosing the right combination for your needs can be a daunting task.

This article dives deep into the performance of two popular Llama3 models, the 8B and 70B variants, running locally on an NVIDIA L40S 48GB GPU. We'll analyze their token generation speeds, uncover their strengths and weaknesses, and provide practical recommendations for using them in your projects.

Buckle up, because we're about to embark on a thrilling journey through the heart of local LLM acceleration!

NVIDIA L40S 48GB: A Powerhouse for LLMs

The NVIDIA L40S is a beast of a card, packed with 48GB of GDDR6 memory and built on NVIDIA's Ada Lovelace architecture. It's designed to tackle demanding workloads, including AI training and inference. But how does it measure up when it comes to running LLMs? Let's find out!

Comparing Llama3 8B and Llama3 70B Token Generation Speed

Llama3 8B vs. Llama3 70B: A Tale of Two Models

The Llama3 8B and 70B models are two popular choices for local LLM deployment. The 8B model is relatively lightweight, making it ideal for resource-constrained devices, while the 70B model offers significantly higher accuracy and capabilities, but demands more power and memory.

Quantization: The Key to Local LLM Efficiency

To run these LLMs locally, we'll use a technique called quantization. Quantization is like compressing a large model into a smaller, more manageable size. Imagine it like taking a huge painting and turning it into a mosaic – you lose some detail but gain efficiency!

Essentially, we're reducing the number of bits used to represent each parameter in the model, which shrinks the memory footprint and speeds up processing. In this benchmark, we'll focus on two formats:

- Q4_K_M: An aggressive quantization scheme from llama.cpp's k-quant family that stores most weights in about 4 bits. It offers significant memory savings but can lead to some accuracy loss. Think of it as a highly compressed mosaic.

- F16: Half precision, using 16 bits for each parameter. This is the unquantized baseline in this benchmark: it retains more detail than Q4_K_M at roughly three to four times the memory. It's like a much less compressed mosaic.
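To make the memory effect of bit width concrete, here is a minimal sketch that estimates the size of the weights alone as parameters × bits per weight ÷ 8. The parameter counts are the nominal ones implied by the model names, and the 4.8 bits per weight used for Q4_K_M is an assumed average for illustration, not a figure from this benchmark.

```python
# Rough weight-memory estimate: parameters * bits per weight / 8 bytes.
# The Q4_K_M bits-per-weight value is an assumption, not a measurement.

GPU_MEMORY_GB = 48  # L40S memory capacity, as stated in the article

# Nominal parameter counts implied by the model names (assumption).
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# F16 is exactly 16 bits; 4.8 is an assumed average for Q4_K_M.
BITS_PER_WEIGHT = {"Q4_K_M": 4.8, "F16": 16.0}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def estimate_all() -> dict:
    """Map (model, format) to the estimated weight size in GB."""
    return {
        (model, fmt): weight_memory_gb(n, bits)
        for model, n in PARAMS.items()
        for fmt, bits in BITS_PER_WEIGHT.items()
    }


if __name__ == "__main__":
    for (model, fmt), gb in estimate_all().items():
        verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
        print(f"{model} {fmt}: ~{gb:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, only the 70B model at F16 (about 140 GB) exceeds the card's 48 GB. The estimate covers weights only; the KV cache and activations need additional memory on top, so a configuration that barely fits on paper may still be tight in practice.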

Comparing Token Speed: The Race is On!

Let's examine the token generation speeds of these models:

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 113.6 |
| Llama3 8B F16 | 43.42 |
| Llama3 70B Q4_K_M | 15.31 |
| Llama3 70B F16 | Not available |
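The relative differences are easier to see as ratios. The short sketch below computes them from the three measured rows of the table; the dictionary layout and the `speedup` helper are illustrative names, not part of any benchmark tool.

```python
# Tokens/second figures copied from the table above.
# The 70B F16 row is omitted because no measurement is available.
SPEEDS = {
    ("8B", "Q4_K_M"): 113.6,
    ("8B", "F16"): 43.42,
    ("70B", "Q4_K_M"): 15.31,
}


def speedup(fast: tuple, slow: tuple) -> float:
    """Return how many times faster the `fast` configuration is than `slow`."""
    return SPEEDS[fast] / SPEEDS[slow]


if __name__ == "__main__":
    pairs = [
        (("8B", "Q4_K_M"), ("8B", "F16")),
        (("8B", "Q4_K_M"), ("70B", "Q4_K_M")),
        (("8B", "F16"), ("70B", "Q4_K_M")),
    ]
    for fast, slow in pairs:
        print(f"{' '.join(fast)} vs {' '.join(slow)}: {speedup(fast, slow):.2f}x")
```

With these figures, the 8B model at Q4_K_M comes out at about 2.6 times the speed of 8B at F16 and about 7.4 times the speed of 70B at Q4_K_M.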

Key Takeaways:

- Smaller Model, Faster Speed: At Q4_K_M, the Llama3 8B model generates 113.6 tokens/second against 15.31 for the 70B model, roughly 7.4 times faster. This is unsurprising: every generated token requires a pass over all of the model's weights, and the 8B model has far fewer of them.

- Quantization Trade-offs: On the 8B model, Q4_K_M generates tokens about 2.6 times faster than F16 (113.6 vs. 43.42 tokens/second). No F16 figure is available for the 70B model, so the same comparison can't be made there. F16 keeps the weights at half precision, giving up speed in exchange for accuracy.

- Model Size vs. Performance: The Llama3 8B model with Q4_K_M is the clear winner on speed. Weigh that against the potential accuracy loss from 4-bit quantization compared to F16, and against the smaller model's reduced capability compared to the 70B model.

Performance Analysis: Strengths and Weaknesses

Llama3 8B: The Speed Demon

Strengths:

- Blazing Fast: The Llama3 8B model is remarkably fast, reaching 113.6 tokens/second with Q4_K_M quantization. It's a great choice for applications that prioritize speed, such as real-time chatbots or interactive text generation.

- Resource-Friendly: The 8B model's smaller size makes it more suitable for devices with limited memory and processing power.
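To put "real-time" into concrete terms, the sketch below converts the benchmark's tokens/second figures into the time needed to generate a reply. The 256-token reply length is a hypothetical example, and the estimate ignores prompt processing and any network or application overhead.

```python
# Tokens/second figures from the benchmark table.
SPEEDS = {
    "Llama3 8B Q4_K_M": 113.6,
    "Llama3 8B F16": 43.42,
    "Llama3 70B Q4_K_M": 15.31,
}

REPLY_TOKENS = 256  # hypothetical reply length, chosen for illustration


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady rate, excluding prompt processing."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    for name, tps in SPEEDS.items():
        secs = generation_seconds(REPLY_TOKENS, tps)
        print(f"{name}: ~{secs:.1f} s for a {REPLY_TOKENS}-token reply")
```

At these rates, a 256-token reply takes a little over 2 seconds on the 8B model at Q4_K_M and nearly 17 seconds on the 70B model at Q4_K_M, which is the practical difference a chatbot user would notice.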

Weaknesses:

- Limited Capabilities: The 8B model is not as powerful as its 70B counterpart and may struggle with complex tasks that require a deeper understanding of language.

Llama3 70B: The Heavyweight Champion

Strengths:

- Exceptional Accuracy: The Llama3 70B model is known for its impressive accuracy and ability to handle complex language tasks with ease. This makes it suitable for demanding applications requiring fine-grained language understanding.

Weaknesses:

- Resource-Heavy: The 70B model is very resource-intensive, requiring powerful hardware and ample memory.

- Slower Speed: Due to its sheer size, the 70B model exhibits slower token generation speeds compared to the 8B model.

Practical Recommendations for Use Cases

Choosing the Right Llama3 Model for Your Needs

- If you prioritize Speed and Resource Efficiency: The Llama3 8B model is an excellent choice. Its speed and low resource requirements make it ideal for chatbots, interactive text generation, and smaller AI applications.

- If you need Maximum Accuracy and Power: The Llama3 70B model is the way to go. Its extensive knowledge and capabilities excel in tasks like complex text summarization, creative writing in varied formats, and code generation.
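One way to turn this choice into a rule is to pick the most capable configuration that still meets a minimum speed. The sketch below is a hypothetical helper, not an established method: the capability ordering (70B first, then 8B at F16, then 8B at Q4_K_M) is an assumption based on the qualitative claims in this article, since the benchmark measures speed only.

```python
from typing import Optional, Tuple

# (model, format, tokens/second) from the benchmark table, ordered from
# most to least capable. The ordering itself is an assumption of this sketch.
CANDIDATES = [
    ("Llama3 70B", "Q4_K_M", 15.31),
    ("Llama3 8B", "F16", 43.42),
    ("Llama3 8B", "Q4_K_M", 113.6),
]


def pick_config(min_tokens_per_second: float) -> Optional[Tuple[str, str]]:
    """Return the first (most capable) configuration that meets the speed floor.

    Returns None when no measured configuration is fast enough.
    """
    for model, fmt, tps in CANDIDATES:
        if tps >= min_tokens_per_second:
            return (model, fmt)
    return None


if __name__ == "__main__":
    for floor in (10, 30, 100, 200):
        print(f"need >= {floor} tok/s -> {pick_config(floor)}")
```

A batch summarization job that tolerates 10 tokens/second would land on the 70B model, while an interactive application that needs 100 tokens/second would fall through to the 8B model at Q4_K_M.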

Optimize for Performance: Quantization and Hardware

- Experiment with Quantization: Q4_K_M offers the fastest speeds, but it might sacrifice some accuracy. If your project requires high precision and the model fits in memory, consider running at F16 and accepting the lower speed.

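- Match Hardware to Needs: The L40S 48GB GPU runs both 8B configurations and the Q4_K_M build of the 70B model. At F16, the 70B weights alone come to roughly 140GB (70 billion parameters at 2 bytes each), far more than the card's 48GB, which is the likely reason no F16 result is listed for it. To run the 70B model at higher precision, you'll need more memory, for example by spreading the model across several GPUs.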

FAQ

What is the "Q" in the LLM model names?

The "Q" refers to quantization, a method that reduces the size of LLM models by reducing the number of bits per parameter. This results in smaller models that can run more efficiently on devices with limited resources, like laptops and mobile devices.

What are the differences between Llama3 8B and Llama3 70B?

The Llama3 8B model is smaller and faster but has limited capabilities. The Llama3 70B model is larger and more powerful but requires more resources to run.

How do I choose the right LLM model for my application?

Consider your requirements for accuracy, speed, and resource availability. If you prioritize speed and efficiency, choose the 8B model. If you need the highest accuracy and capabilities, go with the 70B model.

What are the implications of using different quantization levels?

Lower-bit formats (like Q4_K_M) result in smaller, faster models but may sacrifice some accuracy. Higher-precision formats (like F16) preserve more accuracy but result in larger models with slower token generation.

Keywords

Llama3, 8B, 70B, LLM, NVIDIA, L40S 48GB, GPU, Token Speed, Generation, Benchmark, Quantization, Q4_K_M, F16, Model Size, Performance, Accuracy, Speed, Resource Efficiency, Use Cases, Applications, Chatbot, Text Generation, Summarization, Code Generation, Hardware, Optimization