Llama3 8B vs. Llama2 7B on Apple M3 Max: Local LLM Token Speed Generation Benchmark

Introduction to LLM Token Speed and the Apple M3 Max

It's a wild world out there, and the world of Large Language Models (LLMs) is especially chaotic. Every day, a new one pops up, claiming to be the fastest, most powerful, and most intelligent AI around. 🤯 But how do we actually compare these LLMs and figure out which one is truly the best fit for our needs?

One key factor is token speed. This refers to how quickly an LLM can process and generate text, measured in tokens/second. Think of it as the LLM's typing speed, except instead of typing words, it's spitting out those magical tokens that make up language.
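To make the metric concrete, here is a minimal sketch of how a tokens/second figure is computed. The function name and the sample numbers are hypothetical and are not taken from the benchmark.

```python
def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Throughput for a run that handled token_count tokens in elapsed_seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


# Hypothetical run: 128 tokens generated in 2.5 seconds.
print(tokens_per_second(128, 2.5))  # 51.2
```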

And if you're a developer looking to run these LLMs locally on your own machine, capable hardware is essential. That's where the Apple M3 Max comes in, a beast of a chip whose integrated GPU can handle those demanding LLM workloads. 💪

In this article, we'll dive deep into the world of token speeds, comparing the popular Llama 3 8B and Llama 2 7B models on the Apple M3 Max. We'll analyze their performance side-by-side, highlighting their strengths and weaknesses, and helping you make the best decision for your projects.

So, buckle up and get ready to learn how these LLMs perform on the M3 Max, because things are about to get real! 🚀

Apple M3 Max Token Speed Generation: Llama3 8B vs. Llama2 7B

Let's get down to the nitty-gritty. We'll compare the performance of Llama 3 8B and Llama 2 7B on the Apple M3 Max, focusing on token generation speed, which is how quickly they can produce new text. We'll also look at processing speed, which is how quickly they work through the input text before generation starts, as that also affects the overall experience.

Apple M3 Max Token Speed Generation: Llama3 8B

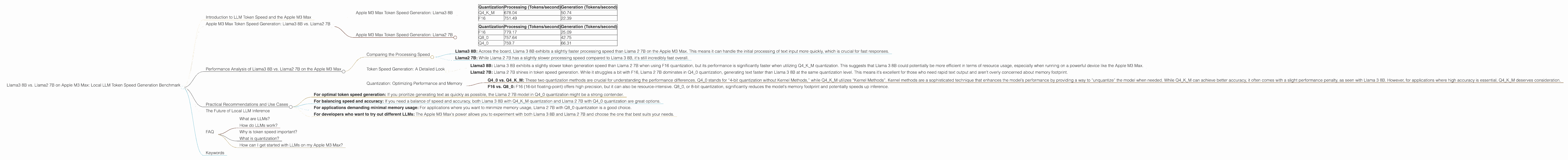

Here is the token speed generation performance of Llama 3 8B on the Apple M3 Max for various quantization levels:

| Quantization | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|
| Q4_K_M | 678.04 | 50.74 |
| F16 | 751.49 | 22.39 |

Apple M3 Max Token Speed Generation: Llama2 7B

Here's the Llama 2 7B performance on the M3 Max for different quantization levels:

| Quantization | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|
| F16 | 779.17 | 25.09 |
| Q8_0 | 757.64 | 42.75 |
| Q4_0 | 759.7 | 66.31 |

Note: Unfortunately, Llama3 70B F16 performance on the M3 Max is not available.

Performance Analysis of Llama3 8B vs. Llama2 7B on the Apple M3 Max

Comparing the Processing Speed

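- Llama3 8B: Llama 3 8B processes input slightly slower than Llama 2 7B on the Apple M3 Max: 751.49 vs. 779.17 tokens/second at F16, and 678.04 tokens/second at Q4_K_M vs. 759.7 at Q4_0. It still works through several hundred tokens per second, so it remains incredibly fast overall.

- Llama2 7B: Llama 2 7B has the faster processing speed at every quantization level tested (757.64 to 779.17 tokens/second). This means it can handle the initial processing of text input more quickly, which is crucial for fast responses. The gap is small at F16 (about 4%) and larger between the 4-bit variants (about 12%).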

Token Speed Generation: A Detailed Look

- Llama3 8B: At F16, Llama 3 8B generates slightly slower than Llama 2 7B (22.39 vs. 25.09 tokens/second). Switching to Q4_K_M more than doubles its speed to 50.74 tokens/second, although that is still below Llama 2 7B at Q4_0 (66.31 tokens/second). Part of that gap is expected: Llama 3 8B has more parameters, and Q4_K_M stores slightly more bits per weight than Q4_0.

- Llama2 7B: Llama 2 7B shines in token speed generation. It is slowest at F16 (25.09 tokens/second), faster at Q8_0 (42.75), and fastest at Q4_0 (66.31), the highest generation speed in this benchmark. Keep in mind that F16 is the only quantization level measured for both models, so the 4-bit comparison is Q4_0 against Q4_K_M rather than like for like. Q4_0 is a good fit for those who need rapid text output and can accept the accuracy loss of a simpler 4-bit format.
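To put numbers on those gaps, here is a small sketch that computes speed ratios from the generation figures in the tables above. The dictionary layout and the `speedup` helper are illustrative names chosen for this example, not part of any benchmark tool.

```python
# Generation speeds in tokens/second, copied from the benchmark tables.
GENERATION_TPS = {
    ("Llama 3 8B", "Q4_K_M"): 50.74,
    ("Llama 3 8B", "F16"): 22.39,
    ("Llama 2 7B", "F16"): 25.09,
    ("Llama 2 7B", "Q8_0"): 42.75,
    ("Llama 2 7B", "Q4_0"): 66.31,
}


def speedup(faster: tuple, slower: tuple) -> float:
    """How many times faster the first configuration generates than the second."""
    return GENERATION_TPS[faster] / GENERATION_TPS[slower]


comparisons = [
    (("Llama 2 7B", "F16"), ("Llama 3 8B", "F16")),
    (("Llama 2 7B", "Q4_0"), ("Llama 3 8B", "Q4_K_M")),
    (("Llama 3 8B", "Q4_K_M"), ("Llama 3 8B", "F16")),
    (("Llama 2 7B", "Q4_0"), ("Llama 2 7B", "F16")),
]
for faster, slower in comparisons:
    print(f"{' '.join(faster)} vs. {' '.join(slower)}: {speedup(faster, slower):.2f}x")
```

The first two ratios compare the models with each other (about 1.12x at F16 and 1.31x for the 4-bit formats), while the last two show how much each model gains from 4-bit quantization relative to its own F16 run.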

Quantization: Optimizing Performance and Memory

- Q4_0 vs. Q4_K_M: These two 4-bit formats are crucial for understanding the performance differences. Both names come from llama.cpp's quantization types. Q4_0 is the older, simpler scheme: weights are grouped into blocks of 32, and each block stores 4-bit values plus a single shared scale. Q4_K_M belongs to the newer "k-quant" family, which uses larger super-blocks with finer-grained scaling information and, in the M ("medium") variant, keeps some tensors at higher precision. Q4_K_M generally preserves accuracy better, at the cost of a slightly larger model, which is consistent with the lower generation speed seen with Llama 3 8B. For applications where high accuracy is essential, Q4_K_M deserves consideration.

- F16 vs. Q8_0: F16 (16-bit floating-point) offers high precision, but it is also resource-intensive. Q8_0, or 8-bit quantization, roughly halves the model's memory footprint and speeds up generation: for Llama 2 7B, from 25.09 to 42.75 tokens/second. Processing speed barely changes between the two (779.17 vs. 757.64 tokens/second).
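The memory savings can be estimated with a rough sketch like the one below. It assumes the llama.cpp block layout for Q8_0 and Q4_0 (32 weights sharing one 16-bit scale) and a nominal 7 billion parameters. It counts weights only and ignores the KV cache and runtime overhead, so treat the results as ballpark figures. Q4_K_M is left out because it mixes tensor types and has no single bits-per-weight value.

```python
BLOCK_SIZE = 32  # weights per block (assumed Q8_0 / Q4_0 layout)
SCALE_BITS = 16  # one half-precision scale stored per block


def bits_per_weight(weight_bits: int, blocked: bool = True) -> float:
    """Average storage cost per weight, including the shared block scale."""
    if not blocked:
        return float(weight_bits)
    return (BLOCK_SIZE * weight_bits + SCALE_BITS) / BLOCK_SIZE


def weights_gib(n_params: float, bpw: float) -> float:
    """Approximate size of the weights alone, in GiB."""
    return n_params * bpw / 8 / 2**30


N_PARAMS = 7e9  # nominal "7B"; the exact parameter count differs slightly

for name, bpw in [
    ("F16", bits_per_weight(16, blocked=False)),
    ("Q8_0", bits_per_weight(8)),
    ("Q4_0", bits_per_weight(4)),
]:
    print(f"{name}: {bpw:.1f} bits/weight, ~{weights_gib(N_PARAMS, bpw):.1f} GiB")
```

Under these assumptions the weights come to roughly 13 GiB at F16, 7 GiB at Q8_0, and 3.7 GiB at Q4_0. Smaller weights mean less data to read for every generated token, which fits the pattern in the tables where generation speed rises as the format gets smaller.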

Practical Recommendations and Use Cases

So, which LLM reigns supreme on the Apple M3 Max? It depends on your needs!

Here's a quick breakdown to help you decide:

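- For optimal token speed generation: If you prioritize generating text as quickly as possible, Llama 2 7B with Q4_0 quantization is the fastest configuration in this benchmark at 66.31 tokens/second.

- For balancing speed and accuracy: If you need a balance of speed and accuracy, Llama 3 8B with Q4_K_M quantization (50.74 tokens/second) and Llama 2 7B with Q8_0 quantization (42.75 tokens/second) are both good options. Each gives up some generation speed in exchange for a less aggressive quantization scheme.

- For applications demanding minimal memory usage: The 4-bit formats have the smallest footprint, and Llama 2 7B with Q4_0 quantization is the most compact configuration tested. Q8_0 needs roughly twice as much memory as Q4_0, but still only about half as much as F16.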

- For developers who want to try out different LLMs: The Apple M3 Max's power allows you to experiment with both Llama 3 8B and Llama 2 7B and choose the one that best suits your needs.

The Future of Local LLM Inference

This benchmark provides a glimpse into the exciting future of local LLM inference. With the Apple M3 Max and other powerful hardware, running these sophisticated models locally is becoming increasingly feasible.

As hardware continues to advance and LLMs become even more robust, we can expect faster processing and generation speeds, unlocking new possibilities for developers and researchers.

FAQ

What are LLMs?

LLMs are Large Language Models, a type of artificial intelligence that excels at understanding and generating human-like text. Imagine a super-powered chatbot that can write stories, translate languages, and answer your questions.

How do LLMs work?

LLMs are trained on massive datasets of text to learn patterns in language, typically by learning to predict the next token. They use this knowledge to generate new text, translate between languages, summarize information, and perform other language-related tasks.

Why is token speed important?

Token speed is crucial because it dictates how quickly an LLM can process and generate text. Faster token speeds mean faster response times and a more seamless user experience.
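As a rough illustration, the two speeds can be combined into a response-time estimate. This sketch assumes both speeds stay constant for the whole request, which is a simplification, and the prompt and output lengths are hypothetical. The speeds themselves are taken from the benchmark tables.

```python
def response_seconds(
    prompt_tokens: int,
    output_tokens: int,
    processing_tps: float,
    generation_tps: float,
) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# Hypothetical request: a 512-token prompt and a 256-token reply.
configs = {
    "Llama 2 7B Q4_0": (759.7, 66.31),
    "Llama 3 8B Q4_K_M": (678.04, 50.74),
}
for name, (processing_tps, generation_tps) in configs.items():
    seconds = response_seconds(512, 256, processing_tps, generation_tps)
    print(f"{name}: ~{seconds:.1f} s")
```

Because generation is more than ten times slower than processing in every configuration tested, the length of the reply usually dominates the total time.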

What is quantization?

Quantization is a technique used to reduce the size of a model without sacrificing too much accuracy, by storing its weights with fewer bits each (for example, 8 or 4 bits instead of 16). Think of it like shrinking a giant photo without losing too much detail.
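The idea can be sketched in a few lines. This is a simplified symmetric round-to-nearest scheme written for illustration, not the exact layout of Q4_0, Q8_0, or Q4_K_M, and the function names and sample values are made up for the example.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.50, 0.33, 0.07]  # made-up sample weights
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
worst = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"max error {worst:.4f}")
```

Each weight is stored as a small integer, and the shared scale turns it back into an approximate float at inference time. The rounding error is the accuracy cost that quantization trades for a smaller model.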

How can I get started with LLMs on my Apple M3 Max?

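You can find various resources online that guide you through setting up and running LLMs on the Apple M3 Max. The quantization formats discussed in this article (Q4_0, Q8_0, Q4_K_M) are llama.cpp formats, so llama.cpp, which supports Apple Silicon GPUs through Metal, is a natural starting point. Beyond that, start with the documentation provided by the specific LLM you're interested in.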

Keywords

LLM, Llama 3 8B, Llama 2 7B, Apple M3 Max, Token Speed, Quantization, F16, Q4_0, Q8_0, Q4_K_M, Processing Speed, Generation Speed, Local Inference, AI, Machine Learning, Performance, Benchmark, GPU, Text Generation, Development, Resources, Comparison, Recommendations, Use Cases, Future of LLM