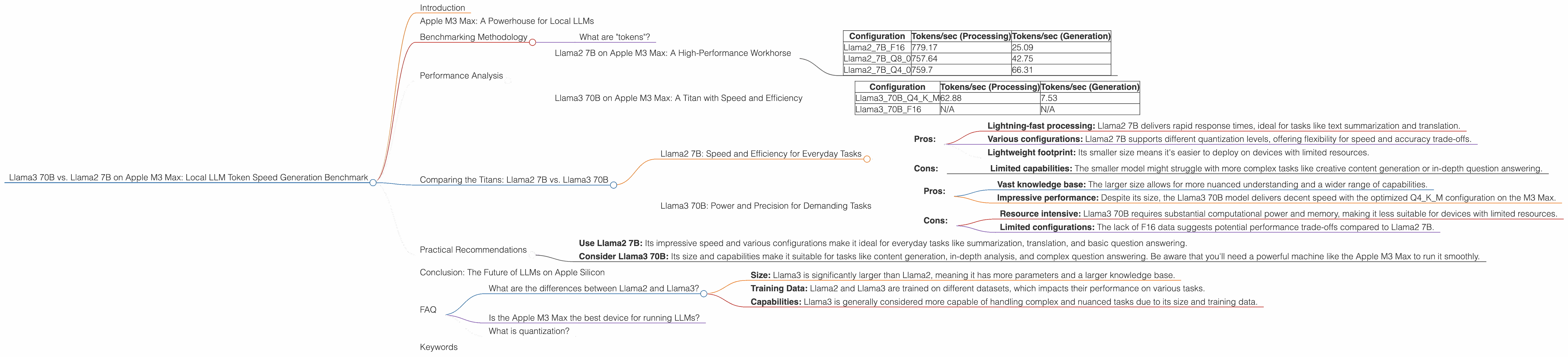

Llama3 70B vs. Llama2 7B on Apple M3 Max: Local LLM Token Generation Speed Benchmark

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these LLMs can be computationally expensive, and often requires powerful hardware like a dedicated GPU.

This article looks at local LLM deployment on the Apple M3 Max, a chip designed for demanding tasks like video editing and 3D modeling. We'll compare two popular LLMs on it: Llama2 7B (a smaller, more efficient model) and Llama3 70B (a larger, more capable model). We'll analyze prompt processing and token generation speeds at different precision and quantization levels, highlighting the strengths, weaknesses, and potential use cases for each model. Buckle up, it's going to be a thrilling ride through the world of LLM token generation!

Apple M3 Max: A Powerhouse for Local LLMs

The Apple M3 Max is a high-performance chip designed for demanding tasks, and it's quickly becoming a popular choice for developers and enthusiasts wanting to run LLMs locally. Its high memory bandwidth, unified memory shared between CPU and GPU, and many GPU cores give it the muscle to keep large model weights in memory and stream them quickly during inference.

Benchmarking Methodology

This benchmark focuses on tokens per second (tokens/sec), reported in two ways: processing speed, which is how fast the model reads the input prompt, and generation speed, which is how fast it produces new output tokens. Higher tokens/sec means faster processing and quicker responses.
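
As a rough illustration of how a tokens/sec figure can be obtained, the sketch below times one generation call and divides the token count by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model call; it is not part of llama.cpp or of the benchmarks cited in this article.

```python
import time


def tokens_per_second(n_tokens: int, elapsed_seconds: float) -> float:
    """Throughput: tokens produced divided by wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return n_tokens / elapsed_seconds


def measure(generate, prompt: str, max_tokens: int) -> float:
    """Time one call to `generate` and return its tokens/sec."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return tokens_per_second(len(tokens), elapsed)


def fake_generate(prompt: str, max_tokens: int) -> list[str]:
    """Hypothetical stand-in for a model: emits one dummy token per millisecond."""
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)
        tokens.append(f"tok{i}")
    return tokens


if __name__ == "__main__":
    print(f"{measure(fake_generate, 'Hello', 50):.1f} tokens/sec")
```

Real benchmarks typically average over several runs and report prompt processing and generation separately, as the tables in this article do.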

What are "tokens"?

Imagine you're building a Lego structure: each piece is a "token." In language models, a token is a word, a punctuation mark, or a part of a word, since longer or rarer words are often split into smaller chunks. The model reads and writes text one token at a time, so tokens are the basic unit for measuring its speed.

We've used data from "Performance of llama.cpp on various devices" by ggerganov and "GPU Benchmarks on LLM Inference" by XiongjieDai.

Performance Analysis

Llama2 7B on Apple M3 Max: A High-Performance Workhorse

The Llama2 7B model performs very well on the Apple M3 Max, helped by its small size. Here's a breakdown:

Quantization and Precision:

- F16: 16-bit floating point, the highest precision tested. It preserves accuracy best but uses the most memory and generates tokens the slowest.

- Q8_0: 8-bit quantization. It provides a good balance between accuracy and speed.

- Q4_0: 4-bit quantization. It prioritizes speed and memory savings over accuracy, making it suitable for real-time applications.

Here's a table summarizing Llama2 7B's performance on the M3 Max:

| Configuration | Processing (tokens/sec) | Generation (tokens/sec) |
|---|---|---|
| Llama2 7B F16 | 779.17 | 25.09 |
| Llama2 7B Q8_0 | 757.64 | 42.75 |
| Llama2 7B Q4_0 | 759.7 | 66.31 |

Observations:

- Llama2 7B demonstrates impressive processing speed that barely changes with quantization: all three configurations land between roughly 758 and 779 tokens/sec. This makes it ideal for tasks like summarization or text translation, where long inputs must be read quickly.

- Token generation is much slower than processing, and here quantization matters: speed rises from 25.09 tokens/sec at F16 to 42.75 at Q8_0 and 66.31 at Q4_0.
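
To put the effect of quantization on generation speed into numbers, here is a small sketch that expresses each configuration as a multiple of the F16 baseline. The speeds are copied from the Llama2 7B table above; the function name is only illustrative.

```python
# Generation speeds (tokens/sec) for Llama2 7B on the M3 Max, from the table above.
GENERATION_TPS = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}


def speedup_vs_baseline(speeds: dict[str, float], baseline: str) -> dict[str, float]:
    """Return each configuration's speed as a multiple of the baseline's speed."""
    base = speeds[baseline]
    return {name: tps / base for name, tps in speeds.items()}


if __name__ == "__main__":
    for name, ratio in speedup_vs_baseline(GENERATION_TPS, "F16").items():
        print(f"{name}: {ratio:.2f}x")
    # Prints: F16: 1.00x, Q8_0: 1.70x, Q4_0: 2.64x (one per line)
```

In other words, moving from F16 to Q4_0 makes generation about 2.6 times faster on this hardware, while processing speed stays essentially flat.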

Llama3 70B on Apple M3 Max: A Titan with Speed and Efficiency

The Llama3 70B model, a giant in the world of LLMs, shows remarkable performance considering its size and complexity. Let's delve into its performance on the M3 Max:

Quantization and Precision:

- Q4_K_M: A 4-bit "k-quant" format (medium variant) optimized for speed and memory efficiency.

- F16: Not available for Llama3 70B on the M3 Max.

Here's a table summarizing Llama3 70B's performance on the M3 Max:

| Configuration | Processing (tokens/sec) | Generation (tokens/sec) |
|---|---|---|
| Llama3 70B Q4_K_M | 62.88 | 7.53 |
| Llama3 70B F16 | N/A | N/A |

Observations:

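- The Llama3 70B model is far slower than Llama2 7B, but its results are still impressive given that it has ten times as many parameters. Its Q4_K_M configuration processes prompts at 62.88 tokens/sec and generates at 7.53 tokens/sec.

- No F16 data is available for Llama3 70B on the M3 Max. The most likely reason is memory rather than speed: 70 billion parameters at 16 bits each come to roughly 140 GB of weights, which exceeds the 128 GB maximum unified memory of the M3 Max.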
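
A quick back-of-the-envelope check shows why memory is a constraint for a 70B model. The sketch below estimates the size of the weights alone from the parameter count and a nominal bit width. It is an approximation under stated assumptions: real quantized formats also store per-block scales, so actual files are somewhat larger, and the KV cache and runtime overhead are ignored. The 128 GB figure is the largest unified-memory option for the M3 Max, not necessarily the configuration used in the cited benchmarks.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


# Largest unified-memory option for the M3 Max (assumption: not necessarily
# the configuration of the benchmarked machine).
M3_MAX_MAX_MEMORY_GB = 128

if __name__ == "__main__":
    for label, bits in [("F16", 16), ("8-bit", 8), ("4-bit", 4)]:
        size = weight_memory_gb(70e9, bits)
        fits = "fits" if size < M3_MAX_MAX_MEMORY_GB else "does not fit"
        print(f"70B at {label}: ~{size:.0f} GB ({fits} in {M3_MAX_MAX_MEMORY_GB} GB)")
```

At nominal widths this gives about 140 GB for F16, 70 GB for 8-bit, and 35 GB for 4-bit, which is why a 4-bit format is the practical choice for this model on a single M3 Max.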

Comparing the Titans: Llama2 7B vs. Llama3 70B

Both Llama2 7B and Llama3 70B show promise for local deployment on the Apple M3 Max. However, their different sizes and strengths lead to distinct use case scenarios.
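
To make the difference tangible, the sketch below turns the benchmark figures into an estimated response time: the time to read the prompt plus the time to generate the reply. The speeds come from the tables above; the workload (a 512-token prompt and a 256-token reply) is a hypothetical example, and the estimate ignores model loading and other overhead.

```python
# (prompt processing, generation) speeds in tokens/sec, from the tables above.
SPEEDS = {
    "Llama2 7B Q4_0": (759.7, 66.31),
    "Llama3 70B Q4_K_M": (62.88, 7.53),
}


def response_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


if __name__ == "__main__":
    # Hypothetical workload: a 512-token prompt and a 256-token reply.
    for name, (pp, tg) in SPEEDS.items():
        print(f"{name}: ~{response_seconds(512, 256, pp, tg):.1f} s")
    # Prints: Llama2 7B Q4_0: ~4.5 s, Llama3 70B Q4_K_M: ~42.1 s (one per line)
```

For this workload the smaller model answers in a few seconds, while the larger one takes most of a minute, which is the trade-off the following sections weigh against output quality.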

Llama2 7B: Speed and Efficiency for Everyday Tasks

- Pros:

  - Lightning-fast processing: Llama2 7B delivers rapid response times, ideal for tasks like text summarization and translation.

  - Various configurations: Llama2 7B supports different quantization levels, offering flexibility for speed and accuracy trade-offs.

  - Lightweight footprint: Its smaller size means it's easier to deploy on devices with limited resources.

- Cons:

  - Limited capabilities: The smaller model might struggle with more complex tasks like creative content generation or in-depth question answering.

Llama3 70B: Power and Precision for Demanding Tasks

- Pros:

  - Vast knowledge base: The larger size allows for more nuanced understanding and a wider range of capabilities.

  - Impressive performance: Despite its size, the Llama3 70B model delivers usable speed (7.53 tokens/sec generation) with the Q4_K_M configuration on the M3 Max.

- Cons:

  - Resource intensive: Llama3 70B requires substantial computational power and memory, making it less suitable for devices with limited resources.

  - Limited configurations: Only Q4_K_M results are available. At F16 the weights alone would need roughly 140 GB, more than the M3 Max's 128 GB maximum, so there is far less room to trade speed for precision than with Llama2 7B.

Practical Recommendations

For developers looking for a fast and efficient LLM:

- Use Llama2 7B: Its impressive speed and various configurations make it ideal for everyday tasks like summarization, translation, and basic question answering.

For developers working on complex tasks or requiring a vast knowledge base:

- Consider Llama3 70B: Its size and capabilities make it suitable for tasks like content generation, in-depth analysis, and complex question answering. Be aware that you'll need a powerful machine like the Apple M3 Max to run it smoothly.

Conclusion: The Future of LLMs on Apple Silicon

The Apple M3 Max is proving to be a powerful platform for running LLMs locally, offering impressive performance and efficiency for both smaller models like Llama2 7B and larger models like Llama3 70B. As these models continue to evolve and improve, expect even more exciting possibilities for local LLM deployment on Apple Silicon. The future of LLMs on Apple Silicon is bright, and it's just getting started!

FAQ

What are the differences between Llama2 and Llama3?

Llama2 and Llama3 are both large language models, but they differ in several key aspects:

- Size: Both families come in several sizes. The two models compared here differ by a factor of ten: Llama3 70B has about 70 billion parameters, while Llama2 7B has about 7 billion.

- Training Data: Llama3 is a newer generation, and Meta reports training it on a much larger dataset than Llama2, which impacts performance on various tasks.

- Capabilities: Llama3 is generally considered more capable of handling complex and nuanced tasks due to its size and training data.

Is the Apple M3 Max the best device for running LLMs?

The Apple M3 Max offers impressive performance for running LLMs, but it's not the only option. Other powerful GPUs and processors can also handle LLMs effectively. Ultimately, the best device for running an LLM depends on your budget, performance needs, and the specific model you choose.

What is quantization?

Quantization is a technique used to compress large language models, making them smaller and faster. It works by reducing the number of bits needed to represent each value in the model's weights, for example from 16 bits down to 8 or 4. Think of it like using smaller building blocks to build the same structure. This reduces the memory footprint and can increase generation speed, usually at the cost of a small loss in accuracy.
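
The idea can be sketched in a few lines. The example below applies simple symmetric quantization to one small block of weights: it stores one floating-point scale for the block and a small signed integer per weight. This is a simplified illustration, not the exact Q4_0, Q8_0, or Q4_K_M layouts used by llama.cpp, and the function names and sample weights are hypothetical.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric quantization of one block of floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit, 127 for 8-bit
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each value to the nearest integer step and clamp to the valid range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the stored scale and integers."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    weights = [0.7, -0.35, 0.1, 0.0]  # made-up sample values
    scale, quants = quantize_block(weights, bits=4)
    restored = dequantize_block(scale, quants)
    print("integers:", quants)
    print("restored:", [round(x, 3) for x in restored])
    print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Each restored weight is within half a quantization step of the original, which is the accuracy given up in exchange for storing 4 bits per weight instead of 16.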

Keywords

LLMs, Llama3, Llama2, Apple M3 Max, Token Speed, Generation, Processing, Quantization, F16, Q8_0, Q4_0, Q4_K_M, Local Deployment, Performance Benchmark, GPU, Bandwidth, Inference, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, Developer, Geek, Performance Analysis, Practical Recommendations, FAQ.