Is NVIDIA RTX A6000 48GB Powerful Enough for Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These powerful AI models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally on your own machine can be quite demanding, especially if you want to use the newest and most powerful models like Llama 3.

In this deep dive, we'll explore whether the NVIDIA RTX A6000 48GB, a popular choice for demanding tasks like machine learning, is up to the challenge of running Llama3 8B. We'll look at performance benchmarks, compare the 8B model with its larger 70B sibling, and provide practical recommendations for putting this combination to work for you.

So, let's dive into the data and see if the RTX A6000 48GB can handle Llama3 8B like a boss!

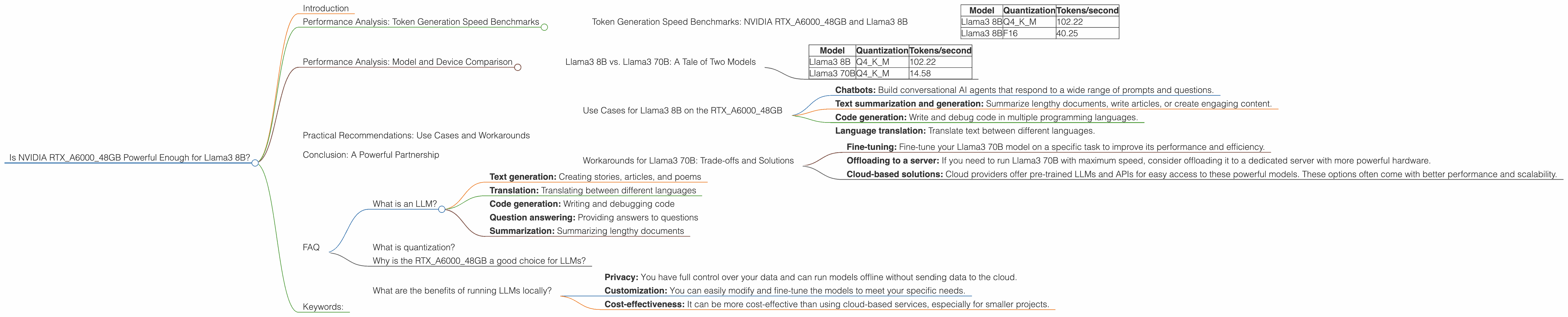

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 8B

The first key metric for assessing the efficiency of your system is token generation speed, which measures how quickly the model can produce new text.

| Model | Quantization | Tokens/second |
|---|---|---|
| Llama3 8B | Q4_K_M | 102.22 |
| Llama3 8B | F16 | 40.25 |

What does this tell us?

- Quantization: LLMs are often quantized to reduce their memory footprint and speed up inference. "Q4_K_M" is a 4-bit quantization format used by llama.cpp that stores each weight in roughly 4 to 5 bits, which shrinks the model to about a third of its F16 size while maintaining good output quality. "F16" stores each weight as a 16-bit half-precision floating point number and serves as the unquantized baseline here.

- Token generation speed: The RTX A6000 48GB generates 102.22 tokens per second with Llama3 8B using Q4_K_M quantization. This is about 2.5 times faster than F16, which generates 40.25 tokens per second.

Think of it this way: You're typing on a super-fast keyboard, with Q4_K_M making you a 2.5x faster typist compared to F16.
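The speedup figure follows directly from the two benchmark numbers. A minimal sketch of the arithmetic, where the dictionary and function names are hypothetical and chosen only for illustration:

```python
# Tokens/second figures copied from the benchmark table in this section.
BENCHMARKS = {
    "Q4_K_M": 102.22,
    "F16": 40.25,
}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


ratio = speedup(BENCHMARKS["Q4_K_M"], BENCHMARKS["F16"])
print(f"Q4_K_M is about {ratio:.2f}x faster than F16")  # prints 2.54x
```

The exact ratio is slightly above 2.5, which is why the text rounds it to "about 2.5 times".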

Performance Analysis: Model and Device Comparison

Llama3 8B vs. Llama3 70B: A Tale of Two Models

Now, let's compare the performance of Llama3 8B with its larger sibling, Llama3 70B. The 70B model is significantly more complex and requires more resources, so performance is going to be different.

| Model | Quantization | Tokens/second |
|---|---|---|
| Llama3 8B | Q4_K_M | 102.22 |
| Llama3 70B | Q4_K_M | 14.58 |

Key Takeaways:

- Performance difference: While the RTX A6000 48GB handles Llama3 8B with impressive speed, the 70B model is significantly slower, generating only 14.58 tokens per second with Q4_K_M quantization. That makes it about 7 times slower than Llama3 8B.

- Model complexity: The 70B model has roughly nine times as many parameters, and generating each token means reading essentially all of the model's weights from GPU memory, so generation speed drops sharply.

Think of it this way: It's like comparing a sports car to a semi-truck. The sports car (Llama3 8B) can zip around quickly, while the semi-truck (Llama3 70B) takes a longer, more deliberate approach.
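To make the gap more concrete, the tokens-per-second figures can be turned into waiting time for a single reply. The sketch below assumes a hypothetical 500-token reply and counts generation time only (prompt processing is ignored); the names are illustrative:

```python
# Tokens/second figures from the tables in this article (RTX A6000 48GB).
TOKENS_PER_SECOND = {
    "Llama3 8B Q4_K_M": 102.22,
    "Llama3 8B F16": 40.25,
    "Llama3 70B Q4_K_M": 14.58,
}

REPLY_TOKENS = 500  # hypothetical reply length, chosen for illustration


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Generation time only; prompt processing is not included."""
    return num_tokens / tokens_per_second


for name, tps in TOKENS_PER_SECOND.items():
    print(f"{name}: {seconds_to_generate(REPLY_TOKENS, tps):.1f} s")
# Llama3 8B Q4_K_M: 4.9 s
# Llama3 8B F16: 12.4 s
# Llama3 70B Q4_K_M: 34.3 s
```

Whether half a minute per reply is acceptable depends on the use case: it may be fine for batch summarization but feels slow in an interactive chat.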

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 8B on the RTX A6000 48GB

Given the solid performance of Llama3 8B on this powerful GPU, here are some real-world use cases that you might consider:

- Chatbots: Build conversational AI agents that respond to a wide range of prompts and questions.

- Text summarization and generation: Summarize lengthy documents, write articles, or create engaging content.

- Code generation: Write and debug code in multiple programming languages.

- Language translation: Translate text between different languages.

Workarounds for Llama3 70B: Trade-offs and Solutions

Llama3 70B does run on the RTX A6000 48GB, but its generation speed can become a bottleneck. There are ways to work around the challenge:

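- Fine-tuning: Fine-tuning does not change how many tokens per second a model generates, but fine-tuning the faster Llama3 8B on your specific task may close enough of the quality gap that you no longer need the 70B model.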

- Offloading to a server: If you need to run Llama3 70B with maximum speed, consider offloading it to a dedicated server with more powerful hardware.

- Cloud-based solutions: Cloud providers offer pre-trained LLMs and APIs for easy access to these powerful models. These options often come with better performance and scalability.

Conclusion: A Powerful Partnership

The combination of the NVIDIA RTX A6000 48GB and Llama3 8B is a solid choice for running LLMs locally. At over 100 tokens per second with Q4_K_M quantization, it offers a strong foundation for building and deploying a wide range of AI applications.

While the RTX A6000 48GB handles Llama3 8B with impressive speed, it's important to consider the performance trade-offs when working with larger models like Llama3 70B. We've covered the use cases and workarounds to help you make the best decision for your project.

Now, go forth and build amazing things with LLMs!

FAQ

What is an LLM?

An LLM (Large Language Model) is a type of artificial intelligence system that can understand and generate human-like text. They are trained on massive datasets of text and code, enabling them to perform tasks such as:

- Text generation: Creating stories, articles, and poems

- Translation: Translating between different languages

- Code generation: Writing and debugging code

- Question answering: Providing answers to questions

- Summarization: Summarizing lengthy documents

What is quantization?

Quantization is a technique used to reduce the size of LLMs without significantly impacting their output quality. It works by reducing the number of bits used to represent each number in the model. This makes the model smaller, faster to load, and usually faster to run.
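As a rough illustration of the idea, the sketch below rounds a small block of weights to 4-bit signed integers that share one scale factor. This is a deliberately simplified scheme with made-up weights and hypothetical function names. It is not the actual Q4_K_M format, which is more elaborate.

```python
def quantize_block(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers that fit in `bits` bits, plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.48, -0.76, 0.02]
quantized, scale = quantize_block(weights)
restored = dequantize_block(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit integers:", quantized)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the scale factor, at the cost of a small rounding error per weight.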

Why is the RTX A6000 48GB a good choice for LLMs?

The RTX A6000 48GB is a powerful GPU designed for demanding tasks like machine learning. Its 48GB of memory lets it hold large models entirely on the GPU, including Llama3 8B at F16 and Llama3 70B at Q4_K_M, as the benchmarks above show.
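A back-of-the-envelope estimate shows why 48GB matters. The sketch below assumes nominal parameter counts (8 and 70 billion) and an approximate average of 4.8 bits per weight for Q4_K_M. Both are assumptions for illustration, and the estimate covers the weights only, not the context cache or other runtime overhead.

```python
GPU_MEMORY_GB = 48  # RTX A6000

# Nominal parameter counts (assumption; the exact counts are slightly higher).
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Bits per weight: 16 is exact for F16; 4.8 is an assumed average for Q4_K_M.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


for model, num_params in PARAMS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(num_params, bits)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"{model} {quant}: ~{size:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, Llama3 70B at F16 is the only combination whose weights exceed the card's memory, which is consistent with the benchmarks listing the 70B model only at Q4_K_M.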

What are the benefits of running LLMs locally?

Running LLMs locally offers several benefits:

- Privacy: You have full control over your data and can run models offline without sending data to the cloud.

- Customization: You can easily modify and fine-tune the models to meet your specific needs.

- Cost-effectiveness: It can be more cost-effective than using cloud-based services, especially for smaller projects.

Keywords:

NVIDIA RTX A6000 48GB, Llama3 8B, LLM, Large Language Model, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Analysis, Model Comparison, Use Cases, Workarounds, Cloud Services, Fine-tuning, Offloading, Local Inference, AI, Machine Learning, Text Generation, Translation, Code Generation, Chatbots, Text Summarization