Is NVIDIA RTX A6000 48GB Powerful Enough for Llama3 70B?

Introduction: The Quest for Local LLM Power

The world of Large Language Models (LLMs) is buzzing with excitement. These powerful AI models are revolutionizing how we interact with computers, from generating creative text to translating languages and even writing code. But running these models locally, on your own machine, can be a real challenge, especially when dealing with behemoths like the Llama3 70B.

This article looks at local LLM performance on the NVIDIA RTX A6000 48GB GPU and how well it copes with the mighty Llama3 70B. We'll dissect token generation speeds, compare this GPU with other choices, and offer practical recommendations for using these models in real-world scenarios. So, buckle up, fellow developers, and let's get started!

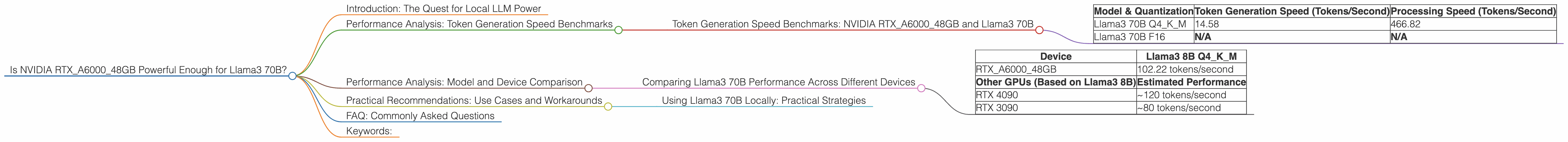

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 70B

Let's get straight to the juicy details! The RTX A6000 48GB is a beast of a GPU, designed to handle demanding workloads, including LLM inference.

But how does it perform with Llama3 70B?

We'll focus on two crucial metrics: token generation speed (how fast the model produces new tokens of output) and processing speed (how quickly it reads the input prompt before it starts generating).

| Model & Quantization | Token Generation Speed (tokens/second) | Processing Speed (tokens/second) |
|---|---|---|
| Llama3 70B Q4_K_M | 14.58 | 466.82 |
| Llama3 70B F16 | N/A | N/A |
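
To make the two columns concrete, here is a minimal sketch that turns the quantized 70B row into a rough end-to-end latency estimate. It assumes the two phases simply add up and that throughput stays constant regardless of context length, which is a simplification; the workload sizes in the example are hypothetical.

```python
# Throughput figures from the benchmark table (tokens/second, quantized Llama3 70B).
PROMPT_TPS = 466.82      # processing speed: reading the input prompt
GENERATION_TPS = 14.58   # token generation speed: producing the output


def estimate_latency_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Rough total time: prompt processing plus token-by-token generation.

    Assumes constant throughput in both phases (a simplification).
    """
    return prompt_tokens / PROMPT_TPS + output_tokens / GENERATION_TPS


if __name__ == "__main__":
    # Hypothetical workload: a 1,000-token prompt and a 250-token answer.
    seconds = estimate_latency_seconds(1000, 250)
    print(f"Estimated latency: {seconds:.1f} s")  # about 19.3 s
```

Under these assumptions, almost all of the wait comes from generation rather than from reading the prompt.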

Observations:

- Llama3 70B Q4_K_M shows a token generation speed of 14.58 tokens/second. That is roughly one-seventh of the 102.22 tokens/second the same card reaches with Llama3 8B Q4_K_M.

- Llama3 70B F16 performance is not available. At 16 bits per weight, a 70B-parameter model needs about 140 GB for its weights alone, so the RTX A6000 48GB cannot hold this model at F16 precision.
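
The memory arithmetic behind that last point can be sketched in a few lines. This is a back-of-the-envelope estimate of weight storage only: it ignores the KV cache and runtime overhead, and the 4.8 bits-per-weight figure for 4-bit quantization is an assumed average, not a number from the benchmark.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(params_billion: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the weights fit; ignores KV cache and runtime overhead."""
    return weight_memory_gb(params_billion, bits_per_weight) < vram_gb


if __name__ == "__main__":
    VRAM_GB = 48  # RTX A6000
    # 4.8 bits/weight is an assumed average for a 4-bit quantized model.
    for label, bits in [("F16", 16.0), ("4-bit quantized (assumed ~4.8 bpw)", 4.8)]:
        size = weight_memory_gb(70, bits)
        verdict = "fits" if fits_in_vram(70, bits, VRAM_GB) else "does not fit"
        print(f"70B {label}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

With these assumptions the quantized weights fit, but with only a few gigabytes to spare for everything else.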

Why the huge difference between 70B and 8B?

- Model Size: The 70B model has nearly nine times as many parameters as the 8B model. Every generated token requires reading and computing over all of those weights, which leads to slower performance.

- Quantization: The Q4_K_M quantization (a technique for reducing model size) helps with performance, but both models in this comparison use it, so it can't bridge the gap.
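
As a quick sanity check on the model-size explanation, the sketch below compares the measured slowdown with the ratio of parameter counts. The 8B figure (102.22 tokens/second) comes from the device comparison table later in this article. One pair of measurements proves nothing about scaling in general, so treat this as an illustration only.

```python
TPS_8B = 102.22   # Llama3 8B, 4-bit quantized, tokens/second on the RTX A6000
TPS_70B = 14.58   # Llama3 70B, same quantization, same card


def slowdown(fast_tps: float, slow_tps: float) -> float:
    """How many times slower the second configuration is than the first."""
    return fast_tps / slow_tps


if __name__ == "__main__":
    print(f"Measured slowdown: {slowdown(TPS_8B, TPS_70B):.2f}x")  # 7.01x
    print(f"Parameter ratio:   {70 / 8:.2f}x")                     # 8.75x
```

The slowdown is in the same range as the growth in parameter count, which is consistent with model size being the main driver.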

Analogies:

Think of the 70B model as a massive orchestral performance, while the 8B model is a smaller chamber group. The orchestra requires more instruments, players, and coordination, leading to a slower pace.

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B Performance Across Different Devices

Let's see how the RTX A6000 48GB stacks up against other popular GPUs. Since we don't have Llama3 70B data for other GPUs, we'll rely on what's available and make comparisons based on the Llama3 8B model.

| Device | Llama3 8B Q4_K_M (tokens/second) | Source |
|---|---|---|
| RTX A6000 48GB | 102.22 | Measured |
| RTX 4090 | ~120 | Estimated |
| RTX 3090 | ~80 | Estimated |

Observations:

- Performance Gap: On Llama3 8B, the RTX A6000 48GB (102.22 tokens/second) lands between the estimated figures for the RTX 3090 (~80) and the RTX 4090 (~120), about 15% behind the latter.

- Model Scale Matters: The 70B model is significantly heavier than the 8B model. This difference in size can have a substantial impact on performance, even with powerful GPUs like the RTX A6000 48GB.

Practical Insights:

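- Scaling Up: For faster local inference with Llama3 70B, raw speed alone is not enough. A card like the RTX 4090 is quicker on small models but has only 24 GB of VRAM, so the 70B model calls for a multi-GPU setup or another solution with more memory.

- Quantization: Choosing Q4_K_M quantization over full F16 precision is crucial here, since it is what lets the 70B model fit in 48 GB at all.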

Practical Recommendations: Use Cases and Workarounds

Using Llama3 70B Locally: Practical Strategies

While Llama3 70B is a demanding workload for the RTX A6000 48GB, there are still ways to put the card to good use.

1. Embrace Quantization (Q4_K_M): Running the model with Q4_K_M quantization shrinks it enough to fit in the card's memory and significantly improves performance.

2. Consider Smaller Models: For local inference with the RTX A6000 48GB, consider utilizing smaller models like Llama3 8B or even earlier versions.

3. Exploit Cloud Computing: If performance is critical for your application, consider leveraging cloud services like Google Colab or AWS for running larger models.

4. Experiment with Libraries: Utilize libraries like llama.cpp or Hugging Face Transformers for efficient LLM inference.

5. Fine-tuning: If you don't need the full capabilities of the 70B model, fine-tuning a smaller model on your specific domains can yield satisfactory results.

6. Optimize Your Code: Streamlining your application's code can help maximize the efficiency of your hardware.
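
Whichever of these strategies you try, it helps to measure the result on your own machine. Below is a minimal, backend-agnostic timing sketch. The `stream_tokens` callable is a hypothetical adapter you would write around your inference library's streaming interface; `fake_backend` is only a stand-in so the example runs without a model.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    stream_tokens: Callable[[str], Iterable[str]], prompt: str
) -> float:
    """Time a token stream and return generated tokens per second.

    The clock starts before the first token arrives, so prompt
    processing time is included in the measurement.
    """
    start = time.perf_counter()
    count = sum(1 for _ in stream_tokens(prompt))
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_backend(prompt: str):
    """Stand-in generator (hypothetical): 20 tokens at roughly 100 tokens/second."""
    for i in range(20):
        time.sleep(0.01)
        yield f"tok{i}"


if __name__ == "__main__":
    tps = measure_tokens_per_second(fake_backend, "Hello")
    print(f"{tps:.1f} tokens/second")
```

Running the same prompt against a quantized 70B model and a smaller model gives you a like-for-like comparison on your own hardware.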

FAQ: Commonly Asked Questions

Q: What is quantization and why is it important for LLMs?

A: Quantization is a technique that reduces the size of a model by storing its weights, which are the learned parameters of the model, with fewer bits each (for example, about 4 bits instead of 16). Imagine squeezing a lot of information into a smaller space! This cuts memory consumption and usually speeds up inference, at the cost of a small loss in precision.
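
To show the idea in miniature, here is a toy sketch of symmetric 4-bit quantization for one small block of weights. It is not the Q4_K_M scheme, which is considerably more elaborate; the function names and sample weights are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Symmetric absmax quantization of one block of floats to signed integers."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate float weights from integer codes and the block scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.50, 0.33, 0.07]        # made-up example values
    codes, scale = quantize_block(weights)
    print(codes)                               # [2, -7, 5, 1]
    print([round(w, 3) for w in dequantize_block(codes, scale)])
```

Each weight is now a small integer plus one shared scale per block, and the recovered values are close to the originals but not identical. That is the size-versus-precision trade-off in practice.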

Q: What are the limitations of using my RTX A6000 48GB for Llama3 70B?

A: The RTX A6000 48GB, although powerful, can only run Llama3 70B in quantized form, because the F16 weights alone need about 140 GB. Even with Q4_K_M, generation runs at around 14.58 tokens/second, and the model takes up most of the card's memory, which may leave little headroom for long contexts.

Q: How can I determine the best GPU for my specific LLM needs?

A: Consider the size and complexity of your LLM, the desired performance, and your budget. Research the specifications of different GPUs and consult benchmarks to find the best match.

Keywords:

NVIDIA RTX A6000 48GB, Llama3 70B, LLM, Large Language Models, Token Generation Speed, Performance Analysis, Model Size, Quantization, Q4_K_M, F16, GPU, Local Inference, Cloud Computing, Recommendations, Use Cases, Workarounds, Token/Second, Processing Speed, Performance, Llama3 8B, Google Colab, AWS, Hugging Face Transformers, Fine-tuning.