Is NVIDIA RTX A6000 48GB a Good Investment for AI Startups?

Introduction

The world of AI is buzzing with excitement, and at the heart of this excitement are Large Language Models (LLMs). These intelligent systems are capable of generating human-like text, translating languages, and even writing creative content. But harnessing the power of LLMs requires serious hardware, and one of the most popular choices for AI startups is the NVIDIA RTX A6000 48GB.

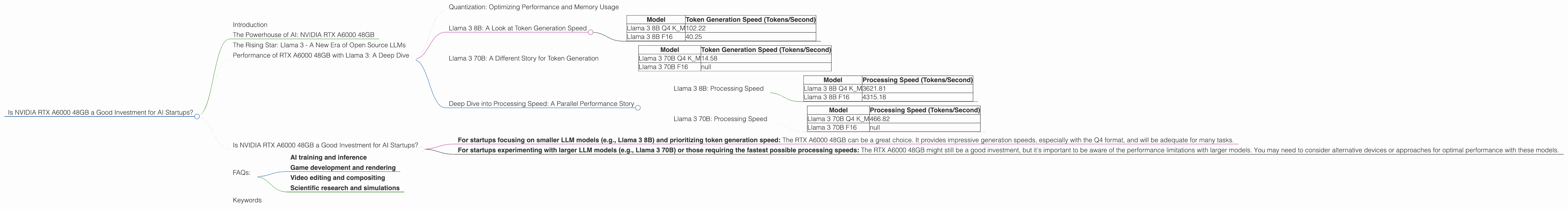

This article looks at the capabilities of the RTX A6000 48GB, examines its performance with Llama 3 models, and asks whether it is a worthwhile investment for budding AI businesses. The comparison covers three factors: quantization, token generation speed, and processing speed.

The Powerhouse of AI: NVIDIA RTX A6000 48GB

This beast of a graphics card is equipped with 48GB of GDDR6 memory and boasts 10,752 CUDA cores. It's designed for demanding tasks like AI training and inference, game development, and scientific research. The A6000 is a workhorse in the AI world, capable of handling complex calculations and large datasets with ease.

The Rising Star: Llama 3 - A New Era of Open Source LLMs

Llama 3, the latest iteration of the Llama series, has taken the open-source AI community by storm. This powerful LLM, developed by Meta, has significant potential for applications ranging from text generation to natural language understanding. The initial release comes in two sizes, 8B and 70B parameters. The larger model handles complex tasks better and generates more nuanced responses, but it needs far more memory and compute.

Performance of RTX A6000 48GB with Llama 3: A Deep Dive

Let's examine the performance of the RTX A6000 48GB with Llama 3, focusing on token generation speed and processing speed. The numbers below represent tokens per second, which essentially measures how fast the system can process and generate text.

Quantization: Optimizing Performance and Memory Usage

Before diving into the figures, it's important to understand quantization, a technique used to improve the performance and memory efficiency of LLMs. Quantization involves reducing the number of bits needed to represent the model's data, thereby lessening the computational load and memory requirements.

Think of it like encoding a photograph in different resolutions - a high-resolution image requires more storage space but provides greater detail, whereas a low-resolution image takes up less space but sacrifices some detail.
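To make the idea concrete, here is a minimal sketch of 4-bit quantization on a handful of made-up weights. It uses one shared scale for the whole list, which is a simplification chosen for illustration. Real formats work on small blocks of weights with per-block scales, and the function names below are hypothetical.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-8, 7] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("largest rounding error:", round(max_error, 4))
```

Each weight now needs only 4 bits instead of 16 or 32, at the cost of a small rounding error per weight. That is the detail the low-resolution photograph gives up.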

The benchmarks below cover two formats: Q4_K_M, a 4-bit quantization, and F16, unquantized half-precision floating point (16 bits per weight). Q4_K_M is the more memory-efficient of the two, while F16 keeps the weights at their original precision and needs more than three times as much memory.
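A rough way to see what these formats mean for a 48GB card is to estimate the size of the weights alone. The sketch below assumes nominal parameter counts (8 and 70 billion) and an average of about 4.85 bits per weight for the 4-bit format. Both are illustrative assumptions, and the estimate ignores the KV cache and runtime overhead.

```python
VRAM_GB = 48  # RTX A6000 memory, as stated in this article

# Nominal parameter counts; real checkpoints differ slightly (assumption).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

# Bits per weight: 16 is exact for F16; 4.85 is an assumed average
# for the 4-bit K_M format, not a measured figure.
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weight_gb(params, bits_per_weight):
    """Approximate size of the model weights in gigabytes."""
    return params * bits_per_weight / 8 / 1e9


for model, params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, only Llama 3 70B at F16 exceeds the card's memory. The 4-bit 70B model fits, but it leaves only a few gigabytes for context.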

Llama 3 8B: A Look at Token Generation Speed

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4_K_M | 102.22 |
| Llama 3 8B F16 | 40.25 |

With Llama 3 8B, the RTX A6000 48GB generates roughly 2.5 times as many tokens per second in the Q4 format as in F16 (102.22 vs. 40.25). A likely reason is that generating each token requires reading the model's weights from GPU memory, so the smaller Q4 weights take less time to stream.
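A common rule of thumb is that single-stream token generation cannot run faster than the GPU can read the full set of weights once per token. The sketch below compares that ceiling with the measured figures from this article. It assumes the A6000's spec-sheet memory bandwidth of 768 GB/s and the same approximate weight sizes as before (nominal parameter counts, about 4.85 bits per weight for the 4-bit format). It is an estimate, not a benchmark.

```python
BANDWIDTH_GB_S = 768.0  # RTX A6000 spec-sheet memory bandwidth (assumption)

# name -> (approximate weight size in GB, measured tokens/s from this article)
# Sizes assume nominal parameter counts and ~4.85 bits/weight for Q4_K_M.
CONFIGS = {
    "Llama 3 8B Q4_K_M": (8e9 * 4.85 / 8 / 1e9, 102.22),
    "Llama 3 8B F16": (8e9 * 16 / 8 / 1e9, 40.25),
    "Llama 3 70B Q4_K_M": (70e9 * 4.85 / 8 / 1e9, 14.58),
}


def ceiling_tokens_per_s(weight_gb, bandwidth_gb_s=BANDWIDTH_GB_S):
    """Upper bound if every token requires one full read of the weights."""
    return bandwidth_gb_s / weight_gb


for name, (size_gb, measured) in CONFIGS.items():
    ceiling = ceiling_tokens_per_s(size_gb)
    print(f"{name}: measured {measured:.2f} tok/s, "
          f"ceiling ~{ceiling:.0f} tok/s ({measured / ceiling:.0%} of ceiling)")
```

All three measured rates land below their estimated ceilings, which is consistent with generation speed being tied to how many bytes of weights must be read per token.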

Llama 3 70B: A Different Story for Token Generation

| Model | Token Generation Speed (tokens/second) |
|---|---|
| Llama 3 70B Q4_K_M | 14.58 |
| Llama 3 70B F16 | Not available |

Token generation with the Llama 3 70B model is about seven times slower than with the 8B model in the same Q4 format (14.58 vs. 102.22 tokens per second). The gap comes from the 70B model's size: it has roughly nine times as many parameters, so each token requires far more memory traffic and computation. No F16 figure is available for the 70B model, most likely because its weights at 16 bits each would need about 140GB, far more than the card's 48GB.

Deep Dive into Processing Speed: A Parallel Performance Story

Now, let's look at processing speed: how quickly the RTX A6000 reads and processes the input prompt before it starts generating output. Like generation speed, it is reported in tokens per second.

Llama 3 8B: Processing Speed

| Model | Processing Speed (tokens/second) |
|---|---|
| Llama 3 8B Q4_K_M | 3621.81 |
| Llama 3 8B F16 | 4315.18 |

With Llama 3 8B, processing speed is far higher than token generation speed, because the tokens of a prompt can be processed in parallel while output tokens are produced one at a time. Interestingly, F16 is about 19% faster than Q4 here (4315.18 vs. 3621.81 tokens per second). A plausible explanation is that this stage is limited by computation rather than memory traffic, so F16 avoids the extra work of unpacking quantized weights.

Llama 3 70B: Processing Speed

| Model | Processing Speed (tokens/second) |
|---|---|
| Llama 3 70B Q4_K_M | 466.82 |
| Llama 3 70B F16 | Not available |

As with token generation, processing speed with the Llama 3 70B model is much lower than with the 8B model (466.82 vs. 3621.81 tokens per second in the Q4 format) because of the larger model's size. The data for the F16 format is again unavailable.
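The two speeds combine into a rough per-request latency: time to process the prompt plus time to generate the reply. The sketch below applies that to the figures reported in this article. The 1,000-token prompt and 300-token reply are a hypothetical workload, and the calculation assumes the measured rates hold at those lengths, which may not be true in practice.

```python
# name -> (processing tok/s, generation tok/s), as reported in this article
MEASURED = {
    "Llama 3 8B Q4_K_M": (3621.81, 102.22),
    "Llama 3 8B F16": (4315.18, 40.25),
    "Llama 3 70B Q4_K_M": (466.82, 14.58),
}


def request_seconds(prompt_tokens, output_tokens, prompt_tps, gen_tps):
    """Rough single-request latency: process the prompt, then generate the reply."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


# Hypothetical workload: 1,000-token prompt, 300-token reply.
PROMPT_TOKENS, OUTPUT_TOKENS = 1000, 300

for name, (prompt_tps, gen_tps) in MEASURED.items():
    total = request_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, prompt_tps, gen_tps)
    print(f"{name}: ~{total:.1f} s per request")
```

For this workload, generation time dominates in every configuration. The F16 model's faster prompt handling therefore does not make up for its slower generation.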

Is NVIDIA RTX A6000 48GB a Good Investment for AI Startups?

The answer to this question depends on your specific needs and goals.

- For startups focusing on smaller LLM models (e.g., Llama 3 8B) and prioritizing token generation speed: The RTX A6000 48GB can be a great choice. It provides impressive generation speeds, especially with the Q4 format, and will be adequate for many tasks.

- For startups experimenting with larger LLM models (e.g., Llama 3 70B) or those requiring the fastest possible processing speeds: The RTX A6000 48GB might still be a good investment, but be aware of its limits. The 70B model was benchmarked only in the Q4 format, at 14.58 tokens per second for generation. If you need more speed or higher precision with models of this size, you may need to consider alternative devices or multi-GPU setups.

Remember, these are general guidelines. The best decision depends on your specific project requirements, budget, and desired performance levels.

FAQs:

Q1: What are the differences between Llama 3 8B and 70B?

A1: The number (8B or 70B) is the number of parameters in the model, in billions. Larger models have more parameters, making them more powerful and capable of handling complex tasks and generating more nuanced responses. However, they also require more computing resources and memory. Llama 3 itself has no 7B variant. That size belongs to the earlier Llama and Llama 2 releases.

Q2: What is quantization?

A2: Imagine shrinking a large image into a smaller one: you lose some fine detail, but the picture stays recognizable. Quantization does the same to a model by reducing the precision of its weights, which shrinks the file size and reduces the amount of memory that has to be read during inference.

Q3: Why are the F16 token generation speeds missing?

A3: The data for the F16 format with the Llama 3 70B model is not available. The most likely reason is memory: at 16 bits per weight, a 70B-parameter model needs about 140GB for its weights alone, which does not fit in the card's 48GB.

Q4: How do other GPUs compare to the RTX A6000 48GB for LLM inference?

A4: The RTX A6000 48GB is a professional workstation GPU that is well suited to AI workloads. However, other powerful options exist, such as the NVIDIA A100 and the AMD Instinct MI200 series, which are data-center accelerators. The best choice depends on your specific needs and budget.

Q5: Can I use the RTX A6000 48GB for other purposes besides LLMs?

A5: Absolutely! The RTX A6000 48GB is a versatile powerhouse that can be used for a wide range of tasks including:

- AI training and inference

- Game development and rendering

- Video editing and compositing

- Scientific research and simulations

Keywords

NVIDIA RTX A6000 48GB, Llama 3, AI startup, LLM, token generation, processing speed, quantization, Q4, F16, GPU, inference, performance, open-source, AI, machine learning, deep learning