Is NVIDIA RTX 6000 Ada 48GB Powerful Enough for Llama3 8B?

The world of large language models (LLMs) is exploding, and with it, the need for powerful hardware to run them locally. While cloud-based solutions offer convenience, running an LLM on your own hardware gives you privacy, control, and speed. But with so many different GPUs and LLMs out there, choosing the right combination can feel like navigating a labyrinth of technical jargon.

Today, we're diving deep into the performance of the NVIDIA RTX 6000 Ada 48GB and its ability to handle the Llama3 8B model. Think of it as a tech-savvy battle between a modern GPU and a powerful language AI.

Let's clear up the confusion right away: This article focuses solely on the Llama3 8B model and the RTX 6000 Ada 48GB GPU. You won't find information on other LLMs or GPUs here.

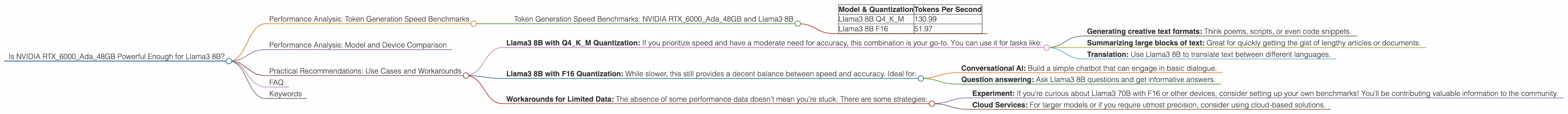

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a crucial metric for LLM performance. Essentially, it's how quickly your GPU can generate output based on the input text. Think of it like how fast a typist can bash out words on a keyboard. The higher the tokens per second, the faster your LLM will respond.
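To make the metric concrete, here is a minimal sketch that converts a throughput figure into the time you would wait for a reply. The function name and the 500-token, 100 tokens/s example are illustrative assumptions, not measurements from this article.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate how long it takes to generate num_tokens at a steady rate.

    This ignores prompt processing time and assumes constant throughput,
    which is a simplification.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Hypothetical example: a 500-token reply at an illustrative 100 tokens/s.
print(f"{generation_time_seconds(500, 100.0):.1f} s")
```

Doubling the tokens per second halves the wait, which is why this number matters so much for interactive use.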

Token Generation Speed Benchmarks: NVIDIA RTX 6000 Ada 48GB and Llama3 8B

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 130.99 |
| Llama3 8B F16 | 51.97 |

Whoa, those numbers are pretty impressive! The RTX 6000 Ada 48GB can churn through tokens at a pretty good clip, especially when running Llama3 8B with Q4_K_M quantization. Think of quantization like packing more information into a smaller suitcase. With Q4_K_M, we reduce the precision used to represent each weight, so the GPU has less data to read for every token, allowing for much faster inference.

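However, F16 performance is significantly slower: 51.97 tokens per second versus 130.99. Strictly speaking, F16 is not a quantization at all but the model's 16-bit half-precision weights. This highlights the importance of exploring different quantization methods to find the sweet spot between precision and speed.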
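The trade-off can be put into rough numbers. The sketch below takes the two throughput figures from the table and estimates how much memory the weights need in each format. The parameter count (8 billion) and the average of about 4.85 bits per weight for Q4_K_M are assumptions for illustration, not values measured in this article.

```python
# Throughput figures from the benchmark table in this article.
TOKENS_PER_SECOND = {"Q4_K_M": 130.99, "F16": 51.97}

# Assumptions (not from the article): a round parameter count and
# an approximate average number of bits stored per weight.
ASSUMED_PARAMS = 8.0e9
ASSUMED_BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weight_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def speedup(fast: float, slow: float) -> float:
    """How many times faster the first throughput is than the second."""
    return fast / slow


for name, rate in TOKENS_PER_SECOND.items():
    size = weight_size_gb(ASSUMED_PARAMS, ASSUMED_BITS_PER_WEIGHT[name])
    print(f"{name}: {rate} tokens/s, about {size:.1f} GB of weights")

ratio = speedup(TOKENS_PER_SECOND["Q4_K_M"], TOKENS_PER_SECOND["F16"])
print(f"Q4_K_M is about {ratio:.2f}x faster than F16")
```

Under these assumptions both formats fit comfortably in 48GB, so on this card the choice is mostly about speed versus precision rather than about whether the model fits.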

Performance Analysis: Model and Device Comparison

To fully grasp the RTX 6000 Ada 48GB's capabilities, it would help to compare its performance with other devices known for their LLM prowess.

Unfortunately, we lack data for other devices, and for the larger Llama3 70B model at F16, so a direct comparison isn't possible yet. It's like having a puzzle with missing pieces! We'll need to wait for more benchmarks to get a complete picture.

Practical Recommendations: Use Cases and Workarounds

The RTX 6000 Ada 48GB proves to be a formidable player in the local LLM arena. Here are some recommended use cases and workarounds:

- Llama3 8B with Q4_K_M Quantization: If you prioritize speed and have a moderate need for accuracy, this combination is your go-to. You can use it for tasks like:
  - Generating creative text formats: Think poems, scripts, or even code snippets.
  - Summarizing large blocks of text: Great for quickly getting the gist of lengthy articles or documents.
  - Translation: Use Llama3 8B to translate text between different languages.
- Llama3 8B at F16 Precision: While slower, this keeps the 16-bit weights and still provides a decent balance between speed and accuracy. Ideal for:
  - Conversational AI: Build a simple chatbot that can engage in basic dialogue.
  - Question answering: Ask Llama3 8B questions and get informative answers.
- Workarounds for Limited Data: The absence of some performance data doesn't mean you're stuck. Here are some strategies:
  - Experiment: If you're curious about Llama3 70B at F16 or about other devices, consider setting up your own benchmarks! You'll be contributing valuable information to the community.
  - Cloud Services: For larger models or if you require utmost precision, consider using cloud-based solutions.
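If you do decide to run your own benchmarks, the core measurement is simple. Here is a minimal sketch of a timing harness. The `generate` callable and `fake_generate` are hypothetical stand-ins: swap in whatever function your inference library exposes for producing tokens. Nothing here is tied to a specific library's API.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(
    generate: Callable[[str, int], List[str]],
    prompt: str,
    max_tokens: int,
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Time one generation call and return generated tokens per second."""
    start = clock()
    tokens = generate(prompt, max_tokens)
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return len(tokens) / elapsed


def fake_generate(prompt: str, max_tokens: int) -> List[str]:
    """Hypothetical stand-in for a real model call; it only sleeps briefly."""
    time.sleep(0.05)
    return ["tok"] * max_tokens


rate = measure_tokens_per_second(fake_generate, "Hello", 64)
print(f"{rate:.1f} tokens/s (fake model, for illustration only)")
```

For results worth sharing, run several repetitions, discard the first warm-up run, and report the model, quantization, and hardware alongside the numbers.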

The RTX 6000 Ada 48GB is a solid choice for running Llama3 8B, especially with Q4_K_M quantization. Remember, though, that the optimal setup depends on your specific needs and the type of tasks you're tackling.

FAQ

Q: What is quantization and why is it so important?

A: Think of quantization like simplifying a complex recipe. LLMs involve lots of numbers, which take up space and slow down processing. Quantization reduces the precision of these numbers, making them smaller and faster to work with. It's like using a basic measuring cup instead of a precise kitchen scale. You might lose a little accuracy, but you gain speed.
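The idea can be shown with a toy example. The sketch below applies a simplified symmetric 4-bit scheme to a handful of made-up weights. It is an illustration of the general principle only, not the actual Q4_K_M format, which is more elaborate.

```python
from typing import List, Tuple


def quantize(values: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to small signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quantized]


# Made-up weights, for illustration only.
weights = [0.7, -0.42, 0.13, 0.0]
q, scale = quantize(weights)
restored = dequantize(q, scale)

print("integers:", q)
print("restored:", [round(x, 2) for x in restored])
```

Each weight now needs only 4 bits plus a shared scale, and the restored values are close to the originals but not identical. That small error is the accuracy you trade for speed.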

Q: Are there other GPUs that can handle Llama3 8B?

A: Yes, there are! The RTX 4090 and RTX 4080 are also capable of running Llama3 8B. However, the RTX 6000 Ada 48GB offers more memory and potentially better performance.

Q: How does the RTX 6000 Ada 48GB compare to other GPUs?

A: Unfortunately, we lack the data to make a direct comparison. The RTX 6000 Ada 48GB is a powerful workhorse, but its performance in specific scenarios will depend on the LLM and other factors.

Q: Where can I find more information on LLM performance?

A: The llama.cpp GitHub repository is an excellent resource for benchmarks and discussions on LLM performance.

Keywords

NVIDIA RTX 6000 Ada 48GB, Llama3 8B, LLM, GPU, token generation speed, Q4_K_M quantization, F16 precision, performance benchmarks, local LLM, AI, machine learning, natural language processing.