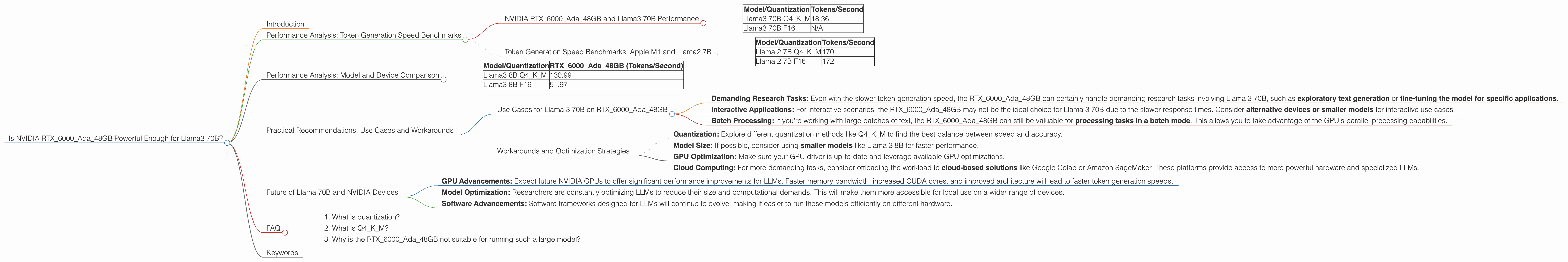

Is NVIDIA RTX 6000 Ada 48GB Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and for good reason! These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally can be a real challenge, especially if you're working with giants like Llama 3 70B, one of the most impressive LLMs out there.

This article examines the performance of the NVIDIA RTX 6000 Ada 48GB graphics card, a popular choice for AI workloads, and its suitability for running Llama 3 70B. We'll look at measured token generation speeds, provide practical recommendations, and explore use cases that fit the capabilities of this card.

Performance Analysis: Token Generation Speed Benchmarks

NVIDIA RTX 6000 Ada 48GB and Llama 3 70B Performance

Let's get down to the nitty-gritty. How does the RTX 6000 Ada 48GB handle the task of generating text with Llama 3 70B?

| Model/Quantization | Tokens/Second |
|---|---|
| Llama 3 70B Q4_K_M | 18.36 |
| Llama 3 70B F16 | N/A |

Important Note: In F16 (half-precision floating point), the weights of Llama 3 70B alone take roughly 140 GB, far more than the card's 48 GB of memory, so there is no F16 result for the RTX 6000 Ada 48GB. The only data point is Q4_K_M, a 4-bit "k-quant" format from llama.cpp; the "M" (medium) variant keeps some tensors at higher precision.
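
A quick back-of-the-envelope estimate shows why only the 4-bit version is listed. The sketch below multiplies an assumed parameter count by bits per weight; the ~70.6 billion parameters and the ~4.85 bits-per-weight average for Q4_K_M are approximations, not figures from the benchmark, and the KV cache and runtime buffers are ignored.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# Assumed values (not from the benchmark): ~70.6e9 parameters for Llama 3 70B,
# 16 bits/weight for F16, ~4.85 bits/weight on average for Q4_K_M.
# KV cache and runtime buffers are ignored, so real usage is somewhat higher.

VRAM_GB = 48  # RTX 6000 Ada memory capacity
LLAMA3_70B_PARAMS = 70.6e9  # assumed, approximate


def weight_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bpw in [("F16", 16.0), ("Q4_K_M", 4.85)]:
    size = weight_gb(LLAMA3_70B_PARAMS, bpw)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name:>7}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions F16 needs about 141 GB, roughly three times the card's capacity, while Q4_K_M needs about 43 GB, leaving a few gigabytes for the KV cache.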

What does this mean?

Slower Token Generation: The RTX 6000 Ada 48GB generates 18.36 tokens per second with Llama 3 70B in Q4_K_M, about 7 times slower than the 130.99 tokens per second it reaches with Llama 3 8B at the same quantization. A token is not the same as a word (longer words are often split into several tokens), but 18.36 tokens per second still streams text faster than most people read. The cost shows up mainly when generating long responses.

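F16 Limitation: Because the F16 weights do not fit in memory, you cannot run the 70B model at half precision on this card. Q4_K_M gives up a small amount of accuracy in exchange for a much smaller memory footprint. F16 would be more accurate, but not faster: as the Llama 3 8B results below show, F16 generates tokens more slowly than Q4_K_M because more bytes of weights must be read for every token.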
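
To put these speeds into wall-clock terms, the sketch below converts tokens per second into the time needed for one reply. It uses the Q4_K_M figures measured on the RTX 6000 Ada 48GB (18.36 tokens/s for Llama 3 70B and 130.99 tokens/s for Llama 3 8B, from the comparison table); the 500-token reply length is an arbitrary example, and prompt processing time is ignored.

```python
# Wall-clock time for one reply at a steady generation speed.
# Speeds are the Q4_K_M figures measured on the RTX 6000 Ada 48GB in this article;
# the 500-token reply length is an arbitrary example. Prompt processing is ignored.

speeds = {"Llama 3 70B Q4_K_M": 18.36, "Llama 3 8B Q4_K_M": 130.99}
reply_tokens = 500


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to generate n_tokens at a constant rate."""
    return n_tokens / tokens_per_second


for model, tps in speeds.items():
    print(f"{model}: {generation_seconds(reply_tokens, tps):.1f} s for {reply_tokens} tokens")

ratio = speeds["Llama 3 8B Q4_K_M"] / speeds["Llama 3 70B Q4_K_M"]
print(f"Llama 3 8B generates about {ratio:.1f}x faster than Llama 3 70B on this card")
```

A 500-token answer takes roughly 27 seconds with the 70B model versus under 4 seconds with the 8B model.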

Token Generation Speed Benchmarks: Apple M1 and Llama 2 7B

To illustrate the differences in performance, let's take a look at a different model and device combination.

The Apple M1 chip, a powerhouse in its own right, exhibits much faster token generation speeds for the smaller Llama 2 7B model.

| Model/Quantization | Tokens/Second |
|---|---|
| Llama 2 7B Q4_K_M | 170 |
| Llama 2 7B F16 | 172 |

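Using the M1 chip, you can achieve around 170 tokens per second with both Q4_K_M and F16. That is about 9 times the RTX 6000 Ada 48GB's 18.36 tokens per second with Llama 3 70B, but the comparison is not like-for-like: a 7B model has about one tenth as many parameters as a 70B model, so most of the gap reflects model size rather than the chip.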

Performance Analysis: Model and Device Comparison

Now let's see how the RTX 6000 Ada 48GB performs with the much smaller Llama 3 8B.

| Model/Quantization | RTX 6000 Ada 48GB (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M | 130.99 |
| Llama 3 8B F16 | 51.97 |

What can we learn from this data?

Smaller Models, Faster Performance: With Q4_K_M, the RTX 6000 Ada 48GB generates 130.99 tokens per second with Llama 3 8B versus 18.36 with Llama 3 70B, roughly 7 times faster. The 8B model has far fewer weights to read and compute for each token.

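F16 vs. Q4_K_M: For Llama 3 8B, Q4_K_M runs about 2.5 times faster than F16 (130.99 vs. 51.97 tokens per second). The 4-bit weights are less than a third the size of 16-bit weights, and generating each token requires streaming the weights from memory. F16 preserves more of the original precision, so the choice is a trade-off between speed and accuracy. Larger models are generally reported to lose less quality from 4-bit quantization than small ones, but it's important to consider your specific requirements.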
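
Both observations fit a common rule of thumb: when generating one token at a time, the GPU has to read (roughly) every weight once per token, so memory bandwidth divided by the size of the weights gives an upper bound on tokens per second. The sketch below is a rough check under stated assumptions: 960 GB/s of memory bandwidth for the RTX 6000 Ada (NVIDIA's published figure), about 8.03 and 70.6 billion parameters for Llama 3 8B and 70B, and about 4.85 bits per weight for Q4_K_M. KV-cache traffic and kernel overhead are ignored.

```python
# Upper bound on single-stream generation speed when decoding is memory-bound:
# each new token needs (roughly) one full pass over the weights, so
#   tokens/s <= memory bandwidth / bytes of weights.
# Assumptions: 960 GB/s for the RTX 6000 Ada, ~8.03e9 / ~70.6e9 parameters for
# Llama 3 8B / 70B, ~4.85 bits per weight for Q4_K_M. Overheads are ignored.

BANDWIDTH_GBPS = 960.0


def max_tokens_per_second(n_params: float, bits_per_weight: float,
                          bandwidth_gbps: float = BANDWIDTH_GBPS) -> float:
    """Bandwidth-limited ceiling on tokens/s for one generation stream."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return bandwidth_gbps / weight_gb


# (label, parameters, bits per weight, measured tokens/s from the tables above)
cases = [
    ("Llama 3 8B F16", 8.03e9, 16.0, 51.97),
    ("Llama 3 8B Q4_K_M", 8.03e9, 4.85, 130.99),
    ("Llama 3 70B Q4_K_M", 70.6e9, 4.85, 18.36),
]

for label, params, bpw, measured in cases:
    bound = max_tokens_per_second(params, bpw)
    print(f"{label:<20} ceiling ~{bound:6.1f} tok/s, measured {measured} tok/s")
```

Each measured figure sits below its estimated ceiling, and the ordering matches: fewer bytes read per token means more tokens per second, which is why F16 is the slower option here.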

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama 3 70B on RTX 6000 Ada 48GB

Demanding Research Tasks: Despite the slower generation speed, the RTX 6000 Ada 48GB can handle research work with Llama 3 70B, such as exploratory text generation. Fine-tuning a 70B model is far more memory-intensive than inference and is not covered by these benchmarks; on a single 48 GB card it would require parameter-efficient methods.

Interactive Applications: At 18.36 tokens per second, output streams faster than most people read, so single-user chat is workable. For latency-sensitive or multi-user applications, however, the 70B model on this card may feel slow; consider a smaller model or more powerful hardware for those use cases.

Batch Processing: If you're working with large batches of text, the RTX 6000 Ada 48GB can still be valuable for processing tasks in batch mode, where response latency matters less and the GPU's parallel processing capabilities can be put to work.

Workarounds and Optimization Strategies

Quantization: Q4_K_M is the format benchmarked here. Other quantization levels balance speed, memory, and accuracy differently, but keep in mind that higher-precision versions of the 70B model may not fit in 48 GB.

Model Size: If possible, consider using smaller models like Llama 3 8B for faster performance.

GPU Optimization: Keep your GPU driver up to date, and make sure every layer of the model is actually offloaded to the GPU (in llama.cpp, via the `-ngl` / `--n-gpu-layers` option). Any layers left on the CPU will slow generation considerably.

Cloud Computing: For more demanding tasks, consider offloading the workload to cloud platforms such as Google Colab or Amazon SageMaker, which provide access to more powerful hardware and hosted models.
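
Whichever of these strategies you try, it helps to measure generation speed before and after a change. The sketch below times any streaming generator and reports tokens per second; `generate` is a hypothetical stand-in for whatever runtime you use, assumed to yield one item per generated token. The clock starts after the first token, so prompt processing is excluded.

```python
import time
from typing import Callable, Iterable

_NO_TOKEN = object()  # sentinel for "the stream was empty"


def measure_tokens_per_second(generate: Callable[[str], Iterable[str]], prompt: str) -> float:
    """Return generation speed in tokens/s for one streamed response.

    Timing starts after the first token arrives, so time to first token
    (prompt processing) is not counted.
    """
    stream = iter(generate(prompt))
    if next(stream, _NO_TOKEN) is _NO_TOKEN:
        return 0.0  # nothing was generated
    start = time.perf_counter()
    n_tokens = sum(1 for _ in stream)
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else 0.0


# Hypothetical stand-in for a real model: 20 tokens, at least 10 ms apart.
def fake_generate(prompt: str):
    for i in range(20):
        time.sleep(0.01)
        yield f"tok{i}"


print(f"{measure_tokens_per_second(fake_generate, 'Hello'):.1f} tokens/s")
```

Running the same prompt several times and averaging gives a steadier number than a single run.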

Future of Llama 3 70B and NVIDIA Devices

GPU Advancements: Future NVIDIA GPUs will likely improve LLM performance further. Higher memory bandwidth, larger memory capacity, more CUDA cores, and architectural improvements should all translate into faster token generation.

Model Optimization: Researchers are constantly optimizing LLMs to reduce their size and computational demands. This will make them more accessible for local use on a wider range of devices.

Software Advancements: Software frameworks designed for LLMs will continue to evolve, making it easier to run these models efficiently on different hardware.

FAQ

1. What is quantization?

Quantization is a technique for reducing the memory footprint and computational requirements of LLMs by storing the model's weights with fewer bits, sacrificing some precision for speed and memory savings. Think of it like measuring with a ruler that has coarser markings: the reading is less exact, but it is quicker to take and takes less space to write down.
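
The idea can be sketched with a toy block quantizer: a block of weights shares one scale factor, and each weight is stored as a small integer. This is a simplified symmetric 4-bit scheme chosen for illustration, not the exact Q4_K_M algorithm; the block size of 32 and the random test weights are assumptions.

```python
import random

BLOCK = 32  # weights per block; each block shares one scale factor


def quantize_block(xs):
    """Map floats to 4-bit signed integers in [-8, 7] plus one scale per block."""
    amax = max(abs(x) for x in xs)
    scale = amax / 7 if amax > 0 else 1.0
    q = [max(-8, min(7, round(x / scale))) for x in xs]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate floats from the integers and the shared scale."""
    return [scale * v for v in q]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK)]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={scale:.5f}, max abs error={max_err:.5f} (at most scale/2)")
```

Each weight now costs 4 bits plus a share of the per-block scale; the rounding error per weight is at most half a step of the scale, which is the "coarser markings" of the ruler analogy.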

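2. What is Q4_K_M?

Q4_K_M is a quantization format from llama.cpp's "k-quant" family. Most weights are stored with 4 bits in small blocks that share quantized scale factors, and the "M" (medium) variant keeps some tensors at higher precision. It's a popular choice for achieving a good balance between speed, memory use, and accuracy.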
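
The effective storage cost can be worked out from the block layout. The sketch below follows the `block_q4_K` and `block_q6_K` struct layouts in llama.cpp's ggml source as I understand them (256-weight super-blocks); the 15% share of Q6_K weights in the mix is a made-up illustration, not llama.cpp's actual per-tensor rule for Q4_K_M.

```python
# Bytes per 256-weight super-block, following llama.cpp's block_q4_K / block_q6_K layouts.
QK_K = 256

# Q4_K: fp16 d + fp16 dmin + 12 bytes of packed 6-bit scales/mins + 4-bit quants.
Q4_K_BYTES = 2 + 2 + 12 + QK_K // 2
# Q6_K: low 4 bits + high 2 bits of each quant + int8 sub-block scales + fp16 d.
Q6_K_BYTES = QK_K // 2 + QK_K // 4 + QK_K // 16 + 2


def bits_per_weight(block_bytes: int) -> float:
    """Average storage cost per weight for one super-block."""
    return block_bytes * 8 / QK_K


q4k = bits_per_weight(Q4_K_BYTES)  # 4.5
q6k = bits_per_weight(Q6_K_BYTES)  # 6.5625

# Hypothetical mix: Q4_K_M stores most weights as Q4_K and some as Q6_K.
share_q6k = 0.15  # illustrative only
mixed = (1 - share_q6k) * q4k + share_q6k * q6k
print(f"Q4_K: {q4k} bpw, Q6_K: {q6k} bpw, illustrative Q4_K_M mix: {mixed:.2f} bpw")
```

The per-block scales are why a "4-bit" format ends up costing somewhat more than 4 bits per weight on average.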

3. Why is the RTX 6000 Ada 48GB not ideal for running such a large model?

The RTX 6000 Ada 48GB is a powerful GPU and can run Llama 3 70B at 4-bit precision, but two limits show up. Its 48 GB of memory is too small for the 70B model in F16, and generation speed is bounded by how quickly the card can read roughly 40 GB of 4-bit weights for every token. The result is about 18 tokens per second: usable, but well short of the speeds smaller models reach on the same card.

Keywords

Llama 3 70B, NVIDIA RTX 6000 Ada 48GB, LLM, large language model, performance, speed, token generation, quantization, Q4_K_M, F16, GPU, CUDA, local inference, cloud computing, use cases, research, interactive applications, batch processing, optimizations, model size, future of LLMs, advancements