Is NVIDIA RTX 5000 Ada 32GB Powerful Enough for Llama 3 8B?

Introduction

The world of large language models (LLMs) is rapidly evolving, and with it, the need for specialized hardware to handle their immense computational demands. LLMs like Llama 3 are revolutionizing how we interact with technology – from generating creative content to assisting with complex tasks. But the question on every developer's mind is, "Can my hardware handle this?"

This deep dive explores the performance capabilities of the NVIDIA RTX 5000 Ada 32GB graphics card when it comes to running the Llama 3 8B model. We'll analyze token generation speeds and processing power, providing you with the information you need to make informed decisions about your hardware setup.

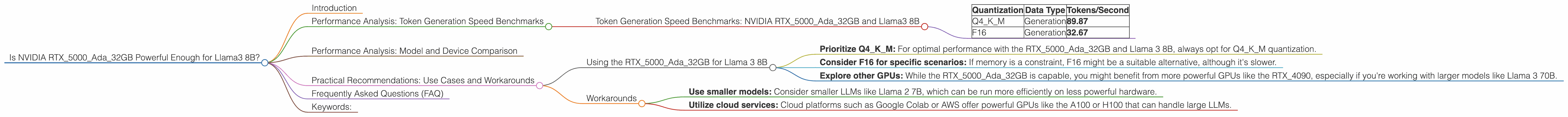

Performance Analysis: Token Generation Speed Benchmarks

First, let's look at the critical metric of token generation speed: the rate at which the model produces tokens (words or pieces of words). We'll focus on the Llama 3 8B model and compare the RTX 5000 Ada 32GB across two weight formats. Quantization is a technique that compresses a model so it uses less memory.

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama 3 8B

| Quantization | Task | Tokens/Second |
|---|---|---|
| Q4_K_M | Generation | 89.87 |
| F16 | Generation | 32.67 |

What does this mean?

This data shows that the RTX 5000 Ada 32GB performs significantly better with Q4_K_M quantization than with F16, achieving about 2.75 times the token generation speed (89.87 vs. 32.67 tokens/second).

- Analogy: Imagine you are a typist who needs to produce a document. With Q4_K_M quantization, you're like a seasoned speed typist, generating text quickly and efficiently. With F16, you're like a careful but slower typist: the job still gets done, just at roughly a third of the pace.

Key Takeaways:

- Q4_K_M is the clear winner: When choosing quantization, stick to Q4_K_M for the best token generation speeds with the RTX 5000 Ada 32GB and Llama 3 8B.

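- F16 might be adequate: F16 is slower and uses more memory than Q4_K_M, but it keeps the weights at 16-bit precision, so it may suit scenarios where output quality matters more than speed. Think of it as a trade-off between accuracy on one side and speed and memory use on the other.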
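
To make the comparison concrete, the short sketch below works through the arithmetic using the two throughput figures from the benchmark table. The 500-token reply length is an assumption chosen purely for illustration, and the estimate ignores prompt processing and model load time.

```python
# Throughput figures from the benchmark table (tokens/second).
TOKENS_PER_SECOND = {"Q4_K_M": 89.87, "F16": 32.67}

# Assumed reply length, chosen only for illustration.
RESPONSE_TOKENS = 500


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Estimate generation time, ignoring prompt processing and startup cost."""
    return num_tokens / tokens_per_second


ratio = speedup(TOKENS_PER_SECOND["Q4_K_M"], TOKENS_PER_SECOND["F16"])
print(f"Q4_K_M vs. F16 speedup: {ratio:.2f}x")

for name, tps in TOKENS_PER_SECOND.items():
    seconds = seconds_to_generate(RESPONSE_TOKENS, tps)
    print(f"{name}: about {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

In practice, a reply that takes around five and a half seconds with Q4_K_M would take a little over fifteen seconds with F16 on this card.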

Performance Analysis: Model and Device Comparison

A comparison across different LLMs on the RTX 5000 Ada 32GB is not possible yet, because we only have data for Llama 3 8B. Data for other models, such as Llama 3 70B, is unavailable at this time.
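
If you want to collect comparable numbers for other models yourself, tokens per second can be measured with a simple timer around the generation loop. The sketch below is a minimal illustration, not the method used for the figures above: `fake_generate` is a hypothetical stand-in that yields dummy tokens, and you would replace it with a streaming call from whatever inference library you use.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    generate: Callable[[str], Iterable[str]], prompt: str
) -> float:
    """Time one generation run and return tokens produced per second."""
    start = time.perf_counter()
    token_count = sum(1 for _ in generate(prompt))  # consume the stream
    elapsed = time.perf_counter() - start
    return token_count / elapsed


def fake_generate(prompt: str, num_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a real model: yields dummy tokens slowly."""
    for i in range(num_tokens):
        time.sleep(delay_s)  # simulates per-token compute time
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate, "Hello")
print(f"{rate:.1f} tokens/second")
```

Note that this timer includes the wait for the first token, so it blends prompt processing and generation. For a fair comparison between models, use the same prompt and output length each time and average over several runs.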

Practical Recommendations: Use Cases and Workarounds

Using the RTX 5000 Ada 32GB for Llama 3 8B

Here are some practical recommendations based on the analyzed data:

- Prioritize Q4_K_M: For the fastest token generation with the RTX 5000 Ada 32GB and Llama 3 8B, opt for Q4_K_M quantization.

- Consider F16 for specific scenarios: If you need full 16-bit precision and can spare the extra memory, F16 is a suitable alternative, although it's slower.

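- Explore other GPUs: While the RTX 5000 Ada 32GB is capable, you might get higher speeds from more powerful GPUs like the RTX 4090 for models that fit in its 24 GB of memory. For larger models like Llama 3 70B, memory capacity becomes the main constraint, so prioritize it when choosing hardware.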
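
A rough way to check whether a model fits on a card is to multiply its parameter count by the bits stored per weight. The sketch below does this under stated assumptions: the parameter counts are the nominal 8 billion and 70 billion implied by the model names, and the 4.85 bits per weight used for Q4_K_M is an approximate average rather than an exact figure. It counts weights only, so the KV cache and runtime overhead come on top.

```python
GIB = 1024 ** 3
VRAM_GIB = 32  # card capacity taken from the article title

# Assumed average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}

# Nominal parameter counts implied by the model names (assumption).
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_gib(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GiB."""
    return num_params * bits_per_weight / 8 / GIB


for model, num_params in PARAMS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_gib(num_params, bits)
        verdict = "weights fit" if size < VRAM_GIB else "weights do not fit"
        print(f"{model} {quant}: ~{size:.1f} GiB ({verdict} in {VRAM_GIB} GiB)")
```

Under these assumptions, both Llama 3 8B variants fit comfortably in 32 GB, while Llama 3 70B does not fit at either setting.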

Workarounds

If your hardware is limited:

- Use smaller models: Consider smaller LLMs like Llama 2 7B, which can be run more efficiently on less powerful hardware.

- Utilize cloud services: Cloud platforms such as Google Colab or AWS offer powerful GPUs like the A100 or H100 that can handle large LLMs.

Frequently Asked Questions (FAQ)

1. What is quantization? Quantization is a technique used to compress LLMs to reduce memory usage. It converts the model's weights, which are typically stored as 16-bit or 32-bit floating-point numbers, to lower-precision formats such as the roughly 4-bit values used by Q4_K_M. F16 stores the weights as 16-bit floating-point numbers and serves here as the higher-precision baseline.

2. What are the trade-offs of using different quantization levels? Quantization reduces memory requirements and usually speeds up generation, but it can decrease the model's accuracy. Q4_K_M offers the fastest generation in our benchmark and the smaller memory footprint, but may slightly reduce accuracy compared to F16.

3. What are the differences between Llama 2 7B and Llama 3 8B? Llama 3 8B is the newer model and has about one billion more parameters. It generally performs better than Llama 2 7B on a range of language tasks.

4. How does the RTX 5000 Ada 32GB compare to other GPUs for LLM inference? The RTX 5000 Ada 32GB is a capable GPU for running LLMs, especially smaller models like Llama 3 8B. However, GPUs like the RTX 4090 or A100 can offer higher token generation speeds, and data-center cards with more memory are better suited to larger models.

5. Should I be concerned about the RTX 5000 Ada 32GB's memory capacity? For Llama 3 8B, 32 GB is adequate: even at F16, the weights take roughly 16 GB. However, if you plan to work with larger models like Llama 3 70B, you might need a GPU with a higher memory capacity.
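
To illustrate the idea behind questions 1 and 2, here is a toy sketch of 4-bit quantization on a small block of weights. It uses a simplified symmetric scheme with one scale per block, which is an assumption made for clarity and not the actual Q4_K_M format. The weight values are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integer codes using one shared scale per block."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the signed range [-8, 7].
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from integer codes."""
    return [c * scale for c in codes]


# Made-up weights for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.47]

codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}")
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, which is where the memory savings come from. The rounding error, bounded here by half the scale, is the source of the small accuracy loss.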
