Is NVIDIA RTX 5000 Ada 32GB Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is evolving rapidly, with new models emerging frequently, pushing the boundaries of what's possible with AI. One of the latest and most powerful models is Llama3 70B, a behemoth of a model boasting 70 billion parameters. But can your hardware handle this beast?

This article looks at the performance of the NVIDIA RTX 5000 Ada 32GB when running Llama3 70B. We'll examine token generation speed benchmarks, compare performance across different configurations, and provide practical recommendations for use cases and workarounds.

Think of this as a deep-dive into the inner workings of your hardware and how it stacks up against the demanding world of LLMs. So, grab your coffee, get comfy, and let's explore.

Performance Analysis: Token Generation Speed Benchmarks

First, let's dive into the key metric that defines how fast your LLM can generate text: token generation speed, measured in tokens per second. Think of tokens as the building blocks of text: a token can be a whole word, part of a word, a punctuation mark, or whitespace. The higher the token generation speed, the faster your model can generate text.

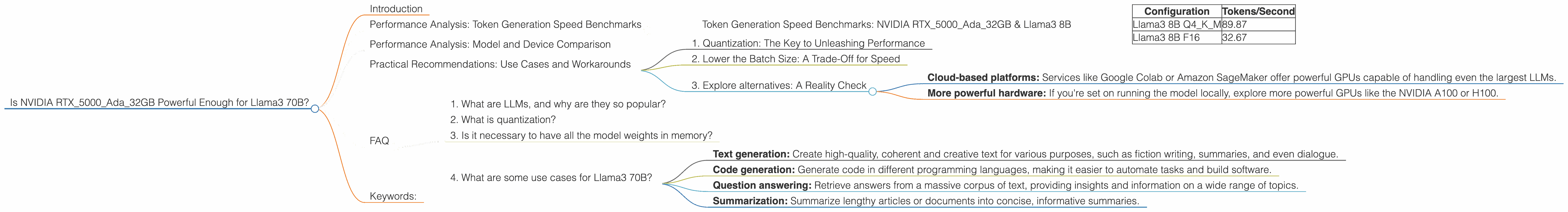

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB & Llama3 8B

Before we tackle the big guy, Llama3 70B, let's first look at the performance of the NVIDIA RTX 5000 Ada 32GB with its smaller sibling, Llama3 8B. This will give us a baseline for comparison.

| Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M | 89.87 |
| Llama3 8B F16 | 32.67 |

What does this data tell us?

Firstly, quantization plays a significant role in performance. Q4_K_M is a 4-bit quantization format (the name comes from llama.cpp's quantization types) that stores the model's weights at reduced precision. This results in a smaller model that is faster to run and needs less memory, with some trade-off in accuracy. F16 is not a quantization method: it stores each weight as a 16-bit floating-point number, so it serves as the higher-precision, larger, and slower baseline.

As the table shows, Q4_K_M runs Llama3 8B about 2.75 times faster than F16 (89.87 versus 32.67 tokens per second). This highlights how much quantization matters when balancing performance and accuracy.
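To make the gap concrete, here is a small sketch that turns the two benchmark figures into a speedup ratio and a waiting time. The 500-token reply length is an arbitrary assumption chosen for illustration, and the calculation assumes a steady generation rate.

```python
# Benchmark figures copied from the Llama3 8B table (tokens per second).
Q4_K_M_TPS = 89.87
F16_TPS = 32.67

# Assumption for illustration only: a reply of 500 tokens.
REPLY_TOKENS = 500


def seconds_for(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a steady generation rate."""
    return n_tokens / tokens_per_second


speedup = Q4_K_M_TPS / F16_TPS
print(f"Q4_K_M speedup over F16: {speedup:.2f}x")

for name, tps in [("Q4_K_M", Q4_K_M_TPS), ("F16", F16_TPS)]:
    print(f"{name}: {seconds_for(REPLY_TOKENS, tps):.1f} s for {REPLY_TOKENS} tokens")
```

With these inputs the ratio comes out at about 2.75x, or roughly 5.6 seconds versus 15.3 seconds for the same reply.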

Performance Analysis: Model and Device Comparison

Now, let's bring in the heavyweight: Llama3 70B. Unfortunately, we don't have benchmarks for this specific model on the RTX 5000 Ada 32GB. Llama3 70B is a recent model, and performance data for it on this device is currently unavailable.

Why is this important?

Llama3 70B has almost nine times as many parameters as Llama3 8B, so at the same precision its weights need almost nine times the memory. It is unlikely that the RTX 5000 Ada 32GB can run it with the same speed and efficiency.

What can we do?

While we don't have concrete figures for Llama3 70B, we can leverage the insights from the Llama3 8B benchmarks. We know that increasing the model size generally leads to a decrease in performance, even with the same hardware.
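One way to reason from the 8B figure is a back-of-the-envelope scaling rule. The sketch below assumes that token generation is limited by how fast the weights can be read from GPU memory, so speed falls in proportion to parameter count at the same quantization. That is an assumption, not a measurement, and it also assumes the whole model fits in GPU memory, which may not hold for Llama3 70B on this card. Treat the result as an optimistic ceiling rather than a benchmark.

```python
# Measured figure from the Llama3 8B table (tokens per second, Q4_K_M).
MEASURED_8B_Q4_K_M_TPS = 89.87


def scaled_tps(measured_tps: float, measured_params_b: float, target_params_b: float) -> float:
    """Scale generation speed inversely with parameter count.

    Assumption: same quantization, generation limited by memory bandwidth,
    and the target model fully resident in GPU memory.
    """
    return measured_tps * measured_params_b / target_params_b


estimate = scaled_tps(MEASURED_8B_Q4_K_M_TPS, measured_params_b=8, target_params_b=70)
print(f"Optimistic ceiling for 70B at Q4_K_M: {estimate:.1f} tokens/second")
```

Under these assumptions the ceiling is on the order of 10 tokens per second. Any layers that spill out of GPU memory would pull the real figure lower.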

Think of it like this: Imagine trying to fit a giant sofa into a small car. It's going to be a tight fit, and the sofa will probably take up all the space. Similarly, the massive Llama3 70B might overwhelm the capabilities of the RTX 5000 Ada 32GB.
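To put rough numbers on the tight fit, the sketch below estimates the memory needed for the weights alone. The 4.8 bits per weight used for Q4_K_M is an assumed average (the format mixes block scales and a few higher-precision tensors, so it is not exactly 4 bits), and the estimate ignores the key-value cache and runtime overhead.

```python
GB = 1e9  # decimal gigabytes

N_PARAMS = 70e9   # "70 billion parameters", as stated in the introduction
VRAM_GB = 32      # memory on the RTX 5000 Ada 32GB


def weight_footprint_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the weights alone, in gigabytes."""
    return n_params * bits_per_weight / 8 / GB


# Bits per weight: 16 for F16; ~4.8 for Q4_K_M is an assumption, not a spec value.
formats = {"F16": 16.0, "Q4_K_M (assumed ~4.8 bits/weight)": 4.8}

for name, bits in formats.items():
    size = weight_footprint_gb(N_PARAMS, bits)
    verdict = "fits" if size <= VRAM_GB else "does not fit"
    print(f"{name}: about {size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

On these assumptions, both formats exceed 32 GB for the weights alone, which is why the workarounds in the next section matter.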

Practical Recommendations: Use Cases and Workarounds

Despite the lack of benchmarks, let's consider some practical recommendations for using Llama3 70B with the NVIDIA RTX 5000 Ada 32GB.

1. Quantization: The Key to Unleashing Performance

As we saw with Llama3 8B, quantization is crucial for optimizing performance on limited hardware. For Llama3 70B, consider using Q4_K_M quantization to reduce the memory footprint and potentially improve performance. If that is still too large for the card, a more aggressive quantization level shrinks the model further at a greater cost in accuracy.

Think of it as compressing the model to fit it into a smaller space. By sacrificing some accuracy, you might be able to squeeze Llama3 70B onto your RTX 5000 Ada 32GB and get it running.

2. Lower the Batch Size: A Trade-Off for Speed

Batch size is an important parameter in machine learning. It determines how many samples are processed simultaneously during model training or inference.

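For Llama3 70B, consider reducing the batch size. The memory used for activations and the attention key-value cache grows with the number of sequences processed at once, so a smaller batch lowers the memory requirement and makes it more likely that the model runs on your RTX 5000 Ada 32GB. The weights take the same space regardless of batch size, and total throughput usually drops as the batch shrinks.

Think of it like boarding a train car. Letting fewer people board at once means each trip needs less room, so even a smaller car can handle the crowd; it just takes more trips.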
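The effect of batch size on memory can be sketched with the usual formula for the attention key-value cache. The architecture values below (80 layers, 8 key-value heads, head dimension 128) are assumptions taken from the published Llama 3 70B configuration, and the 8192-token context and 16-bit cache are illustrative choices. Runtimes may add further overhead.

```python
def kv_cache_gb(
    batch_size: int,
    context_tokens: int,
    n_layers: int = 80,        # assumed: Llama 3 70B layer count
    n_kv_heads: int = 8,       # assumed: grouped-query attention KV heads
    head_dim: int = 128,       # assumed: dimension per attention head
    bytes_per_value: int = 2,  # 16-bit cache entries
) -> float:
    """Approximate key-value cache size in gigabytes.

    Each token stores one key and one value vector (the factor 2)
    per KV head in every layer.
    """
    bytes_per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return batch_size * context_tokens * bytes_per_token / 1e9


for batch in (1, 2, 4, 8):
    print(f"batch {batch}: about {kv_cache_gb(batch, 8192):.2f} GB of KV cache")
```

The cache grows linearly with batch size, so halving the batch halves this part of the memory bill while leaving the weights untouched.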

3. Explore alternatives: A Reality Check

If you find that the RTX 5000 Ada 32GB is still not powerful enough for Llama3 70B, even with these optimizations, consider alternative solutions.

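- Cloud-based platforms: Services like Google Colab or Amazon SageMaker give access to GPUs with more memory than a single workstation card, although the hardware you get depends on the plan or instance type you choose.

- More powerful hardware: If you're set on running the model on your own hardware, explore GPUs with more memory, like the NVIDIA A100 or H100, which are available in 80 GB versions, or split the model across several GPUs.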

FAQ

1. What are LLMs, and why are they so popular?

LLMs are powerful AI models trained on vast amounts of text data. This allows them to perform various language-related tasks like generating text, translating languages, and answering questions. Their popularity stems from their ability to understand and generate human-like text, making them valuable tools for various applications.

2. What is quantization?

Quantization is a technique used to reduce the size of a model's weights. Imagine you have a model with weights represented by 32-bit floating-point numbers. Quantization reduces this precision to fewer bits, like 8 bits, making the model smaller and faster to process. However, this comes at the cost of slightly reduced accuracy.
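As a toy illustration of the idea, the sketch below quantizes a handful of floating-point weights to 8-bit integers using a single shared scale, then restores them to see how much precision is lost. This is a simplified symmetric scheme written for this article. Real formats such as Q4_K_M work on blocks of weights with per-block scales and are more involved. The sample weights are made up.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]


# Made-up example weights, for illustration only.
weights = [0.12, -0.53, 0.98, -1.27, 0.0]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("integers:", quantized)
print("restored:", [round(r, 4) for r in restored])
print("largest rounding error:", max_error)
```

Each weight now needs 8 bits instead of 32, plus one scale for the whole group, and the rounding error is bounded by half the scale.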

3. Is it necessary to have all the model weights in memory?

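Not necessarily. For inference, some runtimes can keep only part of the model in GPU memory and hold the remaining layers in system RAM, running those layers on the CPU or moving them to the GPU as needed. This lets a model larger than the GPU's memory run, at the cost of lower speed. Gradient checkpointing is sometimes mentioned in this context, but it is a training technique: it saves memory by discarding intermediate activations and recomputing them during the backward pass, and it does not reduce the memory needed for the weights.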
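When a runtime supports keeping only some layers on the GPU (llama.cpp, for example, has a setting for the number of GPU layers), a rough split can be estimated as below. The 42 GB model size, the 80-layer count, and the 4 GB reserved for the key-value cache and runtime overhead are all assumptions for illustration. The sketch also treats every layer as the same size and ignores the embedding and output tensors.

```python
import math


def gpu_layer_count(model_gb: float, n_layers: int, vram_gb: float, reserve_gb: float) -> int:
    """Estimate how many equally sized layers fit in GPU memory.

    reserve_gb is memory held back for the KV cache and runtime overhead.
    """
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumed values: ~42 GB quantized model, 80 layers, 4 GB held in reserve.
layers_on_gpu = gpu_layer_count(model_gb=42.0, n_layers=80, vram_gb=32.0, reserve_gb=4.0)
print(f"Roughly {layers_on_gpu} of 80 layers on the GPU, the rest in system RAM")
```

The layers left in system RAM run far slower than those on the GPU, so a split like this trades speed for the ability to run the model at all.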

4. What are some use cases for Llama3 70B?

Llama3 70B is a powerful model suitable for various use cases, including:

- Text generation: Create high-quality, coherent and creative text for various purposes, such as fiction writing, summaries, and even dialogue.

- Code generation: Generate code in different programming languages, making it easier to automate tasks and build software.

- Question answering: Answer questions on a wide range of topics, drawing on the knowledge the model absorbed from its training data.

- Summarization: Summarize lengthy articles or documents into concise, informative summaries.

Keywords:

LLM, Llama3 70B, NVIDIA RTX 5000 Ada 32GB, Token Generation Speed, Quantization, Q4_K_M, F16, Batch Size, Gradient Checkpointing, Cloud-based platforms, Powerful hardware, Code generation, Text generation, Question answering, Summarization.