Is the NVIDIA RTX 5000 Ada 32GB a Good Investment for AI Startups?

Introduction

As AI startups race to develop the next groundbreaking application, the choice of hardware becomes crucial. Today's Large Language Models (LLMs) require massive computational power to train and run. For many startups, this creates a dilemma: invest in a powerful GPU like the NVIDIA RTX 5000 Ada 32GB, or opt for a cheaper alternative. This article looks at how the RTX 5000 Ada 32GB performs when running Llama 3 and examines whether it is a good investment for AI startups.

Understanding the RTX 5000 Ada 32GB

The NVIDIA RTX 5000 Ada 32GB is a high-end professional workstation GPU. Its 32GB of GDDR6 memory, combined with the Ada Lovelace architecture, makes it a strong contender for demanding tasks like LLM inference.

Evaluating the RTX 5000 Ada 32GB for LLMs

To understand what the RTX 5000 Ada 32GB can do for AI startups, we need to look at how it performs when running LLMs. We'll focus on Llama 3, a popular open-source LLM, and compare results across two model sizes (8B and 70B) and two weight formats (Q4 and F16).

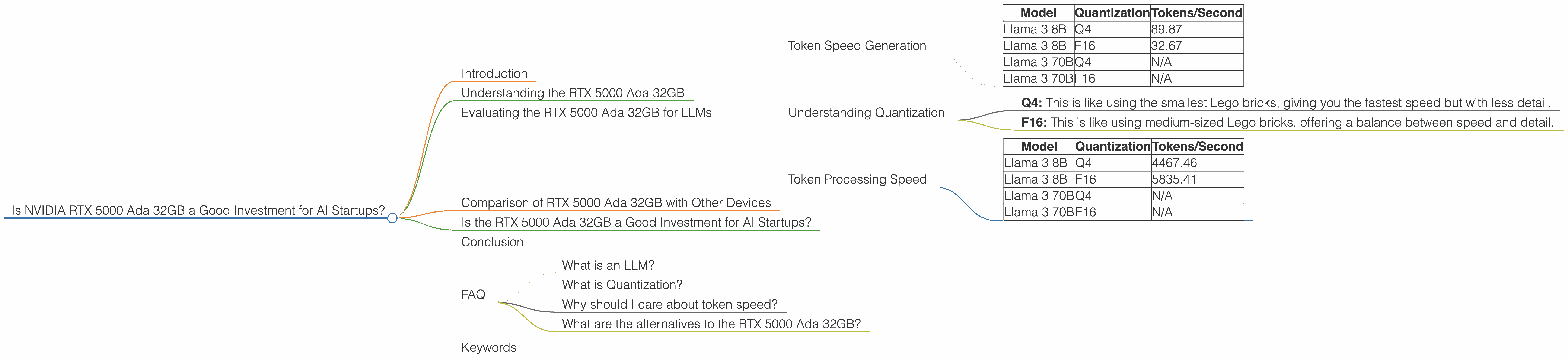

Token Generation Speed

The table below shows token generation speed for the Llama 3 8B and Llama 3 70B models on the RTX 5000 Ada 32GB, with Q4 quantization and with F16 (16-bit floating point) weights.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4 | 89.87 |
| Llama 3 8B | F16 | 32.67 |
| Llama 3 70B | Q4 | N/A |
| Llama 3 70B | F16 | N/A |

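Note: No data is available for the Llama 3 70B model on the RTX 5000 Ada 32GB. Memory is the likely reason: 70 billion parameters at 16 bits each need about 140 GB for the weights alone, and even at roughly 4 bits per weight the model needs around 35 GB or more, which exceeds the card's 32GB. Limited testing may also play a part.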
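The memory argument can be checked with some quick arithmetic. The sketch below is a rough estimate only: it counts weight storage and ignores the KV cache, activations, and runtime overhead. The parameter counts are rounded, and the 4.5 bits per weight used for Q4 is an assumption meant to account for per-block scale factors, not a measured figure.

```python
# Rough weight-memory estimate. Ignores KV cache, activations, and runtime overhead.
GPU_MEMORY_GB = 32  # RTX 5000 Ada capacity as stated in this article


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * bits_per_weight / 8 / 1e9


# Assumed values: rounded parameter counts, 16 bits for F16,
# and about 4.5 bits for a typical 4-bit scheme including block scales.
MODELS = [("Llama 3 8B", 8.0), ("Llama 3 70B", 70.0)]
FORMATS = [("Q4", 4.5), ("F16", 16.0)]

for model_name, params in MODELS:
    for fmt_name, bits in FORMATS:
        need = weight_memory_gb(params, bits)
        verdict = "fits" if need < GPU_MEMORY_GB else "does not fit"
        print(f"{model_name} {fmt_name}: ~{need:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions both 8B variants fit on the card, while both 70B variants exceed 32GB before any working memory is counted.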

Understanding Quantization

Quantization stores a model's parameters at lower numerical precision, so the model takes less memory and generates text faster, at some cost in output quality. Think of it as building a Lego castle from a coarser set of bricks: the castle goes up faster and the box is lighter, but some fine detail is lost.

- Q4: Weights are stored in roughly 4 bits each. These are the coarsest bricks here, giving the smallest memory footprint and the fastest generation, with some loss of detail.

- F16: Weights are stored as 16-bit floating-point numbers, the usual unquantized baseline. These are the finer bricks, preserving more detail but using about four times the memory per weight.
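To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization applied to one small block of weights. It is a simplified illustration, not the Q4 format used in the benchmarks above; real 4-bit formats are more elaborate. The function names and the sample weights are made up for this example.

```python
def quantize_4bit(weights):
    """Map one block of floats to integer codes in [-7, 7] plus a shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
block = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]

codes, scale = quantize_4bit(block)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}")
print(f"max round-trip error: {max_error:.4f}")
```

Each weight now needs only a small integer plus a share of one scale value, which is where the memory savings come from. The round-trip error is the "lost detail" in the Lego analogy.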

As the generation table shows, the RTX 5000 Ada 32GB handles the Llama 3 8B model well, generating about 90 tokens per second at Q4 and about 33 at F16. Its performance with larger models like the Llama 3 70B is unknown.

Token Processing Speed

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4 | 4467.46 |
| Llama 3 8B | F16 | 5835.41 |
| Llama 3 70B | Q4 | N/A |
| Llama 3 70B | F16 | N/A |

This table shows how quickly the RTX 5000 Ada 32GB processes tokens, as opposed to generating them. For the Llama 3 8B model, the card processes about 4,467 tokens per second at Q4 and about 5,835 at F16, so F16 is the faster of the two here, the reverse of the generation results. Again, there are no data points for the Llama 3 70B model.
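The two tables can be combined into a rough estimate of how long a single request takes. The sketch below assumes that processing speed applies to the input (prompt) tokens and generation speed applies to the output tokens, which is an interpretation of the tables rather than something the data states. The request size is hypothetical.

```python
# Llama 3 8B throughput from the two tables in this article (tokens/second).
SPEEDS = {
    "Q4": {"processing": 4467.46, "generation": 89.87},
    "F16": {"processing": 5835.41, "generation": 32.67},
}


def request_seconds(prompt_tokens: float, output_tokens: float, fmt: str) -> float:
    """Estimated time for one request: read the prompt, then generate the reply."""
    speed = SPEEDS[fmt]
    return prompt_tokens / speed["processing"] + output_tokens / speed["generation"]


# Hypothetical request: 1000 prompt tokens in, 250 tokens out.
for fmt in SPEEDS:
    total = request_seconds(1000, 250, fmt)
    print(f"{fmt}: about {total:.1f} s per request")
```

Because generation is so much slower than processing, the generation term dominates for this request. Under these assumptions Q4 finishes sooner than F16 even though F16 processes the prompt faster.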

Comparison of RTX 5000 Ada 32GB with Other Devices

The RTX 5000 Ada 32GB performs well with the smaller Llama 3 8B model, but judging its suitability for AI startups also means comparing it with other commonly used devices.

Unfortunately, we cannot offer a direct comparison in this article, as the available data covers only the RTX 5000 Ada 32GB.

Is the RTX 5000 Ada 32GB a Good Investment for AI Startups?

The answer depends mainly on the size of the model and the startup's specific requirements.

For startups working with smaller models like the Llama 3 8B, the RTX 5000 Ada 32GB can be a good investment. It generates about 90 tokens per second at Q4 and processes several thousand tokens per second in either format.

For larger models, the RTX 5000 Ada 32GB might not be the ideal choice. The lack of data for the Llama 3 70B suggests a limitation, most plausibly that the model does not fit in the card's 32GB of memory.

It's also important to consider the cost of the RTX 5000 Ada 32GB. While it offers strong performance, it's a considerable investment that could strain the budget of an early-stage startup. Startups should weigh the costs against the benefits carefully before making a decision.
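One way to frame the cost side is to spread the purchase price over the tokens the card could generate during its working life. The sketch below is only a framing device: the price, lifetime, and utilization are placeholders to be replaced with your own figures, not quoted prices or measured usage. Only the generation speed comes from this article. It also leaves out power, hosting, and staff costs.

```python
def cost_per_million_tokens(card_price_usd: float, lifetime_years: float,
                            utilization: float, tokens_per_second: float) -> float:
    """Amortized hardware cost per million generated tokens (hardware only)."""
    active_seconds = lifetime_years * 365 * 24 * 3600 * utilization
    total_tokens = active_seconds * tokens_per_second
    return card_price_usd / total_tokens * 1_000_000


estimate = cost_per_million_tokens(
    card_price_usd=4000.0,    # placeholder, substitute a real quote
    lifetime_years=3.0,       # placeholder
    utilization=0.25,         # placeholder: share of time spent generating
    tokens_per_second=89.87,  # Llama 3 8B at Q4, from the generation table
)
print(f"about ${estimate:.2f} per million generated tokens")
```

Changing the utilization shows how sensitive the result is to actual usage: a card that sits idle most of the day costs far more per token than one kept busy.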

Conclusion

The NVIDIA RTX 5000 Ada 32GB shines with smaller models like the Llama 3 8B, offering fast token generation and processing. Its performance with larger models remains unclear, and the Llama 3 70B likely exceeds its memory. AI startups should consider their model size and budget before deciding whether the RTX 5000 Ada 32GB is a worthwhile investment.

FAQ

What is an LLM?

LLMs, or Large Language Models, are AI systems trained on vast amounts of text data, enabling them to understand and generate human-like language. Think of them as super-smart text assistants, capable of tasks like writing stories, translating languages, and answering questions.

What is Quantization?

Quantization is a technique used to make LLMs smaller and faster. It's like building a Lego castle from a coarser set of bricks. By storing the model's parameters at lower precision, the model needs less memory and runs faster, at the cost of some fine detail.

Why should I care about token speed?

Tokens are the chunks of text a language model reads and writes. A token can be a whole word, part of a word, or a punctuation mark. The faster a model can process and generate tokens, the quicker it can write text, translate languages, and answer questions. Think of it like typing on a faster keyboard: you can write more text in less time!

What are the alternatives to the RTX 5000 Ada 32GB?

There are various other GPUs available, each with its own strengths and weaknesses. Consider exploring options like the NVIDIA RTX 4000 series, the A100, and the H100.
