Is NVIDIA RTX 4000 Ada 20GB x4 Powerful Enough for Llama3 8B?

Introduction

Large language models (LLMs) can generate human-quality text, translate languages, write creative content, and answer questions informatively. Running them locally can still be a challenge, even for a comparatively small model like Llama 3 8B. In this deep dive, we'll explore whether the NVIDIA RTX 4000 Ada 20GB x4 configuration, a popular choice among developers, is up to the task.

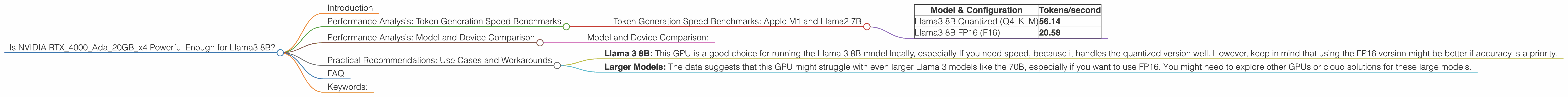

Performance Analysis: Token Generation Speed Benchmarks

Let's dive straight into the numbers! Here's a breakdown of how the NVIDIA RTX 4000 Ada 20GB x4 performs with Llama 3 8B, focusing on the key metric of tokens per second (tokens/s), which measures how fast the model generates text.

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB x4 and Llama3 8B

| Model & Configuration | Tokens/second |
|---|---|
| Llama3 8B Quantized (Q4_K_M) | 56.14 |
| Llama3 8B FP16 (F16) | 20.58 |

Remember: These numbers are for the NVIDIA RTX 4000 Ada 20GB x4 configuration only. The available data does not cover other devices.

Important Notes:

- Q4_K_M: A 4-bit quantization format from llama.cpp's "k-quant" family; the "M" marks the medium variant, which keeps some tensors at higher precision. Quantization reduces the size of the model by representing its numbers with fewer bits. Think of it like using a smaller, less detailed map: you lose some precision but gain speed and save memory.

- F16: This refers to half-precision floating-point numbers, which are smaller and faster to process than full precision (FP32).
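To make "representing numbers with fewer bits" concrete, here is a minimal sketch of symmetric 4-bit quantization with a single shared scale. It is a toy illustration only, not the scheme llama.cpp actually uses for its 4-bit formats; the function names and the sample weights are made up for the example.

```python
def quantize_4bit(values):
    """Map floats to signed 4-bit integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs else 1.0  # largest magnitude maps to code 7
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.05, 0.41]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

# The rounding error per value is at most half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("codes:", codes)
print("max error:", max_error, "step size:", scale)
```

Each weight now needs 4 bits instead of 16, at the cost of the small reconstruction error the script prints.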

Let's break down the numbers:

- The NVIDIA RTX 4000 Ada 20GB x4 generates text at a respectable speed with the 8B Llama3 model, especially in the quantized configuration.

- The quantized version (Q4_K_M) is roughly 2.7 times faster than FP16 (56.14 vs. 20.58 tokens/s), although the model's accuracy might be slightly lower.
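The size of the gap follows directly from the two benchmark figures in the table. A short sketch of the arithmetic (the variable names are ours):

```python
# Tokens/second figures taken from the benchmark table above.
quantized_tps = 56.14
f16_tps = 20.58

speedup = quantized_tps / f16_tps
print(f"Quantized is about {speedup:.2f}x faster than F16")
```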

Performance Analysis: Model and Device Comparison

Now, let's get a bit more granular and see how the NVIDIA RTX 4000 Ada 20GB x4 stacks up against other devices.

Important Note: The dataset used here covers only the NVIDIA RTX 4000 Ada 20GB x4 configuration, so a side-by-side comparison is not possible.

Model and Device Comparison:

No results for other devices or models are available in the provided data.

Practical Recommendations: Use Cases and Workarounds

Based on the data we have, here are some practical recommendations for using the NVIDIA RTX 4000 Ada 20GB x4 with Llama 3 8B:

- Llama 3 8B: This setup is a good choice for running the Llama 3 8B model locally, especially if you need speed, because it handles the quantized version well. If accuracy is the priority, the FP16 version might be the better option, at less than half the generation speed.

- Larger Models: This setup might struggle with larger Llama 3 models like the 70B, especially in FP16, where the weights alone take far more memory than for the 8B model. You might need to explore other GPUs or cloud solutions for these large models.
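A back-of-the-envelope estimate shows why the larger models are harder to fit. The sketch below assumes that "x4" means four 20GB cards (80GB of VRAM in total), uses nominal parameter counts of 8 and 70 billion, and counts weights only, ignoring the KV cache and runtime overhead. The nominal 4 bits per weight is also a simplification: real 4-bit formats use somewhat more on average.

```python
def weights_gb(params_billions, bits_per_weight):
    """Approximate weight storage in GB (10^9 bytes), weights only."""
    return params_billions * bits_per_weight / 8


# Assumption: four cards with 20 GB each.
TOTAL_VRAM_GB = 4 * 20

for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for fmt, bits in [("F16", 16), ("4-bit (nominal)", 4)]:
        size = weights_gb(params, bits)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{name} {fmt}: ~{size:.0f} GB of weights, {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 70B model in F16 needs about 140 GB for weights alone, well beyond 80 GB, while a 4-bit version lands near 35 GB before overhead.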

FAQ

Q: What is quantization, and why is it important?

A: Quantization is a technique that reduces the size of a model by representing its numbers with fewer bits. It matters because a smaller model needs less memory and usually generates tokens faster, at the cost of some precision.

Q: What are tokens, and how are they related to text generation?

A: Tokens are the fundamental building blocks of text in the world of LLMs. They can be words, parts of words, or even punctuation marks. When an LLM generates text, it's essentially predicting a sequence of tokens. Tokens per second (tokens/s) is a measure of how quickly an LLM can generate text.
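To translate tokens/s into waiting time, divide the length of the response by the generation speed. A minimal sketch using the two benchmark figures from this article; the 500-token response length is an arbitrary example, and prompt processing time is ignored.

```python
def seconds_to_generate(num_tokens, tokens_per_second):
    """Time to generate num_tokens at a constant generation speed."""
    return num_tokens / tokens_per_second


response_tokens = 500  # arbitrary example length

for label, tps in [("quantized", 56.14), ("F16", 20.58)]:
    secs = seconds_to_generate(response_tokens, tps)
    print(f"{label}: {response_tokens} tokens in about {secs:.1f} s")
```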
