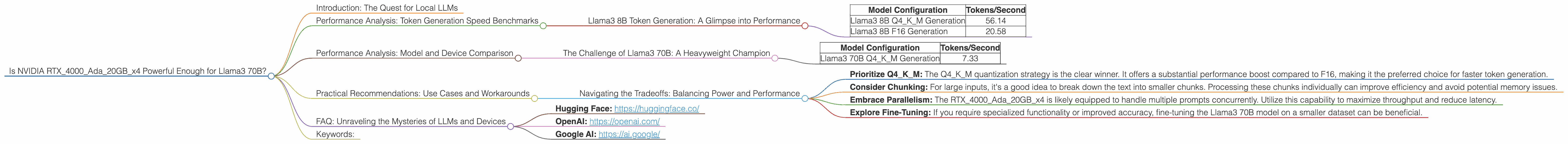

Is NVIDIA RTX 4000 Ada 20GB x4 Powerful Enough for Llama3 70B?

Introduction: The Quest for Local LLMs

The world of artificial intelligence is buzzing with excitement about Large Language Models (LLMs), like the impressive Llama 3 series. These models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But using them often requires serious processing power and, when hosted, a hefty cloud bill. That's where local LLMs come in: running these models directly on your own hardware.

This article dives deep into the capabilities of a four-card NVIDIA RTX 4000 Ada 20GB setup (RTX 4000 Ada 20GB x4, 80 GB of VRAM in total), evaluating its suitability for running the Llama3 70B model. We'll explore performance benchmarks, compare different configurations, and offer practical advice for developers looking to unleash the power of local LLMs. So, grab your coffee, put on your thinking cap, and let's embark on this computational journey!

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B Token Generation: A Glimpse into Performance

Before diving into the behemoth that is Llama3 70B, let's take a look at the Llama3 8B model. Think of this as a warm-up, where we gauge the RTX 4000 Ada 20GB x4's performance with a much smaller model.

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 8B Q4_K_M Generation | 56.14 |
| Llama3 8B F16 Generation | 20.58 |

The table above shows the token generation speed for Llama3 8B with two different precision configurations. Let's break it down:

- Q4_K_M: This configuration uses 4-bit quantization for the model weights. Quantization is essentially a way to compress the model, reducing the memory footprint and potentially speeding up inference.

- F16: This configuration uses 16-bit floating-point precision. While it requires more memory, it generally yields higher accuracy.

As you can see, the RTX 4000 Ada 20GB x4 performs significantly better with the Q4_K_M configuration, generating about 2.7 times as many tokens per second as the F16 configuration (56.14 versus 20.58).

Key Takeaway: Quantization can considerably boost token generation speed on the RTX 4000 Ada 20GB x4.
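The ratio behind that takeaway can be checked with a few lines of Python. This is only a sketch: the two throughput numbers come from the table above, while the helper name `relative_speed` and the dictionary labels are made up for illustration.

```python
# Token generation speeds copied from the table above (tokens/second).
speeds = {
    "Llama3 8B 4-bit": 56.14,
    "Llama3 8B F16": 20.58,
}


def relative_speed(speeds: dict[str, float]) -> dict[str, float]:
    """Express each throughput as a multiple of the slowest configuration."""
    slowest = min(speeds.values())
    return {name: value / slowest for name, value in speeds.items()}


for name, factor in relative_speed(speeds).items():
    # Average time spent producing a single token, in milliseconds.
    ms_per_token = 1000 / speeds[name]
    print(f"{name}: {factor:.2f}x the slowest, {ms_per_token:.1f} ms per token")
```

Run as-is, it reports the 4-bit configuration at roughly 2.7 times the F16 speed.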

Performance Analysis: Model and Device Comparison

The Challenge of Llama3 70B: A Heavyweight Champion

Now, let's get to the real deal: the Llama3 70B model. This model packs a massive 70 billion parameters, making it a heavyweight in the LLM world. Let's see how the RTX 4000 Ada 20GB x4 fares:

| Model Configuration | Tokens/Second |
|---|---|
| Llama3 70B Q4_K_M Generation | 7.33 |

The Llama3 70B model is a formidable contender! The RTX 4000 Ada 20GB x4 handles it with the Q4_K_M configuration, but at 7.33 tokens per second it generates text roughly 7.7 times slower than Llama3 8B at the same quantization (56.14 tokens per second). This is expected; a larger model naturally requires more computational resources.

Key Takeaway: While the RTX 4000 Ada 20GB x4 can handle Llama3 70B, the performance is noticeably impacted by the model's sheer size.
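To put the 70B numbers in context, here is a back-of-the-envelope sketch in Python. It rests on assumptions that are not part of the benchmarks: an average of about 4.8 bits per weight for the 4-bit model, a 500-token response as an example length, and a memory estimate that counts weights only (no KV cache or runtime overhead).

```python
GPU_COUNT = 4
VRAM_PER_GPU_GB = 20
TOKENS_PER_S_70B = 7.33  # from the table above

# Assumption: average bits per weight for a 4-bit quantized model.
ASSUMED_4BIT_BPW = 4.8


def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float, total_vram_gb: float) -> bool:
    """True if the weights alone fit in the combined VRAM."""
    return weights_gb(params_billion, bits_per_weight) <= total_vram_gb


def seconds_for(tokens: int, tokens_per_s: float) -> float:
    """Time needed to generate a given number of tokens."""
    return tokens / tokens_per_s


total_vram = GPU_COUNT * VRAM_PER_GPU_GB
for label, bpw in [("4-bit", ASSUMED_4BIT_BPW), ("F16", 16)]:
    size = weights_gb(70, bpw)
    verdict = "fits" if fits(70, bpw, total_vram) else "does not fit"
    print(f"70B {label}: ~{size:.0f} GB of weights, {verdict} in {total_vram} GB")

print(f"500 tokens take ~{seconds_for(500, TOKENS_PER_S_70B):.0f} s")
```

Under these assumptions the 4-bit weights fit in the combined 80 GB with room to spare, while F16 weights would not, and a 500-token answer takes a little over a minute.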

Practical Recommendations: Use Cases and Workarounds

Navigating the Tradeoffs: Balancing Power and Performance

For developers, optimizing for token generation speed is crucial. Here's a breakdown of how to use the RTX 4000 Ada 20GB x4 effectively with Llama3 70B:

- Prioritize Q4_K_M: The Q4_K_M quantization strategy is the clear winner. On Llama3 8B it was about 2.7 times faster than F16, and for Llama3 70B the F16 weights alone (about 140 GB at 2 bytes per parameter) would not fit in the 80 GB of combined VRAM.

- Consider Chunking: For large inputs, it's a good idea to break down the text into smaller chunks. Processing these chunks individually keeps each prompt within the context window and avoids potential memory issues.

- Embrace Parallelism: With four GPUs, the setup may be able to serve several prompts concurrently, depending on the inference software. Batching requests can raise total throughput, though it does not necessarily reduce the latency of any single request.

- Explore Fine-Tuning: If you require specialized functionality or improved accuracy, fine-tuning the Llama3 70B model on a smaller, task-specific dataset can be beneficial.

Key Takeaway: Carefully consider model size, quantization, and deployment strategies to optimize the performance of Llama3 70B on the RTX 4000 Ada 20GB x4.
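The chunking suggestion above could look something like the following Python sketch. It splits on whitespace and counts words, which is only a rough stand-in for tokens (real token counts depend on the model's tokenizer). The function name `chunk_words` and its default sizes are hypothetical choices for illustration.

```python
def chunk_words(text: str, max_words: int = 200, overlap: int = 20) -> list[str]:
    """Split text into word-based chunks that overlap slightly.

    The overlap repeats the last few words of one chunk at the start of
    the next, so context is not cut off abruptly at chunk boundaries.
    """
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("need max_words > 0 and 0 <= overlap < max_words")

    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        # Stop once the current chunk has reached the end of the text.
        if start + max_words >= len(words):
            break
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, max_words=4, overlap=1):
    print(chunk)
```

Each chunk can then be sent to the model as a separate prompt. Whether overlap helps depends on the task, so treat the defaults as a starting point rather than a recommendation.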

FAQ: Unraveling the Mysteries of LLMs and Devices

Q: What does "Q4_K_M" mean?

A: "Q4_K_M" refers to a specific quantization technique: a 4-bit "k-quant" preset (medium variant) used by llama.cpp. Quantization reduces the precision of the model's weights, shrinking its memory footprint and often improving inference speed. Think of it like compressing an image; it retains the essential details but sacrifices some fidelity.
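To make the idea concrete, here is a toy Python sketch of 4-bit quantization on a small block of weights. It is a deliberately simplified symmetric scheme with one scale per block, not the actual format used in the benchmarks, and the function names are hypothetical.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to signed integer codes that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits; codes span -8..7
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak > 0 else 1.0
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [1.0, -0.5, 0.25, 0.0]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)

print("codes:   ", codes)
print("restored:", [round(v, 3) for v in restored])
```

Each weight is stored as a small integer plus a shared scale, so the restored values are close to the originals but not identical. That gap is the fidelity sacrificed in exchange for a smaller model.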

Q: Are there any other devices that are better suited for larger LLMs?

A: Absolutely! Devices like the NVIDIA A100 and H100 are known for their high memory bandwidth and computational power, making them ideal for running large-scale LLMs.

Q: Can I run Llama3 70B on my CPU?

A: It's possible, but not recommended. GPUs handle the highly parallel arithmetic of LLM inference far more efficiently than CPUs, so you'll likely see significantly slower performance on a CPU.

Q: Where can I learn more about LLMs and their applications?

A: There are countless resources available online, including:

- Hugging Face: https://huggingface.co/

- OpenAI: https://openai.com/

- Google AI: https://ai.google/

Keywords:

NVIDIA RTX 4000 Ada 20GB x4, Llama3 70B, Llama3 8B, LLM, Large Language Model, Deep Learning, Token Generation, Quantization Q4_K_M, Processing Speed, GPU, Hardware, Performance Benchmarks, Local LLMs, Inference, Fine-tuning, Developer, Use Cases, Workarounds, AI, Artificial Intelligence