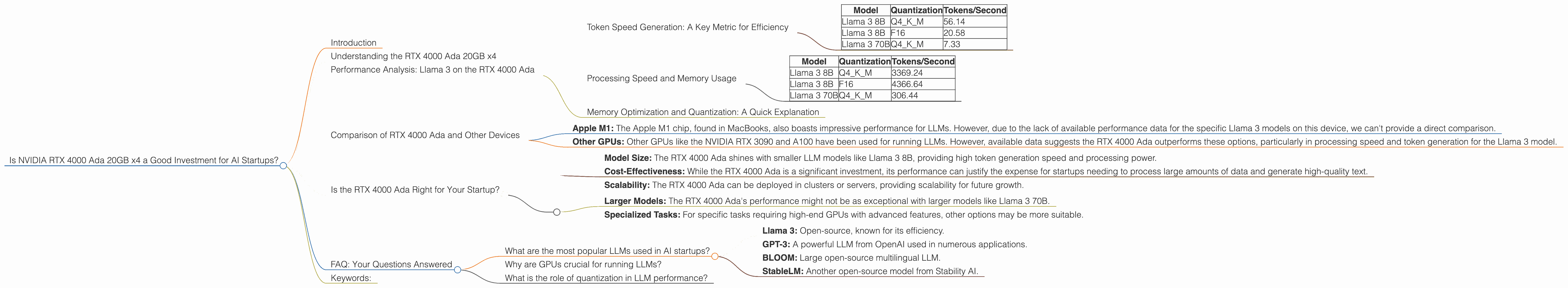

Is NVIDIA RTX 4000 Ada 20GB x4 a Good Investment for AI Startups?

Introduction

The world of AI is buzzing with excitement, and Large Language Models (LLMs) are at the heart of it all. These powerful models can generate text, translate languages, write different kinds of creative content, and answer your questions. But training and running them requires serious computational horsepower.

If you're an AI startup looking to build your own LLM-powered products, choosing the right hardware is crucial. One option is the NVIDIA RTX 4000 Ada 20GB x4: four RTX 4000 Ada cards in a single system. But is it a good investment for your startup? Let's dive into the numbers and see whether this setup can help your LLM dreams come true.

Understanding the RTX 4000 Ada 20GB x4

The NVIDIA RTX 4000 Ada is a workstation graphics card built on the Ada Lovelace architecture and designed for demanding tasks like AI development and machine learning. Each card carries 20GB of GDDR6 memory, so the x4 configuration provides 80GB of GPU memory in total, enough to split models across cards that would not fit on a single one.
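Why four cards matter becomes clearer with a rough estimate of how much memory the model weights need. The sketch below multiplies parameter count by bits per weight. It assumes nominal parameter counts, an approximate average of 4.85 bits per weight for Q4_K_M (the format mixes block types, so this is a ballpark figure), and it ignores the KV cache, activations and runtime overhead, so real memory use is higher.

```python
# Rough weight-memory estimate for the four-card setup.
# ASSUMPTIONS: nominal parameter counts; ~4.85 bits/weight for Q4_K_M is an
# approximate average (the format mixes block types); KV cache, activations
# and runtime overhead are ignored, so real memory use is higher.

CARD_GB = 20      # memory per RTX 4000 Ada card
NUM_CARDS = 4     # the "x4" configuration

BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
PARAMS_BILLIONS = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}


def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (10**9 bytes)."""
    return params_billions * bits_per_weight / 8


for model, params in PARAMS_BILLIONS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bits)
        one_card = "yes" if size <= CARD_GB else "no"
        four_cards = "yes" if size <= CARD_GB * NUM_CARDS else "no"
        print(f"{model:<12} {fmt:<7} ~{size:6.1f} GB  "
              f"fits one card: {one_card:<3}  fits four cards: {four_cards}")
```

Under these assumptions, Llama 3 70B at Q4_K_M (roughly 42 GB) only fits once it is split across cards, while the 70B model at F16 (about 140 GB) does not fit even in 80 GB.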

But how does this setup actually perform when put to the test with LLMs? Let's break down the details.

Performance Analysis: Llama 3 on the RTX 4000 Ada

We'll focus on the popular Llama 3 model family, analyzing its performance on the RTX 4000 Ada 20GB x4. We'll consider both the 8B and 70B variants to get a broader picture of how this setup handles different model sizes.

Token Generation Speed: A Key Metric for Efficiency

One of the most important factors when choosing a GPU for LLMs is token generation speed. This metric tells us how quickly the model can produce new output tokens, one after another, which determines how fast text appears for the user.

Think of it like this: imagine you're writing a story on a super-fast typewriter. Each keystroke corresponds to a token, and the faster the typewriter, the more tokens you can produce per second. The RTX 4000 Ada 20GB x4 is our high-speed typewriter for LLMs.

| Model | Quantization / precision | Generation speed (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 56.14 |
| Llama 3 8B | F16 | 20.58 |
| Llama 3 70B | Q4_K_M | 7.33 |

Key Findings:

- Llama 3 8B shines: The setup delivers fast token generation with the 8B model. With Q4_K_M (a 4-bit quantization format that shrinks the weights) it reaches 56.14 tokens/s, about 2.7 times the 20.58 tokens/s of F16 (unquantized 16-bit floating-point weights).

- 70B is much slower: At 7.33 tokens/s, the 70B model is still usable but roughly 7.7 times slower than the 8B model at the same quantization. This is expected: with almost nine times as many parameters, far more weight data must be read from memory for every generated token.
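To translate these rates into something a user would notice, the short sketch below computes how long a response takes at each measured speed and reproduces the two ratios quoted above. The 500-token response length is a hypothetical example, not part of the benchmark.

```python
# Token-generation rates from the table above (tokens/second).
GEN_TPS = {
    ("Llama 3 8B", "Q4_K_M"): 56.14,
    ("Llama 3 8B", "F16"): 20.58,
    ("Llama 3 70B", "Q4_K_M"): 7.33,
}

RESPONSE_TOKENS = 500  # hypothetical answer length


def seconds_to_generate(n_tokens: int, tokens_per_second: float) -> float:
    """Time to emit n_tokens at a constant generation rate."""
    return n_tokens / tokens_per_second


for (model, fmt), tps in GEN_TPS.items():
    print(f"{model} {fmt}: {seconds_to_generate(RESPONSE_TOKENS, tps):.1f} s")

q4_vs_f16 = GEN_TPS[("Llama 3 8B", "Q4_K_M")] / GEN_TPS[("Llama 3 8B", "F16")]
small_vs_large = GEN_TPS[("Llama 3 8B", "Q4_K_M")] / GEN_TPS[("Llama 3 70B", "Q4_K_M")]
print(f"8B Q4_K_M vs F16: {q4_vs_f16:.2f}x faster")
print(f"8B vs 70B (both Q4_K_M): {small_vs_large:.2f}x faster")
```

With these inputs, a 500-token answer takes roughly 9 seconds with Llama 3 8B at Q4_K_M, 24 seconds at F16, and 68 seconds with Llama 3 70B.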

Prompt Processing Speed

Another crucial aspect is prompt processing speed: how quickly the GPU can read in the input prompt before generation starts. Because all prompt tokens are known in advance, they can be processed in parallel, which is why these numbers are far higher than the generation speeds above. Think of it as our typewriter's brain reading your instructions before it starts typing.

| Model | Quantization / precision | Prompt processing (tokens/s) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 3369.24 |
| Llama 3 8B | F16 | 4366.64 |
| Llama 3 70B | Q4_K_M | 306.44 |

Key Findings:

- Processing Powerhouse: The setup processes prompts quickly for both models: over 3,000 tokens/s for Llama 3 8B and 306.44 tokens/s for Llama 3 70B.

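- Precision Matters, but Differently: For prompt processing, F16 (4366.64 tokens/s) is about 30% faster than Q4_K_M (3369.24 tokens/s), the reverse of the generation results. Prompt processing is dominated by computation, where unpacking 4-bit weights likely adds work, while token generation is dominated by reading weights from memory, where smaller weights help.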
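Because a real request involves both phases, the two tables can be combined into a rough end-to-end latency estimate: prompt tokens divided by the prompt processing rate, plus output tokens divided by the generation rate. This is a sketch under the assumption that the benchmark rates hold for the chosen lengths; the 2,000-token prompt and 300-token answer are hypothetical.

```python
# Rough end-to-end latency for one request on the four-card setup:
# the prompt is processed at the prompt-processing rate, then output tokens
# are generated one at a time at the generation rate.
# ASSUMPTION: the benchmark rates hold for these prompt and output lengths;
# real rates vary with context length, batching and software version.

RATES = {  # (prompt tokens/s, generation tokens/s) from the two tables
    "Llama 3 8B Q4_K_M": (3369.24, 56.14),
    "Llama 3 8B F16": (4366.64, 20.58),
    "Llama 3 70B Q4_K_M": (306.44, 7.33),
}


def request_latency(prompt_tokens: int, output_tokens: int,
                    prompt_tps: float, gen_tps: float) -> tuple[float, float, float]:
    """Return (prefill seconds, generation seconds, total seconds)."""
    prefill = prompt_tokens / prompt_tps
    generation = output_tokens / gen_tps
    return prefill, generation, prefill + generation


PROMPT_TOKENS, OUTPUT_TOKENS = 2000, 300  # hypothetical request
for name, (pp, tg) in RATES.items():
    prefill, generation, total = request_latency(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{name:<20} prefill {prefill:5.2f} s + generation {generation:5.2f} s "
          f"= {total:5.2f} s")
```

For this hypothetical request, Llama 3 8B at Q4_K_M finishes in about 6 seconds versus about 15 at F16: F16's faster prompt processing saves only around 0.1 seconds, while its slower generation costs over 9.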

Memory Optimization and Quantization: A Quick Explanation

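Quantization is a technique used to reduce the memory footprint of LLMs by storing each weight with fewer bits. F16 uses 16 bits per weight; Q4_K_M stores most weights as 4-bit values in small blocks that share a scaling factor, keeping a few tensors at somewhat higher precision. Think of it like rounding prices to the nearest dollar instead of keeping every cent: the list takes less space and is quicker to read, at the cost of a little precision. Because generating each token means reading every weight from memory, smaller weights are why Q4_K_M generates text faster on this GPU, even though it does not speed up prompt processing.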
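To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in Python. It is a simplified symmetric scheme chosen for illustration, not llama.cpp's actual Q4_K_M layout, which uses super-blocks with additional scale and offset values; the block size of 32 and the Gaussian test weights are assumptions.

```python
import random

BLOCK_SIZE = 32  # weights per block; an illustrative choice


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization: one scale per block, ints in [-8, 7]."""
    amax = max(abs(w) for w in weights)
    scale = amax / 7 if amax > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Map the 4-bit integers back to approximate float weights."""
    return [scale * v for v in q]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)

max_err = max(abs(w - r) for w, r in zip(weights, restored))
# 4 bits per weight plus one scale per block, assuming the scale is stored
# as a 16-bit float (Python keeps it as a 64-bit float here)
bits_per_weight = 4 + 16 / BLOCK_SIZE

print(f"max abs error {max_err:.5f} (bound: scale/2 = {scale / 2:.5f})")
print(f"{bits_per_weight} bits per weight vs 16 for F16 "
      f"({16 / bits_per_weight:.1f}x smaller)")
```

Rounding to the nearest integer keeps every reconstructed weight within half a scale step of the original, which is the "little precision" traded for a roughly 3.6x smaller footprint in this simplified scheme.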

Comparison of RTX 4000 Ada and Other Devices

While the RTX 4000 Ada performs well, it's helpful to compare its performance with other popular devices used for running LLMs. Let's take a look at some key competitors.

Apple M1: The Apple M1 chip, found in MacBooks, also boasts impressive performance for LLMs. However, due to the lack of available performance data for the specific Llama 3 models on this device, we can't provide a direct comparison.

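Other GPUs: The NVIDIA RTX 3090 and A100 are also widely used for running LLMs. No head-to-head results for these cards are included here, so this article cannot rank them directly. On paper, however, each RTX 3090 (936 GB/s) and A100 (roughly 1.5 to 2 TB/s) has far more memory bandwidth than an RTX 4000 Ada card (360 GB/s), and token generation speed tends to track memory bandwidth, so a single RTX 3090 or A100 would likely generate tokens faster than a single RTX 4000 Ada.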
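When benchmark data is missing, a common back-of-envelope check is a memory-bandwidth ceiling: generating one token requires reading roughly every weight once, so tokens per second cannot exceed bandwidth divided by the size of the weights. The sketch below applies this using vendor bandwidth specs and approximate model file sizes (both assumptions). It also assumes that with layer-split multi-GPU inference the cards take turns on each token, so only one card's bandwidth counts, and it ignores KV-cache traffic.

```python
# Back-of-envelope ceiling for single-stream token generation:
# each new token requires reading (roughly) every weight once, so
#   tokens/s <= memory bandwidth / size of the weights.
# ASSUMPTIONS: vendor bandwidth specs per card; approximate model file
# sizes; with layer-split multi-GPU inference the cards take turns on each
# token, so one card's bandwidth is used; KV-cache traffic is ignored.

BANDWIDTH_GB_S = {"RTX 4000 Ada": 360, "RTX 3090": 936, "A100 40GB": 1555}
MODEL_GB = {
    "Llama 3 8B Q4_K_M": 4.9,
    "Llama 3 8B F16": 16.1,
    "Llama 3 70B Q4_K_M": 42.5,
}


def max_tokens_per_second(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on generation speed when memory bandwidth is the limit."""
    return bandwidth_gb_s / model_gb


for gpu, bw in BANDWIDTH_GB_S.items():
    for model, size in MODEL_GB.items():
        print(f"{gpu:<13} {model:<19} <= {max_tokens_per_second(bw, size):6.1f} tokens/s")
```

For the RTX 4000 Ada, these ceilings (about 73, 22 and 8.5 tokens/s) sit just above the measured 56.14, 20.58 and 7.33 tokens/s, which is consistent with generation being largely bandwidth-bound on this card. It is an estimate, not a benchmark.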

Is the RTX 4000 Ada Right for Your Startup?

The RTX 4000 Ada 20GB x4 can be a powerful tool for AI startups working with LLMs, especially when considering the following factors:

- Model Size: The RTX 4000 Ada setup shines with smaller models like Llama 3 8B, providing fast token generation and prompt processing.

- Cost-Effectiveness: While the RTX 4000 Ada setup is a significant investment, its performance can justify the expense for startups that run enough inference to keep it busy. Whether it beats renting cloud GPUs depends largely on how heavily it will be used.

- Scalability: The RTX 4000 Ada can be deployed in clusters or servers, providing scalability for future growth.
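A simple way to test the cost argument is to estimate the amortized cost per million generated tokens. The sketch below adds hardware amortization to electricity and divides by the tokens produced. Every number in the example call is a hypothetical placeholder, not a quote; only the 56.14 tokens/s rate comes from the benchmark above.

```python
# Amortized cost per million generated tokens for an owned server.
# Every number in the example call is a HYPOTHETICAL placeholder; use your
# own hardware quotes, power prices and measured throughput.

HOURS_PER_YEAR = 365 * 24


def cost_per_million_tokens(hardware_usd: float, lifetime_years: float,
                            power_kw: float, usd_per_kwh: float,
                            tokens_per_second: float, utilization: float) -> float:
    """Hardware amortization plus electricity, divided by tokens produced."""
    hardware_per_hour = hardware_usd / (lifetime_years * HOURS_PER_YEAR)
    # Assumes the machine runs (and draws this power) around the clock.
    power_per_hour = power_kw * usd_per_kwh
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return (hardware_per_hour + power_per_hour) / tokens_per_hour * 1_000_000


# Hypothetical: $8,000 server, 3-year life, 0.8 kW, $0.15/kWh,
# 56.14 tokens/s (Llama 3 8B Q4_K_M, single stream), busy 50% of the time.
cost = cost_per_million_tokens(8000, 3, 0.8, 0.15, 56.14, 0.5)
print(f"~${cost:.2f} per million tokens")
```

The benchmark rate is for a single stream, and servers that batch many requests typically achieve higher aggregate throughput, so treat the result as a pessimistic starting point. Comparing it with the per-token price of a hosted API or the hourly price of a rented GPU is a quick sanity check.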

However, there are some points to consider:

- Larger Models: Performance drops sharply with larger models. Llama 3 70B reaches only 7.33 tokens/s even with Q4_K_M, and at F16 its weights (about 140GB) would not fit in the 80GB of combined memory.

- Specialized Tasks: For workloads such as training or full fine-tuning of large models, which benefit from more memory per card and faster interconnects, higher-end data-center GPUs may be more suitable.

FAQ: Your Questions Answered

What are the most popular LLMs used in AI startups?

Some of the popular LLMs used in AI startups include:

- Llama 3: An open-weight model family from Meta, known for its efficiency.

- GPT-3: A powerful LLM from OpenAI used in numerous applications. It is available only through OpenAI's API, so it does not run on your own GPUs.

- BLOOM: A large open-source multilingual LLM from the BigScience project.

- StableLM: Another open-source model from Stability AI.

Why are GPUs crucial for running LLMs?

GPUs are processors designed for massively parallel work, which is exactly what LLMs need. Generating a single token takes billions of multiply-add operations, and a GPU can run thousands of them simultaneously. GPUs also pair this compute with high-bandwidth memory, which matters because every weight must be read for each generated token. This combination makes them ideal for LLM inference.
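The scale of that workload can be estimated with a common rule of thumb: a transformer forward pass costs about two floating-point operations per parameter per token (an approximation, not an exact count). The sketch below applies it to the nominal Llama 3 sizes; the 2,000-token prompt is a hypothetical example.

```python
# Rule of thumb: a transformer forward pass costs about 2 FLOPs per parameter
# per token (a multiply and an add in the matrix multiplications). Attention
# over the context adds more, which this estimate ignores.


def forward_flops(params: float, tokens: int = 1) -> float:
    """Approximate floating-point operations to process `tokens` tokens."""
    return 2 * params * tokens


MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}  # nominal parameter counts
PROMPT_TOKENS = 2000  # hypothetical prompt length

for name, params in MODELS.items():
    per_token = forward_flops(params)
    prompt = forward_flops(params, PROMPT_TOKENS)
    print(f"{name}: ~{per_token / 1e9:.0f} GFLOPs per token, "
          f"~{prompt / 1e12:.0f} TFLOPs for a {PROMPT_TOKENS}-token prompt")
```

Tens to hundreds of billions of operations per token, and trillions for a long prompt, are why parallel hardware matters for LLMs.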

What is the role of quantization in LLM performance?

Quantization reduces the memory footprint of LLMs by storing weights with fewer bits. This lets larger models fit on a given GPU and usually speeds up token generation, at the cost of a small loss in accuracy. It's like streamlining the data used by LLMs, making them lighter and more nimble!
