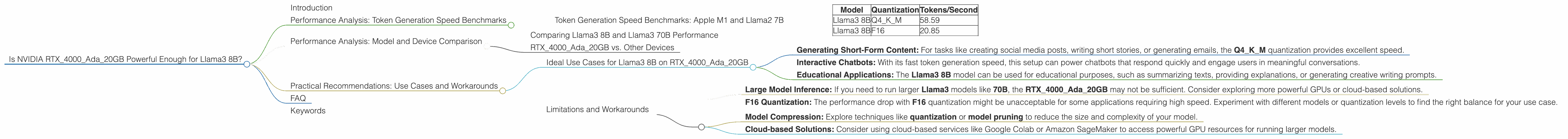

Is NVIDIA RTX 4000 Ada 20GB Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is evolving quickly, with new and improved models released at a rapid pace. LLMs are incredibly powerful, capable of generating human-like text, translating languages, and even writing code. But running these models locally can be a challenge, because it requires capable hardware. Today, we're taking a close look at the performance of the NVIDIA RTX 4000 Ada 20GB GPU when running the Llama3 8B model. This article will help developers understand how this specific GPU performs with this particular LLM, with insights into the speed and limitations of local inference.

Performance Analysis: Token Generation Speed Benchmarks

The token generation speed is a critical metric for judging the performance of LLMs. It represents how quickly the model can generate new text, measured in tokens per second. Higher token generation speeds mean a faster and more responsive experience.
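
The metric itself is simple: the number of tokens generated divided by the time taken to generate them. The sketch below illustrates it by timing any iterable of tokens. `tokens_per_second` and `fake_stream` are hypothetical helpers, with `fake_stream` standing in for a real model. Because the timer starts before the first token arrives, measuring a real model this way would also count prompt processing, which dedicated benchmark tools typically report separately from generation speed.

```python
import time
from typing import Iterable, Iterator


def tokens_per_second(token_stream: Iterable[str]) -> float:
    """Consume a stream of generated tokens and return the average rate."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_stream(n: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields n tokens, one every delay_s seconds."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"


# 50 tokens at roughly 10 ms each should come out at or a little below 100 tokens/s.
rate = tokens_per_second(fake_stream(50, 0.01))
print(f"~{rate:.0f} tokens/s")
```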

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

Let's start with the token generation speed of the NVIDIA RTX 4000 Ada 20GB for the Llama3 8B model in two weight formats: Q4_K_M, a roughly 4-bit quantization format used by llama.cpp, and F16, which stores weights as 16-bit floating-point numbers without further quantization. Quantization reduces the size of a model by using fewer bits to represent each weight.

| Model | Quantization | Tokens/second |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |

Analysis:

- Q4_K_M: The NVIDIA RTX 4000 Ada 20GB reaches a strong 58.59 tokens/second with Llama3 8B quantized to Q4_K_M. That is well above typical human reading speed, so responses can stream faster than a user can read them.

- F16: With unquantized 16-bit (F16) weights, generation drops to 20.85 tokens/second. This is still a respectable speed, but Q4_K_M is about 2.8 times faster.

Think of it this way: Q4_K_M is like a fast, efficient car that zips through traffic, while F16 is more like a comfortable but slower family sedan.
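
Why is the gap so large? When generating one token at a time, the GPU has to read essentially every weight from memory for each token, so memory bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below is a back-of-the-envelope estimate under assumptions that do not come from the benchmark: about 8.03 billion parameters for Llama3 8B, an average of about 4.9 bits per weight for Q4_K_M, and roughly 360 GB/s of memory bandwidth for the RTX 4000 Ada 20GB (check NVIDIA's spec sheet for your card).

```python
# Rough, memory-bandwidth-bound ceiling on single-stream generation speed.
# Assumptions (not from the benchmark itself):
#   - ~8.03e9 parameters for Llama3 8B
#   - ~4.9 average bits per weight for Q4_K_M (approximate)
#   - ~360 GB/s memory bandwidth for the RTX 4000 Ada 20GB
#   - every weight is read once per generated token; KV-cache traffic and
#     compute overhead are ignored

PARAMS = 8.03e9
BANDWIDTH_BYTES_PER_S = 360e9


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return params * bits_per_weight / 8


def max_tokens_per_s(params: float, bits_per_weight: float, bandwidth: float) -> float:
    """Upper bound on tokens/s if generation is limited only by reading the weights."""
    return bandwidth / weight_bytes(params, bits_per_weight)


# Format -> (assumed bits per weight, measured tokens/s from the table above)
measured = {"Q4_K_M": (4.9, 58.59), "F16": (16.0, 20.85)}

for name, (bits, observed) in measured.items():
    ceiling = max_tokens_per_s(PARAMS, bits, BANDWIDTH_BYTES_PER_S)
    print(f"{name}: ~{weight_bytes(PARAMS, bits) / 1e9:.1f} GB of weights, "
          f"ceiling ~{ceiling:.0f} tok/s, measured {observed} tok/s "
          f"({observed / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out to roughly 73 tokens/s for Q4_K_M and 22 tokens/s for F16, and the measured speeds land fairly close to them. That is consistent with generation on this card being largely memory-bandwidth bound, although the estimate is only approximate.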

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B and Llama3 70B Performance

The benchmarks above cover only Llama3 8B. Without Llama3 70B results for this card, we can't measure whether the NVIDIA RTX 4000 Ada 20GB runs the larger model efficiently.

Keep in mind that larger models demand more resources. Imagine trying to fit all the books in a library onto a single bookshelf: a larger LLM has far more parameters to store and process, so it needs more memory and more powerful hardware.
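
To put rough numbers on "more resources", the sketch below estimates how much memory the weights alone need (parameters times bits per weight, divided by 8) and compares that with the card's 20 GB. The parameter counts (about 8.03 billion and 70.6 billion) and the ~4.9 bits per weight used for Q4_K_M are approximations, and the estimate ignores the KV cache, activations, and runtime overhead, so real requirements are somewhat higher.

```python
# Rough check of whether model weights alone fit in GPU memory.
# Assumptions: ~8.03e9 and ~70.6e9 parameters, ~4.9 bits/weight for Q4_K_M,
# 20 GB of VRAM. KV cache, activations and runtime overhead are ignored,
# so actual memory needs are higher than these figures.

VRAM_GB = 20.0

MODELS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}
FORMATS = {"Q4_K_M": 4.9, "F16": 16.0}


def weights_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: float, bits_per_weight: float, vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weights_gb(params, bits_per_weight) < vram_gb


for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weights_gb(params, bits)
        verdict = "fits" if fits(params, bits) else "does NOT fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights -> {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions Llama3 8B fits in both formats (about 4.9 GB and 16.1 GB), while Llama3 70B needs roughly 43 GB even at Q4_K_M, more than twice the available VRAM. This does not tell us how fast 70B would run with part of the model offloaded to system memory; that still requires benchmark data.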

RTX 4000 Ada 20GB vs. Other Devices

This investigation covers only the RTX 4000 Ada 20GB, so the benchmarks here don't support comparisons with other GPUs.

Think of it like this: Each device has its own unique strengths and weaknesses, just like different athletes excel in different sports. We need to analyze the performance of each device individually, given its specific resources and limitations.

Practical Recommendations: Use Cases and Workarounds

Ideal Use Cases for Llama3 8B on RTX 4000 Ada 20GB

The NVIDIA RTX 4000 Ada 20GB running Llama3 8B, particularly with Q4_K_M quantization, is well suited to:

- Generating Short-Form Content: For tasks like creating social media posts, writing short stories, or generating emails, Q4_K_M quantization provides excellent speed.

- Interactive Chatbots: With its fast token generation speed, this setup can power chatbots that respond quickly and engage users in meaningful conversations.

- Educational Applications: The Llama3 8B model can be used for educational purposes, such as summarizing texts, providing explanations, or generating creative writing prompts.
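
To translate the benchmark numbers into wait times for these use cases, divide the length of a response by the generation speed. The reply lengths below are hypothetical examples, and the estimate ignores prompt processing time and any application overhead, so real end-to-end latency will be somewhat longer.

```python
# Estimate how long a reply of a given length takes to generate, using the
# measured generation speeds for Llama3 8B on the RTX 4000 Ada 20GB.
# Prompt processing time and application overhead are ignored, and the
# reply lengths are hypothetical examples.

DECODE_TOK_PER_S = {"Q4_K_M": 58.59, "F16": 20.85}


def generation_seconds(num_tokens: int, tokens_per_s: float) -> float:
    """Time to generate num_tokens at a constant rate."""
    return num_tokens / tokens_per_s


examples = [("short chat reply", 100), ("email draft", 300), ("short story", 1000)]

for label, n_tokens in examples:
    times = ", ".join(
        f"{fmt}: {generation_seconds(n_tokens, rate):.1f} s"
        for fmt, rate in DECODE_TOK_PER_S.items()
    )
    print(f"{label} (~{n_tokens} tokens) -> {times}")
```

For example, a 300-token email draft takes about 5.1 seconds with Q4_K_M versus about 14.4 seconds with F16. Streaming tokens to the user as they are generated makes either setting feel more responsive.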

Limitations and Workarounds

While the RTX 4000 Ada 20GB performs well with Llama3 8B, there are some limitations you should be aware of:

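- Large Model Inference: Larger Llama3 models like 70B are unlikely to fit on this card. Even at 4-bit precision, the 70B weights alone take roughly 35 to 43 GB, about twice the card's 20 GB of VRAM, so part of the model would have to be offloaded to system RAM, which slows generation considerably. For 70B-class models, consider GPUs with more memory, multiple GPUs, or cloud-based solutions.

- F16 Precision: F16 generates tokens about 2.8 times more slowly than Q4_K_M, which may be too slow for latency-sensitive applications. Experiment with different models or quantization levels to find the right balance of output quality and speed for your use case.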

Workarounds:

- Model Compression: Explore techniques like quantization or model pruning to reduce the size and complexity of your model.

- Cloud-based Solutions: Consider using cloud-based services like Google Colab or Amazon SageMaker to access powerful GPU resources for running larger models.

FAQ

Q: What are LLMs? A: LLMs are large language models, trained on massive datasets of text and code. They can perform a wide range of natural language tasks, such as generating text, translating languages, and writing code.

Q: What is quantization? A: Quantization is a technique that reduces the amount of memory needed to store a model's weights. It's like summarizing a long story by using fewer words. Quantization sacrifices some precision but can significantly improve performance.
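
The core idea can be shown with a deliberately simplified scheme: split the weights into blocks of 32, store one floating-point scale per block, and round each weight to a small signed integer that fits in 4 bits. This is an illustrative sketch, not llama.cpp's actual Q4_K_M format, which uses super-blocks with additional per-sub-block scales and minimums and keeps some tensors at higher precision. With a 16-bit scale per block, this layout would average 4.5 bits per weight, versus 16 for F16.

```python
import random
from typing import List, Tuple

BLOCK_SIZE = 32  # weights per block; each block shares one scale
QMAX = 7         # symmetric signed 4-bit range used here: -7..7


def quantize_block(block: List[float]) -> Tuple[float, List[int]]:
    """Scale a block so its largest magnitude maps to QMAX, then round to integers."""
    max_abs = max(abs(w) for w in block)
    scale = max_abs / QMAX if max_abs > 0 else 1.0
    q = [max(-QMAX, min(QMAX, round(w / scale))) for w in block]
    return scale, q


def quantize(weights: List[float]) -> List[Tuple[float, List[int]]]:
    """Quantize a flat list of weights block by block."""
    return [quantize_block(weights[i:i + BLOCK_SIZE])
            for i in range(0, len(weights), BLOCK_SIZE)]


def dequantize(blocks: List[Tuple[float, List[int]]]) -> List[float]:
    """Reconstruct approximate weights as scale * integer."""
    return [scale * v for scale, q in blocks for v in q]


# Demo on synthetic weights (random values, not real model weights).
random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(256)]
blocks = quantize(weights)
restored = dequantize(blocks)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"{len(blocks)} blocks, max absolute error {max_err:.5f}")
```

Each reconstructed weight is off by at most half of its block's scale, which is the precision traded away for the smaller memory footprint.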

Q: How do I choose the right GPU for my LLM? A: The right GPU depends on your specific model and use case. Consider the model size, the desired performance level, and your budget. Do some research and compare the specifications of different GPUs before making a decision.

Q: What are the alternatives to running LLMs locally? A: Cloud-based services offer powerful alternatives to running LLMs locally. They provide access to high-performance GPUs and can scale to meet your needs.

Keywords

LLM, Llama3, Llama3 8B, Llama3 70B, NVIDIA RTX 4000 Ada 20GB, GPU, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Local Inference, Model Size, Use Cases, Workarounds, Model Compression, Cloud-based Solutions