Is NVIDIA RTX 4000 Ada 20GB Powerful Enough for Llama3 70B?

Introduction: The Rise of Local LLMs

Large language models (LLMs) are revolutionizing the way we interact with computers. These powerful AI systems can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

But the sheer size of these models often requires powerful hardware and cloud infrastructure, making them inaccessible to many. This is where local LLMs come in: you run the model directly on your own device. That gives you greater control over your data and setup, avoids network round trips, and can lower costs, making local LLMs a compelling option for developers and enthusiasts.

In this article, we'll delve into the performance of the NVIDIA RTX 4000 Ada 20GB GPU with the Llama3 70B LLM. We'll explore token generation speed benchmarks, look at what comparison data exists for other LLMs and devices, and provide practical recommendations for your use cases.

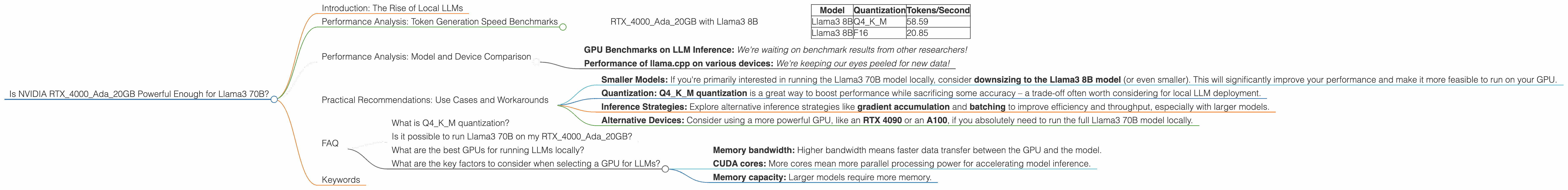

Performance Analysis: Token Generation Speed Benchmarks

RTX 4000 Ada 20GB with Llama3 8B

Let's start by looking at the performance of the RTX 4000 Ada 20GB with the smaller Llama3 8B model, as it provides a baseline for comparison.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |

What does this tell us?

- Q4_K_M quantization (which stores model weights in roughly 4 bits each) delivers about 2.8 times the throughput of F16 (half-precision floating point): 58.59 versus 20.85 tokens/second. It's essentially like putting your model on a diet: smaller and faster!

- The NVIDIA RTX 4000 Ada 20GB is a decent performer for Llama3 8B, especially with Q4_K_M quantization. This is good news for developers looking to run this model locally.

Think of it like this: the engine (your GPU) stays the same, but quantization lightens the load it has to haul. With less weight data to move for every generated token, the same hardware goes faster.
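To put a number on that gain, here is a small sketch that works out the speedup and the per-token latency from the two figures in the table. The function names are illustrative only.

```python
# Token generation rates for Llama3 8B from the table above (tokens/second).
benchmarks = {
    "Q4_K_M": 58.59,
    "F16": 20.85,
}


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def ms_per_token(tokens_per_second: float) -> float:
    """Convert a throughput figure into average latency per token."""
    return 1000.0 / tokens_per_second


ratio = speedup(benchmarks["Q4_K_M"], benchmarks["F16"])
print(f"Q4_K_M is {ratio:.2f}x faster than F16")

for name, rate in benchmarks.items():
    print(f"{name}: {ms_per_token(rate):.1f} ms per token")
```

The ratio comes out at about 2.81, which is less than the roughly 4x reduction in weight size would suggest on its own.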

Performance Analysis: Model and Device Comparison

Unfortunately, there are no available benchmarks for the RTX 4000 Ada 20GB with the Llama3 70B model. Bummer, right? This means we can't directly compare its performance to the Llama3 8B results above.

However, these two benchmark collections are the places to watch for relevant numbers from other devices and LLMs:

- GPU Benchmarks on LLM Inference: no Llama3 70B results for this card yet. We're waiting on benchmark results from other researchers!

- Performance of llama.cpp on various devices: likewise no data yet. We're keeping our eyes peeled!

Practical Recommendations: Use Cases and Workarounds

While we don't have definitive performance data for the RTX 4000 Ada 20GB with Llama3 70B, we can still make some educated guesses and provide recommendations based on what we know:

- Smaller Models: If you want to run a Llama3 model locally on this card, consider the Llama3 8B model (or even smaller) instead of 70B. It fits in 20 GB of VRAM and delivers the speeds shown above.

- Quantization: Q4_K_M quantization is a great way to boost performance and cut memory use at the cost of some accuracy, a trade-off often worth considering for local LLM deployment. Keep in mind that even at roughly 4 bits per weight, 70 billion parameters still need far more than 20 GB.

- Inference Strategies: Batching several requests together can improve overall throughput, and llama.cpp can keep some layers on the GPU while running the rest on the CPU, which makes an oversized model runnable, though slowly. (Gradient accumulation is a training technique and does not help with inference.)

- Alternative Devices: Consider GPUs with more memory if you absolutely need to run the full Llama3 70B model locally. A single RTX 4090 has 24 GB, which is still too little for 70B even at Q4_K_M, so in practice that means multiple cards or a data-center GPU such as an 80 GB A100.
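The memory side of these recommendations can be checked with back-of-the-envelope arithmetic. The sketch below estimates the size of the weights alone; the rounded parameter counts and the 4.85 bits-per-weight average for Q4_K_M are assumptions for illustration, not figures from the benchmarks above, and a real deployment also needs room for the context cache and activations.

```python
def weight_size_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 20.0  # RTX 4000 Ada 20GB

# Assumed values: parameter counts are rounded, and 4.85 bits/weight is a
# rough average for Q4_K_M (some tensors are kept at higher precision).
models = {"Llama3 8B": 8.0, "Llama3 70B": 70.0}
formats = {"F16": 16.0, "Q4_K_M": 4.85}

for model, params in models.items():
    for fmt, bits in formats.items():
        size = weight_size_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions the 70B weights come to roughly 42 GB at Q4_K_M, about twice what the card offers, which is why smaller models or more memory are the realistic options.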

Remember: The landscape of local LLMs is constantly evolving, so stay tuned for new benchmarks and updates.

FAQ

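What is Q4_K_M quantization?

Think of it like compressing an image file. It reduces the size of the model by storing the weights (the numbers that define the model) as small integers of roughly 4 bits each, plus a scale factor shared by each small block of weights. The model becomes smaller and faster to load and process, at the cost of a little precision.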
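As a rough illustration of the idea, the sketch below quantizes one block of weights to signed 4-bit integers with a single shared scale and then restores them. This is a deliberately simplified scheme for intuition only; it is not the actual Q4_K_M format, which is more elaborate, and the sample weights are made up.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit integers in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quants = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit integers."""
    return [q * scale for q in quants]


# Hypothetical weights for one block.
block = [0.12, -0.53, 0.07, 0.91, -0.30, 0.00, 0.44, -0.88]

scale, quants = quantize_block(block)
restored = dequantize_block(scale, quants)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("4-bit values:", quants)
print(f"shared scale: {scale:.4f}")
print(f"largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, and the rounding error per weight is at most half the scale, which is the accuracy cost mentioned above.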

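Is it possible to run Llama3 70B on my RTX 4000 Ada 20GB?

It's possible, but only with part of the model offloaded to system RAM and the CPU, because the weights do not fit in 20 GB of VRAM even when quantized to 4 bits. Expect slow performance. It's best to use a GPU setup with more memory or explore smaller models.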

What are the best GPUs for running LLMs locally?

GPUs with plenty of memory, high memory bandwidth, and a large number of CUDA cores are generally better. Examples include the RTX 4090, A100, and H100. Check out online benchmarks for a more detailed comparison.

What are the key factors to consider when selecting a GPU for LLMs?

These are key factors:

- Memory bandwidth: Higher bandwidth means the GPU can read the model's weights from its own memory faster, which largely determines token generation speed.

- CUDA cores: More cores mean more parallel processing power for accelerating model inference.

- Memory capacity: Larger models require more memory.
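The bandwidth factor can be turned into a rough ceiling on generation speed: producing one token requires reading approximately all of the weights once, so tokens per second cannot exceed bandwidth divided by weight size. The sketch below applies that rule of thumb. The 360 GB/s bandwidth figure and the weight sizes are assumptions (a published spec value and rounded estimates), not numbers from this article's benchmarks.

```python
def max_tokens_per_second(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Upper bound on generation speed if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb


BANDWIDTH_GB_S = 360.0  # assumed memory bandwidth of the RTX 4000 Ada

# (assumed weight size in GB, measured tokens/second from the benchmark table)
cases = {
    "Llama3 8B F16": (16.0, 20.85),
    "Llama3 8B Q4_K_M": (4.85, 58.59),
}

for name, (weights_gb, measured) in cases.items():
    bound = max_tokens_per_second(BANDWIDTH_GB_S, weights_gb)
    print(f"{name}: bound ~{bound:.1f} tok/s, measured {measured} tok/s")
```

Under these assumptions the F16 bound is 22.5 tokens/second, close to the measured 20.85, which suggests generation on this card is limited mainly by memory bandwidth rather than by core count.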

Keywords

NVIDIA RTX 4000 Ada 20GB, Llama3 70B, Llama3 8B, LLM, Large Language Models, Token Generation Speed, Quantization, Q4_K_M, F16, GPU, Performance, Benchmarks, Local LLMs, Inference, Use Cases, Workarounds, Gradient Accumulation, Batching, Inference Strategies, Alternative Devices, Memory Bandwidth, CUDA Cores, Memory Capacity.