Is NVIDIA RTX 4000 Ada 20GB a Good Investment for AI Startups?

Introduction

Large Language Models (LLMs) are leading the current wave of AI. These systems understand and generate human-like text, and they are reshaping fields like customer service, content creation, and scientific research. If you're an AI startup looking to build on LLMs, you'll need hardware that can run them. Enter the NVIDIA RTX 4000 Ada 20GB, a workstation graphics card with enough memory to run smaller LLMs locally. But is it the right investment for your startup?

Let's dive into the world of LLMs, explore the capabilities of the RTX 4000 Ada 20GB, and see if it's the perfect match for your AI endeavors.

RTX 4000 Ada 20GB: A Performance Beast

The RTX 4000 Ada 20GB is a workstation GPU built on NVIDIA's Ada Lovelace architecture, with 20GB of GDDR6 memory. That memory capacity is what matters most for LLM work, because a model's weights have to fit in GPU memory to run at full speed. This makes the card a contender for AI startups that want to run inference on smaller LLMs using their own hardware.

Measuring the Power: Token Speed Generation

To understand the RTX 4000 Ada 20GB's power, we need to look at how fast it generates tokens. Tokens are the building blocks an LLM works with: each one represents a word, part of a word, a punctuation mark, or a special character. Generation speed is reported in tokens per second, and the higher that number, the sooner your users see a complete response.
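As a rough illustration of what a tokens-per-second figure means, the sketch below counts tokens coming out of a stream and divides by the elapsed time. The `fake_token_stream` function is a hypothetical stand-in for a real model, so the number it prints says nothing about any GPU.

```python
import time

def fake_token_stream(n_tokens, delay_s=0.001):
    """Hypothetical stand-in for a model: yields n_tokens dummy tokens."""
    for i in range(n_tokens):
        time.sleep(delay_s)  # pretend each token takes some time to compute
        yield f"tok{i}"

def tokens_per_second(stream):
    """Count the tokens in an iterator and divide by wall-clock time."""
    start = time.perf_counter()
    count = sum(1 for _ in stream)
    elapsed = time.perf_counter() - start
    return count / elapsed

rate = tokens_per_second(fake_token_stream(50))
print(f"{rate:.1f} tokens/second")
```

Swapping the fake stream for a real model's streaming output would give a measured figure comparable in spirit to the benchmark numbers in this article.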

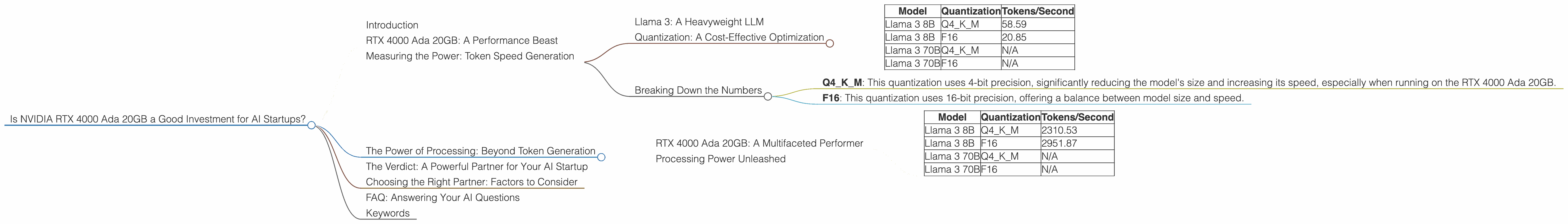

Llama 3: A Heavyweight LLM

For this analysis, we'll focus on the Llama 3 LLM, a popular open-source model known for its impressive performance. We'll measure the RTX 4000 Ada 20GB's performance for the 8B (8 billion parameters) and 70B (70 billion parameters) versions of Llama 3, showcasing its capabilities with different model sizes.

Quantization: A Cost-Effective Optimization

Let's clear up a common term, "quantization." Imagine you have a large, detailed image. You can reduce its color depth so that each pixel takes fewer bits, making the file smaller and easier to store and process. Quantization works similarly with LLMs. It reduces the precision of the model's weights, for example from 16-bit floating-point numbers to roughly 4 bits each, so the model takes less memory and usually generates text faster, typically with only a small loss in accuracy.
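To make the idea concrete, here is a toy sketch of 4-bit quantization: a handful of made-up weights are mapped to integers between -8 and 7 using one shared scale, then mapped back. This is a simplified illustration, not the scheme used by any real LLM quantization format, which typically quantizes weights in small blocks with a scale per block.

```python
def quantize_4bit(weights):
    """Map floats to integers in [-8, 7] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate floats from the 4-bit integers."""
    return [v * scale for v in q]

weights = [0.12, -0.53, 0.98, -0.09, 0.31]  # made-up example values
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit integers:", q)
print("restored:", [round(r, 2) for r in restored])
print("largest rounding error:", round(max_error, 3))
```

Each weight now needs 4 bits instead of 16, and the rounding error stays within half of the scale. That trade of a little precision for a lot of memory is the whole idea.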

Here's how the RTX 4000 Ada 20GB stacks up on token generation speed:

| Model | Format | Generation speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 58.59 |
| Llama 3 8B | F16 | 20.85 |
| Llama 3 70B | Q4_K_M | N/A |
| Llama 3 70B | F16 | N/A |

Note: No benchmark data is available for the 70B models on this device. The most likely reason is memory: the weights of a 70B model are too large to fit in 20GB of VRAM.
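A back-of-the-envelope estimate shows how model size compares with the card's memory. The sketch below multiplies the nominal parameter count by the bits stored per weight. It is an approximation under stated assumptions: it counts weights only, ignores the extra memory needed for the context cache and activations, and uses a nominal 4 bits per weight, whereas real 4-bit formats store somewhat more than that.

```python
VRAM_GB = 20  # memory on the RTX 4000 Ada 20GB

def weight_size_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

for name, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for label, bits in [("16-bit", 16), ("4-bit (nominal)", 4)]:
        size = weight_size_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{name} {label}: ~{size:.0f} GB -> {verdict} in {VRAM_GB} GB")
```

By this estimate both 8B variants fit, while the 70B model exceeds 20GB even at 4 bits per weight.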

Breaking Down the Numbers

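- Q4_K_M: A 4-bit quantization format (the name comes from llama.cpp). It stores each weight in roughly 4 bits, which shrinks the model to around a quarter of its 16-bit size and speeds up generation.

- F16: Not a quantization but the 16-bit floating-point baseline. It keeps the weights at half precision, so the model is larger and slower to generate with, but nothing is lost to rounding.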

The RTX 4000 Ada 20GB performs well with the 8B Llama 3 model, especially with Q4_K_M. At 58.59 tokens/second, it generates text about 2.8 times faster than with F16 (20.85 tokens/second), which is comfortably faster than most people read.

The Power of Processing: Beyond Token Generation

Remember, LLMs are not just about generating text. Before a model can answer, it has to read the text it receives, whether that's a batch of customer reviews or a long set of instructions. This step is known as prompt processing, and its speed is also measured in tokens per second. For AI startups it matters just as much as generation speed, particularly when prompts are long.

RTX 4000 Ada 20GB: A Multifaceted Performer

Here's how the RTX 4000 Ada 20GB performs in terms of token processing:

| Model | Format | Prompt processing speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 2310.53 |
| Llama 3 8B | F16 | 2951.87 |
| Llama 3 70B | Q4_K_M | N/A |
| Llama 3 70B | F16 | N/A |

Once again, no data is available for the 70B models on this device.

Processing Power Unleashed

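The RTX 4000 Ada 20GB also posts strong prompt processing speeds for the 8B Llama 3 model. It processes over 2300 tokens per second with Q4_K_M and over 2900 tokens per second with F16. Note that the ranking is reversed here: F16 is faster at reading prompts even though it is slower at generating text. A likely explanation is that prompt processing is limited mainly by raw compute, where quantized weights bring no advantage, while generation is limited mainly by how fast the weights can be read from memory. Either way, the card reads input far faster than it writes output, which suits tasks that involve long prompts or large volumes of text to analyze.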
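To see how the two speeds combine, the sketch below estimates the time for a single request as prompt tokens divided by prompt processing speed, plus output tokens divided by generation speed. The speeds are the Llama 3 8B figures from this article; the request size of 1000 prompt tokens and 200 output tokens is a hypothetical example, and the estimate assumes one request at a time with speeds that stay constant at those lengths.

```python
# Llama 3 8B figures from this article, in tokens/second.
SPEEDS = {
    "Q4_K_M": {"prompt": 2310.53, "generation": 58.59},
    "F16": {"prompt": 2951.87, "generation": 20.85},
}

def response_time_s(fmt, prompt_tokens, output_tokens):
    """Return (prompt processing seconds, generation seconds) for one request."""
    speeds = SPEEDS[fmt]
    prefill = prompt_tokens / speeds["prompt"]
    decode = output_tokens / speeds["generation"]
    return prefill, decode

for fmt in SPEEDS:
    # Hypothetical request: 1000-token prompt, 200-token answer.
    prefill, decode = response_time_s(fmt, prompt_tokens=1000, output_tokens=200)
    total = prefill + decode
    print(f"{fmt}: prompt {prefill:.2f}s + generation {decode:.2f}s = {total:.2f}s")
```

For a request of this shape, generation accounts for most of the total time in both formats, so the faster generation of the 4-bit model outweighs the faster prompt processing of F16.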

The Verdict: A Powerful Partner for Your AI Startup

The RTX 4000 Ada 20GB emerges as a strong contender for AI startups, especially those working with smaller LLMs. While it excels with the 8B Llama 3 model, its performance with larger models like the 70B Llama 3 remains unknown, potentially limiting its appeal for complex tasks requiring extensive computational resources.

However, the RTX 4000 Ada 20GB's impressive token generation and processing speeds highlight its potential as an efficient and powerful tool for AI startups. It can be a game changer for projects requiring real-time responses, such as chatbots, or for those working with large datasets needing quick processing.

Choosing the Right Partner: Factors to Consider

While the RTX 4000 Ada 20GB appears promising, it's crucial to consider your specific needs and budget before making a decision. Here's a breakdown of the key factors to consider:

1. LLM Model: If you're working with smaller models in the 8B range, the RTX 4000 Ada 20GB can be an excellent choice. However, if you plan to use larger models such as Llama 3 70B, explore options like the NVIDIA A100 or H100, which offer far more memory and are designed for the computational demands of larger LLMs.

2. Budget: The RTX 4000 Ada 20GB is a powerful card, but it comes at a price. Consider your budget and its compatibility with your existing hardware infrastructure before making a final decision.

3. Project Needs: Analyze your project's specific requirements. If you need high throughput for tasks like real-time chatbots or large-scale data processing, the RTX 4000 Ada 20GB could be a good fit.

4. Future Scalability: Think about your future plans. If you anticipate needing more powerful hardware as your projects grow, consider a more scalable option like the A100 or H100, which offer superior performance for larger LLMs.

FAQ: Answering Your AI Questions

Q: What are the differences between the RTX 4000 Ada 20GB and other GPUs?

A: Different GPUs offer different performance levels, memory capacities, and power consumption. Research your specific needs and explore various options before making a decision.

Q: How can I choose the right LLM for my AI startup?

A: Choosing the right LLM depends on your specific project needs. Consider factors like model size, language support, and the intended application. Explore available options, test them, and choose the model that best fits your requirements.

Q: Can I use the RTX 4000 Ada 20GB for tasks other than LLM processing?

A: Absolutely! The RTX 4000 Ada 20GB can be used for a wide range of tasks, including graphics rendering, video editing, and even gaming. Keep in mind, though, that it’s a workstation card aimed at professional workloads, so that is where it offers the most value for its price.

Q: What about the future of LLM technology and hardware?

A: The field of LLMs is rapidly evolving, with new models and technologies emerging constantly. Keep an eye on advancements in both models and hardware, and be prepared to adapt your technology choices as needed.
