Is NVIDIA L40S 48GB Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is abuzz with excitement, and for good reason. These sophisticated AI models can generate creative content, translate languages, and even write code, making them incredibly useful in various applications. But with their immense size and computational demands, running LLMs locally can be a challenge.

This article dives deep into the performance of NVIDIA's L40S_48GB GPU, a data center card built for demanding workloads, and its suitability for running Llama3 8B, one of the most popular open-source LLMs. We'll analyze token generation speed, compare model and device combinations, and provide practical recommendations for use cases. Buckle up, geeks, because we're about to embark on a journey through LLM performance optimization!

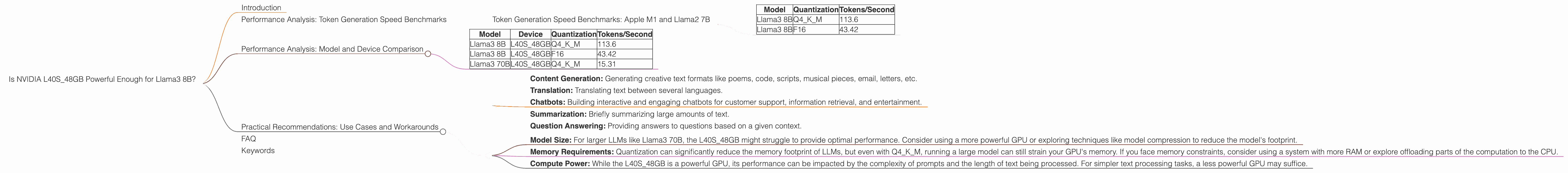

Performance Analysis: Token Generation Speed Benchmarks

The NVIDIA L40S_48GB is a beast of a GPU with 48GB of GDDR6 memory and, per NVIDIA's datasheet, roughly 362 TFLOPS of dense FP16 Tensor Core throughput and 733 TFLOPS of dense FP8 throughput. That makes it a strong candidate for tackling the hefty burden of running LLMs locally. Let's see how it performs with Llama3 8B, focusing on two different weight formats.

Token Generation Speed Benchmarks: NVIDIA L40S_48GB and Llama3 8B

Quantization is a technique that reduces the size of an LLM by storing the model's weights with fewer bits. It's like squeezing a large file to make it smaller without losing too much information. The smaller model needs less memory, usually runs faster, and might even fit on smaller devices. We'll analyze two weight formats:

- Q4_K_M: A 4-bit "k-quant" format from llama.cpp (the M marks the medium variant). Weights are stored in small blocks at roughly 4 bits each with per-block scale factors, and a few sensitive tensors are kept at higher precision. This is a popular choice for fast and memory-efficient inference on GPUs.

- F16: The model's weights stored at 16-bit floating-point precision. This is more accurate than Q4_K_M, but requires more memory and computation.
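To make the idea of block-wise quantization concrete, here is a minimal sketch in plain Python. It is a simplified illustration, not the actual Q4_K_M algorithm: it assumes one symmetric scale per block and signed 4-bit codes, and the function names and sample weights are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Map a block of floats to signed integer codes that share one scale."""
    qmax = 2 ** (bits - 1) - 1              # 7 for signed 4-bit codes
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical block of weights, chosen only for illustration.
block = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.64]
codes, scale = quantize_block(block)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.4f}")
```

Each weight now costs 4 bits plus a share of the block's scale instead of 16 bits, and the round-trip error stays within half a quantization step. Real formats add refinements such as nested scales and mixed precision per tensor.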

Let's dive into the benchmarks:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 113.6 |
| Llama3 8B | F16 | 43.42 |

As you can see, the L40S_48GB performs incredibly well with Llama3 8B, especially in Q4_K_M format, which reaches 113.6 tokens/second. That works out to roughly 6,800 tokens per minute, or about 5,100 words per minute if you assume around 0.75 English words per token. At that pace, a novel the length of The Great Gatsby (roughly 47,000 words) would take a little over nine minutes to generate.

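However, the performance drops when using F16 precision. While still respectable, it's significantly slower at 43.42 tokens/second, about 2.6 times slower than Q4_K_M. Token generation is largely limited by how quickly weights can be read from GPU memory, so a format with fewer bits per weight tends to generate faster. This highlights the trade-off between accuracy and speed: Q4_K_M is generally preferred for faster inference, while F16 is the better fit for applications requiring the highest accuracy.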
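The arithmetic behind these comparisons is easy to reproduce. The sketch below uses the two benchmark figures from the table; the 0.75 words-per-token ratio is an assumed rule of thumb for English text, not a measured value.

```python
# Benchmark figures from the table above (tokens/second).
Q4_K_M_TPS = 113.6
F16_TPS = 43.42

# Assumption: rough average number of English words per token.
WORDS_PER_TOKEN = 0.75


def tokens_per_minute(tokens_per_second):
    return tokens_per_second * 60


def words_per_minute(tokens_per_second, words_per_token=WORDS_PER_TOKEN):
    return tokens_per_minute(tokens_per_second) * words_per_token


speedup = Q4_K_M_TPS / F16_TPS

print(f"Q4_K_M: {tokens_per_minute(Q4_K_M_TPS):.0f} tokens/min, "
      f"{words_per_minute(Q4_K_M_TPS):.0f} words/min")
print(f"F16:    {tokens_per_minute(F16_TPS):.0f} tokens/min, "
      f"{words_per_minute(F16_TPS):.0f} words/min")
print(f"Q4_K_M is {speedup:.2f}x faster than F16")
```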

Performance Analysis: Model and Device Comparison

To put the L40S_48GB's performance in perspective, let's compare Llama3 8B with the much larger Llama3 70B on the same device.

Note: The following table lists only the model-device combinations for which benchmark data is available. Combinations without data are not shown.

| Model | Device | Quantization | Tokens/Second |
|---|---|---|---|
| Llama3 8B | L40S_48GB | Q4_K_M | 113.6 |
| Llama3 8B | L40S_48GB | F16 | 43.42 |
| Llama3 70B | L40S_48GB | Q4_K_M | 15.31 |

As you can see, the L40S_48GB handles Llama3 8B with remarkable efficiency, especially in Q4_K_M format.

However, the performance drops significantly for Llama3 70B, reaching a mere 15.31 tokens/second. This is expected, as the larger model requires more resources. While still powerful, it shows that the L40S_48GB is better suited for smaller LLMs like Llama3 8B.
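To see what these rates mean in practice, the following sketch converts each benchmark row into the time needed to generate one response. The 500-token response length is a hypothetical value picked for illustration, and the estimate ignores prompt processing time.

```python
# Rows from the comparison table above (all measured on the L40S_48GB).
BENCHMARKS = [
    {"model": "Llama3 8B", "quant": "Q4_K_M", "tps": 113.6},
    {"model": "Llama3 8B", "quant": "F16", "tps": 43.42},
    {"model": "Llama3 70B", "quant": "Q4_K_M", "tps": 15.31},
]

# Assumption: a hypothetical response length, not taken from the benchmarks.
RESPONSE_TOKENS = 500


def generation_seconds(tokens_per_second, n_tokens=RESPONSE_TOKENS):
    """Time to generate n_tokens at a steady rate (prompt processing ignored)."""
    return n_tokens / tokens_per_second


fastest = max(row["tps"] for row in BENCHMARKS)

for row in BENCHMARKS:
    seconds = generation_seconds(row["tps"])
    slowdown = fastest / row["tps"]
    print(f'{row["model"]:<11} {row["quant"]:<7} '
          f'{seconds:6.1f} s per response, {slowdown:.2f}x slower than the fastest')
```

Under these assumptions the 70B model takes about half a minute per response where the 8B Q4_K_M model takes a few seconds, which is the gap that matters for interactive use.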

Practical Recommendations: Use Cases and Workarounds

The L40S_48GB proves to be a strong contender for running Llama3 8B locally, especially when using Q4_K_M quantization. This makes it ideal for various use cases, including:

- Content Generation: Generating creative text formats like poems, code, scripts, musical pieces, email, letters, etc.

- Translation: Translating text between several languages.

- Chatbots: Building interactive and engaging chatbots for customer support, information retrieval, and entertainment.

- Summarization: Briefly summarizing large amounts of text.

- Question Answering: Providing answers to questions based on a given context.

While the L40S_48GB performs well with Llama3 8B, it's essential to consider certain workarounds and limitations:

- Model Size: For larger LLMs like Llama3 70B, the L40S_48GB might struggle to provide optimal performance. Consider using a more powerful GPU or exploring techniques like model compression to reduce the model's footprint.

- Memory Requirements: Quantization can significantly reduce the memory footprint of LLMs, but even with Q4_K_M, running a large model can still strain your GPU's memory. If you face memory constraints, consider a GPU with more VRAM, splitting the model across multiple GPUs, or offloading some layers to the CPU and system RAM at the cost of speed.

- Compute Power: While the L40S_48GB is a powerful GPU, its performance can be impacted by the complexity of prompts and the length of text being processed. For simpler text processing tasks, a less powerful GPU may suffice.
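A quick back-of-the-envelope check can tell you whether a model is likely to fit before you download it. The sketch below is an estimate under stated assumptions: the parameter counts are approximate, 4.85 bits per weight is an assumed average for Q4_K_M, and the 2 GB overhead for the KV cache and runtime buffers is a placeholder that grows with context length.

```python
GPU_MEMORY_GB = 48.0  # L40S_48GB

# Assumptions for illustration, not measured values.
PARAMS = {"Llama3 8B": 8.0e9, "Llama3 70B": 70.6e9}   # approximate parameter counts
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}       # assumed average bits per weight
OVERHEAD_GB = 2.0                                     # placeholder for KV cache and buffers


def weight_memory_gb(model, quant):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits_on_gpu(model, quant, gpu_gb=GPU_MEMORY_GB, overhead_gb=OVERHEAD_GB):
    """True if the weights plus the assumed overhead fit in GPU memory."""
    return weight_memory_gb(model, quant) + overhead_gb <= gpu_gb


for model in PARAMS:
    for quant in BITS_PER_WEIGHT:
        size = weight_memory_gb(model, quant)
        verdict = "fits" if fits_on_gpu(model, quant) else "does not fit"
        print(f"{model:<11} {quant:<7} ~{size:6.1f} GB of weights: {verdict}")
```

With these numbers, Llama3 70B at F16 comes out far beyond 48 GB, while its Q4_K_M version squeezes in with little room to spare.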

FAQ

Q: What is quantization?

A: Quantization is a technique used to compress the size of LLM models by reducing the number of bits used to represent model weights. Imagine it like compressing a large image file into a smaller one. The result might be a little less detailed, but it's much faster to load and process.

Q: What's the difference between F16 and Q4_K_M?

A: F16 stores each weight as a 16-bit floating-point number, half the size of a standard 32-bit float. It preserves more accuracy but needs more memory and generates tokens more slowly. Q4_K_M stores most weights at roughly 4 bits each, which significantly reduces the memory footprint and speeds up generation but might decrease accuracy slightly.

Q: How much does the model size influence the performance?

A: The larger the model, the more processing power required, which impacts the token generation speed.

Q: What other GPUs are suitable for local LLMs?

A: Several GPUs are designed for handling LLMs locally, including the NVIDIA A100, H100, and the AMD MI250X. The best choice depends on your specific needs and budget.

Keywords

NVIDIA L40S_48GB, Llama3 8B, Llama3 70B, LLM, large language model, token generation speed, performance analysis, quantization, Q4_K_M, F16, GPU, benchmarks, use cases, content generation, translation, chatbots, summarization, question answering, model size, memory requirements, compute power.