Is NVIDIA L40S 48GB Powerful Enough for Llama3 70B?

Introduction

Large language models (LLMs) are advancing quickly, and models like Llama3 70B are pushing the boundaries of what's possible in natural language processing. But running models of this size locally requires serious hardware. Enter the NVIDIA L40S_48GB, a data-center GPU with 48 GB of memory.

Is the L40S_48GB up to the task of handling the colossal Llama3 70B? Let's dive deep and find out!

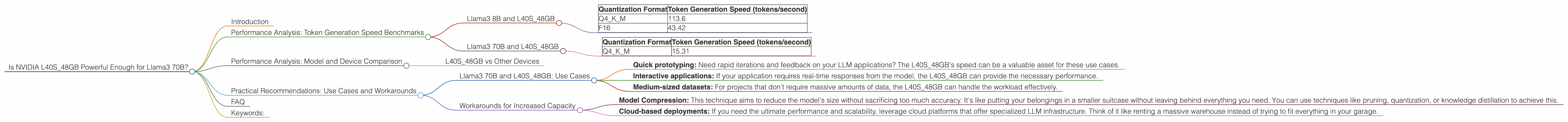

Performance Analysis: Token Generation Speed Benchmarks

Llama3 8B and L40S_48GB

The L40S_48GB shows its muscle with the smaller Llama3 8B model, delivering impressive performance across both quantization formats:

| Quantization Format | Token Generation Speed (tokens/second) |
|---|---|
| Q4_K_M | 113.6 |
| F16 | 43.42 |

Q4_K_M is a 4-bit quantization format used by llama.cpp that stores most weights in roughly 4 to 5 bits each, while F16 stores every weight as a 16-bit floating-point number. The smaller representation generates tokens about 2.6 times faster here (113.6 vs 43.42 tokens/second), at the cost of some accuracy. It's like describing something in fewer words: you get the point across faster, but you lose a bit of detail.

The difference in performance between the two formats demonstrates the trade-off between accuracy and speed. If you need the utmost accuracy, F16 might be your choice. But for faster generation speeds, Q4KM is the clear winner.
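To make the size side of this trade-off concrete, here is a rough sketch of how much memory the weights alone occupy at each precision. It assumes a nominal 8 billion parameters and a flat 4 or 16 bits per weight; real 4-bit files tend to be somewhat larger because they also store scaling factors. The function name `weight_size_gb` is purely illustrative.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

N_PARAMS_8B = 8e9  # assumption: nominal parameter count for Llama3 8B

f16_gb = weight_size_gb(N_PARAMS_8B, 16)  # 16 bits per weight
q4_gb = weight_size_gb(N_PARAMS_8B, 4)    # assumption: a flat 4 bits per weight

# Generation speeds measured on the L40S_48GB (from the table above)
speedup = 113.6 / 43.42

print(f"F16 weights: ~{f16_gb:.0f} GB, 4-bit weights: ~{q4_gb:.0f} GB")
print(f"The 4-bit format generates tokens ~{speedup:.1f}x faster than F16")
```

Under these assumptions the weights shrink by a factor of four while generation speeds up by a smaller factor, so the gain in speed is real but not proportional to the saving in memory.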

Llama3 70B and L40S_48GB

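Now, let's move on to the heavyweight, Llama3 70B. Unfortunately, we only have data for the Q4_K_M quantization format. That is not surprising: at 16 bits per weight, a model with roughly 70 billion parameters needs about 140 GB for its weights alone, far more than the card's 48 GB.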

| Quantization Format | Token Generation Speed (tokens/second) |
|---|---|
| Q4_K_M | 15.31 |

The L40S_48GB still delivers respectable performance, but it's about 7.4 times slower than with the Llama3 8B model in the same format (15.31 vs 113.6 tokens/second). This is expected: the 70B model has nearly nine times as many parameters, so every generated token requires reading and computing with far more weights.
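The slowdown can be checked directly from the two measurements. The short sketch below also compares it with the ratio of nominal parameter counts (8 billion and 70 billion, which are approximations) and converts each speed into time per token.

```python
speed_8b = 113.6   # tokens/second, Llama3 8B, 4-bit (measured above)
speed_70b = 15.31  # tokens/second, Llama3 70B, 4-bit (measured above)

slowdown = speed_8b / speed_70b
param_ratio = 70e9 / 8e9  # assumption: nominal parameter counts

ms_per_token_8b = 1000 / speed_8b
ms_per_token_70b = 1000 / speed_70b

print(f"70B is {slowdown:.1f}x slower with {param_ratio:.2f}x the parameters")
print(f"Time per token: {ms_per_token_8b:.1f} ms (8B) vs {ms_per_token_70b:.1f} ms (70B)")
```

The slowdown comes out slightly smaller than the growth in parameter count, so the larger model is not losing any extra efficiency on this card.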

Performance Analysis: Model and Device Comparison

L40S_48GB vs Other Devices

We don't have data for other devices, so a direct comparison isn't possible here. What the numbers do show is that a single L40S_48GB runs Llama3 8B at over 100 tokens/second and Llama3 70B at about 15 tokens/second with 4-bit quantization, which makes it a credible option for local LLM inference.

Practical Recommendations: Use Cases and Workarounds

Llama3 70B and L40S_48GB: Use Cases

The L40S_48GB is a solid choice for running Llama3 70B locally with 4-bit quantization, at roughly 15 tokens/second:

- Quick prototyping: Need rapid iterations and feedback on your LLM applications? The L40S_48GB's speed can be a valuable asset for these use cases.

- Interactive applications: At about 15 tokens/second, responses stream at a pace that suits chat-style use, though long outputs still take a while to complete.

- Medium-sized workloads: For projects that process a moderate amount of text rather than massive volumes, the L40S_48GB can handle the workload effectively.
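For interactive use, the figure that matters is how long a full reply takes. Here is a minimal estimate, assuming the measured generation speed holds for the whole response and ignoring prompt processing time, which the data here does not cover. The response lengths are illustrative.

```python
GENERATION_SPEED = 15.31  # tokens/second, Llama3 70B 4-bit on the L40S_48GB

def response_time_seconds(n_tokens: int,
                          tokens_per_second: float = GENERATION_SPEED) -> float:
    """Time to generate n_tokens, excluding prompt processing."""
    return n_tokens / tokens_per_second

for n_tokens in (64, 256, 1024):  # hypothetical response lengths
    print(f"{n_tokens:>5} tokens: ~{response_time_seconds(n_tokens):.1f} s")
```

Short replies finish in a few seconds, while a 1024-token answer takes over a minute, so streaming the output as it is generated matters for longer responses.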

Workarounds for Increased Capacity

If you need more headroom than 48 GB allows, or higher precision than 4-bit quantization provides, consider these workarounds:

- Model Compression: This technique aims to reduce the model's size without sacrificing too much accuracy. It's like packing your belongings into a smaller suitcase without leaving behind anything you need. You can use techniques like pruning, quantization, or knowledge distillation to achieve this.

- Cloud-based deployments: If you need more performance or scalability than a single card offers, for example to run the 70B model at F16, leverage cloud platforms that offer specialized LLM infrastructure. Think of it like renting a massive warehouse instead of trying to fit everything in your garage.
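One way to choose between running locally and moving to the cloud is a quick check of whether the weights fit in the card's 48 GB. The sketch below is a rough estimate only: the parameter count is nominal, the figure of about 4.8 bits per weight for the 4-bit format is an approximation, and the 4 GB `reserve_gb` for the KV cache and runtime buffers is a hypothetical placeholder to adjust for your context length.

```python
VRAM_GB = 48.0  # memory capacity of the L40S_48GB

def fits_in_vram(n_params: float, bits_per_weight: float,
                 vram_gb: float = VRAM_GB, reserve_gb: float = 4.0) -> bool:
    """Return True if the weights plus a reserve fit in GPU memory."""
    weights_gb = n_params * bits_per_weight / 8 / 1e9
    return weights_gb + reserve_gb <= vram_gb

N_PARAMS_70B = 70e9  # assumption: nominal parameter count for Llama3 70B

print("70B at ~4.8 bits:", fits_in_vram(N_PARAMS_70B, 4.8))  # ~42 GB of weights
print("70B at 16 bits:  ", fits_in_vram(N_PARAMS_70B, 16))   # ~140 GB of weights
```

With these assumptions the 4-bit model fits with little room to spare, and the 16-bit model needs several GPUs or a cloud deployment.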

FAQ

Q: What is quantization?

A: Quantization is like rounding. It takes a number with lots of decimal places and rounds it to a simpler number with fewer decimals. The rounded number takes up less memory, but some precision is lost.
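As a toy illustration of that rounding, the sketch below quantizes a handful of made-up weights to signed 4-bit integers using a single shared scale. This is plain round-to-nearest, not the block-wise scheme that real formats use, and the function names are hypothetical.

```python
def quantize_4bit(values):
    """Map floats to signed 4-bit integer codes (-8..7) using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]

weights = [0.12, -0.53, 0.98, -0.30]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)

for w, c, r in zip(weights, codes, restored):
    print(f"{w:+.2f} -> code {c:+d} -> {r:+.2f} (error {abs(w - r):.2f})")
```

Each weight is stored as a small integer plus one shared scale, and the restored value differs from the original by at most half a quantization step.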

Q: How does the L40S_48GB compare to other GPUs?

A: We only have data for the L40S_48GB, so we can't make a direct comparison. Its results on Llama3 8B and 70B suggest it is a capable card for local inference, but ranking it against other GPUs would require benchmarks run under the same conditions.

Q: What other LLMs can I run on the L40S_48GB?

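A: The L40S_48GB is a versatile GPU that can handle various LLMs. While our data focuses on Llama3, you can explore its capabilities with other open-weight models, such as the smaller BLOOM variants, as long as the weights fit in 48 GB at your chosen precision. Models whose weights are not publicly released, such as GPT-3, cannot be run locally.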

Q: What are the cost implications of using the L40S_48GB?

A: Top-tier GPUs like the L40S_48GB come with a substantial price tag. Consider your budget and project requirements before investing in such high-end hardware.

Keywords:

NVIDIA L40S 48GB, Llama3, Llama3 70B, LLM, large language model, token generation speed, quantization, Q4_K_M, F16, performance benchmarks, GPU, deep learning, natural language processing, NLP, local inference, use cases, workarounds, model compression, cloud computing, cost implications.