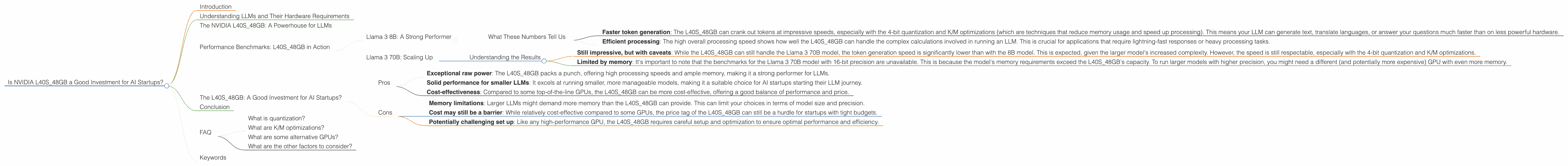

Is NVIDIA L40S 48GB a Good Investment for AI Startups?

Introduction

The world of large language models (LLMs) is booming. And with that boom comes a need for powerful hardware that can handle the colossal computational demands of these models. But deciding which hardware to invest in can be a daunting task, especially for AI startups with slim budgets. This is where the NVIDIA L40S_48GB comes in. This beast of a GPU promises incredible performance, but is it the right choice for your startup?

Let's dive into the world of LLM performance and see if the L40S_48GB can help your AI startup reach new heights.

Understanding LLMs and Their Hardware Requirements

LLMs are like the brains of AI-powered applications. They can generate text, translate languages, write creative content, and answer questions, thanks to training on massive amounts of data. Both training these models and running them (inference) require a lot of processing power and memory, which is where dedicated GPUs like the L40S_48GB come in.

Imagine your LLM as a hungry student cramming for a huge exam. The data they use to learn is like the textbooks, and the GPU is like their brain, processing and storing all that information. The more powerful the GPU, the faster the LLM can "learn" and the more impressive its capabilities become.

The NVIDIA L40S_48GB: A Powerhouse for LLMs

The L40S_48GB is no slouch. It's a high-end data center GPU designed to tackle demanding workloads, making it a potential game-changer for LLM development. With its 48GB of GDDR6 memory, ample GPU cores, and impressive processing speed, it's a strong contender in the GPU market.

Let's take a closer look at its capabilities and see how it stacks up when running different LLM models.

Performance Benchmarks: L40S_48GB in Action

To understand if the L40S_48GB is a good investment, we need to see how it performs in real-world scenarios. We'll focus on two popular LLMs: Llama 3 8B and Llama 3 70B. These models differ in size and complexity, giving us a broader view of the L40S_48GB's capabilities.

Llama 3 8B: A Strong Performer

We've gathered some benchmark data for the L40S_48GB running the Llama 3 8B model. This model is relatively smaller and more manageable, making it a good starting point for many AI startups.

| Performance Metric | Description | Value |
|---|---|---|
| Llama3_8B_Q4_K_M_Generation | Token generation speed with 4-bit Q4_K_M quantization | 113.6 tokens/second |
| Llama3_8B_F16_Generation | Token generation speed with 16-bit floating-point precision | 43.42 tokens/second |
| Llama3_8B_Q4_K_M_Processing | Prompt processing speed with 4-bit Q4_K_M quantization | 5908.52 tokens/second |
| Llama3_8B_F16_Processing | Prompt processing speed with 16-bit floating-point precision | 2491.65 tokens/second |

What These Numbers Tell Us

Faster token generation: The L40S_48GB generates tokens quickly, especially with 4-bit Q4_K_M quantization, which shrinks the model's weights so that less data has to be read from memory for each token. At 113.6 tokens/second, the quantized model is roughly 2.6 times faster than the F16 version (43.42 tokens/second). This means your LLM can generate text, translate languages, or answer questions much faster than at full 16-bit precision.

Efficient processing: The processing figures (5908.52 tokens/second quantized, 2491.65 tokens/second at F16) are far higher than the generation figures because the tokens of a prompt can be processed in parallel, while output tokens are generated one at a time. This matters for applications that use long prompts or need a fast first response.
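
To make the comparison concrete, the ratios between the 4-bit (Q4_K_M) and F16 rows can be computed directly from the table. This is only a minimal sketch: the dictionary layout and the `speedup` helper are names chosen here for illustration, and the numbers are copied from the table above.

```python
# Throughput figures (tokens/second) copied from the Llama 3 8B table.
llama3_8b = {
    "generation": {"Q4_K_M": 113.6, "F16": 43.42},
    "processing": {"Q4_K_M": 5908.52, "F16": 2491.65},
}


def speedup(rates: dict) -> float:
    """Return how many times faster the Q4_K_M run is than the F16 run."""
    return rates["Q4_K_M"] / rates["F16"]


for phase, rates in llama3_8b.items():
    print(f"{phase}: Q4_K_M is {speedup(rates):.2f}x faster than F16")
```

Both ratios land between 2x and 3x, so on this card the 4-bit format more than doubles throughput for the 8B model.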

Llama 3 70B: Scaling Up

Now, let's look at the Llama 3 70B model. This larger model offers a significant boost in capabilities, but it also demands much more computational power.

| Performance Metric | Description | Value |
|---|---|---|
| Llama3_70B_Q4_K_M_Generation | Token generation speed with 4-bit Q4_K_M quantization | 15.31 tokens/second |
| Llama3_70B_F16_Generation | Token generation speed with 16-bit floating-point precision | Not Available |
| Llama3_70B_Q4_K_M_Processing | Prompt processing speed with 4-bit Q4_K_M quantization | 649.08 tokens/second |
| Llama3_70B_F16_Processing | Prompt processing speed with 16-bit floating-point precision | Not Available |
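
One way to put the 70B figures next to the 8B ones is a rough response-time estimate. The sketch below rests on several assumptions that are not in the benchmark data: that the "processing" figure applies to the prompt and the "generation" figure to the output, that throughput stays constant regardless of length, and that a typical request has a 1,000-token prompt and a 200-token reply. `estimate_seconds` is a hypothetical helper, not part of any library.

```python
def estimate_seconds(prompt_tokens: int, output_tokens: int,
                     processing_tps: float, generation_tps: float) -> float:
    """Rough single-request latency: prompt time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps


# (processing, generation) tokens/second for the 4-bit runs in the tables.
models = {
    "Llama 3 8B": (5908.52, 113.6),
    "Llama 3 70B": (649.08, 15.31),
}

# Assumed request shape: 1000 prompt tokens, 200 output tokens.
for name, (processing_tps, generation_tps) in models.items():
    seconds = estimate_seconds(1000, 200, processing_tps, generation_tps)
    print(f"{name}: about {seconds:.1f} s per request")
```

Under these assumptions, generation dominates the total for both models, so the generation figure is the one to watch for chat-style workloads.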

Understanding the Results

Still impressive, but with caveats: The L40S_48GB can still run the Llama 3 70B model, but at 15.31 tokens/second the generation speed is roughly 7 times lower than with the 8B model at the same quantization (113.6 tokens/second). This is expected, given the larger model's size. The speed is still respectable for a single GPU running 4-bit Q4_K_M quantization.

Limited by memory: The benchmarks for the Llama 3 70B model with 16-bit precision are unavailable because the model's memory requirements exceed the L40S_48GB's capacity. At 2 bytes per parameter, 70 billion parameters need about 140GB for the weights alone, nearly three times the card's 48GB. To run larger models at higher precision, you would need a GPU with more memory or several GPUs working together, and either option costs more.
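
The memory argument can be checked with back-of-the-envelope arithmetic: parameter count times bits per weight. The sketch below is an estimate under stated assumptions, not a measurement. It uses nominal parameter counts (8 and 70 billion), counts the weights only (the KV cache and activations need extra room), and assumes an average of about 4.85 bits per weight for Q4_K_M, which is an approximation. `weight_memory_gb` is a hypothetical helper.

```python
GPU_MEMORY_GB = 48  # L40S capacity stated in the article


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * 1e9 * bits_per_weight / 8 / 1e9


# Nominal parameter counts; 4.85 bits/weight for Q4_K_M is an assumed average.
cases = [
    ("Llama 3 8B", 8, "F16", 16),
    ("Llama 3 8B", 8, "Q4_K_M", 4.85),
    ("Llama 3 70B", 70, "F16", 16),
    ("Llama 3 70B", 70, "Q4_K_M", 4.85),
]

for model, params, fmt, bits in cases:
    gb = weight_memory_gb(params, bits)
    verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
    print(f"{model} {fmt}: ~{gb:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Only the 70B F16 case exceeds 48GB, which matches the "Not Available" entries in the table. Note that "fits" here is a necessary condition rather than a guarantee, since the estimate leaves out everything except the weights.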

The L40S_48GB: A Good Investment for AI Startups?

The NVIDIA L40S_48GB is a powerful GPU that can deliver impressive performance when running LLMs, especially for models like the Llama 3 8B. However, if you plan to work with larger models like the Llama 3 70B, you might need to consider a different approach.

Here's a breakdown of the pros and cons to help you decide:

Pros

- Exceptional raw power: The L40S_48GB packs a punch, offering high processing speeds and ample memory, making it a strong performer for LLMs.

- Solid performance for smaller LLMs: It excels at running smaller, more manageable models, making it a suitable choice for AI startups starting their LLM journey.

- Cost-effectiveness: Compared to some top-of-the-line GPUs, the L40S_48GB can be more cost-effective, offering a good balance of performance and price.

Cons

- Memory limitations: Larger LLMs might demand more memory than the L40S_48GB can provide. This can limit your choices in terms of model size and precision.

- Cost may still be a barrier: While relatively cost-effective compared to some GPUs, the price tag of the L40S_48GB can still be a hurdle for startups with tight budgets.

- Potentially challenging setup: Like any high-performance GPU, the L40S_48GB requires careful setup and tuning to deliver its full performance and efficiency.

Conclusion

The NVIDIA L40S_48GB can be a good investment for AI startups, especially those focusing on smaller LLMs or starting their journey with LLMs. However, the decision ultimately depends on your specific needs and budget. Carefully weigh your project requirements, consider model size and precision goals, and explore alternative options if needed. The world of LLMs is constantly evolving, and finding the right hardware for your project is a key factor in achieving success.

FAQ

What is quantization?

Quantization is a technique that reduces the memory footprint of LLMs by storing their weights (and sometimes activations) as lower-precision numbers, for example 4-bit integers instead of 16-bit or 32-bit floating-point values. It's like using abbreviations to save space in a document. Quantization can significantly boost speed and efficiency at the cost of a small loss in accuracy, and it is especially useful when GPU memory is the limiting factor, as it is for the L40S_48GB with larger models.
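
The idea can be illustrated with a deliberately simplified example: one block of weights, one shared scale factor, and integer codes in the 4-bit range. This is a sketch of the general principle only, not the format used in the benchmarks above, and the function names and sample values are made up for illustration.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


# Hypothetical block of eight weights.
block = [0.12, -0.50, 0.33, 0.70, -0.05, 0.01, -0.28, 0.44]
codes, scale = quantize_4bit(block)
restored = dequantize(codes, scale)

print("codes:    ", codes)
print("restored: ", [round(x, 2) for x in restored])
print("max error:", round(max(abs(a - b) for a, b in zip(block, restored)), 3))
```

Each weight now needs 4 bits plus a share of the scale factor instead of 16 or 32 bits, and the restored values differ from the originals by at most half a quantization step.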

What are K/M optimizations?

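"K/M" comes from the Q4_K_M label in the benchmark names. It is not a separate set of optimizations but part of the name of a 4-bit quantization format used by llama.cpp. "K" refers to the k-quant family, which quantizes weights in small blocks that each carry their own scale factors, and "M" marks the medium variant, which keeps some tensors at higher precision than the small (S) variant. The result is a model that uses far less memory than F16 and runs faster, with a modest loss in accuracy.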

What are some alternative GPUs?

If the L40S_48GB doesn't fit your needs, you can look at other data center GPUs like the NVIDIA A100 or the NVIDIA H100. Both are available with 80GB of memory and offer higher memory bandwidth, but be aware that they come with a higher price tag.

What are the other factors to consider?

Aside from GPU choice, you also need to be mindful of other factors like your software stack (e.g., TensorFlow, PyTorch), your cloud platform (e.g., Google Cloud, AWS), and the overall infrastructure of your AI project.

Keywords

NVIDIA L40S_48GB, LLM, large language model, AI startup, GPU, tokens/second, quantization, Q4_K_M, performance benchmarks, Llama 3 8B, Llama 3 70B, token generation speed, processing speed, cost-effectiveness, memory limitations, alternative GPUs