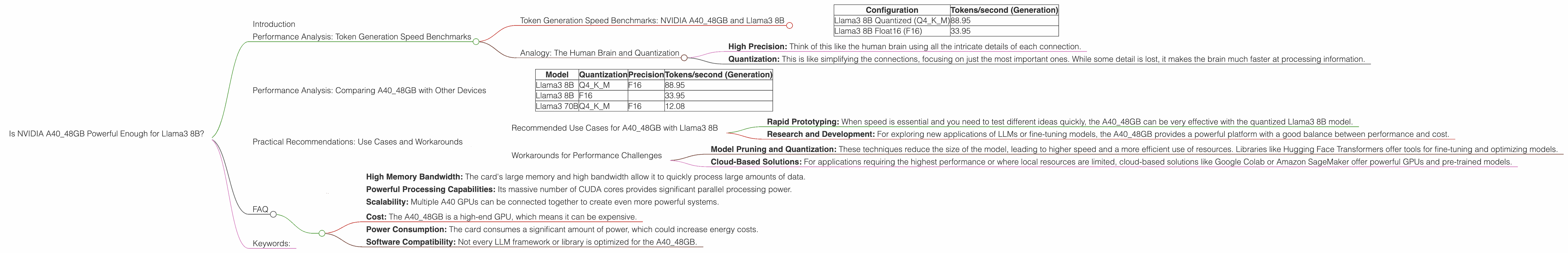

Is NVIDIA A40 48GB Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and everyone wants to get their hands on the latest and greatest models. Running those models, however, takes capable hardware. One option for running LLMs on your own infrastructure is the NVIDIA A40, a data-center GPU with 48 GB of memory. But is it a good match for Llama3 8B? This article looks at how fast the A40_48GB generates tokens with Llama3 8B, where it shines, where it falls short, and how the results compare with the much larger Llama3 70B on the same card.

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA A40_48GB and Llama3 8B

Let's get straight to the point. The NVIDIA A40_48GB can run Llama3 8B, but its speed depends heavily on how the model's weights are stored: 4-bit quantized (Q4_K_M) or 16-bit floating point (F16). Here's a breakdown of the results:

| Configuration | Tokens/second (Generation) |
|---|---|
| Llama3 8B Quantized (Q4_K_M) | 88.95 |
| Llama3 8B Float16 (F16) | 33.95 |
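To make these rates more tangible, the small sketch below converts them into waiting time for a single response. The 500-token response length is a hypothetical value chosen for illustration, and the calculation ignores prompt processing, which adds its own delay before the first token appears.

```python
# Measured generation speeds from the benchmark table (tokens per second).
speeds = {"Q4_K_M": 88.95, "F16": 33.95}

RESPONSE_TOKENS = 500  # hypothetical response length, for illustration only


def seconds_for(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` output tokens at a steady rate (prompt processing ignored)."""
    return tokens / tokens_per_second


for fmt, tps in speeds.items():
    print(f"{fmt}: {seconds_for(RESPONSE_TOKENS, tps):.1f} s for {RESPONSE_TOKENS} tokens")
```

Under these assumptions, a 500-token answer takes roughly 5.6 seconds with Q4_K_M and roughly 14.7 seconds with F16.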

Key Observations:

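- Quantization Makes a Big Difference: With the Q4_K_M version of Llama3 8B, the A40_48GB generates tokens about 2.6 times faster than with F16 (88.95 vs. 33.95 tokens/second). Producing each new token requires reading essentially all of the model's weights from GPU memory, so generation speed is limited mostly by memory bandwidth. Quantization shrinks each weight from 16 bits to roughly 4 to 5 bits, leaving far less data to move per token.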

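- F16 Precision Still Usable: At about 34 tokens/second, F16 is slower but still responsive enough for interactive use, making it a good option when you want to avoid the accuracy loss that quantization can introduce. Its roughly 16 GB of weights fit comfortably in the card's 48 GB.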
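The bandwidth argument can be turned into a back-of-the-envelope ceiling: if every generated token has to stream all weights from VRAM once, tokens/second cannot exceed memory bandwidth divided by the size of the weights. The sketch below assumes NVIDIA's published A40 bandwidth of about 696 GB/s, about 8.03 billion parameters for Llama3 8B, and an average of roughly 4.85 bits per weight for Q4_K_M (an approximation). It ignores KV-cache reads and compute, so it is an upper bound, not a prediction.

```python
# Rough ceiling for single-stream token generation on a bandwidth-bound GPU:
# each token reads (almost) every weight once, so tokens/s <= bandwidth / weight bytes.

A40_BANDWIDTH_BYTES_PER_S = 696e9  # assumption: published A40 memory bandwidth (~696 GB/s)
LLAMA3_8B_PARAMS = 8.03e9          # approximate parameter count of Llama3 8B

# Assumed average storage cost per weight; the Q4_K_M figure is approximate.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}
MEASURED_TPS = {"F16": 33.95, "Q4_K_M": 88.95}  # from the benchmark table


def bandwidth_ceiling(params: float, bits_per_weight: float, bandwidth: float) -> float:
    """Upper bound on tokens/s if generation were purely memory-bandwidth-bound."""
    weight_bytes = params * bits_per_weight / 8
    return bandwidth / weight_bytes


for fmt, bits in BITS_PER_WEIGHT.items():
    ceiling = bandwidth_ceiling(LLAMA3_8B_PARAMS, bits, A40_BANDWIDTH_BYTES_PER_S)
    measured = MEASURED_TPS[fmt]
    print(f"{fmt}: ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

With these assumptions, the F16 run reaches about 78% of its ceiling (about 43 tokens/second) and the Q4_K_M run about 62% of its ceiling (about 143 tokens/second). A plausible reading is that once the weights are small, other costs such as dequantization and KV-cache traffic take up a larger share of each token's time.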

Analogy: The Human Brain and Quantization

Imagine the human brain as a giant network of neurons. Each neuron represents a bit of information, and the strength of the connections between them represents the importance of that information.

- High Precision: Think of this like the human brain using all the intricate details of each connection.

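- Quantization: This is like recording each connection's strength on a coarser scale, rounding it to one of a handful of levels instead of keeping every fine gradation. Some detail is lost, but there is far less information to look up, so the brain can process information much faster. (Dropping the weakest connections entirely would be pruning, a different technique.)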

Performance Analysis: Comparing Llama3 8B and Llama3 70B on the A40_48GB

Unfortunately, we don't have performance data for Llama3 8B on other devices. We can, however, compare it with the larger Llama3 70B running on the same A40_48GB:

Table: Token Generation Speed Benchmarks on A40_48GB (Tokens per second)

| Model | Weight format | Tokens/second (Generation) |
|---|---|---|
| Llama3 8B | Q4_K_M | 88.95 |
| Llama3 8B | F16 | 33.95 |
| Llama3 70B | Q4_K_M | 12.08 |

Key Observations:

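- Larger Models, Lower Speed: Llama3 70B at Q4_K_M generates about 12 tokens/second, roughly 7.4 times slower than Llama3 8B at the same quantization level. This is expected: the 70B model has about 8.8 times as many parameters, so far more weight data must be read for every generated token. No F16 result is listed for the 70B model, which is consistent with its roughly 140 GB of F16 weights being far too large for 48 GB of VRAM.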
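A quick weight-only memory estimate shows which of these configurations can fit on a single 48 GB card. The sketch assumes approximate parameter counts (8.03 billion and 70.6 billion) and about 4.85 bits per weight for Q4_K_M. It deliberately leaves out the KV cache, activations, and runtime overhead, all of which need additional VRAM on top of the weights.

```python
# Weight-only memory estimate: parameters x bits per weight / 8.
# KV cache, activations and framework overhead are NOT included.

VRAM_GB = 48.0  # A40 nominal capacity

MODELS = {"Llama3 8B": 8.03e9, "Llama3 70B": 70.6e9}  # approximate parameter counts
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}               # assumed average bits per weight


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Size of the model weights in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, Llama3 70B at Q4_K_M needs about 42.8 GB for its weights alone, which fits but leaves only a few gigabytes for the KV cache, while Llama3 8B leaves plenty of headroom in either format.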

Practical Recommendations: Use Cases and Workarounds

Recommended Use Cases for A40_48GB with Llama3 8B

- Rapid Prototyping: When speed is essential and you need to test different ideas quickly, the A40_48GB can be very effective with the quantized Llama3 8B model.

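- Research and Development: For exploring new applications of LLMs or for parameter-efficient fine-tuning (such as LoRA) of Llama3 8B, the A40_48GB provides a powerful platform with a good balance between performance and cost. Full fine-tuning of all 8 billion parameters, by contrast, generally needs more memory than a single card offers.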
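The gap between inference and full fine-tuning can be estimated with a common rule of thumb for mixed-precision training with the Adam optimizer: about 16 bytes per parameter (16-bit weights and gradients, plus 32-bit master weights and two 32-bit optimizer moments). This is an approximation that excludes activations, so real usage is higher. The sketch applies it to Llama3 8B's roughly 8.03 billion parameters.

```python
# Rule-of-thumb memory for FULL fine-tuning with Adam in mixed precision.
# Activations are excluded, so actual usage is higher than this estimate.
BYTES_PER_PARAM_FULL_FT = (
    2    # fp16/bf16 weights
    + 2  # fp16/bf16 gradients
    + 4  # fp32 master copy of the weights
    + 8  # two fp32 Adam moment estimates
)

LLAMA3_8B_PARAMS = 8.03e9  # approximate parameter count


def full_finetune_gb(params: float, bytes_per_param: int = BYTES_PER_PARAM_FULL_FT) -> float:
    """Estimated memory (decimal GB) for weights, gradients and optimizer state."""
    return params * bytes_per_param / 1e9


print(f"~{full_finetune_gb(LLAMA3_8B_PARAMS):.0f} GB needed vs 48 GB on the A40")
```

The estimate of roughly 128 GB is well beyond one A40, which is why methods like LoRA, which freeze the base weights (about 16 GB in 16-bit form) and train only small adapter matrices, are the practical choice on this card.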

Workarounds for Performance Challenges

- Model Pruning and Quantization: These techniques reduce the size of the model, leading to higher speed and a more efficient use of resources. Libraries like Hugging Face Transformers offer tools for fine-tuning and optimizing models.
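Pruning works differently from quantization: instead of storing every weight with fewer bits, it removes weights altogether. The sketch below shows the simplest variant, unstructured magnitude pruning, on a toy list of weights; the function name and sample values are hypothetical and not taken from any particular library. Note that scattered zeros alone do not speed up dense GPU kernels; real gains need a sparse storage format or a structured pattern such as the 2:4 sparsity that Ampere GPUs like the A40 can accelerate.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out exactly the `sparsity` fraction of weights with the smallest magnitudes."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    k = int(len(weights) * sparsity)  # number of weights to remove
    # Indices sorted from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


# Hypothetical toy weights for illustration.
w = [0.8, -0.05, 0.3, -0.9, 0.01, 0.2, -0.4, 0.07]
print(magnitude_prune(w, 0.5))
```

At 50% sparsity, the four smallest-magnitude weights (0.01, -0.05, 0.07, and 0.2) are set to zero and the rest are kept unchanged.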

- Cloud-Based Solutions: For applications requiring the highest performance or where local resources are limited, cloud-based solutions like Google Colab or Amazon SageMaker offer powerful GPUs and pre-trained models.

FAQ

Q: What does "quantization" mean?

A: Quantization is a technique that reduces the size of a model by representing its weights (and, in some schemes, its activations) using fewer bits. This makes the model smaller and faster, but it can also reduce accuracy.
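To see what "fewer bits" means in practice, the sketch below applies a simplified symmetric 4-bit scheme to one block of 32 toy weights: each weight becomes an integer in [-8, 7], and the block keeps a single floating-point scale to map those integers back to approximate values. This is an illustration of block quantization in general, not the exact code any particular library uses.

```python
import random


def quantize_block_q4(block: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization of one block: integers in [-8, 7] plus one scale."""
    amax = max(abs(x) for x in block)
    scale = amax / 7 if amax > 0 else 1.0
    q = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Map the stored integers back to approximate weight values."""
    return [scale * v for v in q]


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(32)]  # toy block of 32 "weights"

scale, q = quantize_block_q4(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"scale={scale:.5f}, max abs error={max_err:.5f}")
```

Because each value is rounded to the nearest multiple of the scale, the error per weight is at most half the scale. Storing 32 four-bit integers plus one 16-bit scale costs 4.5 bits per weight instead of 16.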

Q: What is "Q4KM" quantization?

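A: Q4_K_M is one of the "k-quant" formats used by llama.cpp for GGUF model files. Most weights use the Q4_K type: 4-bit values grouped into blocks of 32, which are in turn grouped into super-blocks of 256 weights that carry their own quantized scales and minimums, for about 4.5 bits per weight. The "M" (medium) variant stores about half of the attention.wv and feed_forward.w2 tensors with the higher-precision 6-bit Q6_K type instead, so the average ends up somewhat above 4.5 bits per weight.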
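The "about 4.5 bits per weight" figure falls out of the block layout. The sketch below does the bookkeeping for one 256-weight super-block of Q4_K and of Q6_K, following the layouts described in llama.cpp's k-quant design: Q4_K has eight sub-blocks, each with a 6-bit scale and a 6-bit minimum, plus a 16-bit super-block scale and minimum; Q6_K has sixteen sub-blocks with 8-bit scales plus one 16-bit super-block scale.

```python
SUPER_BLOCK = 256  # weights per k-quant super-block


def bits_per_weight(total_bits: int, n_weights: int = SUPER_BLOCK) -> float:
    """Average storage cost per weight for one super-block."""
    return total_bits / n_weights


# Q4_K: 256 x 4-bit values + 8 sub-blocks x (6-bit scale + 6-bit min) + fp16 scale and min.
q4_k_bits = 256 * 4 + 8 * (6 + 6) + 2 * 16

# Q6_K: 256 x 6-bit values + 16 sub-blocks x 8-bit scale + one fp16 scale.
q6_k_bits = 256 * 6 + 16 * 8 + 16

print(bits_per_weight(q4_k_bits))  # 4.5
print(bits_per_weight(q6_k_bits))  # 6.5625
```

Since Q4_K_M mixes mostly Q4_K tensors with a minority of Q6_K tensors, its overall average lands between these two values, much closer to 4.5.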

Q: What are the advantages of using the A40_48GB for running LLMs?

A: The A40_48GB offers several advantages:

- High Memory Bandwidth: 48 GB of GDDR6 memory with roughly 696 GB/s of bandwidth lets the card hold large models and stream their weights quickly, which is what token generation speed mostly depends on.

- Powerful Processing Capabilities: Its 10,752 CUDA cores and third-generation Tensor Cores provide substantial parallel processing power.

- Scalability: Multiple A40 GPUs can be connected together to create even more powerful systems.

Q: Are there any limitations to using the A40_48GB for LLMs?

A: The A40_48GB is not without its limitations:

- Cost: The A40_48GB is a high-end GPU, which means it can be expensive.

- Power Consumption: The card draws up to 300 W, which adds to energy and cooling costs.

- Software Compatibility: The A40 is an Ampere-generation GPU without hardware FP8 support, so optimizations that rely on FP8 on newer GPUs do not apply to it.

Keywords:

A40_48GB, NVIDIA, Llama3, Llama3 8B, LLMs, token generation, speed, benchmarks, performance, quantization, Q4_K_M, F16, GPU, deep learning, NLP, natural language processing, inference, model size, accuracy, practical recommendations, use cases, workarounds, cloud-based solutions, Google Colab, Amazon SageMaker, Hugging Face Transformers, model pruning