Is NVIDIA A40 48GB Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is buzzing with excitement, and rightfully so! These models can generate text, translate languages, write creative content, and answer questions informatively. But getting them to run smoothly and efficiently requires powerful hardware, especially for the larger models.

In this deep dive, we'll explore how the NVIDIA A40_48GB GPU performs with the Llama3 70B model. We'll look at token generation speed, compare quantization options, and offer practical recommendations for developers looking to use this combination.

Performance Analysis: Token Generation Speed Benchmarks

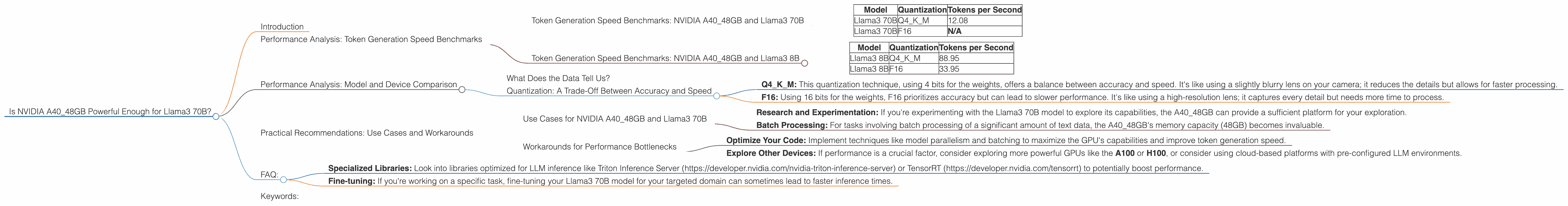

Token Generation Speed Benchmarks: NVIDIA A40_48GB and Llama3 70B

Let's get straight to the numbers! The table below shows the token generation speed (tokens per second) of Llama3 70B on the A40_48GB GPU, with Q4_K_M quantization and at F16 (16-bit) precision.

| Model | Quantization | Tokens per Second |
|---|---|---|
| Llama3 70B | Q4_K_M | 12.08 |
| Llama3 70B | F16 | N/A |

Note: No data is available for Llama3 70B in F16 on the NVIDIA A40_48GB.

Token Generation Speed Benchmarks: NVIDIA A40_48GB and Llama3 8B

For comparison, let's also look at the performance of Llama3 8B on the same GPU.

| Model | Quantization | Tokens per Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 88.95 |
| Llama3 8B | F16 | 33.95 |

Observation: The A40_48GB runs the smaller Llama3 8B model far faster than Llama3 70B, reaching 88.95 tokens per second with Q4_K_M quantization and 33.95 in F16.

Performance Analysis: Model and Device Comparison

What Does the Data Tell Us?

The data indicates that the A40_48GB can run Llama3 70B, but not quickly. With Q4_K_M quantization, throughput falls from 88.95 tokens per second on Llama3 8B to 12.08 on Llama3 70B. It's akin to having a supercar in a parking lot: it's technically capable of high speeds, but its environment doesn't let it shine.
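
To put a number on that drop, here is a minimal sketch that computes speed ratios from the benchmark tables above. The dictionary layout and the `speed_ratio` helper are illustrative names, not part of any benchmark tool.

```python
# Tokens per second on the A40_48GB, copied from the tables above.
TOKENS_PER_SECOND = {
    ("Llama3 8B", "Q4_K_M"): 88.95,
    ("Llama3 8B", "F16"): 33.95,
    ("Llama3 70B", "Q4_K_M"): 12.08,
}


def speed_ratio(faster: tuple, slower: tuple) -> float:
    """Return how many times faster one configuration is than another."""
    return TOKENS_PER_SECOND[faster] / TOKENS_PER_SECOND[slower]


# Same quantization, different model size.
size_gap = speed_ratio(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))
# Same model, different weight format.
format_gap = speed_ratio(("Llama3 8B", "Q4_K_M"), ("Llama3 8B", "F16"))

print(f"8B vs 70B at Q4_K_M: {size_gap:.2f}x")
print(f"Q4_K_M vs F16 on 8B: {format_gap:.2f}x")
```

With these figures, Llama3 8B generates tokens a little over seven times faster than Llama3 70B at the same quantization, and Q4_K_M is roughly 2.6 times faster than F16 on the 8B model.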

Quantization: A Trade-Off Between Accuracy and Speed

- Q4_K_M: This quantization technique, using roughly 4 bits per weight, offers a balance between accuracy and speed. It's like using a slightly blurry lens on your camera; it reduces the details but allows for faster processing.

- F16: Using 16 bits per weight, F16 preserves more precision but needs several times the memory and generates tokens more slowly (33.95 versus 88.95 tokens per second for Llama3 8B). It's like using a high-resolution lens; it captures every detail but needs more time to process.

Since there is no F16 result for Llama3 70B on the A40_48GB, we can't compare it with the Q4_K_M version. The most likely reason is memory: the model's 16-bit weights alone come to roughly 140 GB, far more than the card's 48 GB.
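
A rough way to see why F16 is a poor fit for the 70B model on this card is to estimate the memory needed for the weights alone. The sketch below assumes nominal parameter counts of 8 and 70 billion and an average of about 4.8 bits per weight for Q4_K_M; that second figure is an approximation chosen for illustration, not a measured value, and the estimate ignores the KV cache and activations, which need additional memory.

```python
GPU_MEMORY_GB = 48  # A40_48GB

# Nominal parameter counts (assumption: real checkpoints differ slightly).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Bits per weight. 16 is exact for F16; 4.8 for Q4_K_M is an assumed average.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights only, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in MODELS.items():
    for fmt, bits in FORMATS.items():
        gb = weight_memory_gb(n_params, bits)
        verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
        print(f"{model} {fmt}: ~{gb:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions only Llama3 70B in F16 exceeds 48 GB, which is consistent with the missing F16 entry in the table.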

Practical Recommendations: Use Cases and Workarounds

Use Cases for NVIDIA A40_48GB and Llama3 70B

Even though the A40_48GB might not be the champion of speed for Llama3 70B, it can still be a useful combination for specific use cases.

- Research and Experimentation: If you want to explore what Llama3 70B can do, the A40_48GB is a sufficient platform: the Q4_K_M version runs on a single card at about 12 tokens per second.

- Batch Processing: For offline jobs that work through large amounts of text and don't need interactive response times, the A40_48GB's 48GB of memory lets the quantized 70B model run on one card.
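
To judge whether a batch job is practical at this speed, a back-of-the-envelope estimate helps. The sketch below assumes the single-stream rate of 12.08 tokens per second from the benchmark table and counts generated tokens only; the document count and tokens per document are made-up example values, and `estimate_job_hours` is a hypothetical helper.

```python
def estimate_job_hours(n_documents: int, tokens_per_document: int,
                       tokens_per_second: float) -> float:
    """Estimate wall-clock hours to generate output for a batch job.

    Ignores prompt processing time and assumes a constant generation rate.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    total_tokens = n_documents * tokens_per_document
    return total_tokens / tokens_per_second / 3600


# Example values (assumptions): 1,000 documents, 200 generated tokens each.
hours = estimate_job_hours(1000, 200, tokens_per_second=12.08)
print(f"Estimated generation time: {hours:.1f} hours")
```

Processing several requests in parallel may raise aggregate throughput above the single-stream figure, so treat the result as a conservative starting point.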

Workarounds for Performance Bottlenecks

Here are a few strategies to get the most out of your setup:

- Optimize Your Code: Batch multiple requests together to raise aggregate throughput, and if you have more than one GPU, use model parallelism to split the model across them.

- Explore Other Devices: If performance is a crucial factor, consider more powerful GPUs like the A100 or H100, or cloud-based platforms with pre-configured LLM environments.
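
Whichever workaround you try, measure tokens per second before and after so you know whether it helped. Here is a minimal timing sketch, assuming your inference stack can be wrapped in a function that yields tokens one at a time; `fake_generate` is a hypothetical stand-in for a real model call, not an API from any library.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[str, int], Iterable[str]],
                              prompt: str, max_tokens: int) -> float:
    """Time a streaming generator and return generated tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate(prompt, max_tokens))
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed if elapsed > 0 else float("inf")


def fake_generate(prompt: str, max_tokens: int) -> Iterable[str]:
    """Hypothetical stand-in for a model: emits one dummy token per millisecond."""
    for i in range(max_tokens):
        time.sleep(0.001)
        yield f"tok{i}"


rate = measure_tokens_per_second(fake_generate, "Hello", max_tokens=50)
print(f"{rate:.1f} tokens/second")
```

Swap `fake_generate` for a wrapper around your own model, and run the measurement several times, since the first call often includes one-time setup costs.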

FAQ:

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model's weights by representing them with fewer bits. Think of it as summarizing a long story in a few sentences – you lose some details but gain a smaller, more manageable story.
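
To make the idea concrete, here is a deliberately simplified sketch of 4-bit quantization: one block of weights is stored as small integer codes plus a single shared scale. This is not the actual Q4_K_M format, which uses a more elaborate block-wise scheme; the function names and sample weights are illustrative assumptions.

```python
def quantize_block(weights, bits=4):
    """Map floats to signed integer codes sharing one scale (absmax scheme)."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    # Fall back to a scale of 1.0 if every weight in the block is zero.
    scale = max(abs(w) for w in weights) / qmax or 1.0
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.44, -0.02, 0.5]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)

for w, c, r in zip(weights, codes, restored):
    print(f"original={w:+.3f}  code={c:+d}  restored={r:+.3f}")
```

Each restored value differs from the original by at most half the scale, which is the "lost detail" traded for a much smaller representation.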

Q: What are tokens?

A: Tokens are the units of text that LLMs process, the building blocks of language for the model. A token can be a whole word, a piece of a word, a punctuation mark, or a space.

Q: How do I choose the right LLM and device for my needs?

A: The key is to consider your specific task, available resources, and performance requirements. Smaller models might run more efficiently on less powerful hardware, while larger models might need more resources. Check out online benchmarks and performance comparisons to guide your decision.

Q: Is there a way to improve the performance of Llama3 70B on the A40_48GB?

A: While the A40_48GB might not be ideal for blazing-fast token generation with Llama3 70B, you can explore:

- Specialized Libraries: Look into libraries optimized for LLM inference like Triton Inference Server (https://developer.nvidia.com/nvidia-triton-inference-server) or TensorRT (https://developer.nvidia.com/tensorrt) to potentially boost performance.

- Fine-tuning: Fine-tuning does not by itself speed up inference, but if you're working on a specific task, a smaller model such as Llama3 8B fine-tuned for your domain may be good enough while generating tokens much faster.

Q: What's the future of LLMs and hardware?

A: The world of LLMs and hardware is evolving quickly, with new models and devices emerging rapidly. The future holds even more powerful GPUs tailored specifically for AI workloads, as well as innovative hardware designs that enhance performance and efficiency. It's an exciting time to be involved in this field!

Keywords:

NVIDIA A40_48GB, Llama3 70B, Llama3 8B, Q4_K_M, F16, token generation speed, performance benchmarks, GPU, LLM, quantization, model parallelism, batch processing, Triton Inference Server, TensorRT, fine-tuning, large language model, AI, deep learning, inference