Is NVIDIA A40 48GB a Good Investment for AI Startups?

Introduction

The world of AI is exploding, and large language models (LLMs) are leading the charge. These models can generate text, translate languages, write creative content, and answer questions conversationally, like a super-smart chatbot.

But running these LLMs requires serious hardware. That's where the NVIDIA A40_48GB comes in. Is it a good investment for AI startups? Let's dive into the details and see if this card can fuel your AI dreams!

Understanding the A40_48GB and LLMs

The A40_48GB is a professional data-center GPU built on NVIDIA's Ampere architecture and designed for demanding workloads like AI training and inference. It has 48GB of GDDR6 memory with ECC and 10,752 CUDA cores.

To understand why this matters, let's talk about LLMs. Imagine an LLM as a massive brain – it needs enough memory to store all the information it's learned and a fast processor to crunch through the data when you ask it questions. That's where the A40_48GB shines!

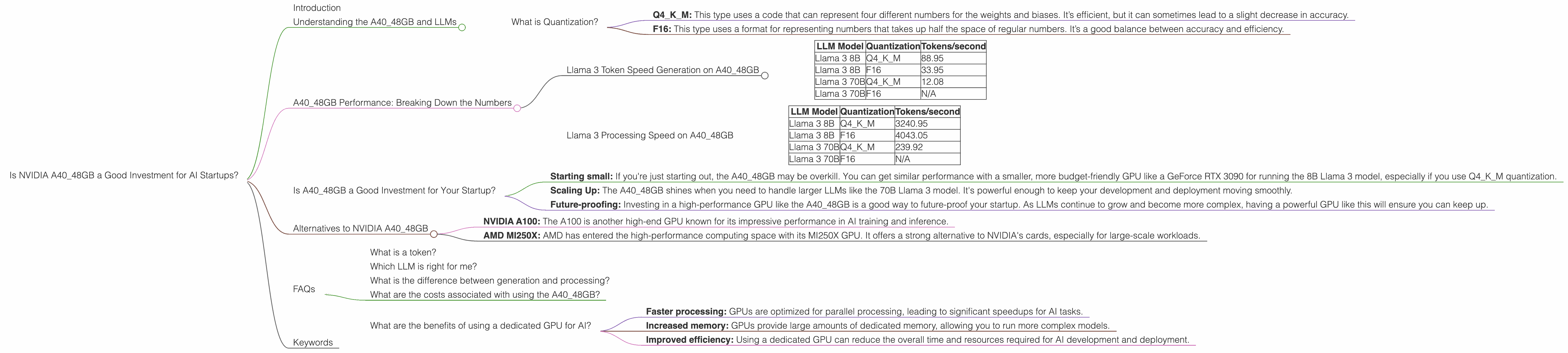

What is Quantization?

Before diving into the specifics, we need to understand a key concept: quantization. Imagine you have a huge book filled with very precise numbers, and you want a smaller, more efficient version. Quantization does just that. It stores each of the model's numbers with fewer bits, trading a little precision for a large saving in space. The LLM then needs less memory and usually generates text faster, much like working with a compressed file.

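The benchmarks in this article use two weight formats:

- Q4_K_M: A 4-bit format from the llama.cpp family of quantization schemes. Each weight is stored in roughly 4 bits, so it can take one of 16 levels within a small block of weights that shares a scale factor. It shrinks the model to under a third of its F16 size, but it can sometimes lead to a slight decrease in accuracy.

- F16: 16-bit (half-precision) floating point, which takes up half the space of standard 32-bit numbers. Strictly speaking it is a reduced-precision format rather than quantization, and it serves as the high-accuracy baseline here.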
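To make the idea concrete, here is a minimal sketch of 4-bit quantization on one small block of weights. It is a simplified symmetric scheme with a single shared scale, not the actual Q4_K_M algorithm, and the function names and sample weights are made up for illustration.

```python
def quantize_block(values, bits=4):
    """Map a block of floats to signed integer codes that share one scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for signed 4-bit codes
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest level and clamp to the signed 4-bit range [-8, 7].
    codes = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, max abs error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error per weight is at most half of one scale step. That small error is where the slight accuracy loss comes from.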

A40_48GB Performance: Breaking Down the Numbers

Now, let's see how the A40_48GB performs with different models. We'll focus on Llama 3, a popular open-source language model that's known for its impressive capabilities.

Llama 3 Token Generation Speed on A40_48GB

Here's a breakdown of how many tokens per second (tokens/second) the A40_48GB can generate with Llama 3, depending on the model size and quantization technique:

| Model | Format | Generation speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 88.95 |
| Llama 3 8B | F16 | 33.95 |
| Llama 3 70B | Q4_K_M | 12.08 |
| Llama 3 70B | F16 | N/A |

Key Takeaways:

- Smaller is faster: The A40_48GB generates tokens much faster with the smaller 8B Llama 3 model compared to the 70B model. This makes sense, as the smaller model has a lighter computational load.

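- Quantization matters: On the 8B model, Q4_K_M generates tokens about 2.6 times faster than F16 (88.95 vs. 33.95 tokens/second). No F16 result is available for the 70B model, so the same comparison can't be made there.

- The power of F16: The A40_48GB can't run the 70B model in F16, because at 2 bytes per parameter the weights alone would need roughly 140GB, far more than the card's 48GB. For the smaller 8B model, F16 remains a practical option when you want to avoid quantization error and can accept lower speed.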
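The ratios behind these takeaways are easy to check. The sketch below uses the generation speeds from the table above; the 500-token reply length is an arbitrary example value, not a benchmark setting.

```python
# Generation speeds in tokens/second, copied from the table above.
generation_tps = {
    ("Llama 3 8B", "Q4_K_M"): 88.95,
    ("Llama 3 8B", "F16"): 33.95,
    ("Llama 3 70B", "Q4_K_M"): 12.08,
}


def seconds_to_generate(num_tokens, tokens_per_second):
    """Time to produce num_tokens at a steady generation speed."""
    return num_tokens / tokens_per_second


q4_vs_f16 = generation_tps[("Llama 3 8B", "Q4_K_M")] / generation_tps[("Llama 3 8B", "F16")]
small_vs_large = generation_tps[("Llama 3 8B", "Q4_K_M")] / generation_tps[("Llama 3 70B", "Q4_K_M")]

print(f"8B: Q4_K_M is {q4_vs_f16:.2f}x faster than F16")
print(f"Q4_K_M: 8B is {small_vs_large:.2f}x faster than 70B")

reply_tokens = 500  # arbitrary example length
for (model, fmt), tps in generation_tps.items():
    print(f"{model} {fmt}: {seconds_to_generate(reply_tokens, tps):.1f} s for {reply_tokens} tokens")
```

Turning tokens/second into seconds per reply makes the gap easier to judge: a reply that takes a few seconds on the 8B model takes most of a minute on the 70B model.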

Llama 3 Processing Speed on A40_48GB

The A40_48GB processes the tokens of an incoming prompt far faster than it generates new ones, because a prompt can be handled in parallel. Here's a look at the processing speed:

| Model | Format | Processing speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 3240.95 |
| Llama 3 8B | F16 | 4043.05 |
| Llama 3 70B | Q4_K_M | 239.92 |
| Llama 3 70B | F16 | N/A |

Key Observations:

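- F16 triumphs: Surprisingly, F16 processes tokens faster than Q4_K_M on the 8B model (4043.05 vs. 3240.95 tokens/second). One plausible explanation is that prompt processing is limited by compute rather than memory, so the extra work of unpacking 4-bit weights outweighs the memory savings.

- The trade-off: Q4_K_M is slower at processing and slightly less accurate, but it needs far less memory and generates tokens faster. Which format wins depends on whether your workload is dominated by long prompts or long outputs.

- Scaling the 70B model: It's not possible to run the 70B model in F16 on a single A40_48GB. This is a hardware memory limit, not a software one: the F16 weights need roughly 140GB, so a future update won't change it. Running them would take several GPUs or offloading part of the model to system memory.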
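A quick back-of-the-envelope check shows why the 70B F16 row is empty. This sketch counts weights only and ignores the KV cache and activations, so real usage is higher. The parameter counts are the nominal 8 and 70 billion, and the 4.8 bits per weight for Q4_K_M is an assumed approximate average, not an exact figure.

```python
GPU_MEMORY_GB = 48  # memory capacity of the A40_48GB


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


models = {"Llama 3 8B": 8, "Llama 3 70B": 70}   # nominal parameter counts, in billions
formats = {"F16": 16.0, "Q4_K_M": 4.8}          # 4.8 is an assumed average for Q4_K_M

for model, params in models.items():
    for fmt, bits in formats.items():
        need = weight_memory_gb(params, bits)
        verdict = "fits" if need <= GPU_MEMORY_GB else "does not fit"
        print(f"{model} {fmt}: ~{need:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions the 70B model at Q4_K_M squeezes into 48GB with only a few gigabytes to spare, which also limits how long a context you can use with it.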

Is A40_48GB a Good Investment for Your Startup?

The answer depends on your specific needs. Let's break it down:

- Starting small: If you're just starting out, the A40_48GB may be overkill. A smaller, more budget-friendly GPU like a 24GB GeForce RTX 3090 can run the 8B Llama 3 model comfortably, especially if you use Q4_K_M quantization.

- Scaling up: The A40_48GB shines when you need to handle larger LLMs like the 70B Llama 3 model. With Q4_K_M quantization the model fits on a single card and generates about 12 tokens per second, which is workable for development and light deployment.

- Future-proofing: 48GB of memory gives you headroom for larger models and longer contexts than consumer cards allow. Keep in mind, though, that LLMs continue to grow, and the largest models already exceed what a single card can hold without heavy quantization.

Alternatives to NVIDIA A40_48GB

While the A40_48GB is a strong choice, other powerful alternatives exist. Here are some popular options:

- NVIDIA A100: The A100 is a higher-end data-center GPU, available with 40GB or 80GB of high-bandwidth memory, and is known for its impressive performance in AI training and inference.

- AMD MI250X: AMD has entered the high-performance computing space with its MI250X GPU. It offers a strong alternative to NVIDIA's cards, especially for large-scale workloads.

FAQs

What is a token?

Tokens are the building blocks of language models. They represent individual words, punctuation marks, or even parts of words. For example, a tokenizer might split the word "hello" into two pieces such as "hel" and "lo", while very common words are often a single token. The exact split depends on the model's tokenizer.

Which LLM is right for me?

The best LLM for you depends on your specific needs. The size of the model (like 8B or 70B parameters) largely determines its capability and its hardware requirements. Smaller models are typically faster but less knowledgeable, while larger models are more powerful but require more memory and compute.

What is the difference between generation and processing?

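Processing refers to reading the prompt you send: the model evaluates all of the input tokens, which it can do in parallel, so the tokens/second figure is high. Generation refers to producing the reply, which happens one token at a time and is therefore much slower. Every request involves both steps, which is why the two speeds are reported separately.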
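As a rough illustration, the two speeds can be combined into a response-time estimate. The sketch below uses the Llama 3 8B Q4_K_M figures from the tables above; the prompt and reply lengths are arbitrary example values, and real latency also depends on batching, context length, and software overhead.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Split a request into prompt-processing time and generation time."""
    processing_time = prompt_tokens / processing_tps
    generation_time = output_tokens / generation_tps
    return processing_time, generation_time


# Llama 3 8B Q4_K_M speeds from the tables above; token counts are made-up examples.
processing_time, generation_time = estimate_latency_seconds(
    prompt_tokens=1000,
    output_tokens=200,
    processing_tps=3240.95,
    generation_tps=88.95,
)

print(f"prompt processing: {processing_time:.2f} s")
print(f"generation:        {generation_time:.2f} s")
print(f"total:             {processing_time + generation_time:.2f} s")
```

Even with a prompt five times longer than the reply, generation dominates the total, so generation speed is usually the number to watch for chat-style workloads.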

What are the costs associated with using the A40_48GB?

The A40_48GB is a premium product, and its cost will vary depending on where you purchase it. Additionally, running a high-performance GPU like this can involve ongoing costs for power consumption and maintenance.
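For the power part of that bill, a simple upper-bound estimate is enough to get started. The sketch assumes the card draws its 300W maximum board power around the clock, and the electricity price is a hypothetical placeholder; it leaves out cooling, the host server, and maintenance.

```python
A40_MAX_POWER_WATTS = 300   # maximum board power of the A40
PRICE_PER_KWH = 0.15        # hypothetical electricity price; substitute your own rate
HOURS_PER_DAY = 24          # assumes the card runs at full load all day
DAYS_PER_MONTH = 30


def monthly_energy_cost(power_watts, hours_per_day, days, price_per_kwh):
    """Return (energy in kWh, cost) for one month at a constant power draw."""
    kwh = power_watts / 1000 * hours_per_day * days
    return kwh, kwh * price_per_kwh


kwh, cost = monthly_energy_cost(
    A40_MAX_POWER_WATTS, HOURS_PER_DAY, DAYS_PER_MONTH, PRICE_PER_KWH
)
print(f"{kwh:.0f} kWh per month, about {cost:.2f} in electricity at the assumed rate")
```

Actual draw is usually lower than the maximum, so treat the result as a ceiling for the GPU alone rather than a forecast.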

What are the benefits of using a dedicated GPU for AI?

Using a dedicated GPU for your AI workloads offers several advantages:

- Faster processing: GPUs are optimized for parallel processing, leading to significant speedups for AI tasks.

- Increased memory: GPUs provide large amounts of dedicated memory, allowing you to run more complex models.

- Improved efficiency: Using a dedicated GPU can reduce the overall time and resources required for AI development and deployment.

Keywords

NVIDIA A40_48GB, AI startups, LLM, Llama 3, GPU performance, token speed, processing speed, quantization, Q4_K_M, F16, AI hardware, investment, deep learning, natural language processing, open-source language models, GPU benchmarks, AMD MI250X, NVIDIA A100.