Is NVIDIA A100 SXM 80GB Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is moving fast, with new models and capabilities emerging constantly. One of the hottest topics is local LLM inference: running these models on your own hardware for greater control, privacy, and potentially lower costs. These models are computationally demanding, though, so you need serious hardware to handle the load. In this deep dive, we'll look at the NVIDIA A100 SXM 80GB, a powerful data center GPU, and explore its performance with the Llama3 8B model.

For those less familiar with the jargon, imagine LLMs as super-smart text generators capable of writing stories, translating languages, summarizing information, and much more. The "8B" in Llama3 8B refers to the model's roughly 8 billion parameters, a rough measure of its size and capacity. Think of it like a giant brain with 8 billion connections.

Performance Analysis

It's time to get technical! We'll dissect the A100 SXM 80GB's performance with Llama3 8B, focusing on token generation speed. Think of tokens as individual units of text, such as words, word fragments, or punctuation marks. The faster the GPU can generate these tokens, the quicker your LLM will respond to prompts.

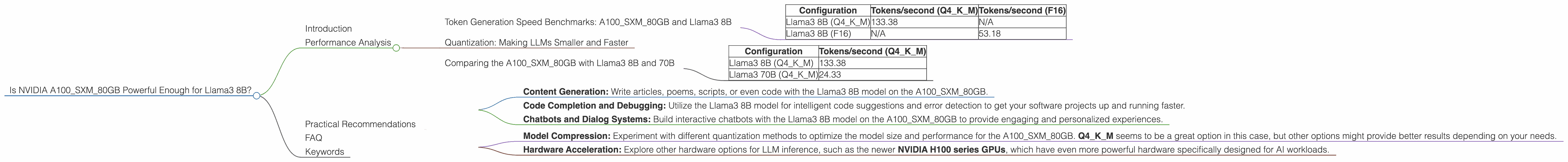

Token Generation Speed Benchmarks: A100 SXM 80GB and Llama3 8B

| Configuration | Quantization | Tokens/second |
|---|---|---|
| Llama3 8B | Q4_K_M | 133.38 |
| Llama3 8B | F16 | 53.18 |
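To put the table's figures in practical terms, here is a minimal Python sketch that converts tokens per second into the time needed to produce a reply. The 500-token reply length is an assumption chosen purely for illustration, and the calculation assumes a steady generation rate with no prompt-processing overhead.

```python
# Throughput figures (tokens/second) taken from the table above.
THROUGHPUT = {"Q4_K_M": 133.38, "F16": 53.18}


def response_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady generation rate."""
    return num_tokens / tokens_per_second


reply_tokens = 500  # assumed reply length, for illustration only
for name, tps in THROUGHPUT.items():
    print(f"{name}: {response_seconds(reply_tokens, tps):.1f} s for {reply_tokens} tokens")

# Ratio of the two measured rates.
speedup = THROUGHPUT["Q4_K_M"] / THROUGHPUT["F16"]
print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")
```

Under these assumptions, the difference between the two precisions is a matter of seconds per reply, which matters most for interactive use such as chat.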

Important Note: Each configuration was measured at its own precision. With Q4_K_M quantization, the A100 SXM 80GB generates 133.38 tokens per second with Llama3 8B, roughly 2.5 times the 53.18 tokens per second of the F16 version. But what's the deal with "Q4_K_M"?

Quantization: Making LLMs Smaller and Faster

Imagine you have a massive, high-resolution image. You can compress it using different techniques to make it smaller and faster to download. Quantization is similar for LLMs. It stores the model's weights with fewer bits each, which makes the model smaller and quicker to move through memory at the cost of some precision.
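The idea of trading precision for size can be sketched in a few lines of Python. This is a toy example, not the actual Q4_K_M format: it uses a single shared scale and simple round-to-nearest, whereas real 4-bit schemes work on blocks of weights with more elaborate scaling. The function names and sample weights are made up for illustration.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-8, 7] using one shared scale (toy scheme)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the signed 4-bit range.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


# Made-up sample weights, for illustration only.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.02, 0.45]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.4f}")
```

Each weight is now stored as a small integer plus one shared scale, and the round-trip error shows the precision that was given up in exchange.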

The A100 SXM 80GB shines with the Llama3 8B model when using Q4_K_M quantization. This is a specific 4-bit compressed representation that shrinks the model while preserving most of its output quality. Think of it as an efficient compression method that lets the A100 SXM 80GB process the LLM more quickly.
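A rough back-of-the-envelope estimate shows why fewer bits per weight matters. The sketch below assumes a nominal 8 billion parameters and counts only the weights; real Q4_K_M files are somewhat larger than the nominal 4 bits per weight suggests, and inference also needs memory for the context cache and activations, so treat the numbers as lower bounds.

```python
def weight_gigabytes(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS_8B = 8e9      # nominal parameter count implied by "8B" (assumption)
GPU_MEMORY_GB = 80   # memory on the A100 SXM 80GB

for label, bits in [("F16", 16), ("4-bit (nominal)", 4)]:
    size = weight_gigabytes(PARAMS_8B, bits)
    print(f"{label}: ~{size:.0f} GB of weights, {GPU_MEMORY_GB} GB available")
```

Either way the 8B model fits comfortably in 80 GB; the benefit of quantization here is mainly that far fewer bytes have to be read for every generated token.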

Comparing Llama3 8B and 70B on the A100 SXM 80GB

| Configuration | Tokens/second (Q4_K_M) |
|---|---|
| Llama3 8B (Q4_K_M) | 133.38 |
| Llama3 70B (Q4_K_M) | 24.33 |

The A100 SXM 80GB performs significantly better with the smaller Llama3 8B model, producing about 5.5 times as many tokens per second as it does with the larger Llama3 70B model. This is understandable: a bigger model has far more weights to read and compute with for every generated token.
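The comparison can be worked through with a short Python sketch that converts the two throughput figures into per-token latency and sets the speed gap against the size gap. The parameter counts are the nominal 8 and 70 billion implied by the model names, which is an approximation.

```python
# Measured throughput from the table above (tokens/second, Q4_K_M).
TOKENS_PER_SECOND = {"Llama3 8B": 133.38, "Llama3 70B": 24.33}
# Nominal sizes taken from the model names (approximate).
PARAMS_BILLIONS = {"Llama3 8B": 8, "Llama3 70B": 70}

for name, tps in TOKENS_PER_SECOND.items():
    print(f"{name}: {1000 / tps:.1f} ms per token")

speed_ratio = TOKENS_PER_SECOND["Llama3 8B"] / TOKENS_PER_SECOND["Llama3 70B"]
size_ratio = PARAMS_BILLIONS["Llama3 70B"] / PARAMS_BILLIONS["Llama3 8B"]
print(f"8B generates {speed_ratio:.2f}x faster; 70B is {size_ratio:.2f}x larger")
```

On these figures the slowdown is smaller than the increase in parameter count, so throughput does not fall in strict proportion to model size in this benchmark.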

Practical Recommendations

Use Cases:

- Content Generation: Write articles, poems, scripts, or even code with the Llama3 8B model on the A100 SXM 80GB.

- Code Completion and Debugging: Use the Llama3 8B model for intelligent code suggestions and error detection to get your software projects up and running faster.

- Chatbots and Dialog Systems: Build interactive chatbots with the Llama3 8B model on the A100 SXM 80GB to provide engaging and personalized experiences.

Workarounds:

- Model Compression: Experiment with different quantization methods to balance model size, speed, and output quality on the A100 SXM 80GB. Q4_K_M is a strong option in the benchmarks above, but other formats might suit you better depending on your needs.

- Hardware Acceleration: Explore other hardware options for LLM inference, such as the newer NVIDIA H100 series GPUs, which offer even more powerful hardware designed for AI workloads.
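When experimenting with different quantization methods or hardware, it helps to measure token generation speed the same way each time. Below is a minimal Python timing sketch. The `fake_generate` function is a hypothetical stand-in that simply sleeps; in practice you would replace it with a call into whatever inference runtime you use, and the article does not specify which one produced its benchmarks.

```python
import time
from typing import Callable, List


def measure_tokens_per_second(generate: Callable[[str, int], List[str]],
                              prompt: str, max_tokens: int) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt: str, max_tokens: int) -> List[str]:
    """Hypothetical stand-in for a real model call; sleeps 1 ms per token."""
    out = []
    for i in range(max_tokens):
        time.sleep(0.001)
        out.append(f"tok{i}")
    return out


rate = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"{rate:.0f} tokens/second")
```

Running the same prompt and token budget against each configuration, and averaging a few runs, gives numbers you can compare directly.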

FAQ

Q: What is the cost of an NVIDIA A100 SXM 80GB?

A: The A100 SXM 80GB is a socketed module rather than a PCIe card, so it is generally not sold as a standalone product. It is typically found in servers and other data center solutions, and the cost varies with the specific configuration.

Q: Are there any other GPUs that can handle Llama3 8B well?

A: Yes, other high-end GPUs like the NVIDIA RTX 4090 and AMD Radeon RX 7900 XTX can also handle Llama3 8B, although the performance might not be as impressive as the A100 SXM 80GB's.

Q: Can I use the A100 SXM 80GB for personal use?

A: While technically possible, it's more common to find the A100 SXM 80GB in professional environments due to its hefty price tag and power consumption.

Keywords

LLM, Large Language Model, Llama3, Llama3 8B, Local Inference, NVIDIA A100, NVIDIA A100 SXM 80GB, GPU, Performance, Token Generation Speed, Token Generation per Second, Quantization, Q4_K_M, F16, AI, Machine Learning, Natural Language Processing, NLP, Content Generation, Chatbot, Model Compression, Hardware Acceleration, Developers, Geeks