Is NVIDIA A100 SXM 80GB Powerful Enough for Llama3 70B?

Introduction

The world of large language models (LLMs) is exploding, with new models constantly being released and pushing the boundaries of what's possible. One of the hottest topics in this space is local inference – running these powerful models directly on your own hardware. But with massive models like Llama 3 70B, finding the right GPU for the job can be a real head-scratcher.

In this deep dive, we'll explore the performance of the NVIDIA A100 SXM 80GB with Llama3 70B: a powerhouse GPU paired with a truly massive LLM. We'll break down the token generation speed benchmarks, compare this combo with smaller Llama3 configurations on the same GPU, and offer practical recommendations for use cases and workarounds.

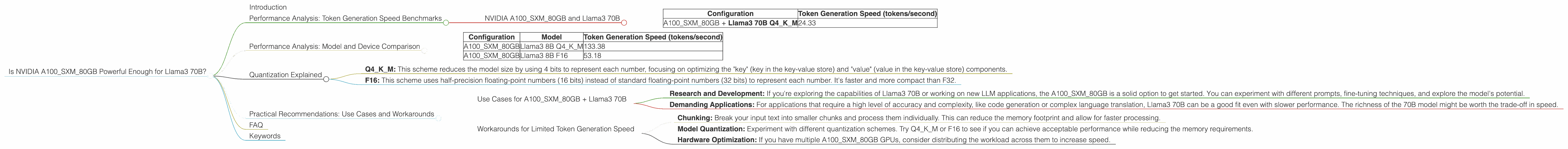

Performance Analysis: Token Generation Speed Benchmarks

The key metric for evaluating LLM performance on a given device is token generation speed: the number of tokens the model produces per second once it starts writing its response. Faster token generation means more responsive applications and a smoother user experience.
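If you want to measure this number on your own setup, a minimal sketch is to time a single generation call and divide the token count by the elapsed time. The `generate(prompt, max_new_tokens)` function below is a hypothetical stand-in for whatever inference call you actually use, assumed to return the list of newly generated token IDs. Because the timer also covers prompt processing, this slightly understates pure generation speed.

```python
import time
from typing import Callable, List


def tokens_per_second(
    generate: Callable[[str, int], List[int]],  # hypothetical inference call
    prompt: str,
    max_new_tokens: int,
) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    new_tokens = generate(prompt, max_new_tokens)  # assumed to return new token IDs
    elapsed = time.perf_counter() - start
    # Note: elapsed includes prompt processing, not just token generation.
    return len(new_tokens) / elapsed
```

In practice you would run this several times and average the results, since the first call often includes warm-up costs.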

NVIDIA A100 SXM 80GB and Llama3 70B

Let's dive into the numbers. The NVIDIA A100 SXM 80GB, with its impressive 80GB of HBM2e memory, is a beast in the GPU world. But can it handle the gargantuan Llama3 70B model? We've got data to show you:

| Configuration | Token Generation Speed (tokens/second) |
|---|---|
| A100 SXM 80GB + Llama3 70B Q4_K_M | 24.33 |

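Important Note: No benchmark is available for A100 SXM 80GB + Llama3 70B F16. At 16 bits per weight, the 70B model's weights alone take about 140 GB, more than a single 80GB card can hold, so that configuration requires multiple GPUs. We'll make sure to update this article as new benchmarks become available.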

What does this mean? The A100 SXM 80GB generates 24.33 tokens per second with Llama3 70B using Q4_K_M quantization. That is a workable rate, but it is well below what the same card reaches with smaller models: Llama3 70B is a massive model, and every generated token requires a pass through all of its weights.
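To make 24.33 tokens per second more concrete, here is a small calculation that converts it into per-token latency and into the time needed for a full response. The 500-token response length is just an illustrative assumption, and the estimate ignores prompt processing time.

```python
def per_token_latency_ms(tokens_per_second: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


def time_for_response_s(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds to generate num_tokens at a steady rate (ignores prompt processing)."""
    return num_tokens / tokens_per_second


rate = 24.33  # A100 SXM 80GB + Llama3 70B Q4_K_M, from the benchmark table
print(f"{per_token_latency_ms(rate):.1f} ms per token")            # 41.1 ms per token
print(f"{time_for_response_s(500, rate):.1f} s for 500 tokens")   # 20.6 s for 500 tokens
```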

Performance Analysis: Model and Device Comparison

For a better understanding of these results, let's compare the A100 SXM 80GB + Llama3 70B result with the same GPU running the much smaller Llama3 8B.

| Device | Model | Token Generation Speed (tokens/second) |
|---|---|---|
| A100 SXM 80GB | Llama3 8B Q4_K_M | 133.38 |
| A100 SXM 80GB | Llama3 8B F16 | 53.18 |

What's the takeaway? The A100 SXM 80GB runs Llama3 8B much faster: roughly 5.5 times the 70B speed with Q4_K_M quantization, and still about 2.2 times faster at unquantized F16 precision. This is expected, as Llama3 8B has less than one-eighth as many parameters as Llama3 70B.
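The speedups quoted above come from simple ratios of the benchmark numbers. This short sketch reproduces them, using only the figures from this article's tables.

```python
# Token generation speeds (tokens/second) on the A100 SXM 80GB, from this article's tables.
speeds = {
    "Llama3 70B Q4_K_M": 24.33,
    "Llama3 8B Q4_K_M": 133.38,
    "Llama3 8B F16": 53.18,
}

baseline = speeds["Llama3 70B Q4_K_M"]
for config, tps in speeds.items():
    # Ratio > 1 means faster than the 70B Q4_K_M configuration.
    print(f"{config:<18} {tps:7.2f} tok/s  {tps / baseline:4.2f}x vs 70B Q4_K_M")
```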

Quantization Explained

Let's talk about quantization for a moment. It's like a compression technique for your LLM: it reduces the size of the model and makes it possible to run on GPUs with less memory.

Think of it like this: you've got a high-resolution image, but you want to send it over a low-bandwidth connection. You can compress this image into a lower resolution version, sacrificing some detail for a smaller file size. Quantization is similar – it reduces the size of the model by using fewer bits to represent each number, but the quality of the model output might be slightly affected.
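Here is a toy illustration of the "fewer bits per number" idea: a block of weights is scaled so its largest value maps to 7, rounded to small integers that fit in 4 bits, and later multiplied back. This is a simplified absmax scheme chosen for clarity, an assumption for illustration only; it is not the exact layout llama.cpp uses for Q4_K_M.

```python
from typing import List, Tuple


def quantize_4bit(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric absmax quantization of one block to integers in [-7, 7] (fits in 4 bits).

    Simplified illustration only; real formats such as Q4_K_M use a more elaborate block layout.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate original values from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
for w, r in zip(weights, restored):
    print(f"{w:+.2f} -> {r:+.3f}  (error {abs(w - r):.3f})")
```

Each restored value is off by at most half a quantization step, which is the "slightly affected quality" trade-off described above.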

Two popular formats are:

- Q4_K_M: A llama.cpp "k-quant" format that stores most weights in about 4 bits, grouped into blocks that each carry their own scale factors. The "K" refers to this k-quant scheme (not to the attention keys and values), and "M" (medium) means some tensors are kept at higher precision to protect output quality.

- F16: This format uses half-precision floating-point numbers (16 bits) instead of standard single-precision floats (32 bits, F32) to represent each number. It halves memory use compared with F32 and usually serves as the unquantized reference point for quantized formats.
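A quick back-of-the-envelope estimate shows why bits per weight matter so much for a 70B model: weight memory is roughly parameters times bits divided by 8. This sketch uses the nominal 70 billion parameters and assumes about 4.8 bits per weight on average for Q4_K_M; that figure is an approximation, not an exact property of the format. It also ignores the KV cache, activations, and runtime overhead, which need additional room.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes).

    Ignores the KV cache, activations, and runtime overhead.
    """
    return num_params * bits_per_weight / 8 / 1e9


GPU_MEMORY_GB = 80  # A100 SXM 80GB

# 4.8 bits/weight for Q4_K_M is an assumed round average, not an exact value.
for label, bits in [("F16", 16), ("Q4_K_M (~4.8 bits/weight)", 4.8)]:
    gb = weight_memory_gb(70e9, bits)  # nominal 70B parameters
    fits = "fits" if gb < GPU_MEMORY_GB else "does not fit"
    print(f"Llama3 70B {label}: ~{gb:.0f} GB of weights, {fits} in {GPU_MEMORY_GB} GB")
```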

Practical Recommendations: Use Cases and Workarounds

Use Cases for A100 SXM 80GB + Llama3 70B

While the A100 SXM 80GB might not be blazing fast with Llama3 70B compared to smaller models, it can still be a good choice for certain use cases:

- Research and Development: If you're exploring the capabilities of Llama3 70B or working on new LLM applications, the A100 SXM 80GB is a solid option to get started. You can experiment with different prompts and fine-tuning techniques, and explore the model's potential.

- Demanding Applications: For applications that require a high level of accuracy and complexity, like code generation or complex language translation, Llama3 70B can be a good fit even with slower performance. The richness of the 70B model might be worth the trade-off in speed.

Workarounds for Limited Token Generation Speed

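Here are some strategies for working around the speed limitations of the A100 SXM 80GB with Llama3 70B:

- Chunking: Break long input text into smaller chunks and process them individually. Shorter contexts reduce the memory used by the KV cache and the time spent processing the prompt, although they do not raise the per-token generation rate itself.

- Model Quantization: Experiment with different quantization schemes. Q4_K_M already lets Llama3 70B fit on a single card; lower-bit formats shrink the model further and may generate faster, at some cost in output quality. F16 preserves quality but needs about 140 GB for the weights alone, so it requires more than one 80GB GPU.

- Hardware Optimization: If you have multiple A100 SXM 80GB GPUs, consider distributing the model across them. This frees memory for higher-precision formats or longer contexts, and splitting each layer's work across GPUs (tensor parallelism) can also increase generation speed.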
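The simplest way to distribute a model is to give each GPU a contiguous block of layers. Here is a minimal sketch of that assignment, assuming Llama3 70B's 80 transformer layers and two GPUs as an illustrative setup; the `split_layers` helper is hypothetical, not part of any particular inference library. With this kind of layer split, each token still passes through the GPUs one after another, so it mainly adds memory capacity rather than single-request speed.

```python
from typing import List


def split_layers(num_layers: int, num_gpus: int) -> List[range]:
    """Hypothetical helper: assign contiguous blocks of layers to GPUs as evenly as possible."""
    base, extra = divmod(num_layers, num_gpus)
    ranges: List[range] = []
    start = 0
    for gpu in range(num_gpus):
        # The first `extra` GPUs take one additional layer each.
        count = base + (1 if gpu < extra else 0)
        ranges.append(range(start, start + count))
        start += count
    return ranges


# Llama3 70B has 80 transformer layers; two GPUs is an illustrative choice.
for gpu, layers in enumerate(split_layers(80, 2)):
    print(f"GPU {gpu}: layers {layers.start}-{layers.stop - 1}")
```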

FAQ

Q: What are the best GPUs for running Llama3 70B?

A: The A100 SXM 80GB is a strong contender for Llama3 70B, since its ample memory holds the Q4_K_M model on a single card. For maximum speed, though, you'll generally want to explore GPUs with higher token generation speed. Keep an eye on the latest benchmarks and consider options from NVIDIA, AMD, and other manufacturers.

Q: What is quantization?

A: Quantization is a technique used to reduce the size of a model by reducing the precision of its weights and biases. It's like compressing the model into a smaller file without losing too much information.

Q: How does Llama3 70B compare to other large language models?

A: Llama3 70B is an impressive model known for its high performance on various tasks, including text generation, translation, and code generation. It's considered to be a major advancement in the field of LLMs.

Keywords

NVIDIA A100 SXM 80GB, Llama3 70B, LLMs, local inference, token generation speed, quantization, Q4_K_M, F16, performance benchmarks, use cases, workarounds, GPU benchmarks