Is the NVIDIA A100 SXM 80GB a Good Investment for AI Startups?

Introduction

The world of AI is buzzing with excitement, and at the heart of it all are Large Language Models (LLMs). These AI systems can generate text, translate languages, write creative content, and answer questions. Running them is resource-intensive, though, and demands hardware that can handle their computations. Enter the NVIDIA A100 SXM 80GB, a data-center GPU designed for demanding AI workloads.

For AI startups, choosing the right hardware is a crucial decision. This article looks at the capabilities of the A100 SXM 80GB and its measured performance with popular LLMs to help you decide whether it is the right investment for your work.

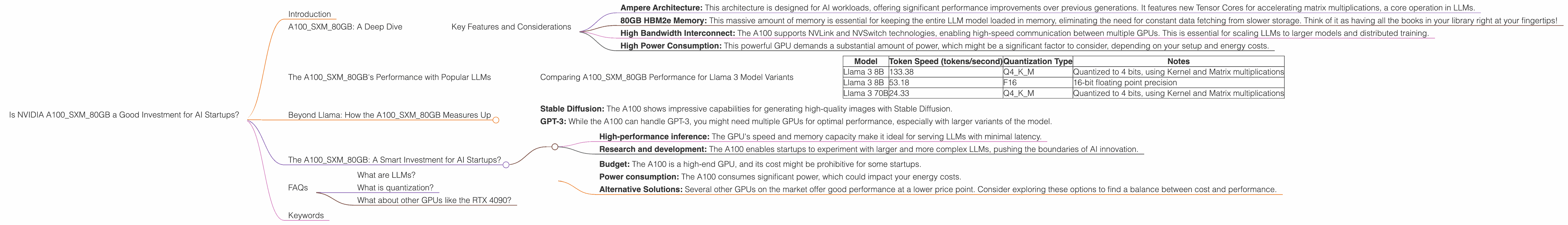

A100 SXM 80GB: A Deep Dive

The NVIDIA A100 SXM 80GB is built on the Ampere architecture and carries 80GB of HBM2e memory. That capacity matters because a model's weights need to fit in GPU memory to run at full speed. As a rough guide, 80GB is 80 billion bytes: at 16-bit precision each parameter takes two bytes, so a single card can hold the weights of a model with up to about 40 billion parameters, before counting the KV cache and activations.

Key Features and Considerations

- Ampere Architecture: This architecture targets AI workloads and offers significant performance improvements over previous generations. Its third-generation Tensor Cores accelerate matrix multiplication, the core operation in LLMs.

- 80GB HBM2e Memory: This capacity lets an entire model stay resident in GPU memory, so weights do not have to be fetched repeatedly from slower host memory or storage. Think of it as having all the books in your library right at your fingertips!

- High Bandwidth Interconnect: The A100 supports NVLink and NVSwitch, which provide high-speed communication between multiple GPUs. This is essential for running models too large for one GPU and for distributed training.

- High Power Consumption: The SXM version of the A100 is rated for up to 400W, which can be a significant factor depending on your power, cooling, and energy costs.
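
The memory point above can be made concrete with a little arithmetic. The following is a minimal sketch, not a sizing tool: the parameter counts are the nominal 8 billion and 70 billion, the 4-bit figure ignores the extra scale data that real quantized formats store, and the 20% `overhead_fraction` for KV cache and activations is a hypothetical placeholder you should replace with your own measurements.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def fits_on_gpu(num_params: float, bits_per_weight: float,
                gpu_memory_gb: float = 80.0,
                overhead_fraction: float = 0.2) -> bool:
    """Check whether weights plus an assumed overhead fit in GPU memory.

    overhead_fraction is a hypothetical allowance for KV cache and
    activations; the real figure depends on context length and batch size.
    """
    needed = weight_memory_gb(num_params, bits_per_weight) * (1 + overhead_fraction)
    return needed <= gpu_memory_gb


# Nominal parameter counts and bit widths (assumptions for illustration).
MODELS = {"8B": 8e9, "70B": 70e9}
FORMATS = {"F16": 16.0, "4-bit": 4.0}

for model_name, params in MODELS.items():
    for format_name, bits in FORMATS.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_on_gpu(params, bits) else "does not fit"
        print(f"{model_name} at {format_name}: ~{size:.0f} GB of weights, {verdict} in 80 GB")
```

Under these assumptions, the only combination that does not fit on one card is the 70B model at 16-bit precision, which is where multi-GPU setups over NVLink come in.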

The A100 SXM 80GB's Performance with Popular LLMs

To understand how the A100 SXM 80GB performs with different LLMs, we'll look at its token generation speed for various model sizes and quantization types. Comparing tokens/second shows how efficiently the GPU handles each scenario.

Comparing A100 SXM 80GB Performance for Llama 3 Model Variants

| Model | Token Speed (tokens/second) | Quantization Type | Notes |
|---|---|---|---|
| Llama 3 8B | 133.38 | Q4_K_M | 4-bit k-quant format (medium variant) from llama.cpp |
| Llama 3 8B | 53.18 | F16 | 16-bit floating point precision |
| Llama 3 70B | 24.33 | Q4_K_M | 4-bit k-quant format (medium variant) from llama.cpp |
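
To put the table in user-facing terms, the sketch below converts each tokens/second figure into the time needed to generate one response and computes the relative speedups. The throughput numbers come from the table; the 500-token response length is a hypothetical value chosen only for illustration.

```python
# Token generation speeds taken from the table above (tokens/second).
BENCHMARKS = {
    ("Llama 3 8B", "4-bit"): 133.38,
    ("Llama 3 8B", "F16"): 53.18,
    ("Llama 3 70B", "4-bit"): 24.33,
}


def seconds_for_response(tokens_per_second: float, response_tokens: int = 500) -> float:
    """Time to generate one response of the given length at a steady rate."""
    return response_tokens / tokens_per_second


def speedup(faster: float, slower: float) -> float:
    """How many times faster one configuration is than another."""
    return faster / slower


for (model, fmt), rate in BENCHMARKS.items():
    # 500 tokens is an assumed response length, not a measured workload.
    print(f"{model} ({fmt}): {seconds_for_response(rate):.1f} s per 500-token response")

q4_vs_f16 = speedup(BENCHMARKS[("Llama 3 8B", "4-bit")], BENCHMARKS[("Llama 3 8B", "F16")])
print(f"8B 4-bit vs 8B F16: {q4_vs_f16:.2f}x faster")
```

This treats generation speed as constant and ignores prompt processing time, so read the results as a rough lower bound on response latency.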

Interpreting the Data: Numbers Explained

- Tokens/Second: More tokens per second means faster processing and a smoother user experience.

- Q4_K_M: A 4-bit quantization format from llama.cpp ("K" for its k-quant scheme, "M" for the medium variant). It reduces the memory footprint of the model, allowing you to run larger models on GPUs with limited memory. It's like compressing a book to fit more books on a shelf.

- F16: 16-bit floating point, which stores each weight in two bytes. It is less precise than 32-bit floating point but is sufficient for most inference use cases. In the table it is slower than Q4_K_M largely because the GPU has to read several times as much weight data for every generated token.

Llama 3 8B: An Ideal Choice for Startups

The A100 SXM 80GB performs strongly with Llama 3 8B: 133.38 tokens/second with Q4_K_M quantization and 53.18 tokens/second at F16. The model runs comfortably on a single GPU, and 4-bit quantization raises throughput without sacrificing too much accuracy. This matters for startups with limited budgets and resources.

Llama 3 70B: A Powerhouse

For larger models like Llama 3 70B, the A100 still delivers 24.33 tokens/second with Q4_K_M quantization on a single GPU. At F16, however, the 70B weights alone need roughly 140GB, more than one card holds, so plan on multiple GPUs if you want to run models of this size unquantized or at higher throughput.

Beyond Llama: How the A100 SXM 80GB Measures Up

The A100 SXM 80GB is a versatile GPU, and its usefulness extends beyond Llama models. It is also suited to other popular generative AI workloads, such as:

- Stable Diffusion: This is an image-generation model rather than an LLM, and it is much smaller than the language models above, so it fits easily within the A100's 80GB of memory.

- GPT-3: At 175 billion parameters, a GPT-3-scale model does not fit on a single 80GB GPU at 16-bit precision, so you would need multiple GPUs for models of this size.

Benchmark data for these models is not included here. Before buying, look for published benchmarks covering the specific models you plan to run.

The A100 SXM 80GB: A Smart Investment for AI Startups?

The A100 SXM 80GB is an excellent investment for AI startups, especially those working on:

- High-performance inference: The GPU's speed and memory capacity make it ideal for serving LLMs with minimal latency.

- Research and development: The A100 enables startups to experiment with larger and more complex LLMs, pushing the boundaries of AI innovation.

However, before making a decision, consider these factors:

- Budget: The A100 is a high-end GPU, and its cost might be prohibitive for some startups.

- Power consumption: The A100 consumes significant power, which could impact your energy costs.

- Alternative Solutions: Several other GPUs on the market offer good performance at a lower price point. Consider exploring these options to find a balance between cost and performance.
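
The power consideration above can be turned into a rough per-token electricity estimate. This is a back-of-the-envelope sketch under loud assumptions: the GPU is taken to draw 400W continuously, throughput is the single-stream tokens/second figure from the benchmark table, and the price of 0.15 per kWh is a hypothetical placeholder. It ignores cooling, the host system, and the purchase price of the card.

```python
def energy_kwh_per_million_tokens(tokens_per_second: float,
                                  power_watts: float = 400.0) -> float:
    """Energy used to generate one million tokens at a steady rate.

    power_watts defaults to 400 W, assuming the GPU runs at its rated
    maximum the whole time (an assumption, not a measurement).
    """
    seconds = 1_000_000 / tokens_per_second
    watt_seconds = power_watts * seconds
    return watt_seconds / 3_600_000  # 1 kWh = 3.6 million watt-seconds


def electricity_cost_per_million_tokens(tokens_per_second: float,
                                        price_per_kwh: float = 0.15,
                                        power_watts: float = 400.0) -> float:
    """Electricity cost for one million tokens at a hypothetical energy price."""
    return energy_kwh_per_million_tokens(tokens_per_second, power_watts) * price_per_kwh


# Throughput figures from the benchmark table in this article.
for label, rate in [("Llama 3 8B, 4-bit", 133.38), ("Llama 3 70B, 4-bit", 24.33)]:
    kwh = energy_kwh_per_million_tokens(rate)
    cost = electricity_cost_per_million_tokens(rate)
    print(f"{label}: ~{kwh:.2f} kWh and ~{cost:.2f} in electricity per million tokens")
```

Serving many requests in a batch typically raises total throughput, so real per-token energy costs can be lower than this single-stream estimate suggests.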

FAQs

What are LLMs?

LLMs are complex AI models that can generate text, translate languages, and perform many other tasks related to language processing. They are trained on vast amounts of text data, enabling them to understand and generate human-like language.

What is quantization?

Quantization is a technique used to reduce the memory footprint of LLMs. It involves converting the model's parameters from high-precision floating-point numbers to lower-precision formats, like 4-bit integers. Think of it like compressing a book into a smaller version without losing too much information.
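
To show the idea in code, here is a deliberately simplified sketch of 4-bit quantization: one block of weights is mapped to small signed integers plus a single floating-point scale. It is an illustration of the general technique only, not the scheme any particular format or library uses, and the sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block to signed integers and one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits, so integers lie in [-7, 7]
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floating-point values from integers and the scale."""
    return [q * scale for q in quantized]


# Made-up weights for illustration only.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit integers:", q)
print("scale:", scale)
print("largest rounding error:", max_error)
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error per weight is at most half the scale. That bounded error is the "without losing too much information" part of the trade.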

What about other GPUs like the RTX 4090?

The RTX 4090 is a capable consumer GPU, but it sits a tier below the A100 SXM 80GB for AI workloads. Its 24GB of GDDR6X memory, compared with the A100's 80GB of HBM2e, and its lower memory bandwidth limit its performance with larger LLMs. It can still be a cost-effective choice for smaller or quantized models and less demanding tasks.

Keywords

NVIDIA A100 SXM 80GB, AI startups, LLM, Llama 3, Stable Diffusion, GPT-3, performance, token generation, quantization, GPU, Ampere, HBM2e, inference, research and development, tokens/second, Q4_K_M, F16.