Is NVIDIA A100 PCIe 80GB Powerful Enough for Llama3 8B?

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models like Llama 3 8B pushing the boundaries of what's possible. But with these powerful models comes the question of hardware. You need a beefy machine to run these LLMs locally, and the NVIDIA A100 PCIe 80GB is a popular choice for AI enthusiasts and developers.

This article dives into the performance of an A100 PCIe 80GB GPU running Llama 3 8B, analyzing its token generation speed and comparing it with the larger Llama 3 70B. We'll use real benchmark data to give you a clear picture of what you can expect, and provide practical recommendations for use cases and potential workarounds. So, buckle up and get ready to explore the world of LLMs and their performance!

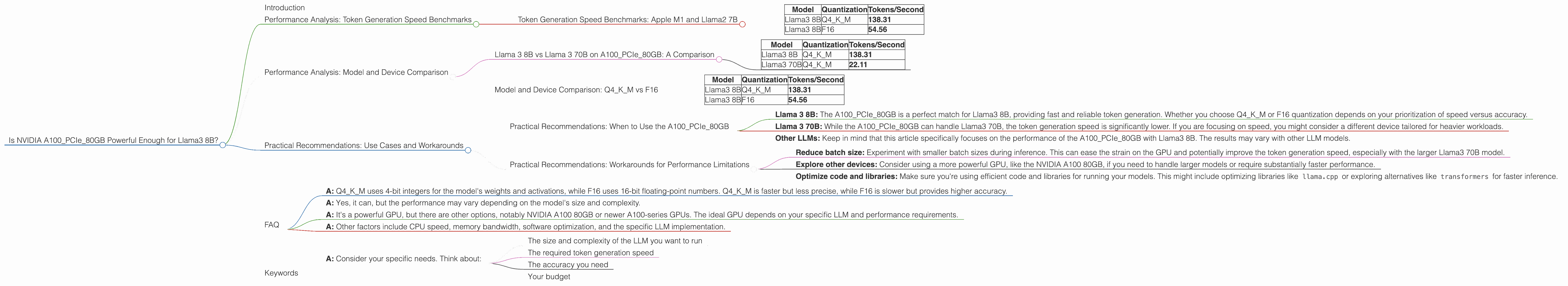

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: A100 PCIe 80GB and Llama 3 8B

One of the most important aspects of LLM performance is token generation speed, which measures how quickly the model can produce text. The A100 PCIe 80GB is a powerhouse when it comes to token generation speed, especially for Llama 3 8B.

Let's start with the Llama 3 8B model. We'll look at benchmarks for two precision levels: Q4_K_M (a llama.cpp format that stores the weights at roughly 4 bits each) and F16 (weights stored as 16-bit floating-point values).

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 138.31 |
| Llama 3 8B | F16 | 54.56 |

Key Takeaways:

- The A100 PCIe 80GB generates 138.31 tokens/second with Q4_K_M quantization for Llama 3 8B.

- For Llama 3 8B, this makes the A100 PCIe 80GB a solid choice for fast and efficient token generation, particularly with 4-bit quantization.

- If you want the highest possible precision, F16 is the better option, but token generation drops to 54.56 tokens/second, roughly 2.5 times slower.
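To make these figures more concrete, here is a minimal sketch that converts tokens/second into wall-clock time for a response. The 500-token response length is an illustrative assumption, and the calculation ignores prompt processing time, so treat the results as rough lower bounds.

```python
# Tokens/second for Llama 3 8B on the A100 PCIe 80GB, taken from the table above.
SPEEDS = {"Q4_K_M": 138.31, "F16": 54.56}


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time needed to generate num_tokens at a steady decode rate."""
    return num_tokens / tokens_per_second


# 500 tokens is an assumed response length, chosen only for illustration.
for name, tps in SPEEDS.items():
    print(f"{name}: {generation_seconds(500, tps):.1f} s for 500 tokens")
# Q4_K_M: 3.6 s for 500 tokens
# F16: 9.2 s for 500 tokens
```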

Analogies:

Think of token generation speed like the speed of a typist. A faster typist can produce more text in the same amount of time, just like a faster token generator can generate more text.

Quantization Explained:

Quantization is like compressing the model's weights to save memory and speed up processing. Q4_K_M stores each weight in roughly 4 bits, rather than the 16-bit floating-point numbers used in F16. This compression allows for faster processing, but might slightly decrease the model's accuracy.
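The idea can be sketched with a toy example. The code below is a simplified symmetric 4-bit scheme with one shared scale per block, written only to illustrate the compression and the small rounding error it introduces. It is not the actual Q4_K_M layout, which is more elaborate, and the sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Map floats to small signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed codes
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up weights, for illustration only.
weights = [0.12, -0.53, 0.88, -0.07, 0.31, -0.91, 0.44, 0.02]
codes, scale = quantize_block(weights)
restored = dequantize_block(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"max round-trip error: {max_error:.3f}")
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error stays within half a quantization step.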

Performance Analysis: Model and Device Comparison

Llama 3 8B vs Llama 3 70B on A100 PCIe 80GB: A Comparison

Now, let's compare Llama 3 8B with the larger Llama 3 70B model to see how the A100 PCIe 80GB handles LLMs of different scales.

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 138.31 |
| Llama 3 70B | Q4_K_M | 22.11 |

Key Takeaways:

- The A100 PCIe 80GB can handle the larger Llama 3 70B model, but token generation drops to 22.11 tokens/second, roughly six times slower than Llama 3 8B.

- This difference is expected: the larger model has far more parameters to process for every token, so it demands more compute and memory traffic.

In simpler terms: Imagine you have a small car and a large truck. The small car can navigate narrow streets and move quickly. The large truck, while powerful, is slower and needs wider roads. The A100 PCIe 80GB can handle both models, but the larger model (like the large truck) requires more resources and therefore runs more slowly.
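As a quick sanity check on the comparison above, the sketch below sets the measured slowdown against the growth in model size. The parameter counts are the nominal 8 and 70 billion from the model names, which is an approximation.

```python
# Benchmark figures from the table above (Q4_K_M on the A100 PCIe 80GB).
TPS_8B = 138.31
TPS_70B = 22.11

# Nominal parameter counts in billions, taken from the model names (approximate).
PARAMS_8B = 8
PARAMS_70B = 70

speed_ratio = TPS_8B / TPS_70B
param_ratio = PARAMS_70B / PARAMS_8B

print(f"70B is {speed_ratio:.2f}x slower than 8B")
print(f"70B has {param_ratio:.2f}x the parameters of 8B")
# 70B is 6.26x slower than 8B
# 70B has 8.75x the parameters of 8B
```

The slowdown is of the same order as the size increase, which fits the intuition that more parameters mean more work per token.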

Model and Device Comparison: Q4_K_M vs F16

We've also seen how performance varies with the quantization level. Let's look at this difference in more detail:

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 138.31 |
| Llama 3 8B | F16 | 54.56 |

Key Takeaways:

- Q4_K_M quantization (4-bit) is about 2.5 times faster than F16 (16-bit) for Llama 3 8B on the A100 PCIe 80GB (138.31 vs 54.56 tokens/second). The 4-bit weights take up far less memory, so the GPU has less data to read for each token; the price is a small loss of accuracy.

Think of it like this: you have two cameras, one that captures high-resolution images but takes longer to process, and another that captures lower-resolution images but processes them much faster. As with quantization, the choice depends on your priorities. If you need speedy results, Q4_K_M is a good choice.

Practical Recommendations: Use Cases and Workarounds

Practical Recommendations: When to Use the A100 PCIe 80GB

Based on the performance data, here's a breakdown of the A100 PCIe 80GB's potential for different use cases:

- Llama 3 8B: The A100 PCIe 80GB is a strong match for Llama 3 8B, providing fast and reliable token generation. Whether you choose Q4_K_M or F16 depends on how you prioritize speed versus accuracy.

- Llama 3 70B: While the A100 PCIe 80GB can handle Llama 3 70B, the token generation speed is significantly lower. If speed is your priority, you might consider a different device tailored for heavier workloads.

- Other LLMs: Keep in mind that this article focuses on the A100 PCIe 80GB with Llama 3 8B. Results may vary with other models.
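When weighing these use cases, a rough first check is whether the model's weights fit in the card's 80 GB of memory at all. The sketch below estimates weight size as parameters times bits per weight. The 4.8 bits per weight for Q4_K_M is an assumed average, the parameter counts are nominal, and the estimate ignores the KV cache and other runtime overhead, so real usage will be higher.

```python
VRAM_GB = 80  # memory size from the card's name

# Approximate bits stored per weight. 16 is exact for F16; 4.8 is an assumed
# average for Q4_K_M, since some tensors are kept at higher precision.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}


def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Estimated size of the weights alone, in gigabytes."""
    return params_billions * bits_per_weight / 8


for model, params in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {quant}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions, Llama 3 70B at F16 needs about 140 GB for weights alone, which is more than a single 80 GB card offers, while the other three combinations fit.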

Practical Recommendations: Workarounds for Performance Limitations

If you encounter performance bottlenecks while running Llama 3 8B on the A100 PCIe 80GB, here are some potential workarounds:

- Reduce batch size: Experiment with smaller batch sizes during inference. This can ease the strain on the GPU and potentially improve the token generation speed for each request, especially with the larger Llama 3 70B model.

- Explore other devices: Consider a faster GPU, such as the SXM version of the A100 80GB or a newer card like the NVIDIA H100, if you need to handle larger models or require substantially faster performance.

- Optimize code and libraries: Make sure you're using efficient code and libraries for running your models. This might include optimizing libraries like llama.cpp or exploring alternatives like transformers for faster inference.
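Before and after trying any of these workarounds, it helps to measure token generation speed on your own setup. Here is a minimal timing sketch. The `fake_generate` function is a hypothetical stand-in that only sleeps to mimic decoding; replace it with a call into whichever backend you actually use.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens):
    """Time one call to `generate` and return tokens produced per second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(prompt, max_tokens):
    """Hypothetical stand-in for a real backend call; not a real library API."""
    tokens = []
    for i in range(max_tokens):
        time.sleep(0.001)  # pretend each token takes about a millisecond
        tokens.append(f"tok{i}")
    return tokens


tps = measure_tokens_per_second(fake_generate, "Hello", 50)
print(f"{tps:.1f} tokens/second")
```

Running the same measurement with identical prompts and token limits before and after a change gives a fair comparison.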

FAQ

Q: What is the main difference between Q4_K_M and F16?

- A: Q4_K_M stores the model's weights at roughly 4 bits each, while F16 stores them as 16-bit floating-point numbers. Q4_K_M is faster and smaller but slightly less precise, while F16 is slower but preserves more accuracy.

Q: Can the A100 PCIe 80GB handle other LLMs besides Llama 3 8B and Llama 3 70B?

- A: Yes, it can, but the performance may vary depending on the model's size and complexity.

Q: Is the A100 PCIe 80GB the best GPU for running LLMs locally?

- A: It's a powerful GPU, but there are other options, notably the SXM version of the A100 80GB or newer data-center GPUs such as the H100. The ideal GPU depends on your specific LLM and performance requirements.

Q: What are some other factors that can impact LLM performance besides the GPU?

- A: Other factors include CPU speed, memory bandwidth, software optimization, and the specific LLM implementation.

Q: What is the best way to choose the right GPU for my LLM?

- A: Consider your specific needs. Think about:

  - The size and complexity of the LLM you want to run

  - The required token generation speed

  - The accuracy you need

  - Your budget

Keywords

NVIDIA A100 PCIe 80GB, Llama 3 8B, Llama 3 70B, LLM, Large Language Model, token generation speed, quantization, Q4_K_M, F16, performance analysis, benchmarks, practical recommendations, use cases, workarounds