Is NVIDIA A100 PCIe 80GB a Good Investment for AI Startups?

Introduction

The world of artificial intelligence (AI) is booming, with large language models (LLMs) like ChatGPT and Bard capturing the world's imagination. But for AI startups looking to develop and deploy their own applications, the choice of hardware becomes crucial. One popular option for AI workloads is the NVIDIA A100 PCIe 80GB, a data-center graphics processing unit (GPU) designed for demanding tasks like training and inference.

This article examines the capabilities of the A100 PCIe 80GB and its suitability for AI startups working with LLMs. We'll look at how the GPU performs with different Llama 3 model sizes and quantization formats, to help you decide whether it is the right investment for your AI journey.

Understanding the A100 PCIe 80GB GPU

The NVIDIA A100 PCIe 80GB is built for heavy AI workloads: it pairs 80GB of HBM2e memory with about 1.9 TB/s of memory bandwidth and 6,912 CUDA cores, plus 432 third-generation Tensor Cores. That combination makes it well suited to tasks that need both a lot of memory and a lot of compute, such as training and running large AI models.

Comparing the A100 PCIe 80GB Performance with Llama 3 Models

Now let's get into the details and see how the A100 PCIe 80GB performs with Llama 3, Meta's popular openly available LLM. We'll look at its speed with different model sizes and quantization formats. Quantization stores a model's weights at lower numerical precision, which shrinks memory use at the cost of some accuracy.

Llama 3 8B Model Performance

First, let's look at the A100 PCIe 80GB running the Llama 3 8B model. We'll compare two weight formats:

- Q4 (K, M): This corresponds to llama.cpp's Q4_K_M format, a mixed 4-bit quantization scheme that cuts the model's memory footprint to roughly a third of F16, making it possible to run the model on smaller devices.

- F16 (FP16): This format stores weights as 16-bit floating-point numbers. It serves as the unquantized baseline for inference: it needs considerably more memory than Q4 (K, M) but essentially preserves the model's original accuracy.
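To make the memory difference concrete, here is a rough Python sketch that estimates weight memory as parameters × bits per weight ÷ 8. The parameter counts are nominal (8 and 70 billion), and the ~4.8 bits per weight for Q4 (K, M) is an approximate average assumed for illustration. The sketch ignores the KV cache and runtime overhead, so real memory use is higher.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Ignores the KV cache, activations, and runtime overhead, so real usage is higher.

GB = 1e9  # decimal gigabytes

def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in decimal GB."""
    return num_params * bits_per_weight / 8 / GB

# Assumed values: nominal parameter counts, and ~4.8 bits per weight as an
# approximate average for Q4 (K, M); F16 is exactly 16 bits per weight.
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}
FORMATS = {"Q4 (K, M)": 4.8, "F16": 16.0}

for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        print(f"{model:<12} {fmt:<10} ~{weight_memory_gb(params, bits):6.1f} GB")
```

Under these assumptions, Llama 3 8B needs about 16 GB in F16 versus under 5 GB in Q4 (K, M), while Llama 3 70B needs about 140 GB in F16 versus roughly 42 GB.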

Note that this article does not compare the A100 PCIe 80GB with other devices; it focuses on how this one GPU performs with different models and quantization formats.

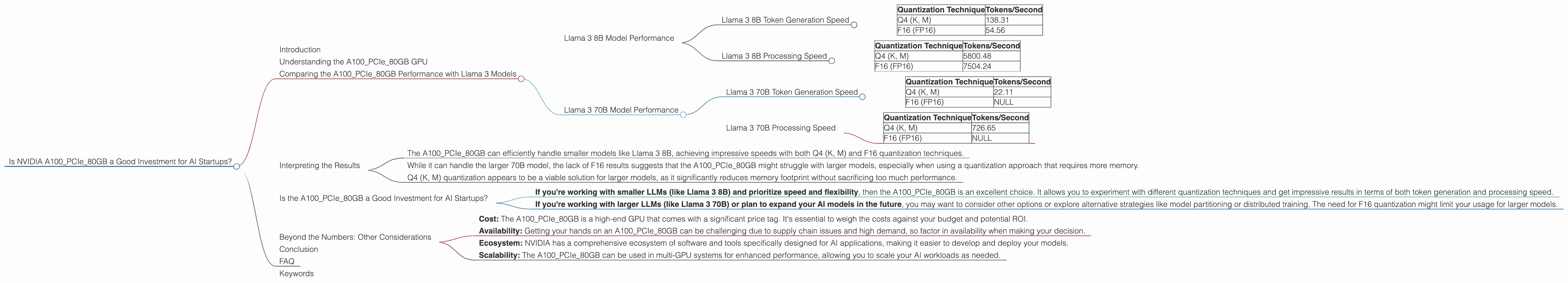

Llama 3 8B Token Generation Speed

| Quantization Technique | Tokens/Second |
|---|---|
| Q4 (K, M) | 138.31 |
| F16 (FP16) | 54.56 |

As the table shows, the A100 PCIe 80GB generates tokens quickly with Llama 3 8B: 138.31 tokens/second with Q4 (K, M), about 2.5 times the 54.56 tokens/second achieved with F16. Token generation is largely limited by how fast the weights can be read from memory, so the smaller Q4 (K, M) weights translate directly into higher speed. These benchmarks do not measure accuracy, however, so the accuracy cost of Q4 (K, M) should be checked separately for your use case.
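A simple back-of-the-envelope model illustrates the memory-bound behavior. If every generated token has to stream all the weights from memory once, generation speed cannot exceed memory bandwidth divided by weight size. The sketch below assumes NVIDIA's published ~1,935 GB/s bandwidth for this card and approximate weight sizes of 4.8 GB (Q4 (K, M)) and 16 GB (F16) for Llama 3 8B; it ignores KV-cache traffic, so the ceilings are optimistic.

```python
# Upper bound on single-stream token generation speed (decode), assuming each
# generated token reads every weight from GPU memory exactly once:
#     max tokens/s ~= memory bandwidth / weight bytes
# KV-cache reads and kernel overheads are ignored, so the bound is optimistic.

BANDWIDTH_GB_S = 1935.0  # A100 PCIe 80GB memory bandwidth per NVIDIA's datasheet

def decode_ceiling_tps(weight_gb: float, bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Bandwidth-bound ceiling on tokens per second for a given weight size."""
    return bandwidth_gb_s / weight_gb

# Llama 3 8B: (assumed weight size in GB, measured tokens/s from the table)
LLAMA3_8B = {"Q4 (K, M)": (4.8, 138.31), "F16": (16.0, 54.56)}

for fmt, (weight_gb, measured) in LLAMA3_8B.items():
    ceiling = decode_ceiling_tps(weight_gb)
    print(f"{fmt:<10} ceiling ~{ceiling:6.1f} tok/s, measured {measured:6.2f} "
          f"({measured / ceiling:.0%} of ceiling)")
```

Both measured speeds land below their ceilings, and Q4 (K, M) gains about 2.5x rather than the full ~3.3x reduction in bytes, which hints at dequantization and other per-token overheads.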

Llama 3 8B Processing Speed

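Next, let's look at prompt processing speed: how quickly the model ingests the input prompt (the prefill stage) before it starts generating output. Because all prompt tokens can be processed in parallel, this number is much higher than the generation speed.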

| Quantization Technique | Tokens/Second |
|---|---|
| Q4 (K, M) | 5800.48 |
| F16 (FP16) | 7504.24 |

For prompt processing, the A100 PCIe 80GB reaches 5800.48 tokens/second with Q4 (K, M) and 7504.24 tokens/second with F16. Here the ranking flips: F16 is about 29% faster. A likely reason is that prompt processing is limited more by compute than by memory bandwidth, so the smaller Q4 (K, M) weights bring less benefit while the extra work of dequantizing them adds overhead. This highlights the trade-off involved in quantization: Q4 (K, M) cuts memory use substantially but can reduce prompt processing throughput.
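One way to sanity-check the compute-bound explanation is to convert the prompt processing figures into arithmetic throughput. The sketch assumes the common estimate of about 2 FLOPs per parameter per token for a dense transformer forward pass, a nominal 8 billion parameters, and the 312 TFLOPS dense FP16 Tensor Core peak from NVIDIA's A100 datasheet.

```python
# Estimate the arithmetic throughput implied by the prompt processing numbers.
# Assumption: a dense transformer forward pass costs ~2 FLOPs per parameter per token.

PEAK_FP16_TFLOPS = 312.0  # A100 dense FP16 Tensor Core peak (NVIDIA datasheet)
PARAMS_8B = 8e9           # nominal parameter count for Llama 3 8B

def achieved_tflops(num_params: float, tokens_per_second: float) -> float:
    """Approximate TFLOPS sustained while processing prompt tokens."""
    return 2.0 * num_params * tokens_per_second / 1e12

PREFILL_TPS = {"Q4 (K, M)": 5800.48, "F16": 7504.24}  # measured, from the table

for fmt, tps in PREFILL_TPS.items():
    tflops = achieved_tflops(PARAMS_8B, tps)
    print(f"{fmt:<10} ~{tflops:5.1f} TFLOPS ({tflops / PEAK_FP16_TFLOPS:.0%} of FP16 peak)")

print(f"F16 vs Q4 (K, M) prefill speed: {PREFILL_TPS['F16'] / PREFILL_TPS['Q4 (K, M)']:.2f}x")
```

With these assumptions, F16 prefill works out to roughly 120 TFLOPS, a sizable fraction of peak, which is consistent with prefill being limited by arithmetic rather than by memory reads.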

Llama 3 70B Model Performance

Now let's move on to a larger LLM, the Llama 3 70B model.

Llama 3 70B Token Generation Speed

| Quantization Technique | Tokens/Second |
|---|---|
| Q4 (K, M) | 22.11 |
| F16 (FP16) | N/A (no result) |

The A100 PCIe 80GB can run the 70B model, generating 22.11 tokens/second with Q4 (K, M). There is no F16 result for the 70B model, which is expected: at 2 bytes per parameter, the weights alone take about 140GB, far more than the card's 80GB of memory.
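The memory argument can be written as a quick fit check. This is a sketch under stated assumptions: weight size is parameters × bits ÷ 8, Q4 (K, M) is taken as roughly 4.8 bits per weight, and a hypothetical 10% headroom is reserved for the KV cache, activations, and the CUDA context. The function name `fits_on_gpu` is illustrative, not part of any library.

```python
# Quick check: do a model's weights fit on one 80 GB GPU?
# Assumptions: weights take params x bits / 8 bytes, and a hypothetical 10%
# headroom is reserved for the KV cache, activations, and CUDA context.

GPU_MEMORY_GB = 80.0

def fits_on_gpu(num_params: float, bits_per_weight: float,
                gpu_gb: float = GPU_MEMORY_GB, headroom: float = 0.10) -> bool:
    """Return True if the weights plus headroom fit in gpu_gb (decimal GB)."""
    weights_gb = num_params * bits_per_weight / 8 / 1e9
    return weights_gb * (1 + headroom) <= gpu_gb

# ~4.8 bits per weight is an assumed average for Q4 (K, M)
for fmt, bits in {"Q4 (K, M)": 4.8, "F16": 16.0}.items():
    print(f"Llama 3 70B, {fmt}: fits on one card = {fits_on_gpu(70e9, bits)}")
```

Under these assumptions the 70B model needs about 46 GB with Q4 (K, M) (it fits) and about 154 GB with F16 (it does not).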

Llama 3 70B Processing Speed

| Quantization Technique | Tokens/Second |
|---|---|
| Q4 (K, M) | 726.65 |
| F16 (FP16) | N/A (no result) |

As with token generation, there is no F16 result for the 70B model, because the unquantized weights do not fit on a single A100 PCIe 80GB. With Q4 (K, M), prompt processing runs at 726.65 tokens/second.
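To translate the two throughput numbers into something closer to user experience, you can estimate the time for a single request as prompt tokens ÷ prompt processing speed plus output tokens ÷ generation speed. The sketch below plugs in the measured figures from the tables; the 1,000-token prompt and 250-token reply are hypothetical, and batching, long contexts, and serving overhead will change the real numbers.

```python
# Back-of-the-envelope latency for a single request:
#     seconds ~= prompt_tokens / prompt_speed + output_tokens / generation_speed
# Uses the single-stream figures from the tables; batching, long contexts, and
# serving overhead are ignored.

# (model, format) -> (prompt processing tok/s, token generation tok/s)
SPEEDS = {
    ("Llama 3 8B", "Q4 (K, M)"): (5800.48, 138.31),
    ("Llama 3 8B", "F16"): (7504.24, 54.56),
    ("Llama 3 70B", "Q4 (K, M)"): (726.65, 22.11),
}

def request_seconds(prompt_tokens: int, output_tokens: int,
                    prompt_tps: float, gen_tps: float) -> float:
    """Estimated wall-clock time to process the prompt and generate the reply."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps

PROMPT_TOKENS, OUTPUT_TOKENS = 1000, 250  # hypothetical request size

for (model, fmt), (pp, tg) in SPEEDS.items():
    t = request_seconds(PROMPT_TOKENS, OUTPUT_TOKENS, pp, tg)
    print(f"{model}, {fmt}: ~{t:.1f} s")
```

For this hypothetical request, generation dominates: the 70B model spends about 1.4 s on the prompt and about 11.3 s on the reply.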

Interpreting the Results

Based on these results, we can draw some conclusions about the A100 PCIe 80GB's performance with Llama 3 models:

- The A100 PCIe 80GB handles smaller models like Llama 3 8B with ease, delivering high speeds with both Q4 (K, M) and F16.

- It can run the larger 70B model, but only in quantized form: a single card does not have enough memory for the 70B model's F16 weights, and larger models or higher-precision formats will run into the same limit.

- Q4 (K, M) quantization is a practical way to run larger models on a single card: it shrinks the memory footprint to roughly a third of F16 while still delivering usable speeds (22.11 tokens/second for the 70B model).

Is the A100 PCIe 80GB a Good Investment for AI Startups?

The answer is a resounding "it depends." The A100 PCIe 80GB is a powerful GPU that can run even a 70B model when quantized, and it supports a range of quantization formats. Whether it suits your startup, however, depends on your specific needs.

- If you're working with smaller LLMs (like Llama 3 8B) and prioritize speed and flexibility, the A100 PCIe 80GB is an excellent choice. It lets you experiment with different quantization formats and delivers strong token generation and prompt processing speeds.

- If you're working with larger LLMs (like Llama 3 70B) or expect your models to grow, consider other options or strategies such as partitioning the model across multiple GPUs or distributed training. In particular, if you need F16 precision, a single 80GB card cannot hold a 70B model.
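For the partitioning route, a first estimate of how many 80GB cards are needed is the weight size divided by the memory available per card, rounded up. The sketch assumes an even split of the weights across GPUs (as with tensor or pipeline parallelism) and a hypothetical 90% of each card usable for weights; the KV cache for long contexts or large batches would need additional room.

```python
import math

def gpus_needed(num_params: float, bits_per_weight: float,
                gpu_gb: float = 80.0, usable_fraction: float = 0.9) -> int:
    """Minimum number of GPUs whose combined usable memory holds the weights.

    Assumes the weights are split evenly across cards and that only
    `usable_fraction` (a hypothetical 90%) of each card is available for them.
    """
    weights_gb = num_params * bits_per_weight / 8 / 1e9
    return math.ceil(weights_gb / (gpu_gb * usable_fraction))

print("Llama 3 70B, F16:", gpus_needed(70e9, 16.0), "x 80GB GPUs")
print("Llama 3 70B, ~4.8-bit Q4 (K, M):", gpus_needed(70e9, 4.8), "x 80GB GPUs")
```

Under these assumptions, serving Llama 3 70B in F16 takes at least two 80GB cards, while the quantized version fits on one.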

It's important to consider not only the GPU itself but also your overall AI infrastructure and the capabilities of your development team. For instance, you might need specialized expertise to handle the complex aspects of quantization and model optimization.

Beyond the Numbers: Other Considerations

While the performance numbers are crucial, it's essential to consider other factors when choosing hardware for AI startups:

- Cost: The A100 PCIe 80GB is a high-end GPU with a significant price tag. Weigh its cost against your budget and expected return on investment (ROI).

- Availability: Getting your hands on an A100 PCIe 80GB can be challenging due to supply chain issues and high demand, so factor availability into your decision.

- Ecosystem: NVIDIA has a comprehensive ecosystem of software and tools specifically designed for AI applications, making it easier to develop and deploy your models.

- Scalability: The A100 PCIe 80GB can be used in multi-GPU systems for higher performance, allowing you to scale your AI workloads as needed.
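To weigh cost against ROI, it helps to express a GPU's hourly price as cost per million generated tokens. The $2.00/hour figure below is a hypothetical placeholder, not a quoted price; substitute your own purchase amortization or cloud rate. The throughput values are the single-stream generation speeds from the tables, so serving many requests in parallel (batching) would lower the cost per token.

```python
# Convert an hourly GPU price and a generation speed into cost per million tokens.

def cost_per_million_tokens(hourly_price_usd: float, tokens_per_second: float) -> float:
    """Dollars per one million generated tokens at a constant generation speed."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_price_usd / tokens_per_hour * 1_000_000

HOURLY_PRICE_USD = 2.00  # hypothetical placeholder, not a quoted price

# Single-stream token generation speeds from the tables above
for label, tps in {"Llama 3 8B, Q4 (K, M)": 138.31,
                   "Llama 3 8B, F16": 54.56,
                   "Llama 3 70B, Q4 (K, M)": 22.11}.items():
    print(f"{label}: ~${cost_per_million_tokens(HOURLY_PRICE_USD, tps):.2f} per million tokens")
```

At this placeholder rate, the 8B model in Q4 (K, M) comes to about $4 per million tokens, versus about $25 for the 70B model.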

Conclusion

The NVIDIA A100 PCIe 80GB is an impressive GPU that can be a valuable asset for AI startups working with LLMs. Its performance with smaller models like Llama 3 8B is strong, and Q4 (K, M) quantization lets it run larger models such as Llama 3 70B on a single card. However, consider the cost, availability, and your specific needs before making a decision.

FAQ

Q: What is quantization? A: Quantization is a technique that reduces the size of a machine learning model while trying to preserve its accuracy. It converts the numbers used in a model's calculations from standard floating-point formats (like 32-bit or 16-bit) to smaller representations (like 8-bit or 4-bit integers). This makes the model more efficient, allowing it to run on less powerful hardware or with faster inference.
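Here is a deliberately simplified sketch of the core idea: symmetric 4-bit quantization of one block of weights with a single shared scale. It is not llama.cpp's actual Q4_K_M layout, which is more elaborate (blocks grouped into super-blocks, with quantized scales), but it shows how floats become small integers plus a scale factor, and why the round trip introduces a bounded error. The example weights are made up.

```python
# Simplified symmetric 4-bit quantization of one block of weights.
# Not llama.cpp's Q4_K_M layout; this only illustrates the basic idea.

def quantize_4bit(block):
    """Map floats to integers in the signed 4-bit range [-8, 7] with one shared scale."""
    max_abs = max(abs(v) for v in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest magnitude maps to +/-7
    q = [max(-8, min(7, round(v / scale))) for v in block]
    return q, scale

def dequantize(q, scale):
    """Recover approximate floats from the 4-bit integers and the scale."""
    return [x * scale for x in q]

weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.0]  # made-up example values
q, scale = quantize_4bit(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit codes:", q)
print(f"scale = {scale:.4f}, max round-trip error = {max_error:.4f}")
```

Storing 4-bit codes plus one scale per block is what shrinks memory; the rounding error (at most half the scale per value in this scheme) is where the accuracy loss comes from.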

Q: How does the A100 PCIe 80GB compare to other GPUs? A: The A100 PCIe 80GB is a high-end GPU that ranks among the best for AI workloads. However, other GPUs, such as the NVIDIA A100 SXM4 40GB and the AMD Instinct MI250X, offer similar or even higher performance in specific tasks. This article focuses on the A100 PCIe 80GB, but you can explore other GPUs and compare their specifications and performance based on your specific needs.

Q: What are some other considerations for choosing hardware for AI startups? A: Aside from performance and cost, other factors to consider include the availability of software and tools, the size of your development team, and your experience with AI development. You may need to factor in the costs of setting up a cloud infrastructure or purchasing additional hardware to support your AI workloads.

Q: Is working with open-source models like Llama 3 a good option for AI startups? A: Open-source LLMs like Llama 3 offer a cost-effective and flexible option for AI exploration. They allow you to customize and train models according to your specific needs, and their open nature fosters a vibrant community of developers who contribute to ongoing development and research.

Keywords

NVIDIA A100 PCIe 80GB, AI startup, LLMs, Llama 3, large language models, GPU, performance, token generation, processing speed, quantization, Q4, F16, AI infrastructure, cost, availability, scalability, open-source, AI development