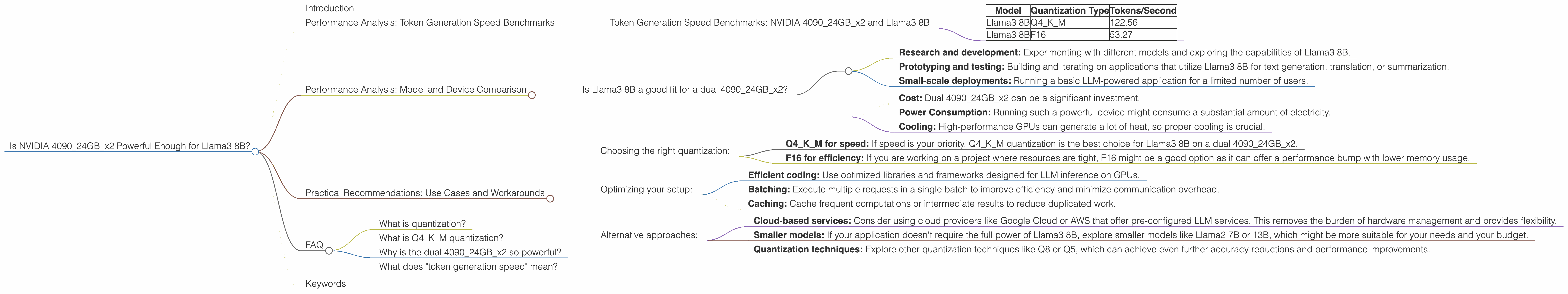

Is NVIDIA 4090 24GB x2 Powerful Enough for Llama3 8B?

Introduction

The world of large language models (LLMs) is abuzz with excitement. These powerful AI systems are changing how we interact with computers, but running them locally can feel like a race against time and resources. Imagine trying to fit a giant whale into a tiny bathtub - that's what it can feel like trying to squeeze the latest Llama3 8B onto your average PC.

So, is a dual NVIDIA 4090 24GB setup enough to tame this beast? Let's dive into the depths of performance and see if this hardware configuration can deliver the speed and efficiency you need for local LLM experiments.

Performance Analysis: Token Generation Speed Benchmarks

To understand how well the dual NVIDIA 4090 24GB setup handles Llama3 8B, we need to look at token generation speed. This is the key metric that determines how quickly your LLM can generate text. Think of tokens as building blocks for words: more tokens generated per second means faster responses and a smoother user experience.

Token Generation Speed Benchmarks: NVIDIA 4090 24GB x2 and Llama3 8B

| Model | Quantization / Precision | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |

Observations:

- The dual 4090 24GB setup delivers impressive token generation speeds for Llama3 8B, especially with Q4_K_M quantization, which reaches 122.56 tokens per second. In practice, that means fast response times and smooth text generation.

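- F16 is about 2.3 times slower (53.27 vs. 122.56 tokens per second). This is expected: F16 is not a compressed format but 16-bit half precision, which preserves more of the model's accuracy. Each weight takes over three times as many bytes as in Q4_K_M, and because token generation is limited largely by how fast weights can be read from GPU memory, more bytes per weight means fewer tokens per second.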
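The speed gap can be sanity-checked with a back-of-envelope estimate. The sketch below assumes decoding is memory-bandwidth bound, so each generated token streams every weight from VRAM once. It uses one card's peak bandwidth (about 1008 GB/s for an RTX 4090), on the assumption that with a layer split the two cards take turns on each token rather than working on it simultaneously. The parameter count (8.03B) and Q4_K_M's average of about 4.9 bits per weight are approximations, not measured values.

```python
# Back-of-envelope ceiling on single-stream decode speed for Llama3 8B.
# Assumption: decoding is memory-bandwidth bound, i.e. each new token
# requires reading every weight from VRAM once.

PARAMS = 8.03e9          # approximate Llama3 8B parameter count (assumption)
BANDWIDTH_GB_S = 1008    # peak memory bandwidth of one RTX 4090, in GB/s

# Average bits per weight; the Q4_K_M value is an approximation (assumption).
BITS_PER_WEIGHT = {"Q4_K_M": 4.9, "F16": 16.0}

# Measured speeds from the benchmark table above.
MEASURED_TOK_S = {"Q4_K_M": 122.56, "F16": 53.27}


def weight_gb(bits_per_weight: float) -> float:
    """Total weight size in gigabytes (1 GB = 1e9 bytes)."""
    return PARAMS * bits_per_weight / 8 / 1e9


def max_tokens_per_second(bits_per_weight: float) -> float:
    """Bandwidth ceiling: bytes available per second / bytes read per token."""
    return BANDWIDTH_GB_S / weight_gb(bits_per_weight)


for name, bpw in BITS_PER_WEIGHT.items():
    ceiling = max_tokens_per_second(bpw)
    measured = MEASURED_TOK_S[name]
    print(f"{name}: {weight_gb(bpw):.1f} GB of weights, "
          f"ceiling ~{ceiling:.0f} tok/s, measured {measured} tok/s "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 63 tokens per second for F16 and about 205 for Q4_K_M. Both measured numbers sit below their ceilings, and most of the gap between the two formats follows from how many bytes each token has to read.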

Performance Analysis: Model and Device Comparison

It's always helpful to compare performance across different devices, especially when dealing with resource-hungry LLMs.

Is Llama3 8B a good fit for a dual 4090 24GB setup?

Yes, the dual 4090 24GB setup is powerful enough for Llama3 8B, particularly if you need high-speed token generation. It's a good combination for tasks like:

- Research and development: Experimenting with different models and exploring the capabilities of Llama3 8B.

- Prototyping and testing: Building and iterating on applications that utilize Llama3 8B for text generation, translation, or summarization.

- Small-scale deployments: Running a basic LLM-powered application for a limited number of users.

However, performance is not the only factor. You should also weigh:

- Cost: Two 4090 24GB cards are a significant investment.

- Power Consumption: Each RTX 4090 is rated at up to 450 W, so a dual-card system can draw a substantial amount of electricity under load.

- Cooling: High-performance GPUs can generate a lot of heat, so proper cooling is crucial.
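To put the power point in numbers, here is a rough worst-case estimate of energy per million generated tokens. It assumes both cards draw their full rated 450 W for the entire run, which overstates typical single-stream decoding, and it ignores the CPU and the rest of the system; treat the result as an upper bound, not a measurement.

```python
# Worst-case energy estimate for text generation on two RTX 4090s.
# Assumption: both cards draw their full rated 450 W for the whole run.
# Real single-stream decoding usually draws less, and the CPU, fans and
# the rest of the system are not counted.

RATED_WATTS_PER_CARD = 450
NUM_CARDS = 2
JOULES_PER_KWH = 3_600_000


def kwh_per_million_tokens(tokens_per_second: float,
                           watts: float = RATED_WATTS_PER_CARD * NUM_CARDS) -> float:
    """Energy (kWh) to generate one million tokens at a constant speed."""
    seconds = 1_000_000 / tokens_per_second
    return watts * seconds / JOULES_PER_KWH


# Speeds from the benchmark table above.
for name, tok_s in {"Q4_K_M": 122.56, "F16": 53.27}.items():
    print(f"{name}: at most ~{kwh_per_million_tokens(tok_s):.2f} kWh per million tokens")
```

With these assumptions, Q4_K_M comes out at no more than about 2.0 kWh per million tokens and F16 at about 4.7 kWh, since the slower format keeps the cards busy for longer.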

Important note: These figures come from specific benchmarks and may differ depending on model settings, software libraries, and your workload.

Practical Recommendations: Use Cases and Workarounds

Choosing the right quantization:

- Q4_K_M for speed: If speed is your priority, Q4_K_M is the better of the two formats tested for Llama3 8B on a dual 4090 24GB setup.

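- F16 for fidelity: If output quality matters more than speed, F16 keeps the weights at 16-bit precision. It is not the lighter option: it needs over three times the memory of Q4_K_M and generated tokens about 2.3 times slower in these benchmarks, but Llama3 8B in F16 (roughly 16 GB of weights) still fits on a single 24 GB card.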
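Here is a rough sketch of the memory arithmetic behind that choice: weights plus a full-context KV cache. The architecture figures (32 layers, 8 key/value heads, head dimension 128, 8,192-token context) are assumptions taken from the published Llama3 8B configuration, the 4.9 bits per weight for Q4_K_M is approximate, and runtime overhead such as activations and the CUDA context is not modeled.

```python
# Rough VRAM estimate for Llama3 8B: weights plus a full-context KV cache.
# Assumptions: ~8.03B parameters; 32 layers, 8 key/value heads (grouped-query
# attention), head dimension 128; KV cache stored in 16-bit; runtime overhead
# (activations, CUDA context) is not modeled.

PARAMS = 8.03e9
N_LAYERS = 32
N_KV_HEADS = 8
HEAD_DIM = 128
GIB = 1024 ** 3
VRAM_PER_CARD_GIB = 24   # one RTX 4090


def weights_gib(bits_per_weight: float) -> float:
    return PARAMS * bits_per_weight / 8 / GIB


def kv_cache_gib(context_tokens: int, bytes_per_value: int = 2) -> float:
    # 2 = one key vector and one value vector per layer, per KV head, per token.
    bytes_per_token = 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * bytes_per_value
    return context_tokens * bytes_per_token / GIB


CONTEXT = 8192   # Llama3's native context length
for name, bpw in {"Q4_K_M": 4.9, "F16": 16.0}.items():   # 4.9 bpw is approximate
    total = weights_gib(bpw) + kv_cache_gib(CONTEXT)
    print(f"{name}: ~{total:.1f} GiB, fits on one card: {total < VRAM_PER_CARD_GIB}")
```

With these numbers, F16 needs roughly 16 GiB and Q4_K_M under 6 GiB at the full context, before runtime overhead, so either format fits on one of the two cards.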

Optimizing your setup:

- Efficient coding: Use optimized libraries and frameworks designed for LLM inference on GPUs.

- Batching: Execute multiple requests in a single batch to improve efficiency and minimize communication overhead.

- Caching: Cache frequent computations or intermediate results to reduce duplicated work.
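Batching and caching can be combined in a few lines. The sketch below deduplicates incoming prompts, skips any already answered, and sends the rest to the model in fixed-size batches. `generate_batch` is a hypothetical placeholder for whatever batched generation call your inference runtime provides; here it only echoes the prompts so the example runs.

```python
from typing import Dict, Iterable, Iterator, List

CALLS = {"generate_batch": 0}   # counts model invocations for the demo


def generate_batch(prompts: List[str]) -> List[str]:
    """Hypothetical stand-in for a real batched inference call.

    Replace with your runtime's batch API; here it just echoes the prompts.
    """
    CALLS["generate_batch"] += 1
    return [f"<completion for: {p}>" for p in prompts]


def batched(items: Iterable[str], batch_size: int) -> Iterator[List[str]]:
    """Yield lists of at most batch_size items, preserving order."""
    batch: List[str] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        yield batch


def serve(prompts: List[str], cache: Dict[str, str], batch_size: int = 8) -> List[str]:
    """Answer prompts, reusing cached completions and batching the rest."""
    # Deduplicate while keeping order, and skip prompts already in the cache.
    pending = [p for p in dict.fromkeys(prompts) if p not in cache]
    for batch in batched(pending, batch_size):
        for prompt, completion in zip(batch, generate_batch(batch)):
            cache[prompt] = completion
    return [cache[p] for p in prompts]


cache: Dict[str, str] = {}
print(serve(["hi", "bye", "hi", "thanks"], cache, batch_size=2))
print(serve(["hi", "thanks"], cache), CALLS)
```

Exact-match caching of whole prompts only makes sense when generation is deterministic (for example, greedy decoding), and a real service would bound the cache size. Many inference runtimes also offer their own form of caching, such as reusing the KV cache for a shared prompt prefix.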

Alternative approaches:

- Cloud-based services: Consider using cloud providers like Google Cloud or AWS that offer pre-configured LLM services. This removes the burden of hardware management and provides flexibility.

- Other model sizes: If your application doesn't need Llama3 8B specifically, other models such as Llama2 7B might suit your needs and budget. Note that Llama2 13B is larger than Llama3 8B, so it needs more memory and will run slower on the same hardware.

- Other quantization levels: Formats such as Q5_K_M or Q8_0 use more bits per weight than Q4_K_M, so they sit between Q4_K_M and F16: accuracy closer to F16, but typically larger and slower than Q4_K_M.

FAQ

What is quantization?

Quantization is like simplifying a recipe by using fewer ingredients and smaller portions. For LLMs, we store the model's weights, the numbers that determine the model's behavior, at lower numeric precision, for example 4 bits instead of 16. This makes the model smaller and faster, but it can slightly reduce accuracy.
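A toy example makes the idea concrete. The sketch below applies simple symmetric 4-bit quantization to one block of 32 made-up weights: a single scale is shared by the block and each weight becomes a small integer. This is a simplified illustration, similar in spirit to llama.cpp's basic 4-bit block formats but not the actual Q4_K_M algorithm.

```python
import random
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Symmetric quantization: one float scale per block, small signed ints per weight."""
    qmax = 2 ** (bits - 1) - 1                           # 7 for 4-bit signed ints
    scale = max(abs(w) for w in weights) / qmax or 1.0   # avoid a zero scale
    q = [max(-qmax - 1, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q: List[int], scale: float) -> List[float]:
    """Approximate reconstruction of the original weights."""
    return [v * scale for v in q]


random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(32)]   # 32 made-up weights
q, scale = quantize_block(block)
restored = dequantize_block(q, scale)
max_err = max(abs(a - b) for a, b in zip(block, restored))

# If the scale were stored as a 16-bit float, the block would take
# 32 x 4 + 16 = 144 bits, i.e. 4.5 bits per weight instead of 16.
print("ints:", q[:8], "scale:", round(scale, 5), "max error:", round(max_err, 5))
```

Every weight is recovered to within half a scale step, which is the accuracy cost the FAQ answer above refers to.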

What is Q4_K_M quantization?

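Q4_K_M is one of llama.cpp's "k-quant" formats, used in GGUF model files. "Q4" means the weights are stored mostly at 4 bits, "K" marks the k-quant family, in which weights are grouped into blocks that share scale factors, and "M" stands for the medium variant, which keeps some sensitive tensors at higher precision. As a result, the average is somewhat above 4 bits per weight.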
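The cost of those shared scale factors shows up in simple storage arithmetic for Q4_K, the 4-bit k-quant type that Q4_K_M mostly uses. The layout below (super-blocks of 256 weights split into 8 blocks of 32, a 6-bit scale and 6-bit minimum per block, and two 16-bit values per super-block) follows llama.cpp's description of its k-quants; treat it as a summary rather than the exact on-disk structure.

```python
# Bits per weight for one Q4_K super-block, following the layout described
# for llama.cpp's k-quants: 256 weights split into 8 blocks of 32.
WEIGHTS_PER_SUPERBLOCK = 256
BLOCKS_PER_SUPERBLOCK = 8

quant_bits = WEIGHTS_PER_SUPERBLOCK * 4          # 4-bit quantized weights
block_bits = BLOCKS_PER_SUPERBLOCK * (6 + 6)     # 6-bit scale + 6-bit min per block
superblock_bits = 2 * 16                         # fp16 super-block scale and min

bits_per_weight = (quant_bits + block_bits + superblock_bits) / WEIGHTS_PER_SUPERBLOCK
print(bits_per_weight)  # 4.5
```

The "M" variant stores some tensors in a higher-precision k-quant type, which is why a Q4_K_M file ends up averaging a bit more than 4.5 bits per weight overall.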

Why is the dual 4090 24GB setup so powerful?

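The NVIDIA GeForce RTX 4090 is a high-performance GPU with 24 GB of VRAM, designed for demanding tasks like gaming and AI. Two of them provide 48 GB of combined VRAM and a massive amount of parallel processing power. For Llama3 8B specifically, a single card already holds the whole model, so the second card mainly adds headroom for larger models or for serving more requests at once.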

What does "token generation speed" mean?

Token generation speed refers to how fast the LLM can produce tokens, which are the building blocks of words. Higher token generation speeds translate to faster responses and smoother text generation.
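To translate the benchmark numbers into waiting time, here is a small sketch that converts tokens per second into milliseconds per token and total generation time for a reply. The 500-token reply length is an assumed example, and prompt processing time is not included.

```python
def ms_per_token(tokens_per_second: float) -> float:
    """Average time between generated tokens, in milliseconds."""
    return 1000 / tokens_per_second


def seconds_for_reply(reply_tokens: int, tokens_per_second: float) -> float:
    """Generation time for a reply; prompt processing time is not included."""
    return reply_tokens / tokens_per_second


REPLY_TOKENS = 500   # assumed reply length, for illustration only
for name, tok_s in {"Q4_K_M": 122.56, "F16": 53.27}.items():
    print(f"{name}: {ms_per_token(tok_s):.1f} ms/token, "
          f"{seconds_for_reply(REPLY_TOKENS, tok_s):.1f} s for {REPLY_TOKENS} tokens")
```

At the measured speeds, that works out to roughly 4 seconds with Q4_K_M versus about 9 seconds with F16 for a 500-token answer.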

Keywords

Llama3, 8B, NVIDIA, 4090, GPU, Token generation speed, LLM, Quantization, Q4_K_M, F16, Performance, Benchmark, Inference, GPU-based LLM inference, Local LLMs.