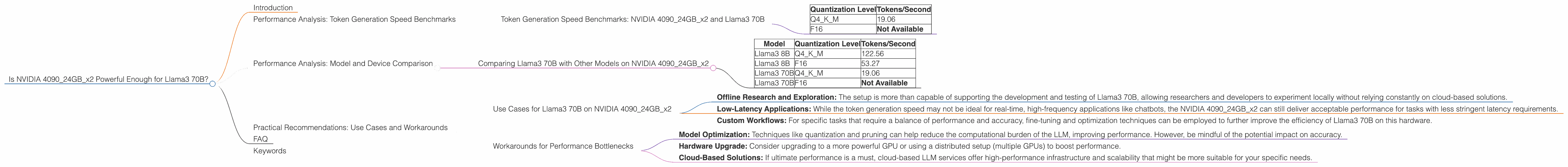

Is NVIDIA 4090 24GB x2 Powerful Enough for Llama3 70B?

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement, and rightfully so! These powerful AI systems are revolutionizing various industries, from natural language processing to content creation and beyond. But running these behemoths locally on your machine requires serious hardware muscle.

This article dives deep into the performance of the NVIDIA 4090 24GB x2 configuration (two GPUs with 24 GB of VRAM each, 48 GB in total), a powerhouse of a setup, when tasked with handling the gargantuan Llama3 70B model. We'll look at quantization levels, analyze token generation speeds, and answer the burning question: can this pair of gaming cards handle the mighty Llama3 70B?

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 4090 24GB x2 and Llama3 70B

Let's get right to the heart of the matter: token generation speed. This metric is crucial when it comes to real-world applications of LLMs. It tells us how quickly the model can churn out responses to your prompts, directly impacting the user experience.

Here are the token generation speed benchmarks for the NVIDIA 4090 24GB x2 setup paired with Llama3 70B:

| Quantization Level | Tokens/Second |
|---|---|
| Q4_K_M | 19.06 |
| F16 | Not available |

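Note: No F16 result is available for Llama3 70B on the NVIDIA 4090 24GB x2 setup. This is most likely because, at 16 bits (2 bytes) per weight, the 70 billion parameters alone occupy about 140 GB, roughly three times the 48 GB of combined VRAM.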
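The memory arithmetic behind that note can be sketched in a few lines: multiply the parameter count by the bits stored per weight. The ~4.8 bits per weight used for Q4_K_M is an approximate assumption (real model files vary), the parameter counts are the nominal 8B and 70B, and the estimate ignores the KV cache and runtime buffers, so actual VRAM use is higher.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumptions: decimal GB (1e9 bytes); Q4_K_M averages ~4.8 bits/weight
# (approximate); KV cache and runtime buffers are NOT included.

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.8,  # approximate average, assumption
}

TOTAL_VRAM_GB = 2 * 24  # two NVIDIA 4090 cards with 24 GB each


def weight_size_gb(n_params: float, fmt: str) -> float:
    """Return the approximate size of the weights in decimal gigabytes."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


for model, n_params in [("Llama3 8B", 8e9), ("Llama3 70B", 70e9)]:
    for fmt in BITS_PER_WEIGHT:
        size = weight_size_gb(n_params, fmt)
        verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB -> {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions the 70B F16 weights (~140 GB) need nearly three times the available VRAM, while the Q4_K_M weights (~42 GB) fit with only a few gigabytes to spare.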

What does this mean?

The NVIDIA 4090 24GB x2 generates 19.06 tokens per second with Llama3 70B using Q4_K_M quantization. While this is a respectable number, it's crucial to understand the context:

- Smaller Models, Higher Speeds: With a smaller LLM like Llama3 8B, this setup generates 122.56 tokens/second with Q4_K_M and 53.27 tokens/second at F16 precision.

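- Quantization Trade-offs: Quantization compresses a model by storing its weights with fewer bits. This reduces memory use and usually speeds up generation (on Llama3 8B, Q4_K_M is more than twice as fast as F16), but it can cost some accuracy. For Llama3 70B only the Q4_K_M result is available, so no direct speed comparison with F16 is possible.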

- Real-World Implications: These figures measure raw generation throughput on this hardware. Whether about 19 tokens/second is adequate depends on how long your responses are and how much latency your users will tolerate.
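To relate throughput to latency, the sketch below converts the measured tokens/second into the time needed to generate one response. The 500-token response length is a hypothetical example, and prompt processing (time to first token) is ignored, so real wall-clock times will be somewhat longer.

```python
# Measured generation speeds from the benchmarks above (tokens/second).
MEASURED_TPS = {
    ("Llama3 8B", "Q4_K_M"): 122.56,
    ("Llama3 8B", "F16"): 53.27,
    ("Llama3 70B", "Q4_K_M"): 19.06,
}


def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a constant rate (prompt processing ignored)."""
    return n_tokens / tokens_per_second


RESPONSE_TOKENS = 500  # hypothetical response length

for (model, fmt), tps in MEASURED_TPS.items():
    secs = generation_seconds(RESPONSE_TOKENS, tps)
    print(f"{model} {fmt}: ~{secs:.1f} s for {RESPONSE_TOKENS} tokens")
```

At 19.06 tokens/second, a 500-token answer from Llama3 70B takes about 26 seconds, compared with about 4 seconds for Llama3 8B at Q4_K_M.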

Performance Analysis: Model and Device Comparison

Comparing Llama3 70B with Other Models on NVIDIA 4090 24GB x2

How does the Llama3 70B model stack up against other Llama models on the NVIDIA 4090 24GB x2? Let's examine the following table:

| Model | Quantization Level | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |
| Llama3 70B | Q4_K_M | 19.06 |
| Llama3 70B | F16 | Not available |

Key Takeaways:

- Scaling Up: At Q4_K_M, Llama3 70B generates tokens about 6.4 times more slowly than Llama3 8B (19.06 vs. 122.56 tokens/second), highlighting the computational demands of larger models.

- Quantization Impact: On Llama3 8B, Q4_K_M is about 2.3 times faster than F16, but F16 may be preferable for use cases where accuracy is the priority.

- Hardware Limitations: While the NVIDIA 4090 24GB x2 is a powerful setup, it might not be the ideal choice for running the largest LLMs if real-time performance is a critical factor.
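The size of these gaps can be computed directly from the table. This sketch only takes ratios of the measured tokens/second values; no new measurements are involved.

```python
# Tokens/second from the comparison table above.
tps = {
    ("Llama3 8B", "Q4_K_M"): 122.56,
    ("Llama3 8B", "F16"): 53.27,
    ("Llama3 70B", "Q4_K_M"): 19.06,
}

# How much slower is 70B than 8B at the same quantization level?
scale_slowdown = tps[("Llama3 8B", "Q4_K_M")] / tps[("Llama3 70B", "Q4_K_M")]

# How much faster is Q4_K_M than F16 for the same 8B model?
quant_speedup = tps[("Llama3 8B", "Q4_K_M")] / tps[("Llama3 8B", "F16")]

print(f"70B vs 8B (Q4_K_M): {scale_slowdown:.2f}x slower")
print(f"Q4_K_M vs F16 (8B): {quant_speedup:.2f}x faster")
```

The 70B model has about 8.75 times as many parameters as the 8B model and runs about 6.4 times slower, while Q4_K_M runs about 2.3 times faster than F16 on the 8B model.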

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on NVIDIA 4090 24GB x2

The NVIDIA 4090 24GB x2 configuration is suitable for running the Llama3 70B model for certain use cases:

- Offline Research and Exploration: The setup is more than capable of supporting the development and testing of Llama3 70B, allowing researchers and developers to experiment locally without relying constantly on cloud-based solutions.

- Latency-Tolerant Applications: While about 19 tokens/second may not be ideal for real-time, high-frequency applications like chatbots, the NVIDIA 4090 24GB x2 can still deliver acceptable performance for tasks with less stringent latency requirements.

- Custom Workflows: For tasks that require a specific balance of speed and accuracy, fine-tuning (to improve accuracy on your task) and inference optimizations (to improve speed) can help you get more out of Llama3 70B on this hardware.

Workarounds for Performance Bottlenecks

If the NVIDIA 4090 24GB x2 setup falls short of your performance expectations, there are a few workarounds you can explore:

- Model Optimization: Techniques like quantization and pruning can help reduce the computational burden of the LLM, improving performance. However, be mindful of the potential impact on accuracy.

- Hardware Upgrade: Consider GPUs with more VRAM and higher memory bandwidth, or add more GPUs to the existing pair, to make room for larger models or higher-precision formats and to boost performance.

- Cloud-Based Solutions: If ultimate performance is a must, cloud-based LLM services offer high-performance infrastructure and scalability that might be more suitable for your specific needs.
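Pruning, listed above next to quantization, removes weights that contribute little to the output. The sketch below shows unstructured magnitude pruning on a plain list of weights via a hypothetical helper, `magnitude_prune`; it illustrates the idea only. Zeroed weights save memory or time only if the storage format and inference kernels actually exploit the sparsity.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the given fraction of weights with the smallest absolute value.

    Hypothetical helper for illustration: unstructured magnitude pruning.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Indices of the n_prune weights with the smallest magnitudes.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_prune = set(order[:n_prune])
    return [0.0 if i in to_prune else w for i, w in enumerate(weights)]


weights = [0.8, -0.05, 0.3, -0.9, 0.01, 0.4]
print(magnitude_prune(weights, 0.5))  # [0.8, 0.0, 0.0, -0.9, 0.0, 0.4]
```

At 50% sparsity the three smallest-magnitude weights (0.01, -0.05 and 0.3) are set to zero; as with quantization, a higher sparsity reduces the work further but risks more accuracy loss.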

FAQ

1. What is Quantization?

Quantization is a technique used to reduce the size of a neural network model by representing the weights and biases of the model using fewer bits. This results in a lighter model that takes up less memory and can be run on devices with limited resources. Think of it like compressing a high-resolution photo: it's not as detailed, but it's much smaller and takes up less space.
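To make the compression analogy concrete, here is a simplified sketch of symmetric 4-bit block quantization: each block of weights is stored as small signed integers plus one floating-point scale. This illustrates the general idea only. llama.cpp-style K-quant formats such as Q4_K_M use a more elaborate scheme with nested scales, and the block size of 4 here is chosen purely for readability.

```python
def quantize_block(block: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Symmetric absmax quantization of one block to signed `bits`-bit integers."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed values
    absmax = max(abs(w) for w in block)
    scale = absmax / qmax if absmax > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in block]
    return scale, q


def dequantize_block(scale: float, q: list[int]) -> list[float]:
    """Recover approximate weights from the integer codes and the block scale."""
    return [scale * v for v in q]


BLOCK_SIZE = 4  # illustrative; real formats use larger blocks

weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.90, -0.64, 0.02]
restored = []
for start in range(0, len(weights), BLOCK_SIZE):
    scale, q = quantize_block(weights[start:start + BLOCK_SIZE])
    print(f"scale={scale:.4f} codes={q}")
    restored.extend(dequantize_block(scale, q))

max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"max reconstruction error: {max_err:.4f}")
```

Each weight now needs 4 bits instead of 16, plus a small per-block overhead for the scale; the reconstruction error is at most half a scale step per weight, which is the "less detail" in the photo analogy.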

2. How does GPU power impact LLM performance?

Your GPUs directly affect how fast a local LLM runs. VRAM capacity decides which models and precision formats fit at all. Because generating each token requires reading the model's weights from memory, memory bandwidth largely determines token generation speed, while compute cores matter most when processing long prompts.
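A common rule of thumb turns this into a quick estimate: if every generated token reads all of the weights once, then memory bandwidth divided by weight size gives an upper bound on tokens/second. The sketch assumes the 4090's published memory bandwidth of about 1,008 GB/s, roughly 42 GB of Llama3 70B Q4_K_M weights (assuming ~4.8 bits per weight), and a layer split across the two cards. It ignores KV-cache reads and compute time, so it is an optimistic ceiling rather than a prediction.

```python
def max_tokens_per_second(weight_gb: float, bandwidth_gb_s: float) -> float:
    """Bandwidth-bound ceiling: each generated token reads all weights once."""
    return bandwidth_gb_s / weight_gb


RTX_4090_BANDWIDTH_GB_S = 1008.0  # approximate published spec, assumption
LLAMA3_70B_Q4_K_M_GB = 42.0       # ~70e9 weights * ~4.8 bits / 8, assumption

# Assumption: with a layer split, each card reads its half of the weights in
# turn, so the effective bandwidth per token is roughly that of one card.
ceiling = max_tokens_per_second(LLAMA3_70B_Q4_K_M_GB, RTX_4090_BANDWIDTH_GB_S)
measured = 19.06  # from the benchmark table

print(f"bandwidth ceiling: ~{ceiling:.0f} tokens/s, measured: {measured} tokens/s")
print(f"measured / ceiling: {measured / ceiling:.0%}")
```

The measured 19.06 tokens/second is about 80% of this rough ceiling, which suggests that memory bandwidth, rather than raw compute, is the main limit on generation speed for this setup.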

3. Is the NVIDIA 4090 24GB x2 suitable for all LLMs?

While the NVIDIA 4090 24GB x2 is a powerful setup, it's not a one-size-fits-all solution. For the most demanding models, it may be necessary to explore more specialized hardware configurations or utilize cloud-based resources.

4. How do I choose the right quantization level?

The choice of quantization level depends on the specific application requirements. If accuracy is paramount, a higher-precision format like F16 may be preferred, provided the model fits in memory. If speed or memory is the constraint, a more aggressive quantization like Q4_K_M is usually the better fit, at the cost of some accuracy.
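One way to apply this rule is to pick the highest-precision format that still meets a throughput target. The sketch below uses the measured numbers from the benchmark tables; the function `pick_format` and the precision ordering are illustrative assumptions, and a real decision should also weigh accuracy measured on your own task.

```python
from typing import Optional

# Measured tokens/second from the benchmark tables (missing entries = no data).
MEASURED_TPS = {
    "Llama3 8B": {"F16": 53.27, "Q4_K_M": 122.56},
    "Llama3 70B": {"Q4_K_M": 19.06},
}

# Formats ordered from highest precision to most aggressive quantization.
PRECISION_ORDER = ["F16", "Q4_K_M"]


def pick_format(model: str, min_tokens_per_second: float) -> Optional[str]:
    """Return the most precise format meeting the speed target, or None."""
    for fmt in PRECISION_ORDER:
        tps = MEASURED_TPS[model].get(fmt)
        if tps is not None and tps >= min_tokens_per_second:
            return fmt
    return None


print(pick_format("Llama3 8B", 30))   # F16
print(pick_format("Llama3 8B", 100))  # Q4_K_M
print(pick_format("Llama3 70B", 15))  # Q4_K_M
print(pick_format("Llama3 70B", 30))  # None
```

Returning `None` signals that no measured configuration meets the target on this hardware, which is the point at which the workarounds above (a smaller model, more hardware, or the cloud) come into play.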

Keywords

LLM, Llama3, NVIDIA 4090 24GB x2, Token Generation Speed, Quantization, Q4_K_M, F16, Performance, Hardware, Local Deployment, GPU, Benchmarks, Practical Recommendations, Use Cases, Workarounds